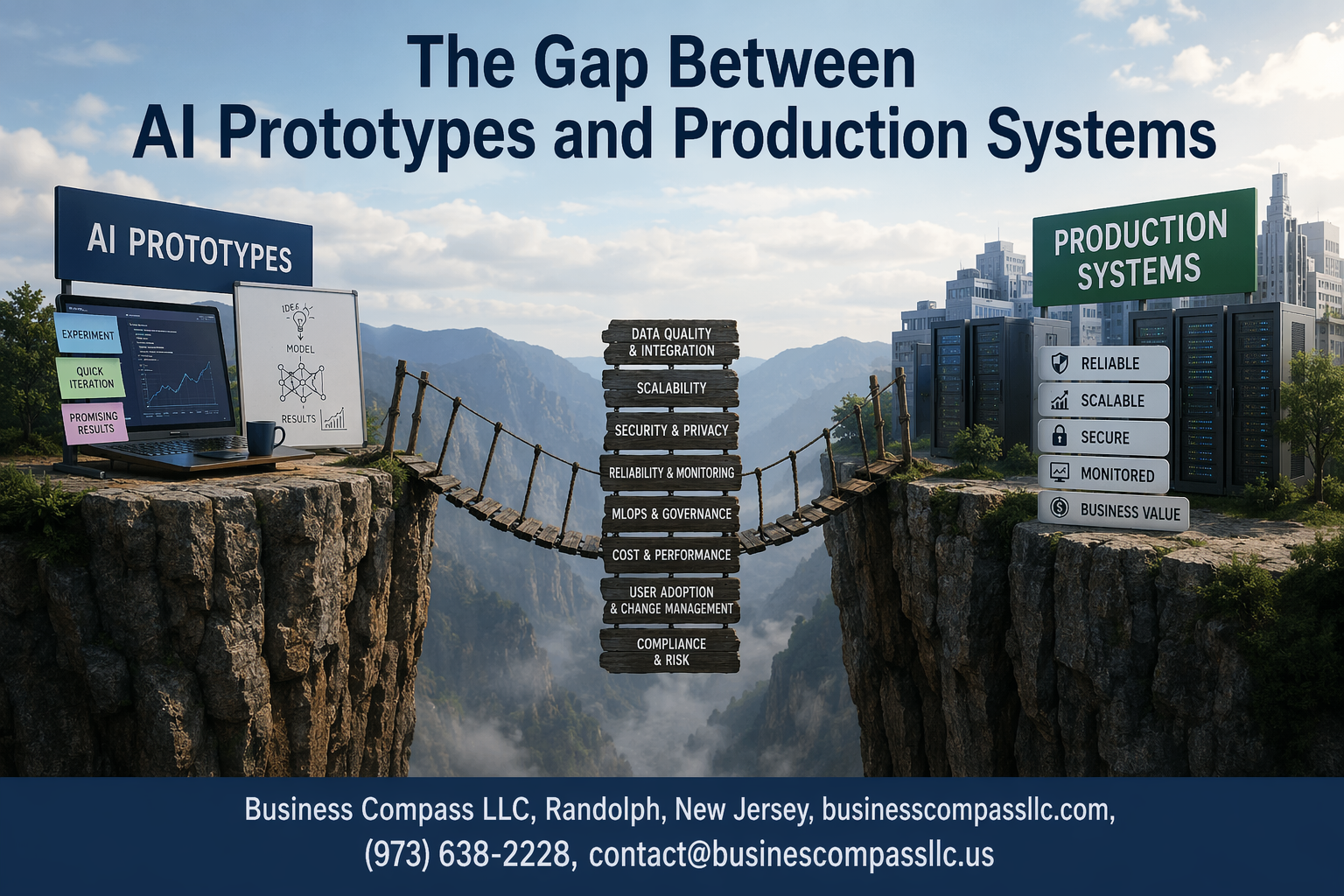

The Gap Between AI Prototypes and Production Systems: Why Most AI Projects Never See the Real World

Getting your AI prototype to production is like the difference between cooking a perfect meal for two people versus running a restaurant that serves thousands daily. This guide breaks down the AI prototype to production challenges that trip up data scientists, ML engineers, and tech leaders who’ve built brilliant models that somehow fall apart when real users get their hands on them.

Who This Guide Is For:

Data scientists struggling with machine learning deployment challenges, ML engineers facing production AI challenges, and tech managers trying to bridge the AI implementation gap in their organizations.

What You’ll Learn:

We’ll cover the fundamental differences between prototype vs production AI systems that catch teams off guard, dive into the technical and data-related obstacles that create bottlenecks in your machine learning production pipeline, and share strategic approaches for building scalable AI production systems. You’ll also get practical insights into machine learning operations MLOps practices that actually work when your AI model deployment needs to handle real-world complexity and AI system scalability demands.

Understanding the Fundamental Differences Between Prototypes and Production Systems

Defining AI prototypes and their limited scope

AI prototypes serve as proof-of-concept demonstrations, built quickly with limited datasets and simplified assumptions. These experimental models focus on validating core algorithms rather than handling real-world complexities. Prototypes typically operate in controlled environments with clean data, basic error handling, and minimal security considerations.

Exploring production system requirements and constraints

Production AI systems must meet stringent reliability standards, processing millions of requests with sub-second response times while maintaining 99.9% uptime. Unlike prototypes, these systems require robust data pipelines, comprehensive monitoring, security protocols, and seamless integration with existing infrastructure. MLOps practices become essential for managing the complete machine learning production pipeline.

Identifying key performance and reliability gaps

The AI prototype to production journey reveals significant performance disparities. Prototypes achieving 95% accuracy often drop to 70-80% when exposed to real-world data variations and edge cases. Production systems demand consistent performance across diverse user scenarios, automatic failover mechanisms, and the ability to handle unexpected inputs gracefully while maintaining operational stability.

Technical Challenges That Create the Implementation Gap

Scaling model performance across diverse data environments

Moving an AI prototype from controlled lab conditions to real-world environments reveals performance inconsistencies that rarely surface during initial testing. Production systems encounter data variations, edge cases, and distribution shifts that prototype environments simply can’t replicate. Models trained on curated datasets often struggle when faced with incomplete records, varying data quality, and unexpected input formats that production environments deliver daily.

Managing computational resources and infrastructure demands

Resource allocation challenges become apparent when AI models transition from development to production scale. Prototype systems typically run on limited datasets with generous computational budgets, while production deployments must handle thousands of concurrent users with strict latency requirements. The infrastructure gap widens as organizations discover their existing hardware can’t support real-time inference demands, forcing expensive upgrades or cloud migration strategies.

Ensuring consistent accuracy under real-world conditions

Performance degradation hits production AI systems harder than anticipated, as real-world data contains noise, bias, and anomalies absent from training datasets. Models that achieved 95% accuracy in testing might drop to 70% when processing actual user inputs. Seasonal variations, user behavior changes, and data drift compound these accuracy challenges, requiring continuous monitoring and model retraining that wasn’t part of the original prototype timeline.

Integrating with existing enterprise systems and workflows

Legacy system integration creates technical debt that prototype developers rarely consider during initial AI development phases. Production AI must communicate with databases, APIs, and workflows built on different architectures and technologies. Authentication protocols, data format conversions, and security compliance requirements add layers of complexity that can triple deployment timelines and require specialized expertise beyond the core AI development team.

Data-Related Obstacles in Production Deployment

Addressing data quality and consistency issues at scale

Production AI systems face massive data quality challenges that rarely surface during prototype development. While prototypes typically work with clean, curated datasets, production environments deal with messy, incomplete, and inconsistent data streams from multiple sources. Real-world data contains missing values, formatting inconsistencies, and unexpected edge cases that can break AI model deployment pipelines.

Scaling data validation becomes exponentially complex when transitioning from prototype to production AI challenges. Organizations must implement robust data monitoring systems that automatically detect anomalies, validate incoming data against established schemas, and maintain data lineage tracking across distributed systems.

Handling data drift and model degradation over time

Data drift represents one of the most critical machine learning deployment challenges in production systems. Unlike static prototype environments, production data continuously evolves as user behaviors change, market conditions shift, and business requirements adapt. This evolution gradually degrades model performance, creating the need for sophisticated MLOps monitoring frameworks.

Successful machine learning operations require automated drift detection mechanisms that compare current data distributions against baseline training sets. Teams must establish clear thresholds for acceptable performance degradation and implement automated retraining workflows to maintain model accuracy over time.

Managing privacy, security, and compliance requirements

Production AI systems must navigate complex regulatory landscapes that prototypes typically ignore. Data privacy regulations like GDPR and CCPA impose strict requirements on data processing, storage, and model explainability that significantly impact AI production systems architecture. Security vulnerabilities become critical concerns when models process sensitive customer data at scale.

Compliance requirements often necessitate complete redesigns of AI system scalability approaches, forcing teams to implement data anonymization, audit trails, and model governance frameworks. These operational constraints can fundamentally alter the machine learning production pipeline, creating substantial gaps between prototype functionality and production capabilities.

Operational and Maintenance Complexities

Building robust monitoring and alerting systems

Production AI systems need comprehensive monitoring that tracks model performance, data quality, and system health in real-time. Smart alerting mechanisms detect anomalies before they impact users, while dashboard visualizations help teams understand system behavior patterns and identify potential issues early.

Implementing automated model retraining and updates

Automated retraining pipelines keep AI models fresh by detecting performance degradation and triggering updates when needed. These systems handle version control, testing, and gradual rollouts while maintaining backward compatibility. MLOps frameworks streamline the entire process from data preparation to deployment validation.

Establishing governance frameworks for AI systems

AI governance requires clear policies for model approval, risk assessment, and compliance monitoring. Documentation standards ensure reproducibility while access controls protect sensitive models and data. Regular audits verify that production AI systems meet regulatory requirements and ethical guidelines.

Creating effective human oversight and intervention protocols

Human-in-the-loop systems enable domain experts to review critical decisions and intervene when models behave unexpectedly. Escalation procedures define when human judgment overrides AI recommendations. Training programs ensure operators understand model limitations and know how to handle edge cases effectively.

Developing disaster recovery and fallback mechanisms

Robust fallback strategies include graceful degradation modes when AI systems fail and backup models for critical functions. Recovery procedures minimize downtime through automated failover systems and manual override capabilities. Testing these mechanisms regularly prevents surprises during actual incidents.

Strategic Approaches to Bridge the Production Gap

Adopting MLOps practices for streamlined deployment

MLOps transforms chaotic AI prototype to production workflows into systematic, repeatable processes. Organizations implementing MLOps see 60% faster deployment times through automated testing, version control, and continuous integration pipelines. Key practices include automated model validation, infrastructure as code, and monitoring frameworks that catch performance degradation before users notice.

Implementing gradual rollout and A/B testing strategies

Smart companies never deploy AI models to 100% of users immediately. Canary releases start with 1-5% traffic, gradually expanding as confidence grows. A/B testing strategies compare new models against existing baselines, measuring business metrics alongside technical performance. This approach minimizes risk while gathering real-world validation data that prototype environments can’t provide.

Building cross-functional teams with production expertise

Production AI success requires more than data scientists working in isolation. Cross-functional teams blend machine learning expertise with DevOps, security, and domain knowledge. Site reliability engineers ensure system uptime, while product managers translate technical capabilities into business value. This collaborative approach prevents the “throw it over the wall” mentality that kills most AI initiatives.

Leveraging cloud platforms and containerization technologies

Cloud platforms eliminate infrastructure headaches that derail AI production systems. Docker containers ensure models run consistently across development and production environments, while Kubernetes orchestrates scaling based on demand. Major cloud providers offer managed ML services that handle deployment complexity, letting teams focus on model quality rather than infrastructure management. Container registries maintain version control for both code and runtime environments.

Building AI prototypes is like creating a beautiful concept car – it looks amazing and shows what’s possible, but there’s a huge difference between that shiny demo and something that can handle real-world roads every single day. The jump from prototype to production involves solving complex technical puzzles, managing messy real-world data, and creating systems that won’t break when thousands of people start using them at once. These aren’t just minor tweaks – they’re fundamental challenges that require different skills, tools, and mindsets.

The good news is that this gap isn’t impossible to cross. Companies that succeed focus on planning for production from day one, investing in robust data pipelines, and building teams that understand both the AI magic and the boring-but-critical infrastructure work. If you’re working on AI projects, start thinking about scalability, monitoring, and maintenance early in the process. The prototype might get you excited, but the production system is what will actually change your business and deliver real value to your users.