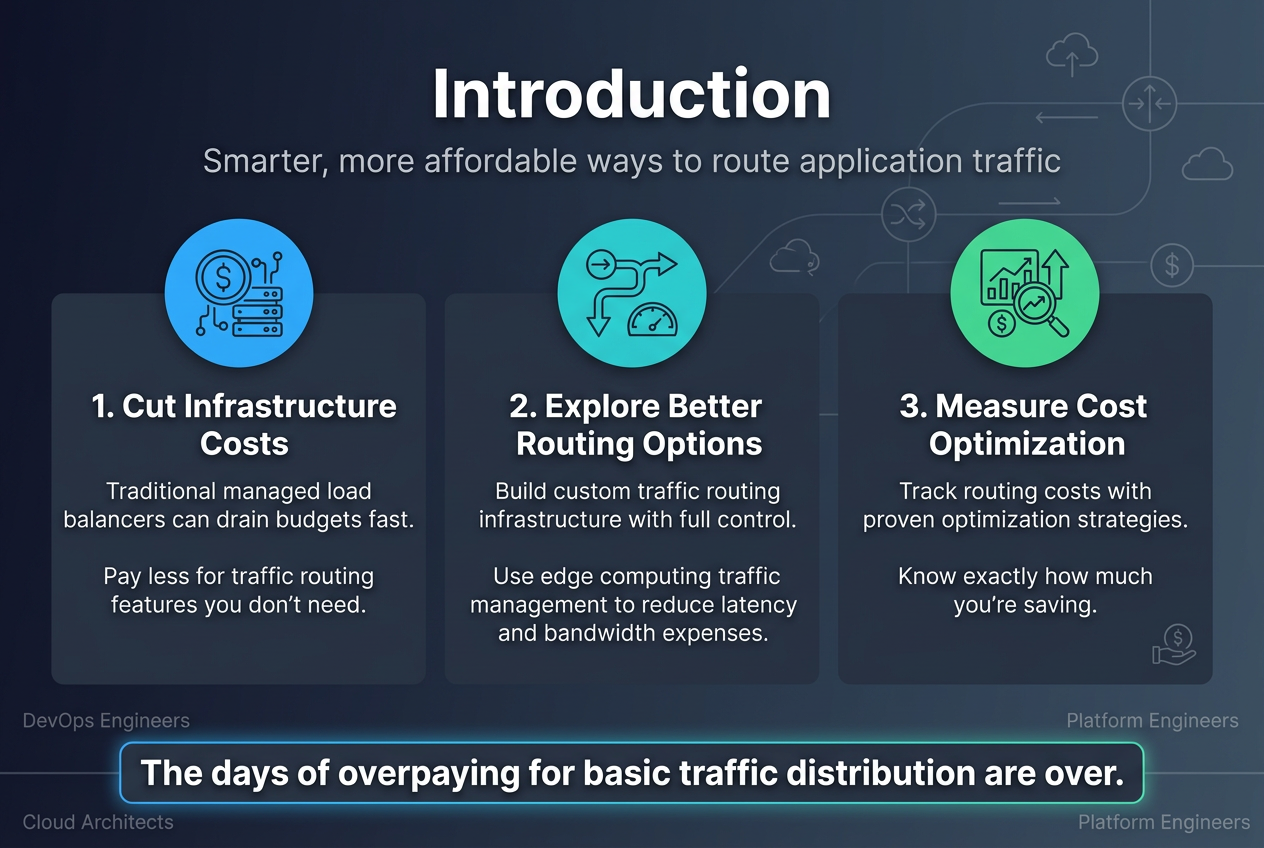

Traditional managed load balancers can drain your infrastructure budget fast. Many DevOps teams and cloud architects pay premium prices for features they don’t need while missing opportunities to optimize traffic routing costs.

This guide is for DevOps engineers, cloud architects, and platform engineers ready to explore cost-efficient traffic routing beyond expensive managed solutions. You’ll discover practical load balancer alternatives that cut costs without sacrificing performance.

We’ll walk through building custom traffic routing infrastructure that gives you full control over your routing decisions and costs. You’ll also learn how moving traffic management to the edge can reduce latency while cutting bandwidth expenses. Finally, we’ll cover practical strategies for measuring your routing cost savings so you know exactly how much you’re saving.

The days of overpaying for basic traffic distribution are over. Let’s dive into smarter, more affordable ways to route your application traffic.

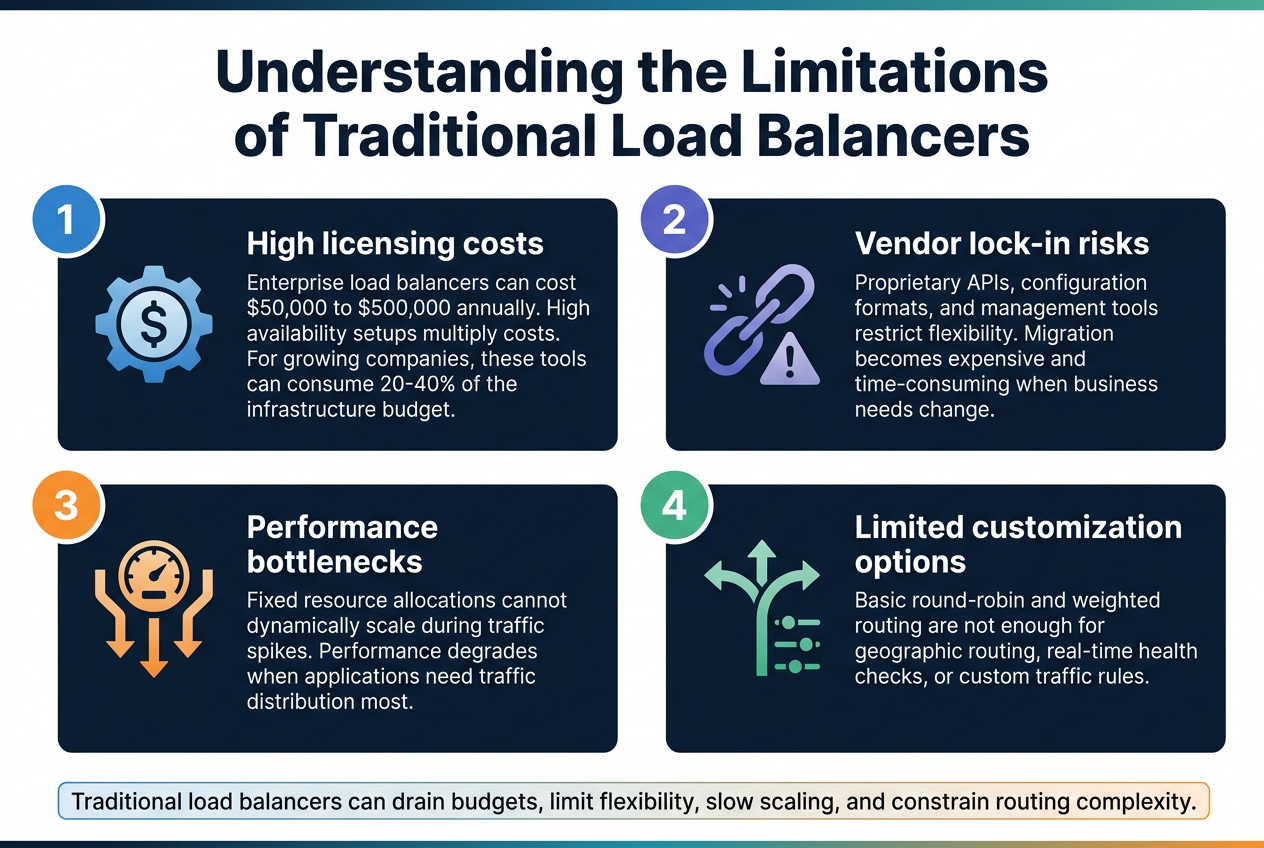

Understanding the Limitations of Traditional Load Balancers

High licensing costs that drain infrastructure budgets

Enterprise load balancers can cost organizations $50,000 to $500,000 annually in licensing fees alone, depending on throughput tier and feature set. These expenses multiply when high-availability setups require multiple instances. For growing companies handling moderate traffic volumes, load balancer costs can consume a surprisingly large share of the infrastructure budget, in some cases 20-40%.

Vendor lock-in risks that restrict architectural flexibility

Proprietary load balancer solutions create dependencies that limit future architectural decisions. Teams end up tied to specific APIs, configuration formats, and management tools, so when business needs change or cheaper routing alternatives emerge, migrating away from these vendor-specific implementations is expensive and time-consuming.

Performance bottlenecks during traffic spikes

Fixed-capacity load balancers, especially hardware or licensed virtual appliances deployed without a redundant peer, can become bottlenecks or single points of failure during unexpected traffic surges. Their resources can’t scale dynamically the way cloud-native routing layers can, so performance degrades exactly when applications need traffic distribution most. Many organizations discover that an expensive load balancer ends up limiting growth rather than enabling it.

Limited customization options for complex routing needs

Most managed load balancers offer basic round-robin or weighted routing algorithms with little room for customization. Complex applications that need geographic routing, custom health-check logic, or bespoke routing rules find themselves constrained by vendor-imposed limits. These restrictions push engineering teams toward workarounds that add complexity and reduce system reliability.

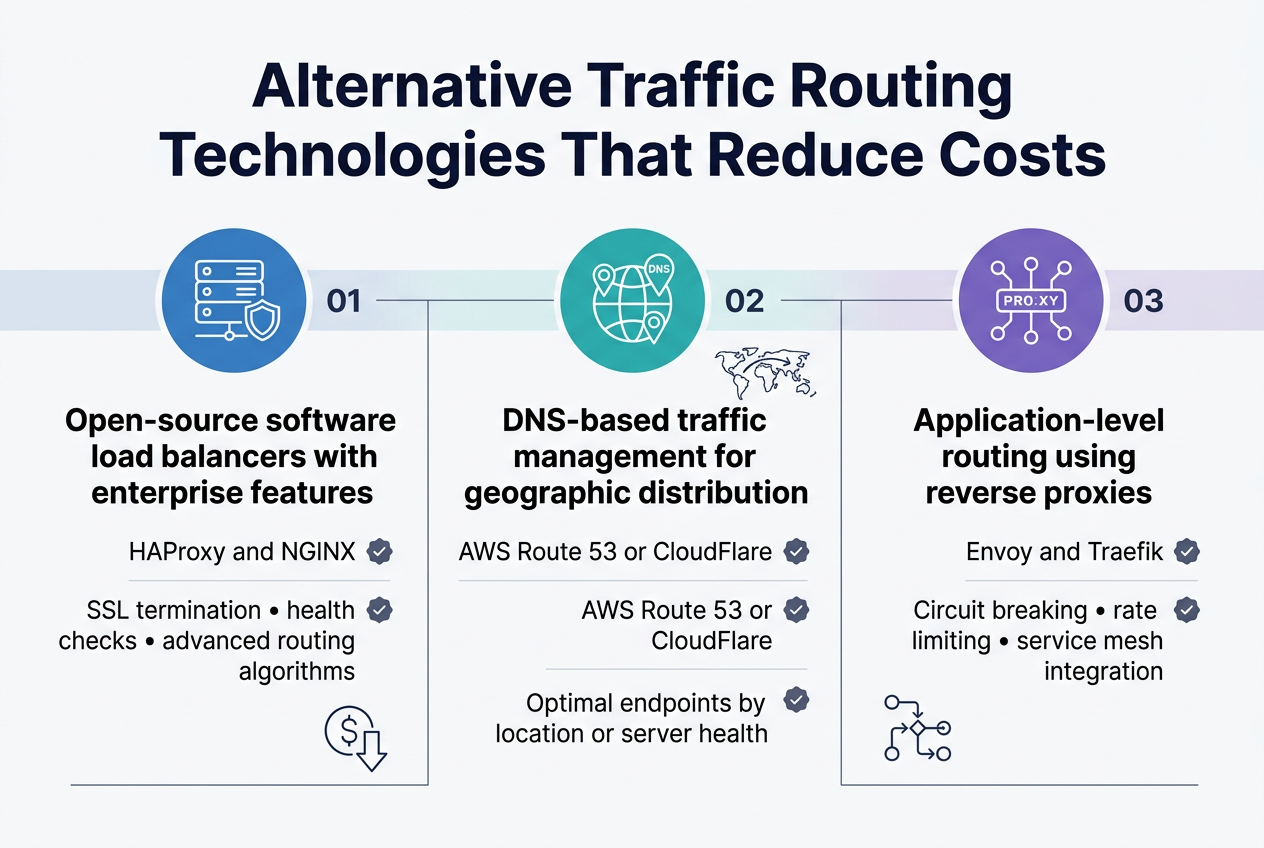

Alternative Traffic Routing Technologies That Reduce Costs

Open-source software load balancers with enterprise features

HAProxy and NGINX stand out as powerful, cost-efficient traffic routing solutions that rival expensive managed alternatives. Their open-source editions deliver enterprise-grade features like SSL termination, health checking, and advanced routing algorithms without licensing fees (open-source NGINX performs only passive health checks; active checks are a feature of the commercial NGINX Plus). Organizations can achieve significant cost savings while keeping full control over their traffic management infrastructure.
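To make “advanced routing algorithms” concrete, here is a minimal Python sketch of smooth weighted round-robin, the scheme open-source NGINX uses to spread requests across weighted upstream servers. The `Peer` class and `smooth_wrr_pick` function are hypothetical names for illustration; NGINX’s C implementation also lowers a server’s effective weight after failures, which this sketch omits.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Peer:
    name: str
    weight: int       # configured share of traffic
    current: int = 0  # running score, adjusted on every pick


def smooth_wrr_pick(peers: List[Peer]) -> Peer:
    """Pick one peer using smooth weighted round-robin."""
    total = 0
    best = None
    for peer in peers:
        peer.current += peer.weight  # every peer gains its weight...
        total += peer.weight
        if best is None or peer.current > best.current:
            best = peer
    best.current -= total            # ...and the winner pays back the total
    return best


peers = [Peer("a", 5), Peer("b", 1), Peer("c", 1)]
print([smooth_wrr_pick(peers).name for _ in range(7)])
# → ['a', 'a', 'b', 'a', 'c', 'a', 'a']
```

Compared with naive weighted round-robin (five requests to `a`, then `b`, then `c`), the low-weight servers are interleaved instead of receiving their requests in a clump.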

DNS-based traffic management for geographic distribution

DNS-based routing offers a lightweight approach to distributed traffic management by directing users to optimal endpoints based on geographic location or server health. Services like AWS Route 53 or Cloudflare provide intelligent DNS routing at a fraction of traditional load balancer costs. This method works particularly well for global applications that need geographic distribution without complex infrastructure overhead. Keep in mind that resolvers cache answers for the record’s TTL, so failover is only as fast as your TTLs (and clients that honor them) allow.
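The decision a geolocation DNS service makes on each query can be sketched in a few lines of Python: look up the client’s country, fall back to a default record set when there is no specific match, and skip endpoints whose health checks are failing. The routing table and `example.com` hostnames are hypothetical; Route 53 and Cloudflare make this decision server-side from records you configure, so this only illustrates the logic.

```python
from typing import Dict, List, Optional, Set

# Hypothetical routing table: client country -> endpoints in preference order
GEO_RECORDS: Dict[str, List[str]] = {
    "DE": ["eu-central.example.com", "us-east.example.com"],
    "FR": ["eu-central.example.com", "us-east.example.com"],
    "JP": ["ap-northeast.example.com", "us-west.example.com"],
}
# Used for any country without its own entry (like a "default" geolocation record)
DEFAULT_RECORDS: List[str] = ["us-east.example.com", "eu-central.example.com"]


def resolve(country: str, healthy: Set[str]) -> Optional[str]:
    """Return the first healthy endpoint for this country, or None if all are down."""
    for endpoint in GEO_RECORDS.get(country, DEFAULT_RECORDS):
        if endpoint in healthy:
            return endpoint
    return None


up = {"eu-central.example.com", "us-east.example.com", "ap-northeast.example.com"}
print(resolve("DE", up))  # → eu-central.example.com
print(resolve("BR", up))  # → us-east.example.com (default records)
```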

Application-level routing using reverse proxies

Reverse proxies like Envoy and Traefik enable sophisticated application-level routing directly within your infrastructure stack. These solutions support advanced features including circuit breaking, rate limiting, and service mesh integration while eliminating expensive managed load balancer dependencies. Teams gain granular control over traffic patterns and can implement custom routing logic tailored to specific application requirements.
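Circuit breaking is easiest to understand through the classic failure-counting pattern below: after a run of consecutive failures the proxy stops sending requests to a backend, then lets trial requests through once a cooldown has passed. This is a generic Python sketch, not Envoy’s or Traefik’s code; Envoy’s circuit breakers, for example, are configured as limits on connections and pending requests, with consecutive-failure ejection handled by its outlier detection. The class name and thresholds are hypothetical.

```python
import time
from typing import Callable, Optional


class CircuitBreaker:
    """Closed -> open after N consecutive failures; half-open after a cooldown."""

    def __init__(self, max_failures: int = 5, reset_after: float = 30.0,
                 clock: Callable[[], float] = time.monotonic):
        self.max_failures = max_failures
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at: Optional[float] = None  # None means the circuit is closed

    def allow_request(self) -> bool:
        if self.opened_at is None:
            return True  # closed: traffic flows normally
        # open: reject until the cooldown elapses, then allow trial requests (half-open)
        return self.clock() - self.opened_at >= self.reset_after

    def record_success(self) -> None:
        self.failures = 0
        self.opened_at = None  # a successful call closes the circuit again

    def record_failure(self) -> None:
        self.failures += 1
        if self.failures >= self.max_failures:
            self.opened_at = self.clock()  # open (or re-open after a failed trial)
```

A proxy would call `allow_request()` before forwarding and report the outcome with `record_success()` or `record_failure()`, returning an error or trying another backend while the circuit is open.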

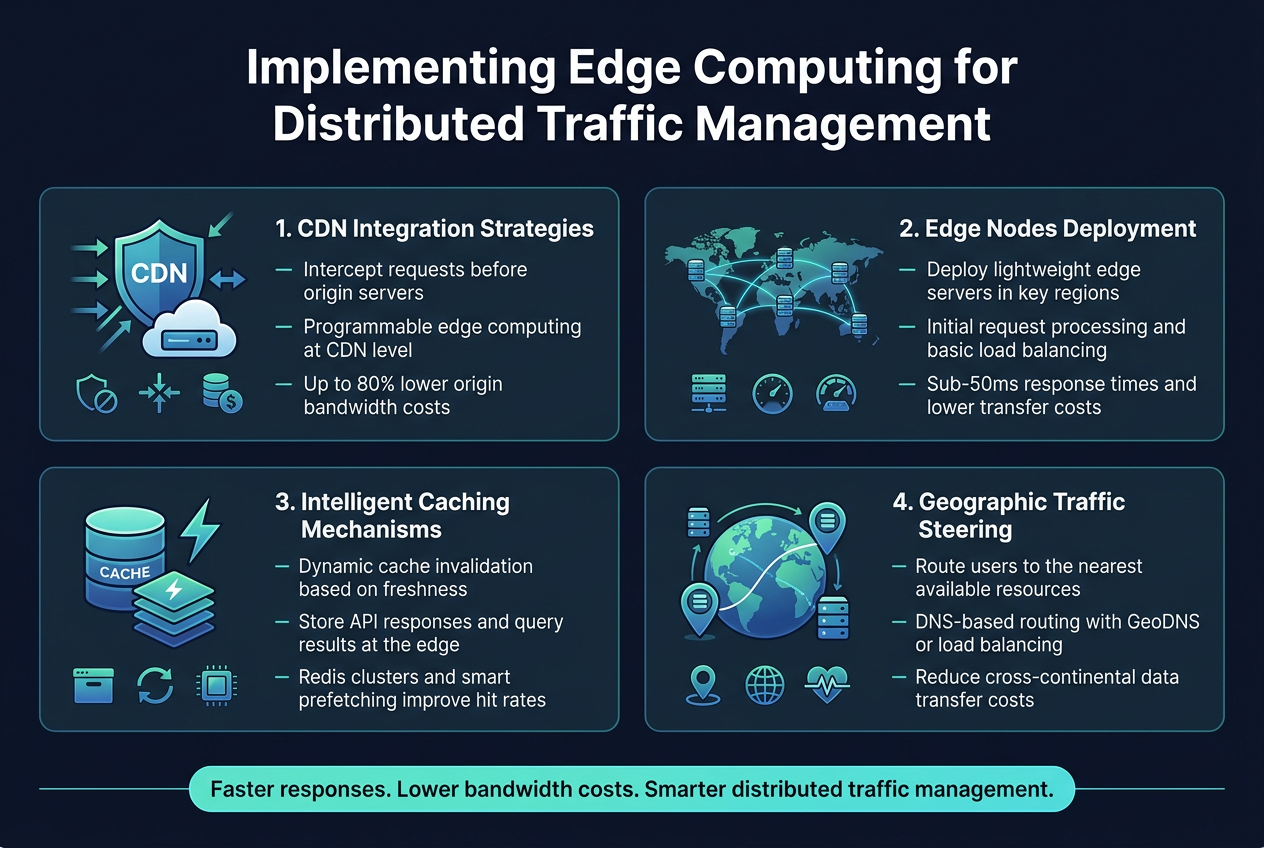

Implementing Edge Computing for Distributed Traffic Management

CDN Integration Strategies That Minimize Origin Server Load

Smart CDN integration changes your traffic routing economics by intercepting requests before they reach your origin servers. Modern CDNs like Cloudflare, AWS CloudFront, and Fastly offer programmable edge computing capabilities that run traffic distribution logic directly at the edge. With routing and caching rules applied at the CDN level, origin bandwidth costs fall roughly in proportion to your cache hit ratio, so highly cacheable workloads can see reductions of up to 80% while keeping response times fast.
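Because a CDN only forwards cache misses to the origin, origin egress scales with one minus the byte hit ratio (the share of delivered bytes served from cache). The Python sketch below does that arithmetic with hypothetical traffic figures. The CDN still bills for the bytes it delivers, so the saving shows up in origin egress and origin load rather than in total bandwidth.

```python
def origin_egress_gb(delivered_gb: float, byte_hit_ratio: float) -> float:
    """GB the origin still serves once the CDN answers its cache hits."""
    if not 0.0 <= byte_hit_ratio <= 1.0:
        raise ValueError("byte_hit_ratio must be between 0 and 1")
    return delivered_gb * (1.0 - byte_hit_ratio)


# Hypothetical month: 10 TB delivered to users at three different hit ratios
for ratio in (0.50, 0.80, 0.95):
    print(f"hit ratio {ratio:.0%}: about {origin_egress_gb(10_000, ratio):,.0f} GB from origin")
```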

Edge Nodes Deployment for Reduced Latency and Bandwidth Costs

Strategic edge node placement creates a distributed traffic management system that cuts both latency and bandwidth expenses. Deploy lightweight edge servers in key geographic regions using providers like DigitalOcean, Vultr, or bare-metal hosts. These nodes handle initial request processing, apply basic load balancing logic, and route traffic based on real-time server health metrics. For users close to an edge node, this approach can deliver sub-50ms response times while significantly reducing cross-region data transfer costs.
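One simple way for an edge node to act on real-time health metrics is to keep an exponentially weighted moving average (EWMA) of each backend’s observed latency and send traffic to the fastest healthy one. The `Backend` class, the 0.3 smoothing factor, and the backend names in this Python sketch are hypothetical choices.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Backend:
    name: str
    healthy: bool = True
    ewma_ms: Optional[float] = None  # smoothed latency; None until the first sample

    def observe(self, latency_ms: float, alpha: float = 0.3) -> None:
        # EWMA: each new sample moves the average by a fraction alpha of the gap
        if self.ewma_ms is None:
            self.ewma_ms = latency_ms
        else:
            self.ewma_ms = alpha * latency_ms + (1 - alpha) * self.ewma_ms


def pick_backend(backends: List[Backend]) -> Optional[Backend]:
    """Lowest smoothed latency among healthy backends that have been measured."""
    candidates = [b for b in backends if b.healthy and b.ewma_ms is not None]
    return min(candidates, key=lambda b: b.ewma_ms, default=None)


fra, ams = Backend("fra"), Backend("ams")
fra.observe(40)
ams.observe(25)
print(pick_backend([fra, ams]).name)  # → ams
```

Backends without a latency sample are skipped, so a real deployment would seed them with active probes. Always picking the single fastest backend can also herd traffic onto it; comparing two randomly chosen backends instead is a common refinement.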

Intelligent Caching Mechanisms That Optimize Resource Utilization

Advanced caching strategies at the edge layer improve resource utilization and reduce infrastructure costs. Implement dynamic cache invalidation based on content freshness, user behavior patterns, and geographic demand. Edge caching systems can store frequently accessed data, API responses, and even database query results closer to users. Redis clusters at edge locations, combined with smart prefetching, help keep cache hit rates high while minimizing storage costs.
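Redis provides both building blocks used here: per-key expiry and LRU-style eviction through its `maxmemory-policy` setting. To show how freshness limits and capacity limits interact, this Python sketch implements both in process. The class name, sizes, and TTLs are hypothetical, and in this simplified version a stored `None` is indistinguishable from a miss.

```python
import time
from collections import OrderedDict
from typing import Any, Callable, Hashable, Optional


class TTLLRUCache:
    """In-process cache with per-entry expiry (TTL) and least-recently-used eviction."""

    def __init__(self, max_items: int, ttl_s: float,
                 clock: Callable[[], float] = time.monotonic):
        self.max_items = max_items
        self.ttl_s = ttl_s
        self.clock = clock
        self._data: "OrderedDict[Hashable, tuple]" = OrderedDict()  # key -> (expires_at, value)

    def get(self, key: Hashable) -> Optional[Any]:
        entry = self._data.get(key)
        if entry is None:
            return None                     # miss
        expires_at, value = entry
        if self.clock() >= expires_at:
            del self._data[key]             # stale: drop it and report a miss
            return None
        self._data.move_to_end(key)         # mark as most recently used
        return value

    def set(self, key: Hashable, value: Any) -> None:
        self._data[key] = (self.clock() + self.ttl_s, value)
        self._data.move_to_end(key)
        while len(self._data) > self.max_items:
            self._data.popitem(last=False)  # evict the least recently used entry
```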

Geographic Traffic Steering Based on User Proximity

Geographic traffic steering routes users to the nearest available resources, reducing latency while optimizing bandwidth costs. DNS-based routing solutions like Route 53, Cloudflare’s Load Balancing, or custom GeoDNS implementations direct traffic based on user location and server availability. This distributed traffic management approach minimizes expensive cross-continental data transfers and steers users toward the closest healthy server instance for better performance and cost efficiency.
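A custom GeoDNS or edge router needs some notion of “nearest.” This Python sketch uses great-circle (haversine) distance between the user’s approximate location and each region, restricted to healthy regions. The region names and coordinates are hypothetical, and distance is only a proxy: latency-based routing that uses measured round-trip times often tracks user experience more closely.

```python
import math
from typing import Dict, Optional, Set, Tuple

LatLon = Tuple[float, float]

# Hypothetical regions: name -> (latitude, longitude) in degrees
REGIONS: Dict[str, LatLon] = {
    "us-east": (39.0, -77.5),
    "eu-central": (50.1, 8.7),
    "ap-southeast": (1.35, 103.8),
}


def haversine_km(a: LatLon, b: LatLon) -> float:
    """Great-circle distance between two points on a spherical Earth."""
    lat1, lon1 = map(math.radians, a)
    lat2, lon2 = map(math.radians, b)
    dlat, dlon = lat2 - lat1, lon2 - lon1
    h = math.sin(dlat / 2) ** 2 + math.cos(lat1) * math.cos(lat2) * math.sin(dlon / 2) ** 2
    return 2 * 6371.0 * math.asin(math.sqrt(h))  # 6371 km = mean Earth radius


def nearest_healthy(user: LatLon, healthy: Set[str]) -> Optional[str]:
    candidates = [r for r in REGIONS if r in healthy]
    return min(candidates, key=lambda r: haversine_km(user, REGIONS[r]), default=None)


paris = (48.86, 2.35)
print(nearest_healthy(paris, set(REGIONS)))             # → eu-central
print(nearest_healthy(paris, {"us-east", "ap-southeast"}))  # → us-east
```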

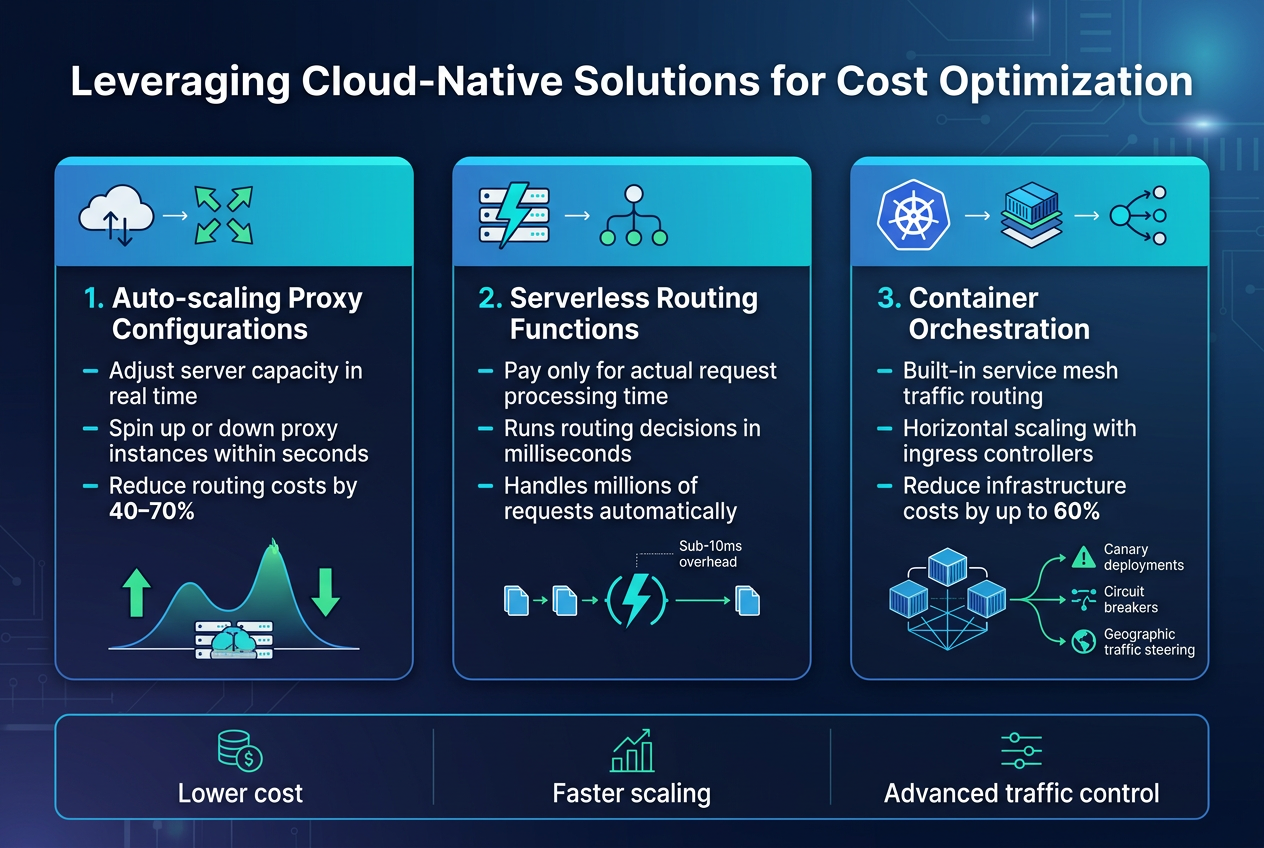

Leveraging Cloud-Native Solutions for Cost Optimization

Auto-scaling proxy configurations that match demand patterns

Cloud-native auto-scaling proxy setups adjust capacity based on real-time traffic patterns, removing the need to over-provision load balancers for peak load. They monitor incoming requests and add or remove proxy instances as demand changes, so you pay for extra capacity mainly during peak periods rather than around the clock. Scaling isn’t instantaneous: the Kubernetes Horizontal Pod Autoscaler (HPA) re-evaluates metrics every 15 seconds by default, and new pods still need time to start. Popular setups such as NGINX (or the commercial NGINX Plus) with Kubernetes HPA, or Envoy scaled on custom metrics, can reduce routing costs by 40-70% compared with traditional managed load balancer services, depending on how spiky your traffic is.
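The Kubernetes documentation gives the HPA’s core rule as desiredReplicas = ceil(currentReplicas × currentMetricValue / desiredMetricValue), with no change when the ratio is within a tolerance (0.1 by default). This Python sketch reproduces that arithmetic for a proxy Deployment scaled on requests per second per pod. The metric, the replica bounds, and the traffic numbers are hypothetical, and the real controller also applies stabilization windows and handles missing metrics.

```python
import math


def desired_replicas(current: int, current_metric: float, target_metric: float,
                     min_replicas: int = 2, max_replicas: int = 20,
                     tolerance: float = 0.1) -> int:
    """HPA-style replica count for one metric, clamped to [min_replicas, max_replicas]."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current  # close enough to target: leave the replica count alone
    desired = math.ceil(current * ratio)
    return max(min_replicas, min(max_replicas, desired))


# 4 proxy pods each handling 180 req/s against a target of 100 req/s per pod
print(desired_replicas(4, 180, 100))  # → 8
```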

Serverless routing functions with pay-per-use pricing

Serverless routing functions are a highly cost-efficient option for bursty traffic because you pay per request and per unit of execution time rather than for idle capacity. AWS Lambda@Edge (which runs at CloudFront edge locations), Azure Functions, or Google Cloud Functions can handle traffic distribution logic without dedicated infrastructure. These functions make routing decisions in milliseconds and scale automatically to millions of requests, which suits applications with unpredictable traffic spikes. Warm invocations typically add little latency, but cold starts can add noticeably more, so measure the overhead for your own workload rather than assuming a fixed figure such as sub-10ms.
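As an illustration, here is a minimal Python sketch of a Lambda@Edge origin-request handler. CloudFront passes the request under `event["Records"][0]["cf"]["request"]`, with lowercase header names mapping to lists of `{"key", "value"}` objects, and the function returns the (possibly modified) request. The routing rule is hypothetical: it assumes the `CloudFront-Viewer-Country` header has been configured to reach the origin request (for example through an origin request policy) and that EU content lives under an `/eu` path prefix.

```python
EU_COUNTRIES = {"DE", "FR", "NL", "IE", "ES", "IT"}  # hypothetical subset


def lambda_handler(event, context):
    request = event["Records"][0]["cf"]["request"]
    headers = request["headers"]
    # Header is only present if CloudFront is configured to include it
    country = headers.get("cloudfront-viewer-country", [{}])[0].get("value", "")
    if country in EU_COUNTRIES and not request["uri"].startswith("/eu/"):
        request["uri"] = "/eu" + request["uri"]  # route EU viewers to EU content
    return request  # returning the request forwards it to the origin


event = {"Records": [{"cf": {"request": {
    "uri": "/index.html",
    "headers": {"cloudfront-viewer-country": [{"key": "CloudFront-Viewer-Country", "value": "DE"}]},
}}}]}
print(lambda_handler(event, None)["uri"])  # → /eu/index.html
```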

Container orchestration for dynamic traffic distribution

Container orchestration platforms like Kubernetes provide built-in service discovery and basic load balancing through Services, and service meshes such as Istio or Linkerd can be added on top for more sophisticated traffic routing. Ingress controllers such as Traefik or Kong, combined with that service discovery, create a distributed traffic management layer that scales horizontally, and a single ingress can front many services that would otherwise each need their own managed load balancer. This approach can reduce infrastructure costs by up to 60% while providing advanced features like canary deployments, circuit breakers, and geographic traffic steering through lightweight container-based proxies.
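Ingress controllers such as Traefik can split traffic between a stable and a canary service by weight. When each user should stay on the same version across requests, a common approach is to hash a stable identifier into 100 buckets, as in this Python sketch. The function name and user IDs are hypothetical, and SHA-256 is used only for even distribution, not for security.

```python
import hashlib


def assign_version(user_id: str, canary_percent: int) -> str:
    """Deterministically send roughly canary_percent% of users to the canary."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:4], "big") % 100  # stable bucket in 0..99
    return "canary" if bucket < canary_percent else "stable"


users = [f"user-{i}" for i in range(10_000)]
share = sum(assign_version(u, 10) == "canary" for u in users) / len(users)
print(f"canary share: {share:.1%}")  # close to 10%
```

Raising `canary_percent` only moves users from stable to canary, never the reverse, because a user’s bucket never changes.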

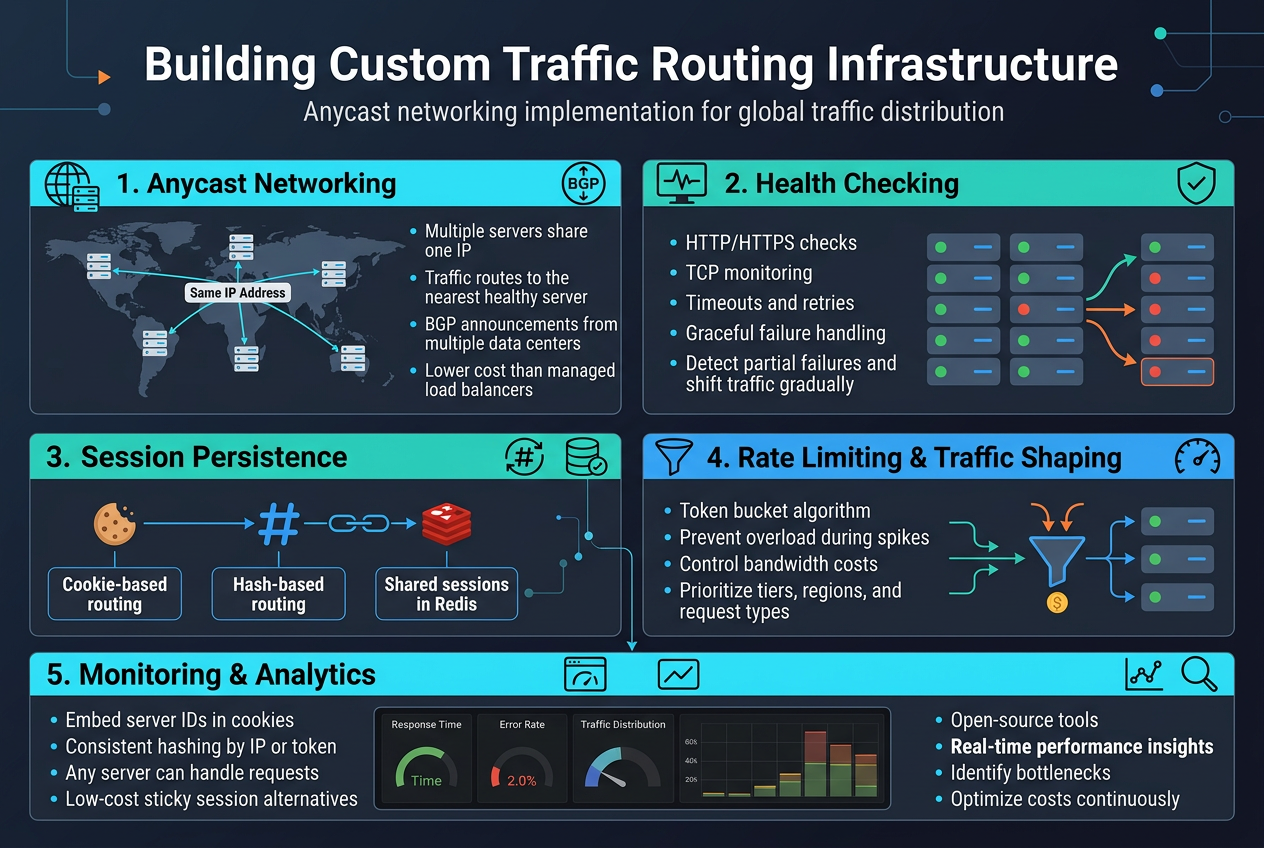

Building Custom Traffic Routing Infrastructure

Anycast networking implementation for global traffic distribution

Anycast networking is one of the most powerful cost-efficient traffic routing options for global applications. It lets multiple servers in different geographic locations share the same IP address, and internet routing delivers each user to the nearest site in BGP terms, which usually, though not always, is the geographically closest one. Unlike expensive managed load balancers, anycast leverages existing internet routing protocols to distribute traffic naturally.

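The implementation requires announcing the same IP prefix via BGP (Border Gateway Protocol) from multiple data centers, so traffic flows to the closest announcing site, and withdrawing the announcement from any site that becomes unhealthy. It avoids per-request load balancer fees, but it isn’t free: you need your own ASN (or a provider that supports bring-your-own-IP anycast), a provider-independent prefix (typically at least a /24 for IPv4 to be accepted across the internet), and upstream providers willing to accept your announcements.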
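Health-gated announcements are what make anycast fail over: each site announces the prefix only while its local service is healthy. This Python sketch assumes ExaBGP, whose API lets a helper process announce or withdraw routes by writing text commands such as `announce route ... next-hop self` to standard output. The prefix is the RFC 5737 documentation range and the health samples are hypothetical. The function only generates the commands so the logic is easy to test; a real helper would loop on a local health check and print and flush each command as it is produced.

```python
from typing import Iterable, List

PREFIX = "192.0.2.0/24"  # RFC 5737 documentation prefix; substitute your own allocation


def announcement_commands(health_samples: Iterable[bool], prefix: str = PREFIX) -> List[str]:
    """Emit an announce/withdraw command only when this site's health changes."""
    commands: List[str] = []
    announced = False
    for healthy in health_samples:
        if healthy and not announced:
            commands.append(f"announce route {prefix} next-hop self")
            announced = True
        elif not healthy and announced:
            commands.append(f"withdraw route {prefix} next-hop self")
            announced = False
    return commands


for line in announcement_commands([True, True, False, False, True]):
    print(line)
```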

Health checking mechanisms that ensure high availability

Custom traffic routing infrastructure demands robust health checking systems that monitor server availability without relying on expensive hardware solutions. Simple HTTP/HTTPS health checks combined with TCP connection monitoring provide reliable service detection at minimal cost. These mechanisms should include configurable timeout values, retry logic, and graceful failure handling to maintain service continuity.

Smart health checking algorithms can detect partial failures and gradually shift traffic away from degraded servers, preventing complete service outages while optimizing resource usage across your infrastructure.
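The rise/fall thresholds below follow a common pattern (HAProxy’s `rise` and `fall` server options work this way): a server is marked down only after several consecutive failed checks and back up only after several consecutive successes, which avoids flapping. The sketch also derives a traffic weight from the recent success rate, one simple way to shift traffic gradually away from a partially failing server. The class name, window size, and weighting rule are hypothetical.

```python
from collections import deque


class HealthTracker:
    """Rise/fall up-down state plus a traffic weight from the recent success rate."""

    def __init__(self, rise: int = 2, fall: int = 3, window: int = 20):
        self.rise, self.fall = rise, fall
        self.up = True
        self._streak_ok = 0
        self._streak_fail = 0
        self._recent = deque(maxlen=window)  # last `window` check results

    def record(self, ok: bool) -> None:
        self._recent.append(ok)
        if ok:
            self._streak_ok += 1
            self._streak_fail = 0
            if not self.up and self._streak_ok >= self.rise:
                self.up = True   # enough consecutive successes: back in rotation
        else:
            self._streak_fail += 1
            self._streak_ok = 0
            if self.up and self._streak_fail >= self.fall:
                self.up = False  # enough consecutive failures: take it out

    def weight(self, base: int = 100) -> int:
        if not self.up:
            return 0             # down servers receive no traffic
        if not self._recent:
            return base          # no data yet: assume full health
        # Degraded servers get a share proportional to their recent success rate
        return round(base * sum(self._recent) / len(self._recent))
```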

Session persistence strategies without expensive hardware

Session persistence in custom traffic routing infrastructure can be achieved through several cost-effective methods. Cookie-based routing maintains user sessions by embedding server identifiers in browser cookies, eliminating the need for expensive sticky-session hardware. Hash-based routing uses consistent hashing to map user requests to specific servers based on IP addresses or session tokens; because only the keys owned by a server that is added or removed move elsewhere, most users keep their server when the pool changes.

Shared session storage lets any server handle any request by keeping session data in a central store such as Redis or a database, which removes the need for stickiness altogether while keeping infrastructure costs low.
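Consistent hashing with virtual nodes is what keeps hash-based persistence stable when the server pool changes (NGINX’s `hash ... consistent` and HAProxy’s `hash-type consistent` build on the same idea). In this Python sketch each server is placed on a ring 100 times; a key goes to the first position clockwise from its hash, so removing a server only moves the keys it owned. MD5 is used for spreading keys, not for security, and the server and session names are hypothetical.

```python
import bisect
import hashlib
from typing import Dict, List


def _hash(key: str) -> int:
    # 64-bit position on the ring derived from MD5 (distribution only, not security)
    return int.from_bytes(hashlib.md5(key.encode("utf-8")).digest()[:8], "big")


class HashRing:
    def __init__(self, servers: List[str], vnodes: int = 100):
        self.vnodes = vnodes
        self._ring: List[int] = []        # sorted virtual-node positions
        self._owner: Dict[int, str] = {}  # position -> server name
        for server in servers:
            self.add(server)

    def add(self, server: str) -> None:
        for i in range(self.vnodes):
            pos = _hash(f"{server}#{i}")
            self._owner[pos] = server
            bisect.insort(self._ring, pos)

    def remove(self, server: str) -> None:
        for i in range(self.vnodes):
            pos = _hash(f"{server}#{i}")
            del self._owner[pos]
            self._ring.remove(pos)

    def lookup(self, key: str) -> str:
        # First virtual node clockwise from the key's hash, wrapping past the end
        idx = bisect.bisect(self._ring, _hash(key)) % len(self._ring)
        return self._owner[self._ring[idx]]


ring = HashRing(["app-1", "app-2", "app-3"])
print(ring.lookup("session-42"))
```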

Rate limiting and traffic shaping for cost control

Traffic shaping and rate limiting are essential components of cost-controlled routing infrastructure. Token bucket algorithms allow short bursts while capping the sustained request rate, which prevents server overload and keeps the user experience smooth during traffic spikes. These controls help manage bandwidth costs and prevent resource exhaustion from abusive clients, reducing your reliance on paid DDoS protection, although they cannot stop volumetric attacks that saturate your network links before requests ever reach your proxies.
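A token bucket is small enough to show in full. This Python sketch refills at `rate` tokens per second up to `capacity`, so a client can burst briefly but cannot exceed the sustained rate. The injectable clock exists only to make the class easy to test, and the parameter values are hypothetical.

```python
import time
from typing import Callable


class TokenBucket:
    """Allows bursts up to `capacity`, refilling at `rate` tokens per second."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.tokens = capacity  # start full so an initial burst is allowed
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Refill for the time elapsed since the last call, never beyond capacity
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


bucket = TokenBucket(rate=2, capacity=3)  # 2 req/s sustained, bursts of 3
print([bucket.allow() for _ in range(4)])  # the 4th back-to-back request is rejected
```

A router would typically keep one bucket per client key (API key, IP address, or tenant) and return HTTP 429 when `allow()` is false.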

Implementing intelligent traffic shaping based on user tiers, geographic regions, or request types allows fine-grained cost control while ensuring critical traffic receives priority during peak usage periods.
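Tier-based shaping can start as a simple admission rule: as utilization rises, shed the lowest-priority traffic first so critical requests keep getting through. The tier names and thresholds in this Python sketch are hypothetical, and unknown tiers are treated as the lowest priority.

```python
from typing import Dict

# Hypothetical tiers: utilization (0.0-1.0) at which each tier starts being rejected
SHED_THRESHOLDS: Dict[str, float] = {
    "critical": 1.00,  # only rejected when the system is fully saturated
    "paid": 0.90,
    "free": 0.75,
}


def admit(tier: str, utilization: float) -> bool:
    """Admit a request while current utilization is below its tier's threshold."""
    # Unknown tiers get the strictest (lowest) threshold
    threshold = SHED_THRESHOLDS.get(tier, min(SHED_THRESHOLDS.values()))
    return utilization < threshold


print(admit("free", 0.80), admit("paid", 0.80))  # free traffic is shed first
```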

Monitoring and analytics tools for performance optimization

Performance monitoring in custom traffic routing requires lightweight, cost-effective solutions that provide actionable insights. Open-source tools like Prometheus and Grafana offer comprehensive metrics collection and visualization without licensing fees. These platforms can track response times, error rates, and traffic distribution patterns across your routing infrastructure.

Real-time analytics support proactive optimization by surfacing bottlenecks and traffic patterns that affect both performance and cost, so you can keep refining your custom routing strategies.
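Prometheus scrapes metrics over HTTP in a plain-text exposition format, so even a home-grown router can expose per-backend request counts for Grafana dashboards. In production you would normally use the official `prometheus_client` library; this dependency-free Python sketch just shows what a scraped counter looks like. The metric and label names are hypothetical and follow the `_total` suffix convention for counters.

```python
from typing import Dict, Tuple

Labels = Tuple[Tuple[str, str], ...]


def _escape(value: str) -> str:
    # Label values must escape backslash, double quote, and newline
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def render_counter(name: str, help_text: str, samples: Dict[Labels, int]) -> str:
    """Render one counter family in Prometheus' text exposition format."""
    lines = [f"# HELP {name} {help_text}", f"# TYPE {name} counter"]
    for labels, value in sorted(samples.items()):
        label_str = ",".join(f'{key}="{_escape(val)}"' for key, val in labels)
        lines.append(f"{name}{{{label_str}}} {value}")
    return "\n".join(lines) + "\n"


print(render_counter(
    "router_requests_total",
    "Requests routed, by backend and status code.",
    {(("backend", "app-1"), ("code", "200")): 1027,
     (("backend", "app-2"), ("code", "503")): 4},
), end="")
```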

Measuring and Optimizing Your Routing Cost Savings

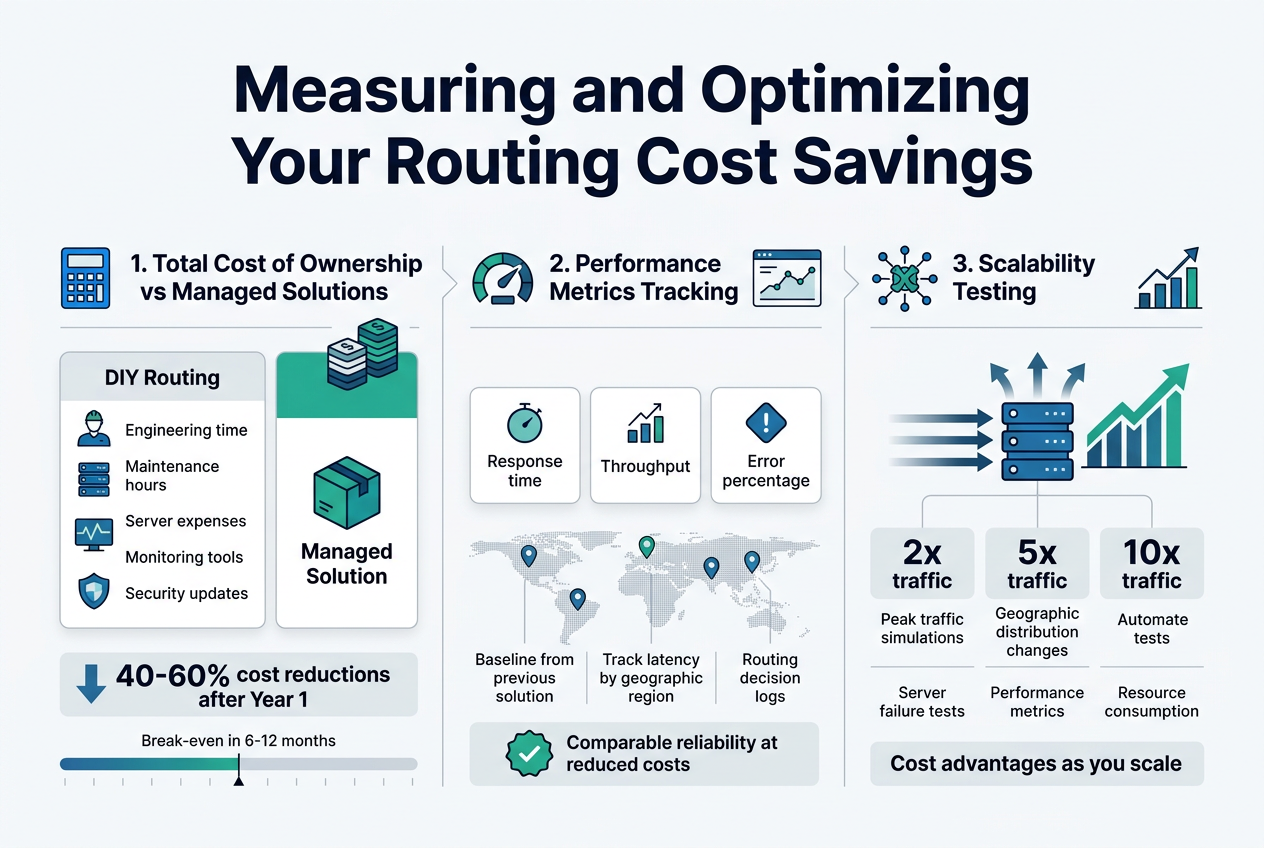

Total cost of ownership calculations versus managed solutions

Calculating your true routing cost savings requires comparing all expenses, not just monthly service fees. Custom traffic routing infrastructure demands upfront development time, ongoing maintenance hours, and infrastructure costs that managed solutions bundle into their pricing. Track engineer salaries, server expenses, monitoring tools, and security updates against your previous load balancer bills. Reductions of 40-60% after the first year are possible, but the initial setup investment can take 6-12 months to break even, so compare options over a multi-year horizon.
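The break-even point falls out of simple arithmetic once the custom setup’s monthly cost honestly includes engineering time, servers, monitoring, and security maintenance. The figures in this Python sketch are hypothetical.

```python
import math


def months_to_break_even(upfront_cost: float, managed_monthly: float,
                         custom_monthly: float) -> float:
    """Whole months until the custom setup's savings cover its upfront cost."""
    monthly_saving = managed_monthly - custom_monthly
    if monthly_saving <= 0:
        return math.inf  # the custom setup never pays for itself
    return math.ceil(upfront_cost / monthly_saving)


# Hypothetical: $30k of engineering to build it; $8k/month managed bill versus
# $3k/month for servers, monitoring, and ongoing maintenance time
print(months_to_break_even(30_000, 8_000, 3_000))  # → 6
```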

Performance metrics tracking for quality assurance

Monitor response times, throughput, and error rates to ensure your cost-efficient traffic routing maintains service quality. Establish baseline measurements from your previous managed solution, then track latency across different geographic regions and traffic patterns. Set up automated alerts for performance degradation and keep detailed logs of routing decisions. Regular performance audits help identify optimization opportunities and show whether your DIY load balancing really delivers comparable reliability at lower cost.
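A baseline comparison can be as simple as computing the same latency percentiles before and after the migration and flagging any that regress beyond a tolerance. This Python sketch uses the nearest-rank percentile definition and a hypothetical 10% tolerance; the baseline values stand in for numbers you would export from your previous managed solution.

```python
import math
from typing import Dict, List


def percentile(samples: List[float], p: float) -> float:
    """Nearest-rank percentile, p in (0, 100]."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p * len(ordered) / 100))
    return ordered[rank - 1]


def regressions(baseline: Dict[str, float], samples: List[float],
                tolerance: float = 0.10) -> Dict[str, float]:
    """Percentiles that exceed their baseline by more than `tolerance`."""
    current = {f"p{p}": percentile(samples, p) for p in (50, 95, 99)}
    return {k: v for k, v in current.items()
            if k in baseline and v > baseline[k] * (1 + tolerance)}


# Hypothetical latencies (ms) after migration versus the old baseline
latencies = [float(ms) for ms in range(1, 101)]
print(regressions({"p50": 50, "p95": 80, "p99": 99}, latencies))  # → {'p95': 95.0}
```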

Scalability testing to validate long-term cost benefits

Stress-test your routing infrastructure under peak traffic conditions to confirm it scales cost-effectively compared to managed alternatives. Simulate traffic spikes, geographic distribution changes, and server failures to validate your system handles growth without proportional cost increases. Document how your solution performs at 2x, 5x, and 10x current traffic levels, measuring both performance metrics and resource consumption. This testing reveals whether your custom routing maintains its cost advantages as your application scales.
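One way to check that costs don’t grow in proportion to traffic is to turn each load-test run into a cost per million requests and confirm that number does not climb as load increases. The measurements in this Python sketch are hypothetical, and a month is approximated as 30 days.

```python
from typing import List, Tuple

Run = Tuple[float, float, float]  # (traffic multiplier, sustained req/s, monthly infra cost)


def cost_per_million(results: List[Run]) -> List[Tuple[float, float]]:
    """(multiplier, cost per million requests) for each load-test run."""
    seconds_per_month = 30 * 24 * 3600
    out = []
    for multiplier, rps, monthly_cost in results:
        millions = rps * seconds_per_month / 1_000_000
        out.append((multiplier, monthly_cost / millions))
    return out


def unit_cost_never_rises(results: List[Run]) -> bool:
    """True if cost per million requests does not increase as load grows."""
    unit_costs = [c for _, c in sorted(cost_per_million(results))]
    return all(b <= a for a, b in zip(unit_costs, unit_costs[1:]))


runs = [(1, 1_000, 2_000), (2, 2_000, 3_500), (5, 5_000, 8_000), (10, 10_000, 15_000)]
for multiplier, unit_cost in cost_per_million(runs):
    print(f"{multiplier:>3}x: ${unit_cost:.2f} per million requests")
print(unit_cost_never_rises(runs))
```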

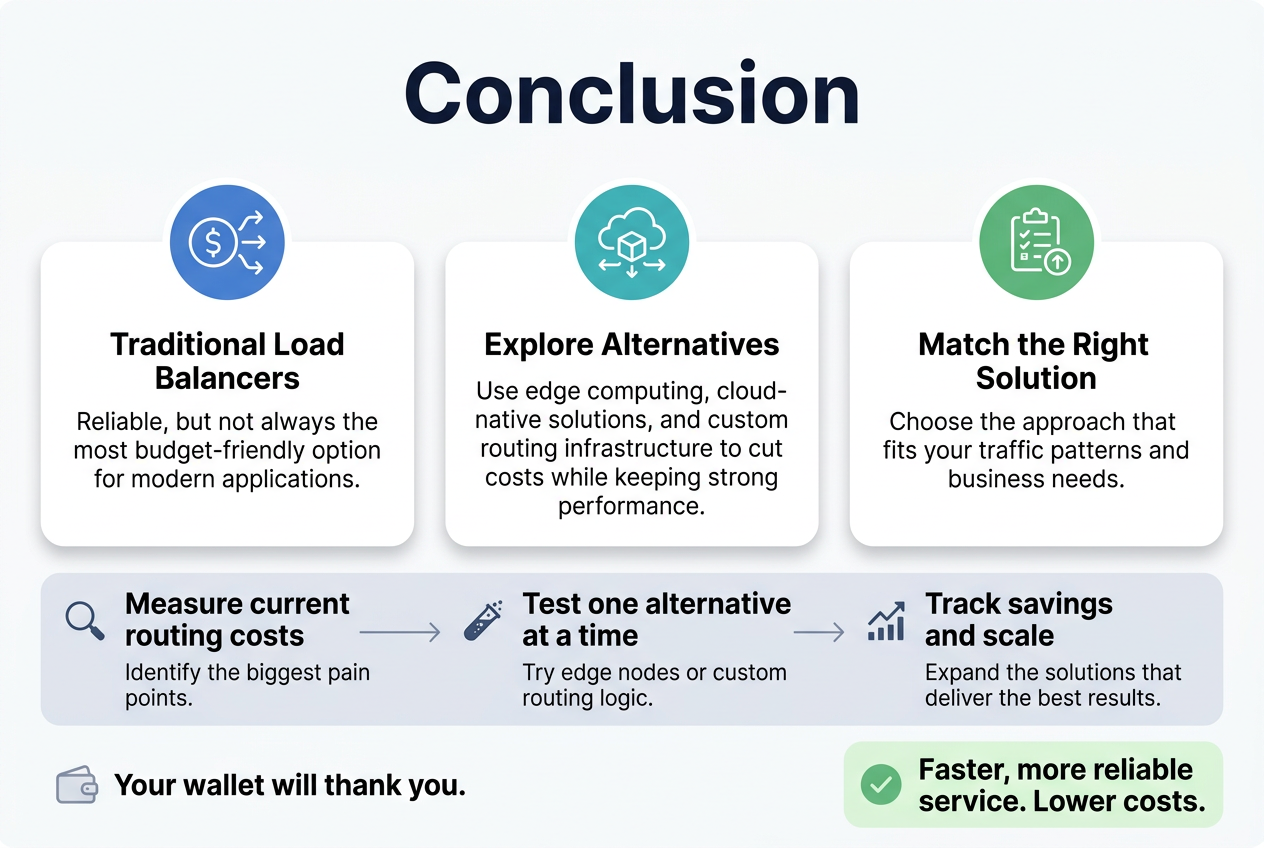

Traditional load balancers have served us well, but they’re not always the most budget-friendly option for modern applications. By exploring alternatives like edge computing, cloud-native solutions, and custom routing infrastructure, you can dramatically cut costs while maintaining excellent performance. The key is understanding which approach works best for your specific traffic patterns and business needs.

Start small by measuring your current routing costs and identifying the biggest pain points. Test one alternative solution at a time, whether that’s implementing edge computing nodes or building custom routing logic. Track your savings carefully and scale the solutions that deliver the best results. Your wallet will thank you, and your users will enjoy faster, more reliable service.