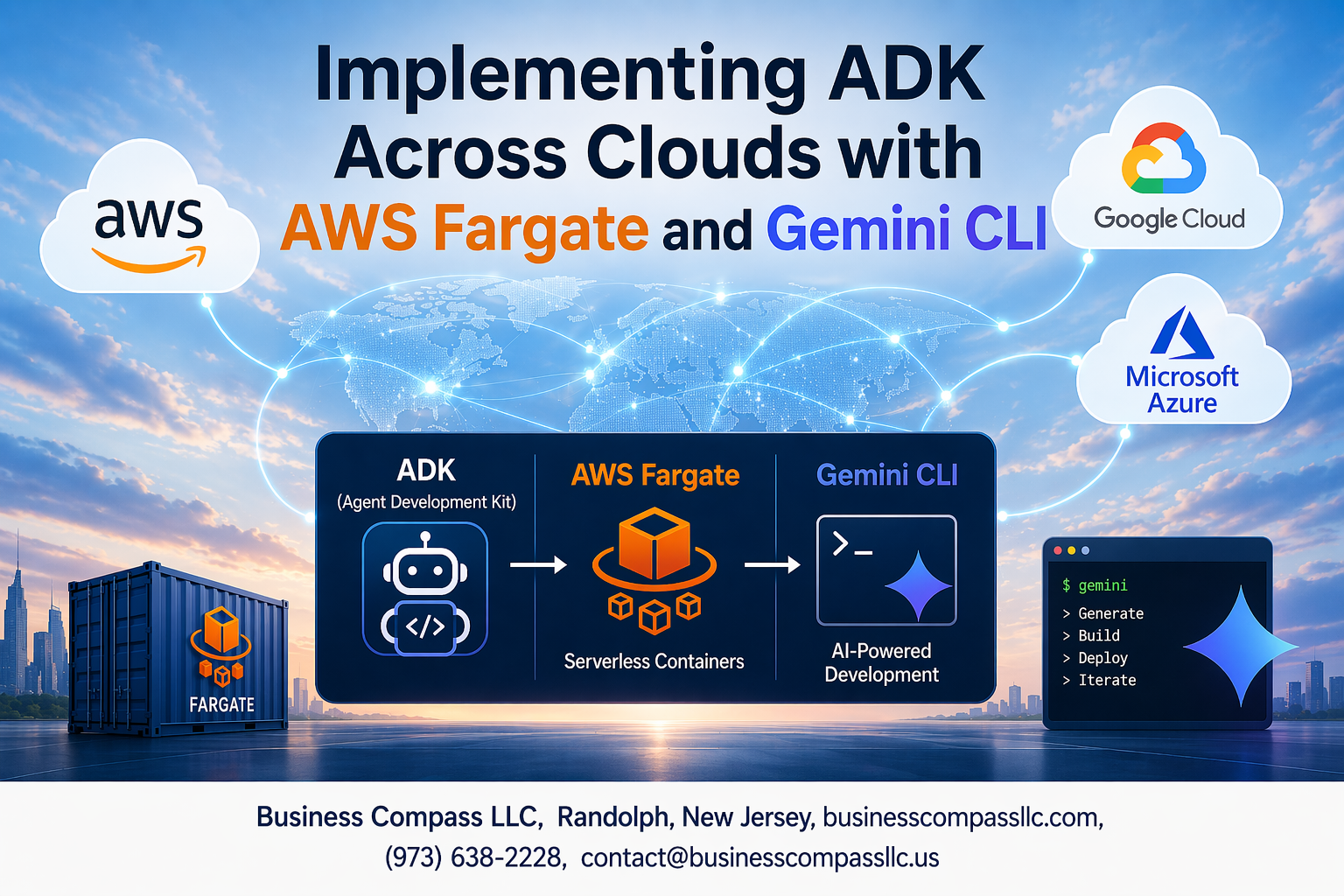

Running containerized applications across multiple clouds gets tricky fast, especially when you need seamless integration between different platforms. This guide walks you through implementing an Application Development Kit (ADK) on AWS Fargate and shows how a Gemini CLI development workflow can streamline your multi-cloud container orchestration setup.

Who this is for: DevOps engineers, cloud architects, and development teams managing containerized applications across AWS and other cloud platforms who want to build robust cross-cloud integration patterns.

We’ll cover the core ADK fundamentals and multi-cloud architecture concepts you need to know, then dive into practical AWS Fargate container deployment strategies that hold up in production. You’ll also learn how to set up multi-cloud monitoring and troubleshooting processes that catch issues before they impact your users.

By the end, you’ll have a clear roadmap for implementing cloud-native development tools that scale across different cloud environments without the usual headaches.

Understanding ADK Fundamentals and Multi-Cloud Architecture

Define Application Development Kit (ADK) core components and benefits

Application Development Kits (ADKs) serve as comprehensive toolsets that streamline software development across different platforms and environments. Core ADK components include libraries, APIs, documentation, code samples, and debugging tools that enable developers to build applications efficiently. Modern ADKs provide standardized interfaces for accessing cloud services, database connections, and third-party integrations while maintaining consistency across development teams.

The primary benefits of using an ADK in a multi-cloud architecture include accelerated development cycles, reduced code duplication, and simplified maintenance workflows. ADKs abstract complex infrastructure details, allowing developers to focus on business logic rather than platform-specific configurations. This abstraction layer becomes particularly valuable when an ADK-based application runs on AWS Fargate alongside workloads hosted by other cloud providers.

Explore multi-cloud deployment strategies and challenges

Multi-cloud container orchestration presents both opportunities and complexities for modern organizations. Companies adopt multi-cloud strategies to avoid vendor lock-in, improve disaster recovery capabilities, and leverage best-of-breed services from different providers. Popular deployment patterns include active-active configurations for high availability, geographic distribution for performance optimization, and hybrid approaches that balance cost with functionality requirements.

Significant challenges arise from managing different APIs, security models, and networking configurations across cloud platforms. Data synchronization, compliance requirements, and cost optimization become increasingly complex as applications span multiple environments. Cross-cloud integration patterns must address these challenges while maintaining operational efficiency and security standards.

Identify key requirements for cross-cloud application portability

Successful cross-cloud portability demands containerized applications that operate consistently on AWS and in every other target environment. Key requirements include standardized container images, environment-agnostic configuration management, and portable storage solutions. Applications must abstract cloud-specific services through common interfaces, enabling migration between platforms without significant code modifications.
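As a rough illustration of abstracting a cloud-specific service behind a common interface, the sketch below defines a provider-neutral storage interface. The names `BlobStore`, `InMemoryBlobStore`, and `archive_report` are hypothetical and exist only for this example; a real backend would wrap S3, Google Cloud Storage, or Azure Blob Storage behind the same two methods.

```python
from typing import Protocol


class BlobStore(Protocol):
    """Hypothetical provider-neutral interface for object storage."""

    def put(self, key: str, data: bytes) -> None: ...

    def get(self, key: str) -> bytes: ...


class InMemoryBlobStore:
    """Stand-in backend for local runs; not tied to any cloud SDK."""

    def __init__(self) -> None:
        self._objects: dict[str, bytes] = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = data

    def get(self, key: str) -> bytes:
        return self._objects[key]


def archive_report(store: BlobStore, report_id: str, body: str) -> str:
    """Business logic depends only on the interface, never on a provider."""
    key = f"reports/{report_id}.txt"
    store.put(key, body.encode("utf-8"))
    return key
```

Because `archive_report` only sees the interface, moving between clouds in this sketch means swapping the object passed in as `store`, not editing the function.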

Infrastructure as Code (IaC) templates, standardized CI/CD pipelines, and comprehensive monitoring strategies form the foundation of portable multi-cloud applications. Cloud-native development tools play a crucial role in maintaining consistency, while automated testing ensures applications perform reliably across different cloud environments. Security policies and compliance frameworks must also translate effectively between platforms to maintain operational integrity.

Setting Up AWS Fargate for Container Orchestration

Configure Fargate clusters for scalable container deployment

An ADK deployment on AWS Fargate starts with creating task definitions that specify the container image and its CPU and memory allocations. Configure your Fargate service within an ECS cluster and enable automatic scaling based on demand metrics. Because Fargate is serverless, there are no EC2 instances to manage, and the same container images can run in your other cloud environments.
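A task definition of this kind might look like the following minimal sketch. It only builds a dictionary shaped like the ECS `RegisterTaskDefinition` request; the helper name `build_task_definition`, the image name, and the role ARN are placeholders assumed for illustration.

```python
import json


def build_task_definition(family: str, image: str, cpu: int, memory_mib: int,
                          execution_role_arn: str, container_port: int) -> dict:
    """Return a dict shaped like an ECS RegisterTaskDefinition request."""
    return {
        "family": family,
        "requiresCompatibilities": ["FARGATE"],
        "networkMode": "awsvpc",        # Fargate tasks use awsvpc networking
        "cpu": str(cpu),                # task-level CPU units, sent as a string
        "memory": str(memory_mib),      # task-level memory in MiB, as a string
        "executionRoleArn": execution_role_arn,
        "containerDefinitions": [
            {
                "name": family,
                "image": image,
                "essential": True,
                "portMappings": [
                    {"containerPort": container_port, "protocol": "tcp"}
                ],
            }
        ],
    }


if __name__ == "__main__":
    task_def = build_task_definition(
        family="web",
        image="example/web:1.0",  # placeholder image
        cpu=256,
        memory_mib=512,
        execution_role_arn="arn:aws:iam::123456789012:role/ecsTaskExecutionRole",
        container_port=8080,
    )
    print(json.dumps(task_def, indent=2))
```

If you use boto3, a dictionary like this could be passed as `boto3.client("ecs").register_task_definition(**task_def)`. Keeping the builder free of SDK calls makes it easy to review and test before anything touches an AWS account.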

Define scaling policies that respond to CPU utilization, memory consumption, or custom CloudWatch metrics. Fargate provisions compute to match the CPU and memory each task requests, making it well suited to variable workloads in containerized AWS deployments.
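One way to express a CPU-based scaling policy is a target tracking configuration for Application Auto Scaling. The sketch below builds the request as a plain dictionary; the helper name, the policy name format, and the cooldown values are assumptions chosen for illustration, not recommendations.

```python
def build_cpu_target_tracking_policy(cluster: str, service: str,
                                     target_cpu_percent: float) -> dict:
    """Return a dict shaped like an Application Auto Scaling PutScalingPolicy request."""
    return {
        "PolicyName": f"{service}-cpu-target-tracking",  # hypothetical naming scheme
        "ServiceNamespace": "ecs",
        "ResourceId": f"service/{cluster}/{service}",
        "ScalableDimension": "ecs:service:DesiredCount",
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            "TargetValue": target_cpu_percent,
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "ECSServiceAverageCPUUtilization"
            },
            "ScaleOutCooldown": 60,   # seconds; assumed value
            "ScaleInCooldown": 300,   # seconds; assumed value
        },
    }
```

With boto3, this could be passed to `boto3.client("application-autoscaling").put_scaling_policy(**policy)` after the service has been registered as a scalable target with `register_scalable_target`.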

Optimize resource allocation and cost management

Right-sizing Fargate tasks requires careful analysis of your application’s resource consumption patterns. Monitor CPU and memory utilization through CloudWatch metrics to identify opportunities for optimization. Use the Fargate Spot capacity provider where appropriate to reduce costs for fault-tolerant workloads that can handle interruption.
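The right-sizing step can be sketched as a small lookup: take the observed peak usage, add headroom, and pick the smallest task size that fits. The size table below reflects commonly documented Fargate CPU and memory combinations up to 4 vCPU, written from memory, so verify it against the current AWS documentation. The function name and the 20% default headroom are assumptions.

```python
import math

# CPU units -> allowed task memory values in MiB (subset, up to 4 vCPU).
# Assumed from AWS documentation; verify before relying on it.
FARGATE_SIZES = {
    256: [512, 1024, 2048],
    512: list(range(1024, 4096 + 1, 1024)),
    1024: list(range(2048, 8192 + 1, 1024)),
    2048: list(range(4096, 16384 + 1, 1024)),
    4096: list(range(8192, 30720 + 1, 1024)),
}


def recommend_task_size(peak_cpu_units: float, peak_memory_mib: float,
                        headroom: float = 1.2) -> tuple[int, int]:
    """Pick the smallest listed (cpu, memory) pair covering peak usage plus headroom."""
    need_cpu = math.ceil(peak_cpu_units * headroom)
    need_mem = math.ceil(peak_memory_mib * headroom)
    for cpu in sorted(FARGATE_SIZES):
        if cpu < need_cpu:
            continue
        for mem in FARGATE_SIZES[cpu]:
            if mem >= need_mem:
                return cpu, mem
    raise ValueError("peak usage exceeds the sizes listed in FARGATE_SIZES")
```

Note that a memory-heavy task can force a larger CPU tier than it needs, because each CPU value only allows a certain memory range. That is often the first thing worth checking when a task looks oversized.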

Schedule non-critical tasks during off-peak hours. ECS task placement strategies apply to the EC2 launch type rather than to Fargate, so resource efficiency on Fargate comes mainly from right-sized task CPU and memory settings. Consider auto-scaling policies that scale down aggressively during low-traffic periods while maintaining performance thresholds.

Implement security best practices for containerized applications

Container security begins with using minimal base images and regularly scanning for vulnerabilities. Configure IAM roles with least-privilege principles, granting only necessary permissions for each Fargate task. Enable VPC Flow Logs and AWS CloudTrail to monitor network traffic and API calls.

Implement secrets management using AWS Secrets Manager or Systems Manager Parameter Store instead of hardcoding sensitive information. Use security groups as virtual firewalls and enable encryption in transit and at rest for all data exchanges.
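To make the difference concrete, the sketch below shows a container definition that references a secret through the ECS `secrets` field instead of a plain `environment` value, plus a small check that flags environment variables whose names look sensitive. The ARN is a placeholder, and the helper name and keyword list are assumptions; a name-based check is only a heuristic, not a substitute for a real secret scanner.

```python
SENSITIVE_HINTS = ("PASSWORD", "SECRET", "TOKEN", "API_KEY")  # assumed keyword list

# Container definition that injects the value from Secrets Manager at start-up.
SAFE_CONTAINER = {
    "name": "api",
    "image": "example/api:1.0",  # placeholder image
    "environment": [{"name": "LOG_LEVEL", "value": "info"}],
    "secrets": [
        {
            "name": "DB_PASSWORD",
            # Placeholder ARN; the task execution role must be allowed to read it.
            "valueFrom": "arn:aws:secretsmanager:us-east-1:123456789012:secret:prod/db-password",
        }
    ],
}


def find_hardcoded_secrets(container_definition: dict) -> list[str]:
    """Return names of plain environment variables that look like secrets."""
    flagged = []
    for entry in container_definition.get("environment", []):
        upper_name = entry["name"].upper()
        if any(hint in upper_name for hint in SENSITIVE_HINTS):
            flagged.append(entry["name"])
    return flagged
```

A check like this could run in CI against rendered task definitions so that a hardcoded credential fails the build before it reaches a cluster.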

Establish networking and load balancing configurations

Configure your Fargate tasks within private subnets across multiple Availability Zones for high availability. Set up Application Load Balancers (ALB) to distribute incoming traffic and perform health checks on your containerized services. Cross-zone load balancing is enabled by default on ALBs, so confirm it has not been turned off at the target group level if you need even traffic distribution.

Implement service discovery using ECS service discovery, which is built on AWS Cloud Map, to enable dynamic service-to-service communication. Configure target groups with appropriate health check parameters and deregistration delays so that rolling deployments drain connections cleanly and updates complete without downtime.

Leveraging Gemini CLI for Enhanced Development Workflow

Install and configure Gemini CLI tools

Getting started with a Gemini CLI development workflow requires installing the tool consistently across your development environment. Download the latest release from the official repository and configure authentication credentials for your target cloud platforms. Set up the environment variables your AWS Fargate deployments need and establish secure connections to your multi-cloud infrastructure components.

Streamline deployment automation and CI/CD pipelines

Gemini CLI can help turn manual deployment steps into automated pipeline configurations. Create deployment manifests that define the AWS specifications for your containerized applications and integrate them with your existing CI/CD tools. The CLI can then push updates to multiple environments while keeping your multi-cloud container orchestration setup consistent.

Monitor application performance across multiple environments

Real-time monitoring becomes straightforward with Gemini CLI’s built-in observability features. Track performance metrics across different cloud providers and receive alerts when thresholds are exceeded. The tool aggregates logs from AWS Fargate and other platforms, providing centralized visibility into your distributed applications. Configure custom dashboards to monitor key performance indicators and troubleshoot issues before they impact end users.

Designing Cross-Cloud Integration Patterns

Implement data synchronization strategies between cloud providers

Building robust data synchronization across multiple cloud environments requires strategic planning around eventual consistency models and conflict resolution protocols. When connecting an ADK deployment on AWS Fargate to services in other clouds, consider using message queues and event-driven architectures to maintain data integrity. Tools like AWS DMS, Azure SQL Data Sync, and Google Cloud Dataflow can establish reliable pipelines that handle schema transformations and data validation.

Real-time synchronization works best with change data capture (CDC) mechanisms that track modifications at the database level. Implement bi-directional sync patterns with timestamp-based conflict resolution so that your containerized AWS deployments handle network partitions gracefully. Always maintain backup synchronization paths through multiple regions to prevent single points of failure.
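Timestamp-based conflict resolution is often implemented as last-write-wins. The sketch below is a minimal version under stated assumptions: the `Record` type and its fields are hypothetical, and the `origin` field is used only as a deterministic tie-breaker when two writes carry the same timestamp.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    key: str
    value: str
    updated_at: float  # Unix timestamp of the last write
    origin: str        # which cloud wrote it; used only to break ties


def merge_last_write_wins(local: dict[str, Record],
                          remote: dict[str, Record]) -> dict[str, Record]:
    """Merge two replicas, keeping the most recently written record per key."""
    merged = dict(local)
    for key, candidate in remote.items():
        current = merged.get(key)
        if current is None or (
            (candidate.updated_at, candidate.origin)
            > (current.updated_at, current.origin)
        ):
            merged[key] = candidate
    return merged
```

Last-write-wins is simple but silently discards the losing write and depends on reasonably synchronized clocks across providers. Where that is not acceptable, version vectors or application-level merge rules are the usual alternatives.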

Configure API gateways for seamless service communication

API gateways serve as the central nervous system for multi-cloud container orchestration, managing authentication, rate limiting, and request routing between different cloud providers. Deploy gateway instances in each cloud region using Amazon API Gateway, Azure API Management, or Google Cloud Endpoints to minimize cross-cloud latency. Configure health checks and automatic failover rules that redirect traffic when services become unavailable.

Security policies should be consistent across all gateway implementations, with shared JWT validation settings and OAuth configurations enabling seamless user authentication. Use service mesh technologies like Istio or Linkerd to provide encrypted communication channels and detailed observability into inter-service dependencies across cloud boundaries.

Establish disaster recovery and failover mechanisms

Disaster recovery planning starts with defining Recovery Time Objectives (RTO) and Recovery Point Objectives (RPO) for each service tier. Create automated backup schedules that replicate critical data and configuration files across multiple cloud providers using tools native to each platform. Your AWS Fargate container deployment should include health monitoring that triggers failover procedures when performance thresholds are exceeded or services become unreachable.

Implement circuit breaker patterns that prevent cascading failures when one cloud provider experiences outages. Test failover procedures regularly using chaos engineering principles, simulating various failure scenarios to validate your recovery processes. Document runbooks with step-by-step instructions for manual intervention when automated systems require human oversight during complex disaster scenarios.
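A circuit breaker can be sketched in a few lines. The version below is a minimal, single-threaded illustration: the class name, thresholds, and the injectable `clock` parameter are assumptions for this example, and a production system would more likely use an established resilience library or service mesh feature.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold: int = 3, reset_timeout: float = 30.0,
                 clock=time.monotonic) -> None:
        self.failure_threshold = failure_threshold
        self.reset_timeout = reset_timeout
        self._clock = clock
        self._failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, func, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_timeout:
                raise CircuitOpenError("circuit open; skipping call")
            # Timeout elapsed: fall through and allow one trial call (half-open).
        try:
            result = func(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self.failure_threshold:
                self._opened_at = self._clock()  # open, or re-open after a failed trial
            raise
        # Success closes the circuit and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

Wrapping each cross-cloud dependency in its own breaker means an outage at one provider fails fast instead of tying up threads and connections while requests time out.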

Optimize latency and performance across geographic regions

Geographic distribution of services requires careful analysis of user traffic patterns and data access frequencies. Deploy compute resources closer to your primary user bases using edge locations and content delivery networks available through each cloud provider. Monitor network latency between regions using tools like Amazon CloudWatch, Azure Monitor, or Google Cloud’s operations suite to identify performance bottlenecks.

Cache frequently accessed data at regional endpoints using Redis or Memcached clusters, reducing the need for cross-cloud data fetching. Implement intelligent request routing that considers both geographic proximity and current system load when directing user requests. Use compression algorithms and optimize payload sizes for API communications that must traverse multiple cloud networks.
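Routing that weighs both proximity and load can be sketched as a simple scoring function. Everything here is illustrative: the endpoint dictionaries, the `load` value between 0 and 1, and the `load_weight_km` penalty are assumptions, and real deployments typically rely on measured latency rather than great-circle distance.

```python
import math

EARTH_RADIUS_KM = 6371.0


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points in kilometres."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlam = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlam / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def pick_endpoint(user_lat: float, user_lon: float, endpoints: list[dict],
                  load_weight_km: float = 2000.0) -> str:
    """Return the name of the endpoint with the lowest distance-plus-load score.

    A fully loaded endpoint (load == 1.0) is treated as if it were
    `load_weight_km` kilometres farther away.
    """
    def score(endpoint: dict) -> float:
        distance = haversine_km(user_lat, user_lon, endpoint["lat"], endpoint["lon"])
        return distance + load_weight_km * endpoint["load"]

    return min(endpoints, key=score)["name"]
```

With this scoring, a nearby but saturated region loses to a slightly more distant region with spare capacity, which is the behaviour the paragraph above describes.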

Monitoring and Troubleshooting Multi-Cloud Deployments

Set up comprehensive logging and observability frameworks

Effective multi-cloud monitoring and troubleshooting starts with centralized logging across your AWS Fargate deployment and your other cloud environments. Deploy tools like Prometheus, Grafana, and the ELK stack to aggregate metrics and logs from your containerized applications. Configure distributed tracing to track requests flowing between cloud services, enabling you to pinpoint bottlenecks in your cross-cloud integration patterns.
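Centralized logging is easier when every service emits the same structured format. The sketch below uses only the standard `logging` module to write JSON lines tagged with a cloud name, service name, and trace ID; the field names and the `build_logger` helper are assumptions, and a real setup would more likely take the trace ID from a tracing library such as OpenTelemetry.

```python
import json
import logging
import sys


class JsonFormatter(logging.Formatter):
    """Format log records as single-line JSON with cloud and trace context."""

    def __init__(self, cloud: str, service: str) -> None:
        super().__init__()
        self.cloud = cloud
        self.service = service

    def format(self, record: logging.LogRecord) -> str:
        payload = {
            "ts": self.formatTime(record),
            "level": record.levelname,
            "cloud": self.cloud,
            "service": self.service,
            # trace_id is supplied per call via `extra`; None when absent.
            "trace_id": getattr(record, "trace_id", None),
            "message": record.getMessage(),
        }
        return json.dumps(payload)


def build_logger(name: str, cloud: str, service: str, stream=sys.stderr) -> logging.Logger:
    """Create a logger that writes JSON lines to the given stream."""
    logger = logging.getLogger(name)
    logger.setLevel(logging.INFO)
    logger.propagate = False
    logger.handlers.clear()
    handler = logging.StreamHandler(stream)
    handler.setFormatter(JsonFormatter(cloud, service))
    logger.addHandler(handler)
    return logger
```

A call such as `log.info("order placed", extra={"trace_id": trace_id})` then produces a line that a log aggregator can filter by `trace_id` across providers.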

Implement automated alerts and incident response workflows

Create alerting rules that trigger on anomalies and threshold breaches in your deployment metrics. Set up escalation workflows that automatically scale resources when CPU or memory usage spikes in your multi-cloud container orchestration setup. Configure Slack or PagerDuty integrations to notify your team when critical issues arise in your infrastructure.
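Anomaly-based alerting can start from something as simple as a z-score against recent history. The sketch below is one such rule; the function name and the default threshold of three standard deviations are assumptions, and managed monitoring services offer more robust detectors.

```python
import statistics


def is_anomalous(history: list[float], latest: float, z_threshold: float = 3.0) -> bool:
    """Flag `latest` if it is more than `z_threshold` standard deviations from the mean."""
    if len(history) < 2:
        return False  # not enough data to estimate spread
    mean = statistics.fmean(history)
    stdev = statistics.stdev(history)
    if stdev == 0:
        return latest != mean  # flat history: any change counts as an anomaly
    return abs(latest - mean) / stdev > z_threshold
```

A rule like this adapts to each service's normal range, which a fixed threshold cannot do, but it should be paired with a hard upper limit so that a slow drift does not go unnoticed.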

Debug common connectivity and performance issues

Network latency between clouds often causes the most headaches in multi-cloud deployments. Use tools like traceroute and network monitoring dashboards to identify routing problems between your AWS Fargate containers and other cloud services. Check security group configurations and firewall rules that might block inter-cloud communication.
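Before digging into routing, it often helps to confirm whether a TCP connection can be opened at all and how long it takes. The sketch below is a minimal probe using only the standard library; the function name and the default timeout are assumptions.

```python
import socket
import time


def check_tcp(host: str, port: int, timeout: float = 3.0) -> tuple[bool, float]:
    """Try to open a TCP connection; return (reachable, elapsed_seconds)."""
    start = time.monotonic()
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True, time.monotonic() - start
    except OSError:
        # Covers refused connections, timeouts, and DNS resolution failures.
        return False, time.monotonic() - start
```

A probe that times out rather than being refused usually points at a security group or firewall silently dropping packets, while an immediate refusal suggests the network path is open but nothing is listening on the port.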

Optimize resource utilization and cost tracking

Monitor your AWS Fargate deployment costs using AWS Cost Explorer, CloudWatch billing metrics, and third-party cost optimization tools. Right-size your containers based on actual usage patterns rather than estimated requirements. Implement auto-scaling policies that respond to real traffic patterns, and use Fargate Spot where possible to reduce expenses without giving up your performance standards.
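Fargate bills for the vCPU and memory a task requests for as long as it runs, so a rough monthly estimate is simple arithmetic. The sketch below deliberately takes the hourly rates as parameters because prices vary by region and change over time; the function name is hypothetical, and the rates in the usage note are placeholders, not real prices.

```python
def estimate_monthly_cost(task_count: int, vcpu: float, memory_gb: float,
                          hours: float, vcpu_hour_rate: float,
                          gb_hour_rate: float) -> float:
    """Estimate cost as tasks * hours * (vCPU * vCPU rate + GB * GB rate)."""
    per_task_hour = vcpu * vcpu_hour_rate + memory_gb * gb_hour_rate
    return task_count * hours * per_task_hour
```

For example, `estimate_monthly_cost(2, 0.5, 1.0, 730, vcpu_hour_rate=0.04, gb_hour_rate=0.004)` uses made-up rates to show the shape of the calculation. Comparing the estimate before and after a right-sizing change gives a quick sense of whether the change is worth rolling out.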

Running ADK-based applications across multiple cloud environments doesn’t have to be overwhelming when you have the right tools and approach. AWS Fargate takes care of the heavy lifting for container management, while Gemini CLI streamlines your development process and makes cross-cloud operations much smoother. The combination of solid architectural planning, proper monitoring setup, and clear integration patterns sets you up for success in even the most complex multi-cloud scenarios.

Ready to take your ADK implementation to the next level? Start small with a single cloud setup to get comfortable with the tools, then gradually expand your deployment across multiple environments. The investment in learning these technologies pays off quickly when you see how much easier it becomes to manage and scale your applications across different cloud platforms.