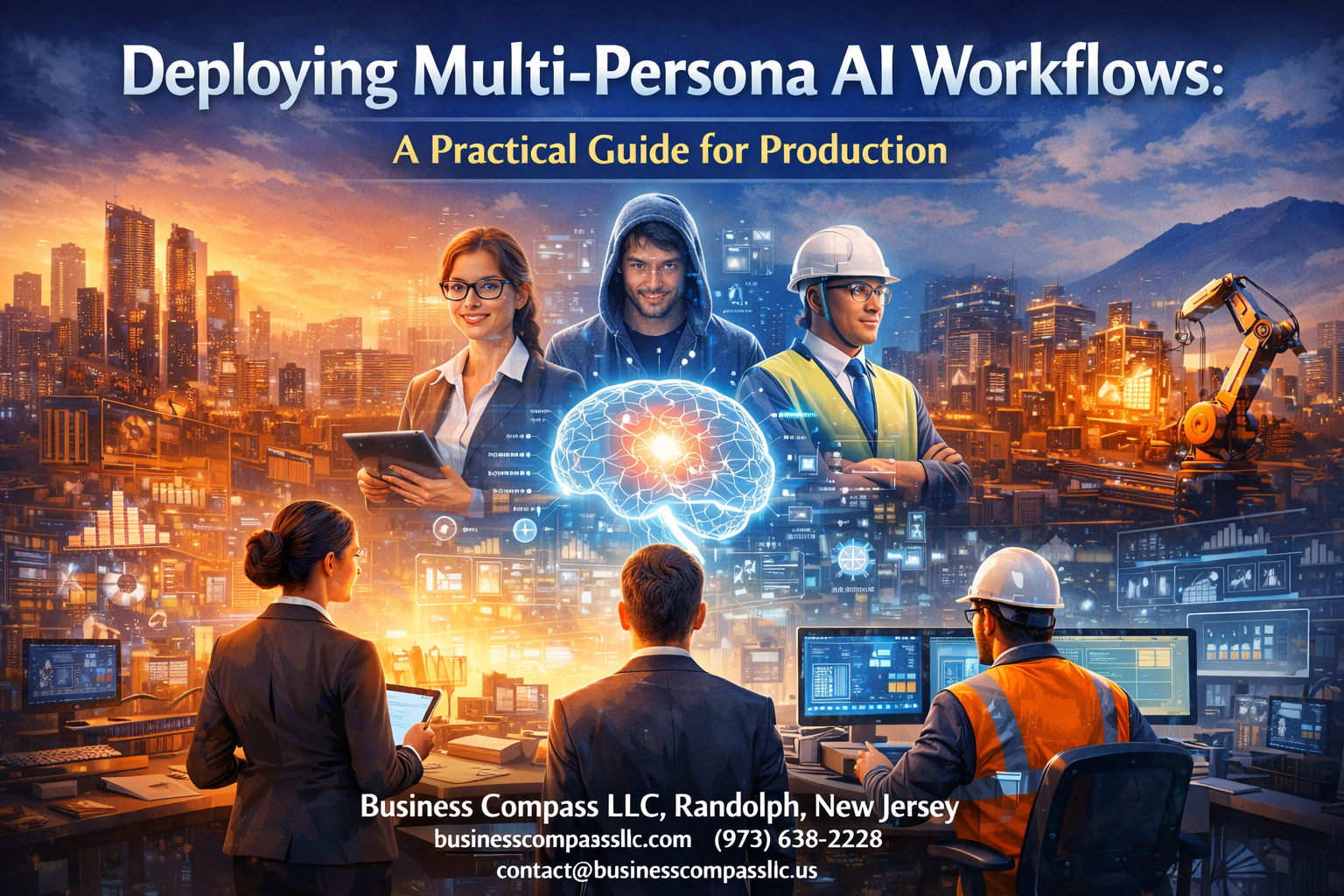

Multi-persona AI workflows are transforming how businesses handle complex automation tasks, but getting them ready for production requires careful planning and execution. This guide walks you through everything you need to deploy these systems successfully in real-world environments.

Who this guide is for: Engineering teams, AI practitioners, and technical leaders who need to move multi-persona AI workflows from development into production-ready systems.

You’ll learn how to design scalable persona management systems that can handle multiple AI agents working together without breaking down under pressure. We’ll also cover building robust development and testing frameworks that catch issues before they reach your users. Finally, you’ll discover proven strategies for optimizing performance and resource management while maintaining the security and compliance standards your organization demands.

The goal is simple: give you a practical roadmap for deploying multi-persona AI workflows that actually work when it matters most.

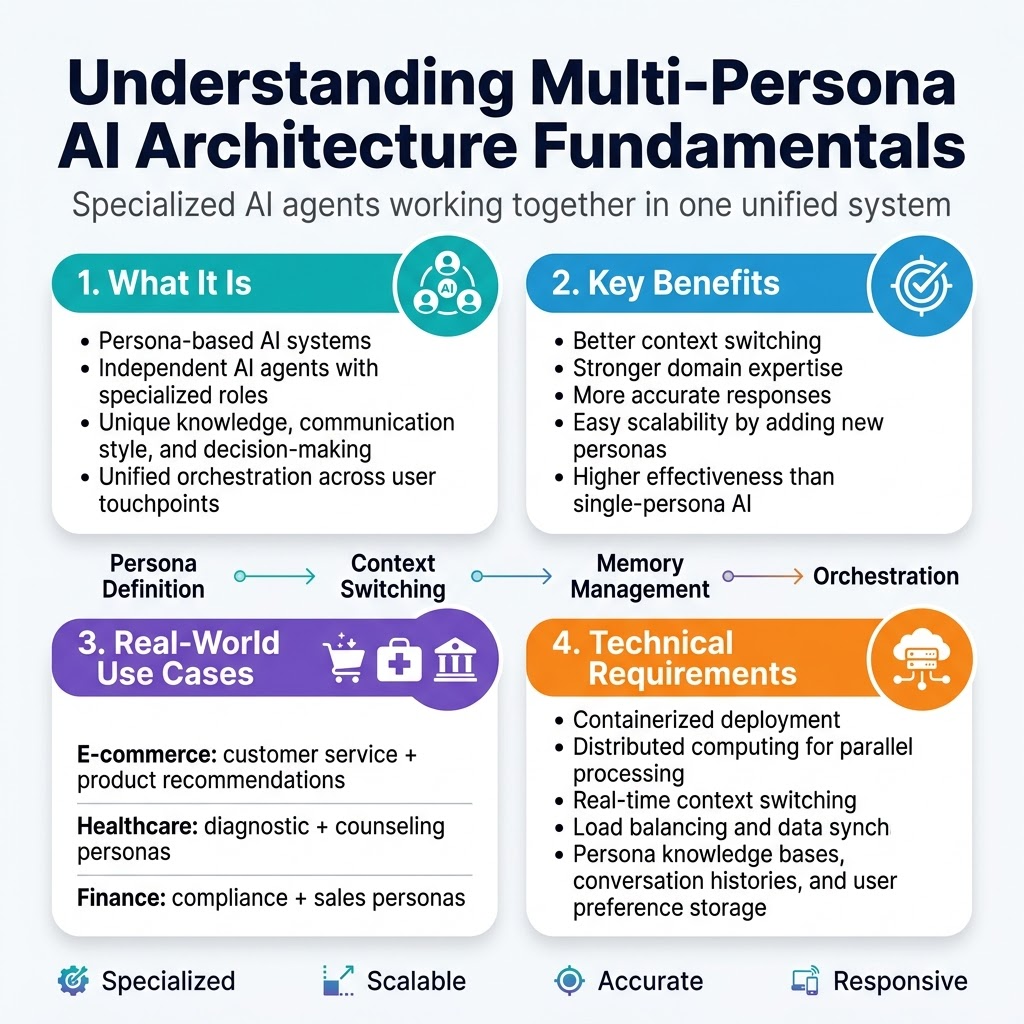

Understanding Multi-Persona AI Architecture Fundamentals

Define persona-based AI systems and their core components

Multi-persona AI workflows represent a paradigm shift from monolithic AI systems to specialized, role-based agents that handle distinct tasks within a unified framework. Each persona functions as an independent AI agent with specialized knowledge, communication styles, and decision-making capabilities tailored to specific domains or user interactions. Core components include persona definition engines, context switching mechanisms, memory management systems, and orchestration layers that coordinate between different AI personalities while maintaining coherent user experiences across various touchpoints.

Identify key benefits over single-persona approaches

Single-persona AI systems often struggle to switch context and to cover several domains in depth, which leads to generic responses. Multi-persona systems address this with specialized personas for each domain, whether customer service, technical support, or creative content generation. The approach lets the system adapt to user needs, can improve accuracy through domain-specific training, and scales more easily because new personas can be added without rebuilding the entire architecture.

Examine real-world use cases across industries

E-commerce platforms deploy customer service personas alongside product recommendation agents, creating seamless shopping experiences that adapt to user behavior patterns. Healthcare organizations utilize diagnostic personas for symptom analysis while maintaining separate counseling personas for patient communication. Financial institutions leverage compliance-focused personas for regulatory interactions and sales-oriented personas for customer acquisition, demonstrating how persona management systems enhance operational efficiency across diverse business requirements.

Assess technical requirements and infrastructure needs

Production AI workflows demand robust infrastructure capable of supporting concurrent persona execution, real-time context switching, and seamless data synchronization between agents. Key requirements include containerized deployment environments, distributed computing resources for parallel persona processing, and sophisticated load balancing systems. Storage solutions must accommodate persona-specific knowledge bases, conversation histories, and user preference data while ensuring low-latency access patterns that maintain responsive user interactions across all deployed personas.

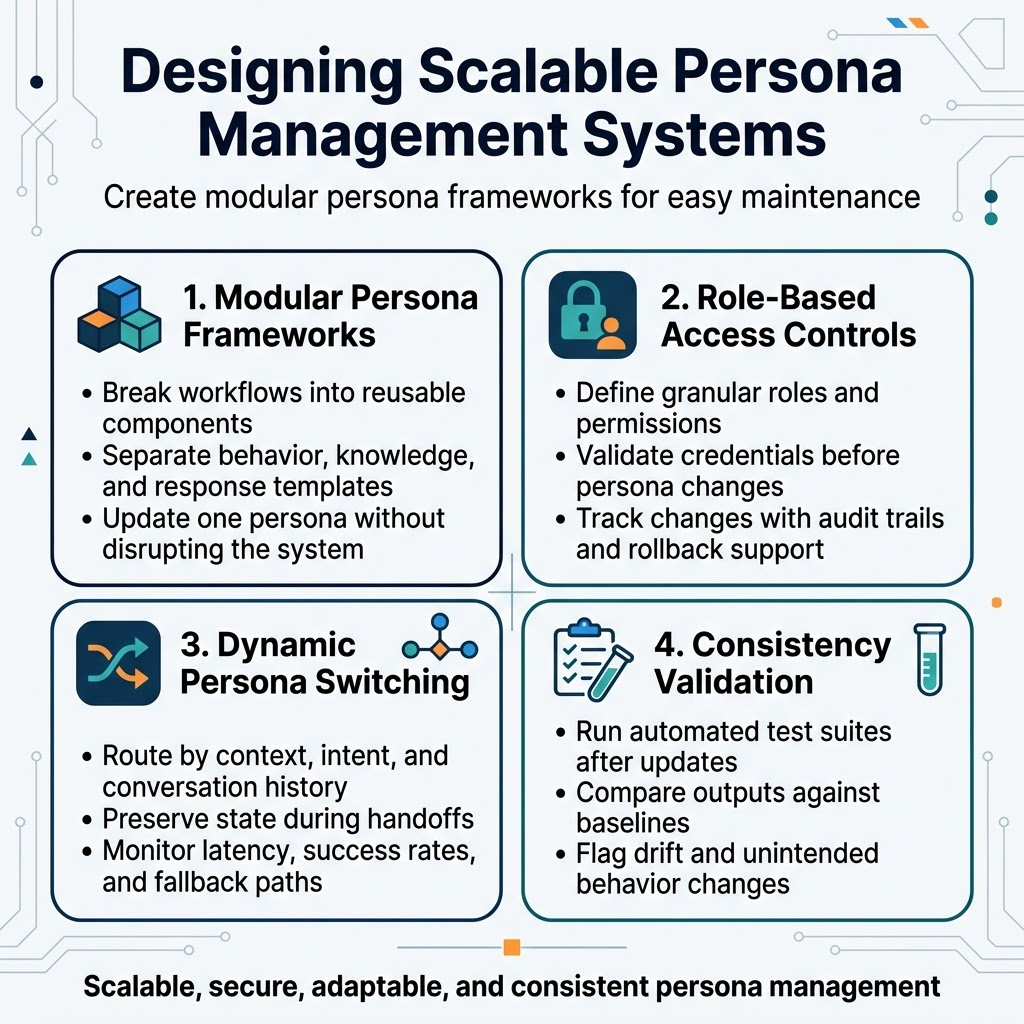

Designing Scalable Persona Management Systems

Create modular persona frameworks for easy maintenance

Breaking down multi-persona AI workflows into discrete, reusable components transforms complex systems into manageable building blocks. Each persona module should contain its specific behavioral patterns, knowledge bases, and response templates while maintaining clear interfaces for communication with other system components. This modular approach enables teams to update individual personas without disrupting the entire workflow, dramatically reducing maintenance overhead and deployment risks.
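As a minimal sketch of what such a module could look like, the snippet below bundles a persona's behavior, knowledge source, and templates behind one small interface. The names `Persona`, `PersonaRegistry`, and every field are hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass(frozen=True)
class Persona:
    """One self-contained persona module (all field names are illustrative)."""
    name: str
    system_prompt: str       # behavioral pattern
    knowledge_base: str      # identifier of a persona-specific data source
    templates: Dict[str, str] = field(default_factory=dict)

    def render(self, template_key: str, **values: str) -> str:
        """Fill one of this persona's response templates."""
        return self.templates[template_key].format(**values)


class PersonaRegistry:
    """The single interface other components use to look up personas."""

    def __init__(self) -> None:
        self._personas: Dict[str, Persona] = {}

    def register(self, persona: Persona) -> None:
        # Registering an existing name replaces only that persona.
        self._personas[persona.name] = persona

    def get(self, name: str) -> Persona:
        return self._personas[name]


registry = PersonaRegistry()
registry.register(Persona(
    name="support",
    system_prompt="You are a patient technical support agent.",
    knowledge_base="kb/support",
    templates={"greeting": "Hi {user}, how can I help with {product}?"},
))
```

Because callers only depend on `registry.get(...)`, updating one persona is a single `register` call that leaves the others untouched.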

Implement role-based access controls and permissions

Robust permission systems protect sensitive persona configurations while enabling appropriate team access. Define granular roles that map to organizational responsibilities: developers modify persona logic, content managers update knowledge bases, and administrators control system-wide settings. Authentication layers should validate user credentials before allowing persona modifications, with audit trails tracking all changes to maintain accountability and support rollback when needed.
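The role mapping described above can be sketched as a lookup table plus a check that records every attempt. The role names come from the text; the `Action` values, the exact permission sets, and the `authorize` helper are assumptions made for this example, and credential validation is left out.

```python
from datetime import datetime, timezone
from enum import Enum
from typing import Dict, List, Set


class Action(Enum):
    EDIT_PERSONA_LOGIC = "edit_persona_logic"
    EDIT_KNOWLEDGE_BASE = "edit_knowledge_base"
    EDIT_SYSTEM_SETTINGS = "edit_system_settings"


# Role names follow the text; the permission sets are an assumption.
ROLE_PERMISSIONS: Dict[str, Set[Action]] = {
    "developer": {Action.EDIT_PERSONA_LOGIC},
    "content_manager": {Action.EDIT_KNOWLEDGE_BASE},
    "administrator": {Action.EDIT_SYSTEM_SETTINGS},
}

audit_trail: List[dict] = []


def authorize(user: str, role: str, action: Action, persona: str) -> bool:
    """Return whether the role may perform the action, and record the attempt."""
    allowed = action in ROLE_PERMISSIONS.get(role, set())
    audit_trail.append({
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user": user,
        "role": role,
        "action": action.value,
        "persona": persona,
        "allowed": allowed,
    })
    return allowed
```

Denied attempts are logged as well as granted ones, since both matter for accountability.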

Build dynamic persona switching mechanisms

Real-time persona transitions require sophisticated routing logic that evaluates context, user intent, and conversation history to select optimal personas. Implement seamless handoff protocols that preserve conversation state and maintain context continuity across persona changes. The switching mechanism should include fallback strategies for edge cases and performance monitoring to track transition success rates and response latencies.
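To make the routing and handoff idea concrete, here is a deliberately simple sketch that scores personas by keyword matches, keeps the current persona when there is no signal, and records each handoff in the shared history. The keyword lists, persona names, and the `Conversation` type are hypothetical; a production router would more likely use an intent classifier.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Hypothetical routing table: persona name -> trigger keywords.
ROUTES: Dict[str, List[str]] = {
    "billing": ["invoice", "refund", "charge"],
    "technical_support": ["error", "crash", "install"],
}
FALLBACK_PERSONA = "general"


@dataclass
class Conversation:
    active_persona: str = FALLBACK_PERSONA
    history: List[str] = field(default_factory=list)  # state shared across personas


def select_persona(message: str, current: str) -> str:
    """Pick the persona with the most keyword hits; keep the current one on no signal."""
    words = [w.strip(".,!?") for w in message.lower().split()]
    scores = {p: sum(w in kws for w in words) for p, kws in ROUTES.items()}
    best, best_score = max(scores.items(), key=lambda kv: kv[1])
    return best if best_score > 0 else current


def handle(conv: Conversation, message: str) -> str:
    """Route one message, recording a handoff marker when the persona changes."""
    target = select_persona(message, conv.active_persona)
    if target != conv.active_persona:
        conv.history.append(f"[handoff {conv.active_persona} -> {target}]")
        conv.active_persona = target
    conv.history.append(message)
    return conv.active_persona
```

The history list travels with the conversation, so the persona that takes over sees everything said before the handoff.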

Establish persona consistency validation protocols

Automated testing frameworks verify that persona behavior remains consistent across updates and deployments. Create comprehensive test suites that evaluate response quality, adherence to persona characteristics, and proper handling of edge cases. Regular validation cycles should compare persona outputs against established baselines and flag deviations that could indicate configuration drift or unintended behavioral changes.
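One rough way to compare outputs against baselines is a text-similarity check, sketched below with the standard library's `difflib`. The `find_drift` function, the 0.8 threshold, and the baseline format are assumptions; lexical similarity is a crude proxy, and semantic or rubric-based scoring may suit real personas better.

```python
from difflib import SequenceMatcher
from typing import Callable, Dict, List


def find_drift(
    respond: Callable[[str], str],
    baselines: Dict[str, str],
    threshold: float = 0.8,
) -> List[str]:
    """Return the prompts whose current response diverges from its baseline.

    `respond` stands in for a call to the persona under test.
    """
    drifted = []
    for prompt, expected in baselines.items():
        actual = respond(prompt)
        similarity = SequenceMatcher(None, expected, actual).ratio()
        if similarity < threshold:
            drifted.append(prompt)
    return drifted
```

Running this after each persona update gives a short list of prompts to review by hand instead of a full manual regression pass.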

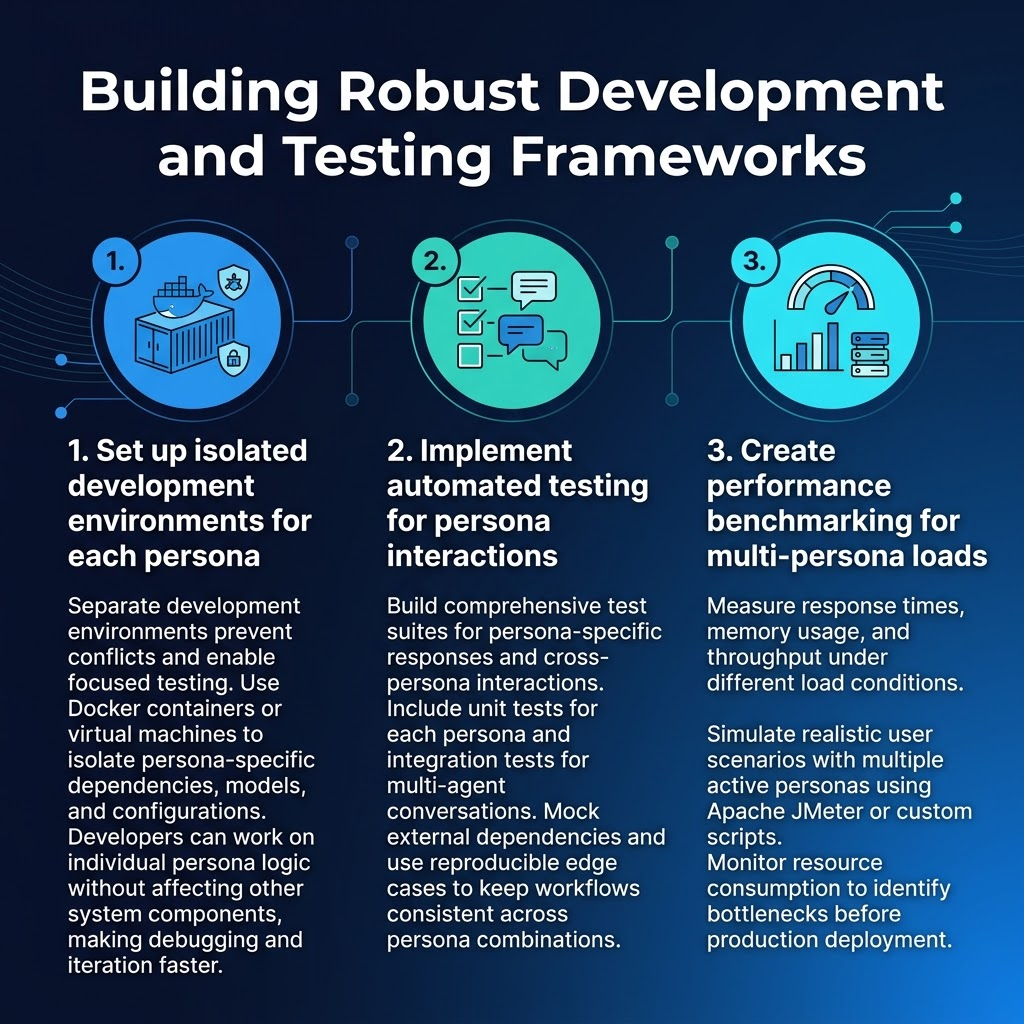

Building Robust Development and Testing Frameworks

Set up isolated development environments for each persona

Creating separate development environments for each persona prevents conflicts and enables focused testing. Use Docker containers or virtual machines to isolate persona-specific dependencies, models, and configurations. This approach allows developers to work on individual persona logic without affecting other system components, making debugging and iteration significantly faster.

Implement automated testing for persona interactions

Automated testing for multi-persona AI workflows requires comprehensive test suites that validate both individual persona behavior and cross-persona interactions. Design unit tests for persona-specific responses and integration tests for multi-agent conversations. Mock external dependencies and create reproducible test scenarios that cover edge cases, ensuring your production AI workflows maintain consistent performance across different persona combinations.
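The unit and integration layers might look like the following sketch, which uses `unittest.mock.Mock` to stand in for the model client. The `answer` and `escalate` functions and the client's `complete` method are hypothetical; substitute whatever interface your model provider exposes.

```python
import unittest
from unittest.mock import Mock


def answer(persona_prompt: str, user_message: str, model) -> str:
    """Single-persona call; `model.complete` is a hypothetical client method."""
    return model.complete(f"{persona_prompt}\n\nUser: {user_message}")


def escalate(user_message: str, triage_model, specialist_model) -> str:
    """Two-persona flow: a triage persona labels, a specialist persona answers."""
    label = answer("Classify as 'billing' or 'other'.", user_message, triage_model).strip()
    if label == "billing":
        return answer("You are a billing specialist.", user_message, specialist_model)
    return "Routing you to a general assistant."


class PersonaTests(unittest.TestCase):
    def test_single_persona_builds_prompt(self):
        model = Mock()
        model.complete.return_value = "ok"
        self.assertEqual(answer("Be brief.", "hi", model), "ok")
        model.complete.assert_called_once_with("Be brief.\n\nUser: hi")

    def test_cross_persona_handoff(self):
        triage, specialist = Mock(), Mock()
        triage.complete.return_value = "billing\n"
        specialist.complete.return_value = "Refund issued."
        self.assertEqual(escalate("refund please", triage, specialist), "Refund issued.")

    def test_other_label_skips_specialist(self):
        triage, specialist = Mock(), Mock()
        triage.complete.return_value = "other"
        escalate("what's the weather?", triage, specialist)
        specialist.complete.assert_not_called()
```

Saved as a test module, this runs with `python -m unittest`. Mocking the model keeps the scenarios reproducible, at the cost of not exercising real model behavior.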

Create performance benchmarking for multi-persona loads

Performance benchmarking becomes critical when deploying multi-persona systems that handle concurrent persona interactions. Establish baseline metrics for response times, memory usage, and throughput under various load conditions. Use tools like Apache JMeter or custom scripts to simulate realistic user scenarios with multiple active personas. Monitor resource consumption patterns to identify bottlenecks before they affect production.
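A custom benchmarking script can be quite small. The sketch below fires concurrent requests at several personas and reports median latency, 95th-percentile latency, and throughput. `fake_persona_call` is a placeholder that just sleeps; replace it with a real request to the system under test.

```python
import statistics
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Dict, List


def fake_persona_call(persona: str) -> str:
    """Placeholder for a real persona request (assumption: about 10 ms of work)."""
    time.sleep(0.01)
    return f"{persona}: ok"


def timed_call(persona: str) -> float:
    start = time.perf_counter()
    fake_persona_call(persona)
    return time.perf_counter() - start


def benchmark(personas: List[str], requests_per_persona: int = 20,
              concurrency: int = 8) -> Dict[str, float]:
    """Run all requests through a thread pool and summarize the latencies."""
    jobs = [p for p in personas for _ in range(requests_per_persona)]
    wall_start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        latencies = list(pool.map(timed_call, jobs))
    wall = time.perf_counter() - wall_start
    # 99 cut points; index 94 is the 95th percentile.
    cuts = statistics.quantiles(latencies, n=100, method="inclusive")
    return {
        "requests": len(jobs),
        "p50_s": statistics.median(latencies),
        "p95_s": cuts[94],
        "throughput_rps": len(jobs) / wall,
    }
```

Recording these numbers for each release gives you the baseline that later runs are compared against.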

Implementing Production-Ready Deployment Strategies

Configure containerized deployment for persona isolation

Containerization provides the foundation for reliable multi-persona AI workflows in production environments. Docker containers ensure each AI persona operates within isolated environments, preventing resource conflicts and maintaining consistent behavior across deployments. Configure separate containers for each persona with dedicated CPU and memory allocations to guarantee performance stability. Kubernetes orchestration enables automatic scaling and resource management, allowing your production AI workflows to handle varying workload demands efficiently.

Set up load balancing for optimal persona distribution

Intelligent load balancing ensures requests reach the appropriate persona containers while maintaining optimal resource utilization across your cluster. Implement weighted routing algorithms that consider persona-specific processing requirements and current container health status. Configure health checks that monitor persona response times and accuracy metrics and automatically redirect traffic away from underperforming instances. This approach maximizes throughput while keeping the user experience consistent across personas.
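As an illustration of weighted routing combined with health checks, the sketch below filters out instances whose latency exceeds a limit and then picks among the rest in proportion to their weights. The `Instance` fields and the 800 ms limit are assumptions; in practice this logic usually lives in the load balancer or service mesh rather than in application code.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Instance:
    name: str
    weight: float           # relative share of traffic when healthy
    p95_latency_ms: float   # most recent health-check measurement


def is_healthy(instance: Instance, max_latency_ms: float = 800.0) -> bool:
    return instance.p95_latency_ms <= max_latency_ms


def pick_instance(instances: List[Instance], rng: random.Random) -> Optional[Instance]:
    """Weighted random choice among healthy instances; None if all are unhealthy."""
    healthy = [i for i in instances if is_healthy(i)]
    if not healthy:
        return None
    return rng.choices(healthy, weights=[i.weight for i in healthy], k=1)[0]
```

Returning `None` when nothing is healthy forces the caller to decide on a fallback, such as queueing the request or returning an error.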

Establish monitoring and alerting systems

Comprehensive monitoring tracks persona performance metrics, resource consumption, and response quality in real-time. Set up custom dashboards displaying persona-specific latency, throughput, and error rates alongside system-level metrics like CPU usage and memory consumption. Configure intelligent alerts that trigger when personas exceed response time thresholds or accuracy drops below acceptable levels. Integrate logging systems that capture persona interactions for debugging and performance analysis, enabling proactive identification of potential issues before they impact users.
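The threshold alerts described above can be sketched as a rolling window of samples per persona. The `PersonaMonitor` class and its default thresholds are hypothetical, and a real deployment would normally delegate this to a metrics and alerting stack.

```python
import math
from collections import deque
from typing import Deque, List, Tuple


class PersonaMonitor:
    """Rolling window of (latency, success) samples for one persona."""

    def __init__(self, persona: str, window: int = 100,
                 max_p95_ms: float = 1500.0, max_error_rate: float = 0.05) -> None:
        self.persona = persona
        self.max_p95_ms = max_p95_ms
        self.max_error_rate = max_error_rate
        self._samples: Deque[Tuple[float, bool]] = deque(maxlen=window)

    def record(self, latency_ms: float, ok: bool) -> None:
        self._samples.append((latency_ms, ok))

    def alerts(self) -> List[str]:
        """Return one message per threshold currently exceeded."""
        if not self._samples:
            return []
        latencies = sorted(lat for lat, _ in self._samples)
        p95 = latencies[math.ceil(0.95 * len(latencies)) - 1]  # nearest-rank percentile
        error_rate = sum(1 for _, ok in self._samples if not ok) / len(self._samples)
        found = []
        if p95 > self.max_p95_ms:
            found.append(f"{self.persona}: p95 latency {p95:.0f} ms over limit")
        if error_rate > self.max_error_rate:
            found.append(f"{self.persona}: error rate {error_rate:.0%} over limit")
        return found
```

A bounded window keeps memory constant and lets alerts clear on their own once recent traffic recovers.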

Create rollback procedures for failed deployments

Automated rollback procedures protect your production environment from deployment failures and performance degradation. Implement version control for persona configurations and model weights, enabling rapid restoration to previously stable states. Configure deployment pipelines that automatically trigger rollbacks when health checks fail or performance metrics drop below defined thresholds. Create manual override procedures for emergency situations so operations teams can quickly revert changes even when automated checks miss a critical issue.
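The rollback flow can be sketched as a version history with a health check gating each deploy. `PersonaDeployment` and the shape of the config dictionaries are assumptions for illustration; real pipelines would store versions in source control or an artifact registry.

```python
from typing import Callable, List


class PersonaDeployment:
    """Keeps a history of persona configs so a failed deploy can be reverted."""

    def __init__(self, initial_config: dict) -> None:
        self._history: List[dict] = [initial_config]

    @property
    def active(self) -> dict:
        return self._history[-1]

    def deploy(self, new_config: dict, health_check: Callable[[dict], bool]) -> bool:
        """Activate the new config; roll back automatically if the check fails."""
        self._history.append(new_config)
        if health_check(new_config):
            return True
        self.rollback()
        return False

    def rollback(self) -> None:
        """Manual override: revert to the previous version, if there is one."""
        if len(self._history) > 1:
            self._history.pop()
```

The same `rollback` method serves both paths: the pipeline calls it when a health check fails, and an operator can call it directly.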

Implement blue-green deployment for zero downtime

Blue-green deployment strategies avoid service interruptions during persona updates and system maintenance. Maintain two parallel production environments: blue serves live traffic while green hosts the new release for testing. Once persona behavior and performance metrics in green meet production standards, switch traffic from blue to green. If you prefer to shift traffic gradually, that is a canary-style rollout layered on the same setup. Blue stays intact after the switch, so rolling back is a matter of pointing traffic at it again.
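A minimal sketch of the switch itself, assuming two named environments and a validation callback, is shown below. `BlueGreenRouter` is a hypothetical name; in a real system the "switch" is typically a load balancer or DNS change rather than an attribute assignment.

```python
from typing import Callable, Dict, Optional


class BlueGreenRouter:
    """Tracks which of two environments serves live traffic."""

    def __init__(self, live_version: str) -> None:
        self.environments: Dict[str, Optional[str]] = {"blue": live_version, "green": None}
        self.live = "blue"

    @property
    def idle(self) -> str:
        return "green" if self.live == "blue" else "blue"

    @property
    def live_version(self) -> Optional[str]:
        return self.environments[self.live]

    def stage(self, version: str) -> None:
        """Deploy a new version to the idle environment; live traffic is unaffected."""
        self.environments[self.idle] = version

    def promote(self, validate: Callable[[str], bool]) -> bool:
        """Switch traffic to the idle environment only if validation passes."""
        candidate = self.environments[self.idle]
        if candidate is None or not validate(candidate):
            return False
        self.live = self.idle
        return True

    def rollback(self) -> None:
        """Point traffic back at the other environment, which still holds the old version."""
        if self.environments[self.idle] is not None:
            self.live = self.idle
```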

Optimizing Performance and Resource Management

Monitor system resource allocation across personas

Effective resource monitoring forms the backbone of successful multi-persona AI workflows in production environments. Track CPU, memory, and GPU utilization patterns for each persona to identify bottlenecks before they impact performance. Real-time dashboards should display resource consumption metrics, allowing teams to spot unusual spikes or degradation trends. Set up automated alerts when individual personas exceed predetermined resource thresholds, enabling proactive scaling decisions.

Implement caching strategies for frequently used personas

Smart caching reduces computational overhead by storing persona states and common response patterns. Deploy Redis or a similar in-memory store to cache persona configurations, conversation contexts, and frequently accessed model outputs, and set expiry times so stale entries do not outlive a persona update. This improves response times and reduces server load.
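The caching pattern is sketched below with a small in-process cache that expires entries after a fixed time. It is a stand-in for Redis, not a Redis client; the `TTLCache` class and its `get_or_compute` method are hypothetical names.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TTLCache:
    """In-process stand-in for an external cache, with per-entry expiry."""

    def __init__(self, ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so expiry can be tested without sleeping
        self._entries: Dict[str, Tuple[float, Any]] = {}

    def get_or_compute(self, key: str, compute: Callable[[], Any]) -> Any:
        """Return the cached value if still fresh, otherwise compute and store it."""
        now = self._clock()
        hit = self._entries.get(key)
        if hit is not None and hit[0] > now:
            return hit[1]
        value = compute()
        self._entries[key] = (now + self._ttl, value)
        return value
```

A typical call would be `cache.get_or_compute("persona:support:config", load_config)`, where `load_config` is whatever slow lookup you want to avoid repeating.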

Configure auto-scaling based on persona demand

Dynamic scaling ensures your multi-persona AI workflows handle varying workloads efficiently. Configure Kubernetes horizontal pod autoscalers to scale on persona-specific metrics like request queue length and response latency. These are not built-in resource metrics, so they must be exposed through the custom or external metrics APIs. Implement predictive scaling algorithms that anticipate demand patterns based on historical usage data, preventing performance degradation during peak periods.
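To reason about how an autoscaler will react, it helps to compute the replica count by hand. The Kubernetes documentation gives the core rule as desired replicas = ceil(current replicas × current metric / target metric), skipped when the ratio is within a tolerance (0.1 by default). The function below reproduces that rule; the parameter names and the min/max defaults are this example's own.

```python
import math


def desired_replicas(current_replicas: int, current_metric: float, target_metric: float,
                     min_replicas: int = 1, max_replicas: int = 20,
                     tolerance: float = 0.1) -> int:
    """Replica count for one metric, following the documented HPA scaling rule."""
    ratio = current_metric / target_metric
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas  # close enough to target: no scaling
    wanted = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, wanted))
```

For example, four replicas with an average queue length of 30 against a target of 10 would scale to twelve. The real controller also handles missing metrics, stabilization windows, and multiple metrics, which this sketch ignores.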

Optimize memory usage and response times

Memory optimization directly impacts the scalability of production AI workflows. Apply model quantization to reduce memory footprint, and validate accuracy against your baselines afterward, since quantization can cost some accuracy. Use connection pooling for database interactions and lazy loading for persona initialization. Profile memory allocation patterns regularly to identify leaks or inefficient usage that could compromise system stability.
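Lazy loading with a memory bound can be sketched with `functools.lru_cache`: a persona's model is loaded on first use, and only the most recently used ones stay resident. The loader body, the `load_calls` list, and the limit of two are placeholders for illustration.

```python
from functools import lru_cache
from typing import List

load_calls: List[str] = []  # records each expensive load, for illustration only


@lru_cache(maxsize=2)  # keep at most two persona models in memory
def get_persona_model(name: str) -> dict:
    """Load a persona's model on first use; later calls reuse the cached object."""
    load_calls.append(name)
    # Placeholder for an expensive load from disk or a model registry.
    return {"persona": name, "weights": f"<weights for {name}>"}
```

When a third persona is requested, the least recently used one is evicted and will be reloaded on its next use, trading some latency for a predictable memory ceiling.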

Ensuring Security and Compliance Standards

Implement data isolation between different personas

Creating secure boundaries between AI personas prevents data leakage and maintains user trust. Each persona should operate within its own isolated environment with dedicated memory spaces, separate API endpoints, and distinct data access controls. This compartmentalization ensures that sensitive information processed by one persona remains completely inaccessible to others, even when they run on shared infrastructure.
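At the application level, one way to express this boundary is to hand each persona a scoped handle that can only reach its own data. The `IsolatedStore` and `PersonaScope` classes below are hypothetical and enforce separation only within one process; real isolation would also rely on separate credentials, databases, or containers.

```python
from typing import Dict, Optional


class PersonaScope:
    """Handle given to one persona; it can only reach that persona's bucket."""

    def __init__(self, bucket: Dict[str, str]) -> None:
        self._bucket = bucket

    def put(self, key: str, value: str) -> None:
        self._bucket[key] = value

    def get(self, key: str) -> Optional[str]:
        return self._bucket.get(key)


class IsolatedStore:
    """Owns one bucket per persona and never exposes another persona's bucket."""

    def __init__(self) -> None:
        self._buckets: Dict[str, Dict[str, str]] = {}

    def scope_for(self, persona: str) -> PersonaScope:
        return PersonaScope(self._buckets.setdefault(persona, {}))
```

Because persona code only ever receives a `PersonaScope`, there is no call it can make that names another persona's data.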

Configure audit trails for persona activities

Comprehensive logging captures every interaction, decision, and data access across all persona instances. Track user requests, model responses, data transformations, and system events with detailed timestamps and unique identifiers. These audit trails support security investigations, compliance reporting, and performance analysis while enabling rapid identification of anomalous behavior patterns.
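A sketch of such an audit record is shown below, with a timestamp and unique identifier per event. It also chains each entry to the previous one by hash so that later edits are detectable; that chaining is an addition made for this example, not something the approach above requires, and all function and field names are hypothetical.

```python
import hashlib
import json
import uuid
from datetime import datetime, timezone
from typing import List

GENESIS = "0" * 64  # prev_hash value for the first entry


def _digest(entry: dict) -> str:
    """Hash every field except the stored hash itself."""
    payload = json.dumps({k: v for k, v in entry.items() if k != "hash"}, sort_keys=True)
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_event(log: List[dict], persona: str, event_type: str, detail: str) -> dict:
    """Append one audit entry linked to the previous entry's hash."""
    entry = {
        "id": str(uuid.uuid4()),
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "persona": persona,
        "type": event_type,
        "detail": detail,
        "prev_hash": log[-1]["hash"] if log else GENESIS,
    }
    entry["hash"] = _digest(entry)
    log.append(entry)
    return entry


def verify(log: List[dict]) -> bool:
    """Check that no entry was altered, removed, or reordered."""
    prev = GENESIS
    for entry in log:
        if entry["prev_hash"] != prev or entry["hash"] != _digest(entry):
            return False
        prev = entry["hash"]
    return True
```

Each entry serializes cleanly with `json.dumps`, so the log can be written as one JSON object per line and shipped to whatever log store you already use.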

Establish compliance protocols for regulated industries

Financial services, healthcare, and government sectors require strict adherence to industry-specific regulations. Design your multi-persona AI workflows to meet GDPR, HIPAA, SOX, and other regulatory requirements through automated compliance checks, data retention policies, and regular certification processes. Document all compliance measures and maintain updated procedures for regulatory audits.

Create incident response procedures for security breaches

Develop clear escalation paths and containment strategies for security incidents that affect your personas. Establish automated threat detection systems that monitor unusual persona behavior, unauthorized access attempts, and data exfiltration patterns. Define roles, responsibilities, and communication protocols to minimize damage and restore normal operations quickly during security events.

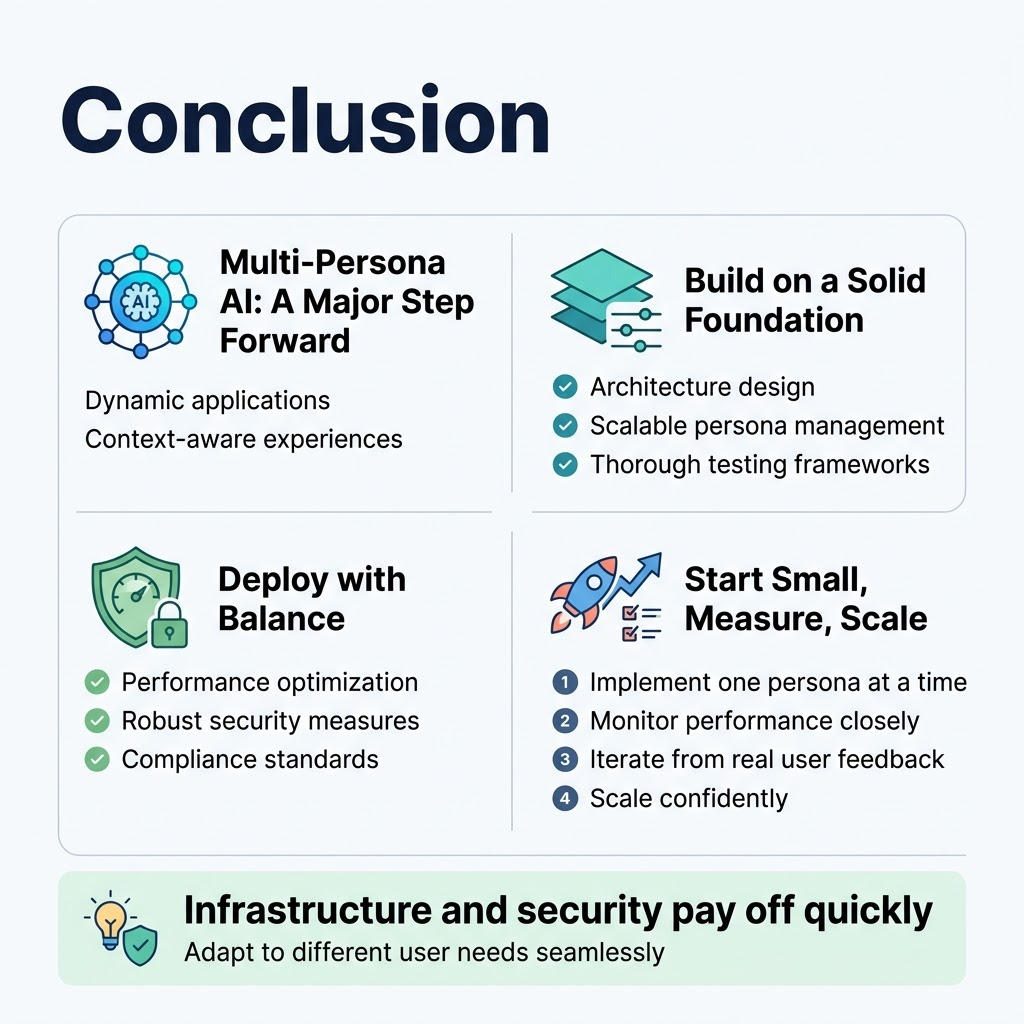

Multi-persona AI systems represent a significant step forward in creating more dynamic and context-aware applications. The key to success lies in building a solid foundation through proper architecture design, scalable persona management, and thorough testing frameworks. Your deployment strategy needs to balance performance optimization with robust security measures while maintaining compliance standards that protect both your organization and users.

Getting started doesn’t require perfection from day one. Focus on implementing one persona at a time, monitor performance closely, and iterate based on real user feedback. The investment in proper infrastructure and security protocols pays off quickly when your system can adapt to different user needs seamlessly. Start small, measure everything, and scale confidently knowing your multi-persona AI workflow can handle whatever production throws at it.