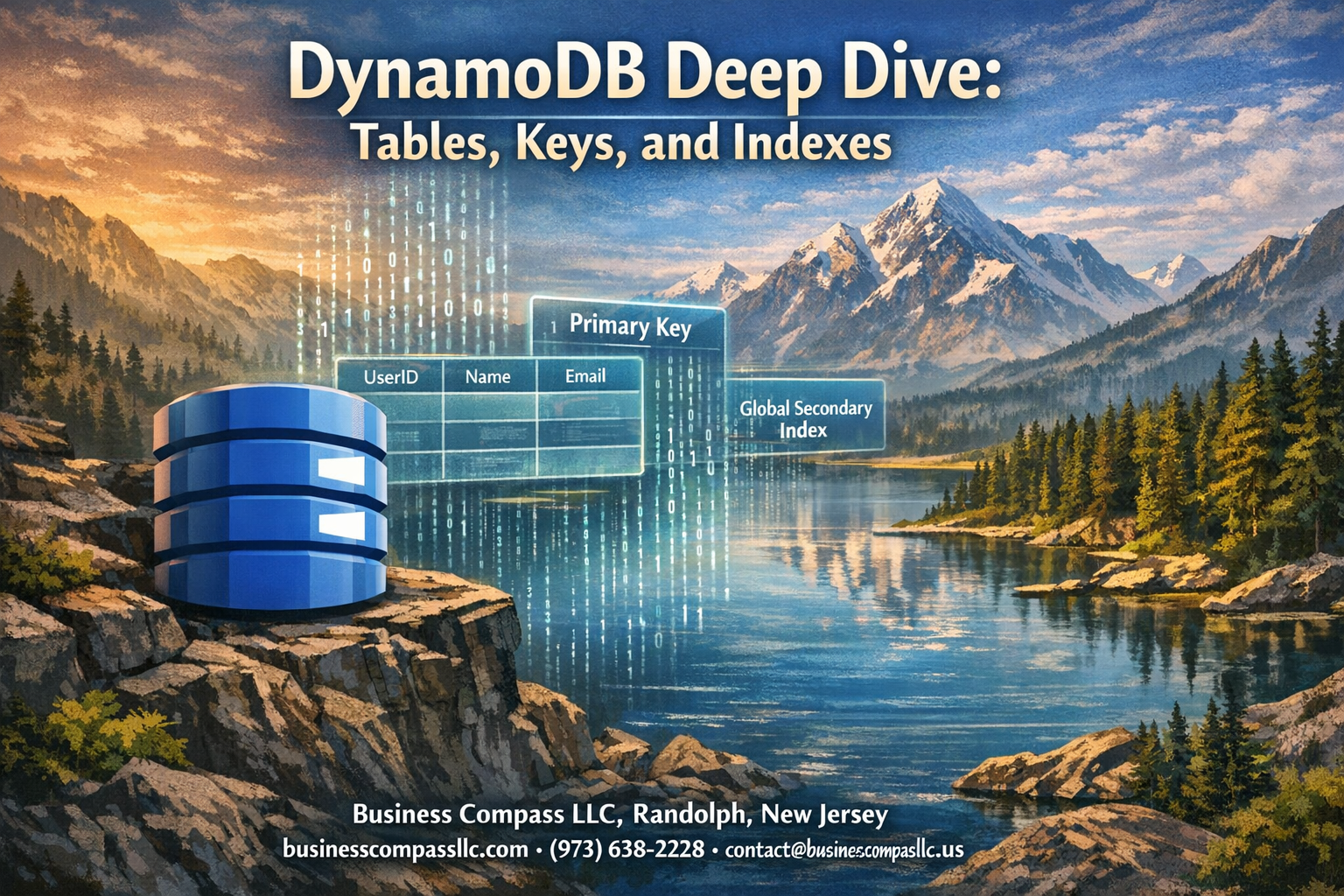

Amazon DynamoDB powers some of the world’s largest applications, but getting started with its tables, keys, and indexes can feel overwhelming. This AWS DynamoDB tutorial is designed for developers, cloud architects, and anyone building applications that need fast, scalable NoSQL database solutions.

DynamoDB’s unique architecture sets it apart from traditional databases, and understanding its core components is essential for building high-performance applications. We’ll break down how DynamoDB tables work differently from SQL databases, explore the critical role of primary keys in DynamoDB performance optimization, and show you how secondary indexes unlock advanced query capabilities.

You’ll learn practical DynamoDB schema design strategies that prevent common bottlenecks, discover DynamoDB best practices for data modeling that scale with your application, and see real examples of NoSQL database design patterns that work in production. By the end, you’ll have the knowledge to design DynamoDB architectures that deliver consistent performance at any scale.

Understanding DynamoDB Architecture and Core Components

NoSQL Database Benefits for Modern Applications

NoSQL databases like DynamoDB eliminate the rigid structure of traditional relational databases, making them perfect for handling unpredictable data patterns and rapid scaling demands. Unlike SQL databases that require predefined schemas, DynamoDB architecture allows developers to store different data structures within the same table, giving applications the flexibility to evolve without costly migrations.

DynamoDB’s Serverless Model and Automatic Scaling

DynamoDB’s serverless design means you never worry about provisioning servers or managing infrastructure. The service automatically adjusts capacity based on traffic patterns, scaling up during peak loads and down during quiet periods. This approach eliminates the guesswork of capacity planning while ensuring consistent performance for your applications.

Regional Distribution and Multi-AZ Availability

Amazon deploys DynamoDB across multiple Availability Zones within each region, providing built-in redundancy and fault tolerance. Your DynamoDB tables automatically replicate data across these zones, ensuring high availability even if an entire data center experiences issues. Global Tables extend this protection across multiple AWS regions for worldwide disaster recovery.

Integration with AWS Ecosystem Services

DynamoDB seamlessly connects with the broader AWS ecosystem, making it a natural choice for cloud-native applications. Lambda functions can trigger on table changes, CloudWatch monitors performance metrics, and IAM controls access permissions. This tight integration simplifies development workflows and reduces the complexity of building scalable applications in the cloud.

Mastering DynamoDB Tables Structure and Design

Creating Tables with Optimal Configuration Settings

DynamoDB table creation starts with selecting the right billing mode for your workload. On-demand billing works perfectly for unpredictable traffic patterns, while provisioned capacity gives you cost control with steady usage. When naming your DynamoDB tables, choose descriptive names that reflect your application’s purpose and avoid generic terms. Enable point-in-time recovery during setup to protect against accidental data loss. Stream configuration should align with your integration needs – DynamoDB Streams capture data changes for real-time processing with AWS Lambda or other services.

Choosing the Right Data Types for Maximum Efficiency

String, number, and binary data types form the foundation of efficient DynamoDB schema design. Strings work best for text content and identifiers, while numbers handle integers and decimals without precision loss. Binary types store images, documents, or encrypted data efficiently. Complex data structures benefit from DynamoDB’s native support for lists, maps, and sets. When designing your schema, keep attribute names short to reduce storage costs and network overhead.

Setting Up Read and Write Capacity Units

Provisioned capacity requires careful planning based on your application’s access patterns. One write capacity unit handles 1KB per second, while read capacity units depend on consistency requirements – eventually consistent reads consume half the units of strongly consistent reads. Auto scaling adjusts capacity automatically based on traffic, preventing throttling during peak usage. Reserved capacity offers significant cost savings for predictable workloads running consistently over time.

Implementing Table-Level Encryption and Security

DynamoDB provides multiple encryption options to secure your data at rest and in transit. AWS managed encryption keys offer simplicity, while customer-managed keys in AWS KMS provide granular access control. Encryption in transit happens automatically through HTTPS endpoints. IAM policies control table access at granular levels, allowing you to restrict operations by user, role, or resource. VPC endpoints keep DynamoDB traffic within your private network, adding an extra security layer for sensitive applications.

Primary Keys Strategy for Optimal Performance

Partition Key Selection for Even Data Distribution

Choosing the right partition key determines how DynamoDB distributes your data across multiple partitions, directly impacting performance and costs. A well-designed partition key creates uniform access patterns, preventing bottlenecks that occur when too much traffic hits a single partition. Consider using high-cardinality attributes like user IDs, device identifiers, or timestamps combined with random suffixes to spread data evenly across your table’s infrastructure.

Composite Keys with Sort Keys for Complex Queries

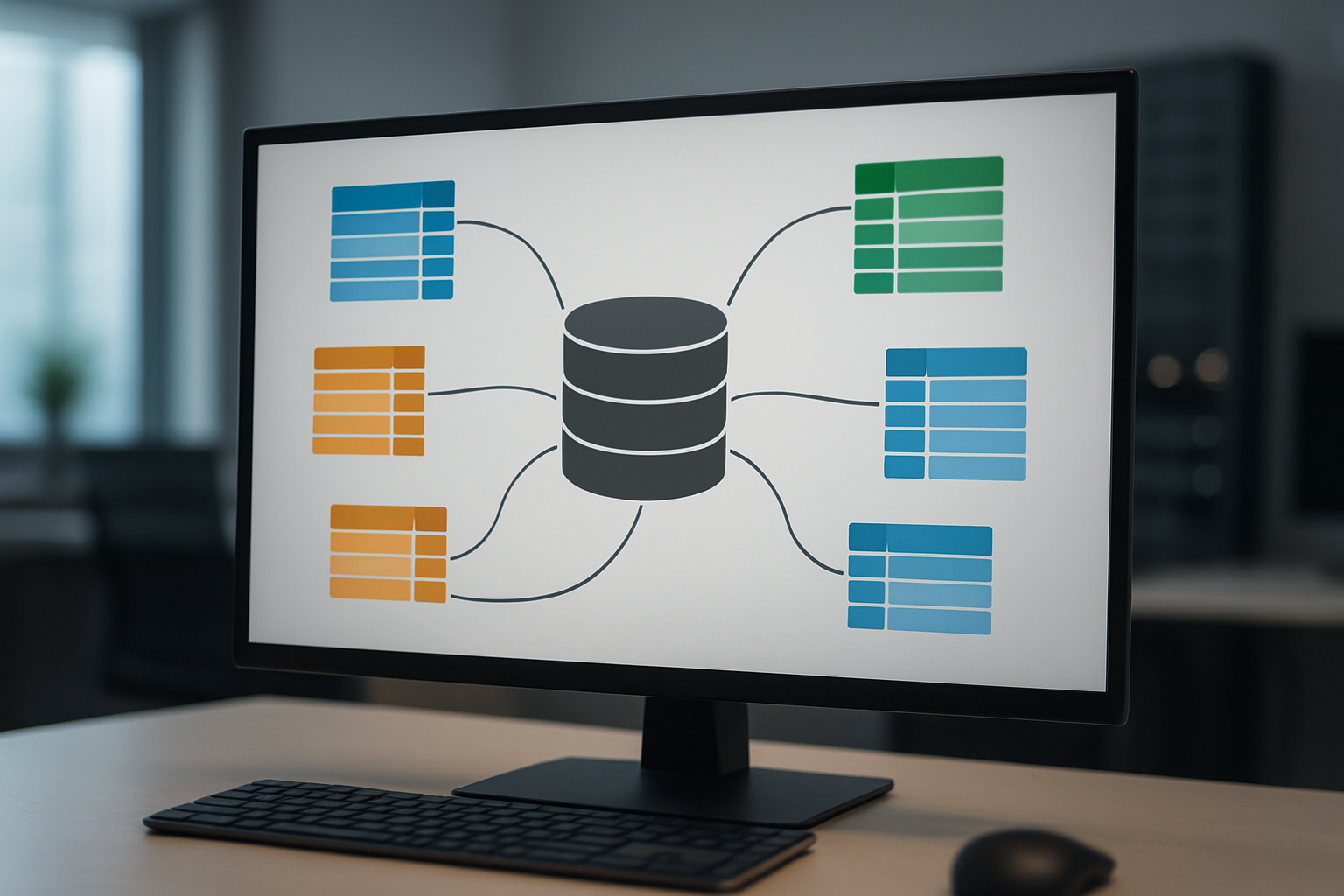

DynamoDB primary keys support composite structures using both partition keys and sort keys, enabling sophisticated query patterns within a single table design. This approach allows you to model hierarchical relationships and perform range queries efficiently. For example, using “CustomerID” as your partition key and “OrderDate” as your sort key lets you retrieve all orders for a specific customer within a date range using a single, optimized query operation.

Avoiding Hot Partitions Through Smart Key Design

Hot partitions occur when your DynamoDB table receives disproportionate traffic on specific partition key values, creating performance bottlenecks and throttling issues. Smart key design distributes read and write operations evenly by avoiding sequential patterns, popular values, or time-based keys that concentrate activity. Techniques like adding random prefixes, using composite attributes, or implementing write sharding help maintain consistent performance across your entire dataset.

Secondary Indexes for Advanced Query Flexibility

Global Secondary Indexes for Cross-Partition Queries

Global Secondary Indexes (GSIs) transform DynamoDB query capabilities by allowing you to search data using completely different partition and sort keys than your main table. Unlike your primary table structure, GSIs can span across all partitions, giving you the freedom to query data patterns that would otherwise require expensive scan operations.

Creating effective GSIs means thinking about your access patterns upfront. You can define up to 20 GSIs per table, each with its own provisioned throughput settings. The key advantage is query flexibility – you can retrieve data based on attributes that aren’t part of your primary key structure, making your DynamoDB tables more versatile for complex applications.

Local Secondary Indexes for Enhanced Sort Options

Local Secondary Indexes (LSIs) share the same partition key as your main table but offer alternative sort key options. You can only create LSIs during table creation, and they’re limited to 10GB per partition key value. This constraint makes LSIs perfect for scenarios where you need different sorting arrangements within the same logical data group.

LSIs excel when you need multiple query patterns on the same partition. For example, if your partition key is “CustomerID,” you could create LSIs to sort by “OrderDate,” “TotalAmount,” or “ProductCategory” without duplicating data across partitions.

Index Projection Types and Storage Optimization

DynamoDB secondary indexes offer three projection types that directly impact storage costs and query performance. KEYS_ONLY projections include only the index keys and primary table keys, minimizing storage while requiring additional reads for non-key attributes. ALL projections copy every table attribute to the index, maximizing query speed but increasing storage costs significantly.

INCLUDE projections provide the perfect middle ground, allowing you to specify exactly which non-key attributes to copy. This selective approach optimizes both performance and cost by including frequently accessed attributes while excluding rarely used data. Smart projection choices can reduce your DynamoDB bills by 30-50% while maintaining query efficiency.

Cost Management Strategies for Index Usage

Index costs stack up quickly since each secondary index maintains its own storage and throughput capacity. Monitor your index utilization through CloudWatch metrics to identify underused indexes that drain your budget. Consider consolidating similar access patterns into fewer, more efficient indexes rather than creating separate indexes for every possible query.

Implement auto-scaling for index throughput and regularly audit your projection strategies. GSIs with ALL projections can double your storage costs, so evaluate whether INCLUDE projections meet your needs. Delete unused indexes promptly and consider sparse indexes where only items with specific attributes create index entries, reducing overall storage requirements.

Best Practices for Schema Design and Data Modeling

Single Table Design Patterns for Cost Efficiency

DynamoDB schema design works best when you store multiple entity types in a single table using composite primary keys. This approach dramatically reduces costs by minimizing the number of tables you provision while maximizing throughput efficiency. Smart partition key design combined with sort keys creates natural data groupings.

Single table patterns shine when you map your access patterns to hierarchical sort key structures. You can store users, orders, and products together by crafting keys like USER#123 and ORDER#456#USER#123. This technique eliminates expensive cross-table joins and reduces your AWS bill significantly.

Access Pattern Analysis and Query Optimization

Start every DynamoDB project by mapping out exactly how your application will read and write data. Document each query pattern, including the attributes you’ll filter on and the expected data volume. This analysis drives your primary key design and determines which secondary indexes you actually need.

Query optimization in DynamoDB means designing your keys to support efficient range queries and avoiding scan operations. Group related items under the same partition key when possible, and structure sort keys to enable begins_with and between operations that your application requires.

Denormalization Techniques for Better Performance

NoSQL database design flips relational thinking on its head – duplicate data strategically to avoid expensive lookups. Store frequently accessed attributes directly in your items, even if they appear in multiple places. This redundancy trades storage costs for blazing-fast single-item reads.

Design your items to contain everything needed for common queries. If you often display user profiles with their latest orders, embed order summaries directly in the user record. Update these denormalized fields consistently, but accept this complexity for the performance gains you’ll achieve.

DynamoDB transforms how you think about database design by putting performance and scalability at the center of every decision. From crafting the right table structure to choosing between partition and sort keys, every element works together to create a system that can handle massive workloads without breaking a sweat. The magic really happens when you combine smart primary key strategies with well-designed secondary indexes, giving you the query flexibility you need while maintaining lightning-fast response times.

Getting your schema design right from the start saves you countless headaches down the road. Focus on understanding your access patterns first, then build your table structure around those patterns rather than trying to force traditional relational database thinking into DynamoDB. Take the time to experiment with different key strategies and index configurations in development – your future self will thank you when your application scales effortlessly and your AWS bills stay manageable.