Building AI applications just got easier with AWS serverless architecture. Gone are the days of managing servers, worrying about capacity planning, or dealing with infrastructure headaches when deploying machine learning models at scale.

This guide is perfect for developers, data scientists, and DevOps engineers who want to build robust AI applications without the operational overhead. You’ll learn how to leverage AWS AI services and serverless technologies to create intelligent applications that automatically scale based on demand.

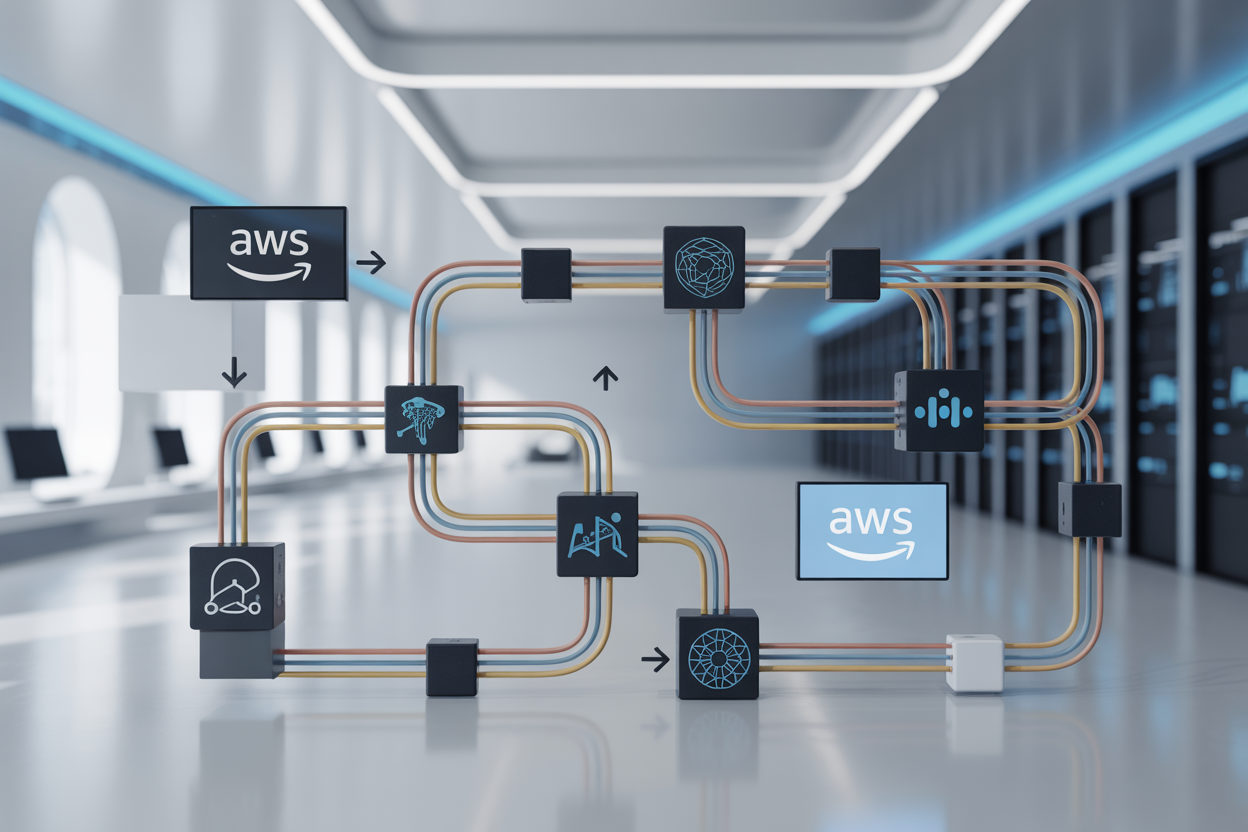

We’ll walk through the essential AWS services that power serverless AI development, including AWS Lambda and the other core building blocks you need to get started. You’ll also see how to design scalable AI data pipelines that handle everything from real-time predictions to batch processing workloads.

Finally, we’ll cover running machine learning models in production with serverless patterns, plus practical strategies for optimizing costs and maintaining security. By the end, you’ll have a clear roadmap for serverless AI deployment that can grow with your business needs.

Understanding Serverless Architecture for AI Workloads

Key benefits of serverless computing for machine learning applications

Serverless architecture changes how we approach AI development by removing server provisioning and management tasks. With AWS services such as Lambda and SageMaker, developers can spend their time building intelligent applications rather than maintaining infrastructure. This shortens time-to-market for machine learning pipeline projects, while the managed services handle much of the reliability and fault tolerance.

Cost optimization through pay-per-execution pricing model

AWS Lambda charges only for the compute time your functions actually consume, billed per millisecond of execution plus a per-request fee. Unlike traditional server deployments that run continuously, an idle serverless function costs nothing. This pricing model particularly benefits sporadic AI tasks like image recognition or natural language processing, where usage varies dramatically throughout the day. For steady, high-volume traffic, always-on capacity can work out cheaper, so it is worth comparing both.
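To make the pay-per-execution model concrete, here is a minimal cost estimate in Python. The two rates are assumptions based on published on-demand pricing for x86 functions in us-east-1 at the time of writing, and the free tier is ignored; check the current pricing page before relying on the numbers.

```python
# Assumed on-demand Lambda rates (x86, us-east-1); verify against current pricing.
PRICE_PER_GB_SECOND = 0.0000166667
PRICE_PER_MILLION_REQUESTS = 0.20


def estimate_monthly_cost(invocations, avg_duration_ms, memory_mb):
    """Estimate monthly Lambda cost in USD, ignoring the free tier."""
    # Compute is billed in GB-seconds: duration times configured memory.
    gb_seconds = invocations * (avg_duration_ms / 1000) * (memory_mb / 1024)
    compute_cost = gb_seconds * PRICE_PER_GB_SECOND
    request_cost = (invocations / 1_000_000) * PRICE_PER_MILLION_REQUESTS
    return compute_cost + request_cost
```

Under these assumed rates, one million 200 ms invocations at 512 MB come to roughly $1.87 per month.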

Automatic scaling capabilities for variable AI workloads

Serverless deployments absorb traffic spikes without manual intervention. When demand for your AI service surges, Lambda starts additional execution environments, typically within seconds, up to your account’s concurrency limits. This elasticity is valuable for applications with unpredictable workloads, such as real-time sentiment analysis or computer vision tasks that see sudden usage bursts.

Reduced infrastructure management overhead

Managed AWS services take server maintenance, security patching, and capacity planning off the development team’s plate. Engineers can concentrate on refining machine learning algorithms and improving model accuracy instead of managing underlying systems. Building AI applications on AWS becomes more streamlined when teams shed much of the traditional operations work, which allows faster iteration cycles.

Essential AWS Services for Serverless AI Development

AWS Lambda for running inference and data processing functions

AWS Lambda serves as the backbone of serverless AI development, allowing you to run inference tasks without managing servers. This event-driven compute service scales automatically with demand, which suits real-time prediction scenarios and data preprocessing workflows. You can deploy lightweight machine learning models directly within Lambda functions (a .zip deployment package is limited to 250 MB unzipped, while container images can be up to 10 GB), handling tasks from image classification to natural language processing while paying only for the compute time used.
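As a rough sketch of what an inference function can look like, the handler below scores a feature vector sent in an API Gateway proxy-style request. The linear "model" and its weights are hypothetical stand-ins; a real function would load a model artifact at module scope instead.

```python
import json

# Loaded once per execution environment, outside the handler, so warm
# invocations reuse it. The weights are a hypothetical toy model.
MODEL_WEIGHTS = [0.4, -0.2, 0.1]
MODEL_BIAS = 0.05


def predict(features):
    """Score one feature vector with the toy linear model."""
    if len(features) != len(MODEL_WEIGHTS):
        raise ValueError(f"expected {len(MODEL_WEIGHTS)} features")
    return sum(w * x for w, x in zip(MODEL_WEIGHTS, features)) + MODEL_BIAS


def handler(event, context):
    """Lambda entry point for an API Gateway proxy-style event."""
    try:
        body = json.loads(event.get("body") or "{}")
        score = predict(body["features"])
    except (KeyError, TypeError, ValueError) as exc:
        # Malformed JSON, missing fields, or wrong types become a 400.
        return {"statusCode": 400, "body": json.dumps({"error": str(exc)})}
    return {"statusCode": 200, "body": json.dumps({"score": score})}
```

Keeping the model at module scope means only cold starts pay the loading cost.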

Amazon SageMaker for model training and deployment

Amazon SageMaker supports serverless machine learning pipelines by providing fully managed training and hosting. The service lets you build, train, and deploy models at scale without infrastructure overhead. Its hosting options include Serverless Inference, which scales with traffic and down to zero when idle, alongside real-time endpoints, multi-model endpoints, and batch transform jobs. These options integrate with other AWS AI services to cover traffic spikes and model versioning.
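For SageMaker hosting without instances to size, one option is an endpoint configuration that carries a `ServerlessConfig`. The helper below is a sketch that only builds the request parameters; the function name, variant name, and default values are illustrative assumptions.

```python
def serverless_endpoint_config(config_name, model_name, memory_mb=2048, max_concurrency=5):
    """Build keyword arguments for SageMaker's create_endpoint_config call."""
    # Serverless Inference accepts memory in 1 GB steps from 1024 to 6144 MB.
    if memory_mb not in range(1024, 6145, 1024):
        raise ValueError("memory_mb must be 1024, 2048, ..., or 6144")
    return {
        "EndpointConfigName": config_name,
        "ProductionVariants": [
            {
                "VariantName": "AllTraffic",
                "ModelName": model_name,
                "ServerlessConfig": {
                    "MemorySizeInMB": memory_mb,
                    "MaxConcurrency": max_concurrency,
                },
            }
        ],
    }
```

The result can be passed as `boto3.client("sagemaker").create_endpoint_config(**kwargs)`, assuming the named model already exists.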

AWS Step Functions for orchestrating complex AI workflows

Step Functions acts as the conductor for your serverless AI development projects, coordinating multiple AWS services into sophisticated machine learning pipelines. This visual workflow service connects Lambda functions, SageMaker jobs, and data processing tasks into reliable, fault-tolerant sequences. You can build complex AI workflows that include data validation, model training, evaluation, and deployment stages while maintaining clear visibility into each step’s execution status and handling errors gracefully across your entire AWS AI infrastructure.
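A workflow of this shape can be sketched as an Amazon States Language definition built in Python. The Lambda function names and the 0.9 accuracy threshold are hypothetical, and every step is modeled as a Lambda task for brevity; a real pipeline might use the SageMaker service integration for training instead.

```python
import json


def lambda_task(function_name, next_state=None):
    """Return a Task state that invokes a Lambda function with retries."""
    state = {
        "Type": "Task",
        "Resource": "arn:aws:states:::lambda:invoke",
        "Parameters": {"FunctionName": function_name, "Payload.$": "$"},
        "OutputPath": "$.Payload",
        "Retry": [{"ErrorEquals": ["States.TaskFailed"], "MaxAttempts": 2,
                   "IntervalSeconds": 5, "BackoffRate": 2.0}],
        # Any error left after the retries routes to the shared failure state.
        "Catch": [{"ErrorEquals": ["States.ALL"], "Next": "PipelineFailed"}],
    }
    if next_state:
        state["Next"] = next_state
    else:
        state["End"] = True
    return state


# Function names below are hypothetical placeholders.
PIPELINE = {
    "StartAt": "ValidateData",
    "States": {
        "ValidateData": lambda_task("validate-data", "TrainModel"),
        "TrainModel": lambda_task("start-training", "EvaluateModel"),
        "EvaluateModel": lambda_task("evaluate-model", "IsAccurateEnough"),
        "IsAccurateEnough": {
            "Type": "Choice",
            "Choices": [{"Variable": "$.accuracy",
                         "NumericGreaterThanEquals": 0.9,
                         "Next": "DeployModel"}],
            "Default": "PipelineFailed",
        },
        "DeployModel": lambda_task("deploy-model"),
        "PipelineFailed": {"Type": "Fail", "Error": "PipelineFailed"},
    },
}

definition_json = json.dumps(PIPELINE, indent=2)
```

The `definition_json` string is what you would supply as the state machine definition; the `Retry` and `Catch` blocks are where the error handling described above lives.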

Designing Scalable AI Data Pipelines

Real-time data ingestion with Amazon Kinesis and API Gateway

Amazon Kinesis Data Streams captures high-volume data from IoT devices, web applications, and external APIs. In on-demand capacity mode it scales throughput automatically; in provisioned mode you manage the shard count yourself. API Gateway acts as the front door, handling REST and WebSocket requests and invoking downstream Lambda functions for immediate analysis. This combination lets teams build responsive systems that react to data as it arrives.
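On the producer side, writing to a stream comes down to a `put_record` call. The sketch below only builds its parameters; the `device_id` field and JSON encoding are assumptions about the payload, not something Kinesis requires.

```python
import json


def build_put_record(stream_name, reading):
    """Build keyword arguments for the Kinesis Data Streams put_record call."""
    return {
        "StreamName": stream_name,
        # Kinesis expects bytes; JSON is one common encoding choice.
        "Data": json.dumps(reading).encode("utf-8"),
        # Records sharing a partition key go to the same shard, in order.
        "PartitionKey": str(reading["device_id"]),
    }
```

A producer would then call `boto3.client("kinesis").put_record(**build_put_record("sensor-stream", reading))`, where the stream name is a placeholder.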

Automated data preprocessing using Lambda triggers

Lambda functions trigger automatically when new data lands in S3 buckets or streams through Kinesis, removing manual steps from your serverless machine learning pipeline. These event-driven functions clean, transform, and validate incoming data before feeding it to ML models. This approach reduces operational overhead while keeping data quality consistent across your pipeline.
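Here is a minimal sketch of such a preprocessing function for a Kinesis trigger. Kinesis delivers each record's payload base64-encoded under `Records[].kinesis.data`; the `device_id` and `value` fields and the validation rules are illustrative assumptions.

```python
import base64
import json


def clean_record(raw):
    """Normalize one reading; return None if it fails validation."""
    try:
        value = float(raw["value"])
    except (KeyError, TypeError, ValueError):
        return None
    if not raw.get("device_id"):
        return None
    return {"device_id": str(raw["device_id"]).strip(), "value": value}


def handler(event, context):
    """Decode, clean, and validate records from a Kinesis-triggered event."""
    cleaned, rejected = [], 0
    for record in event.get("Records", []):
        payload = base64.b64decode(record["kinesis"]["data"])
        try:
            item = clean_record(json.loads(payload))
        except ValueError:  # payload was not valid JSON
            item = None
        if item is None:
            rejected += 1
        else:
            cleaned.append(item)
    return {"cleaned": cleaned, "rejected": rejected}
```

In a real pipeline the cleaned items would be written to S3 or passed to a model rather than returned, and rejected records would typically be logged or sent to a dead-letter destination.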

Efficient data storage strategies with S3 and DynamoDB

S3 provides cost-effective storage for training datasets, model artifacts, and processed results, with lifecycle policies that move data between storage classes automatically once you configure them. DynamoDB handles real-time feature lookups and metadata management with single-digit millisecond latency. Careful partition key design and indexing optimize query performance for AI applications on AWS, and on-demand capacity mode means you pay per request instead of provisioning throughput in advance.
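A lifecycle policy of the kind described could look like the following. The prefix, rule ID, and the 30- and 90-day thresholds are assumptions chosen for illustration.

```python
def training_data_lifecycle(prefix="training-data/"):
    """Build a LifecycleConfiguration for S3's put_bucket_lifecycle_configuration."""
    return {
        "Rules": [
            {
                "ID": "tier-down-old-training-data",
                "Filter": {"Prefix": prefix},
                "Status": "Enabled",
                # Move objects to cheaper storage classes as they age.
                "Transitions": [
                    {"Days": 30, "StorageClass": "STANDARD_IA"},
                    {"Days": 90, "StorageClass": "GLACIER"},
                ],
            }
        ]
    }
```

It would be applied with `boto3.client("s3").put_bucket_lifecycle_configuration(Bucket=..., LifecycleConfiguration=training_data_lifecycle())`.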

Event-driven architecture patterns for AI applications

Event-driven patterns decouple AI components using SNS topics, SQS queues, and EventBridge rules to orchestrate complex workflows. When training jobs complete or model predictions exceed thresholds, events can automatically trigger retraining pipelines or alerting. Combined with retries and dead-letter queues, this makes applications more resilient and able to react to changing conditions with little manual oversight.
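As one illustration, an EventBridge rule can match SageMaker training job state changes and hand them to a small routing function. The action names returned here are hypothetical; what each action does is left to the rest of the system.

```python
# Event pattern for an EventBridge rule that matches finished training jobs.
TRAINING_FINISHED_PATTERN = {
    "source": ["aws.sagemaker"],
    "detail-type": ["SageMaker Training Job State Change"],
    "detail": {"TrainingJobStatus": ["Completed", "Failed"]},
}


def route_training_event(event):
    """Decide the follow-up action for a training job state-change event."""
    detail = event.get("detail", {})
    status = detail.get("TrainingJobStatus")
    job = detail.get("TrainingJobName", "unknown")
    if status == "Completed":
        return {"action": "start_evaluation", "job": job}
    if status == "Failed":
        return {"action": "send_alert", "job": job}
    return {"action": "ignore", "job": job}
```

Because the producer only emits events, new consumers such as an audit log can be added later without touching the training code.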

Implementing Machine Learning Models in Production

Model deployment strategies using SageMaker endpoints

SageMaker endpoints provide a robust foundation for serverless AI deployment, offering real-time inference without managing the underlying infrastructure. Serverless Inference endpoints scale with traffic on their own, while instance-backed real-time endpoints scale once you attach an auto scaling policy, which makes both a reasonable fit for variable workloads. You can deploy multiple model variants behind a single endpoint, enabling A/B testing and gradual rollouts for production machine learning systems.
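An A/B split between two model variants can be expressed through variant weights in the endpoint configuration. This sketch builds the `ProductionVariants` list; the variant names, instance type, and 10% default share are illustrative assumptions.

```python
def ab_test_variants(model_a, model_b, weight_b=0.1, instance_type="ml.m5.large"):
    """Build ProductionVariants that send a share of traffic to model_b."""
    if not 0 <= weight_b <= 1:
        raise ValueError("weight_b must be between 0 and 1")

    def variant(name, model, weight):
        return {
            "VariantName": name,
            "ModelName": model,
            "InstanceType": instance_type,
            "InitialInstanceCount": 1,
            # Traffic is split in proportion to the variant weights.
            "InitialVariantWeight": weight,
        }

    return [
        variant("VariantA", model_a, 1 - weight_b),
        variant("VariantB", model_b, weight_b),
    ]
```

For a gradual rollout, the weight of the new variant can be raised step by step as its metrics hold up.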

Container-based inference with AWS Fargate

AWS Fargate runs containerized AI models at scale without servers to manage. This approach works well when you need custom runtime environments, specific dependencies, or longer-running inference than Lambda allows. Fargate provisions the compute for each container, while Amazon ECS or EKS handles orchestration and service auto scaling, so your team can focus on inference logic rather than the underlying hosts.

Edge computing capabilities with AWS Lambda@Edge

Lambda@Edge brings lightweight inference closer to users by running functions at AWS locations close to the viewer, in response to CloudFront events. This can substantially reduce latency for tasks such as content personalization or request routing. Lambda@Edge enforces tighter limits than regular Lambda (viewer-triggered functions are capped at 128 MB of memory and a 5-second timeout), so it suits only very small models or rule-based logic; heavier inference such as image recognition is usually forwarded to a regional endpoint.
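To show the shape of an edge function, here is a sketch of a viewer-request handler that tags each request with a content segment. The language-based rule is a toy stand-in for a lightweight model, and the `x-content-segment` header name is a made-up example.

```python
def pick_segment(accept_language):
    """Map an Accept-Language value to a coarse content segment (toy rule)."""
    primary = accept_language.split(",")[0].split(";")[0].strip().lower()
    return primary.split("-")[0] or "default"


def handler(event, context):
    """Lambda@Edge viewer-request handler that tags the request with a segment."""
    request = event["Records"][0]["cf"]["request"]
    headers = request["headers"]
    values = headers.get("accept-language", [{"value": ""}])
    segment = pick_segment(values[0]["value"])
    # CloudFront headers are keyed by lowercase name and hold key/value lists.
    headers["x-content-segment"] = [{"key": "X-Content-Segment", "value": segment}]
    return request
```

Returning the modified request lets CloudFront continue processing it, so the origin or a cache policy can use the added header.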

Optimizing Performance and Managing Costs

Memory and timeout configuration best practices

Memory allocation directly affects both the performance and the cost of your Lambda functions. Start with 512 MB for basic inference tasks, then monitor CloudWatch metrics to find the best setting. Lambda allocates CPU in proportion to memory, so a higher setting often shortens execution time and can lower overall cost for compute-intensive AI workloads.

Configure timeout values based on model complexity: simple predictions typically fit within 30-60 seconds, while complex deep learning inference might need 5-15 minutes. Lambda’s maximum timeout is 15 minutes, so anything longer belongs on another service. Set timeouts slightly above your expected execution time to avoid premature function termination during peak loads.
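These settings can be derived and checked in code before they are applied. The sketch below builds parameters for Lambda's `update_function_configuration` call; the 25% headroom factor is an assumption, not an AWS recommendation.

```python
import math

LAMBDA_MIN_MEMORY_MB = 128
LAMBDA_MAX_MEMORY_MB = 10240
LAMBDA_MAX_TIMEOUT_S = 900  # 15 minutes


def function_config(function_name, memory_mb, expected_seconds, headroom=1.25):
    """Build kwargs for Lambda's update_function_configuration call."""
    if not LAMBDA_MIN_MEMORY_MB <= memory_mb <= LAMBDA_MAX_MEMORY_MB:
        raise ValueError("memory_mb is outside Lambda's supported range")
    # Set the timeout slightly above the expected execution time.
    timeout = max(1, math.ceil(expected_seconds * headroom))
    if timeout > LAMBDA_MAX_TIMEOUT_S:
        raise ValueError("workload exceeds Lambda's 15-minute limit")
    return {"FunctionName": function_name, "MemorySize": memory_mb, "Timeout": timeout}
```

A workload that fails the timeout check is a signal to move it to a container or batch service rather than to raise the limit.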

Cold start mitigation techniques for Lambda functions

Cold starts can significantly affect latency, especially for real-time inference. Use provisioned concurrency for critical AI endpoints that require consistent response times. It keeps a set number of execution environments initialized and ready to handle requests without initialization delays.
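How much provisioned concurrency to request is a sizing question. One simple approach, assumed here, is to multiply the peak request rate by the average duration. The helper builds parameters for Lambda's `put_provisioned_concurrency_config` call.

```python
import math


def provisioned_concurrency_request(function_name, alias, peak_rps, avg_duration_s):
    """Size provisioned concurrency from expected load and build the request."""
    if alias == "$LATEST":
        raise ValueError("provisioned concurrency needs a published version or alias")
    # Concurrent executions are roughly request rate times average duration.
    needed = max(1, math.ceil(peak_rps * avg_duration_s))
    return {
        "FunctionName": function_name,
        "Qualifier": alias,
        "ProvisionedConcurrentExecutions": needed,
    }
```

Provisioned concurrency is billed while it is enabled, so it is usually reserved for latency-sensitive endpoints.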

Optimize your deployment packages by removing unnecessary dependencies and using lightweight containers. Consider implementing model caching strategies using Amazon EFS or S3 to reduce model loading time during function initialization.

Cost monitoring and budget alerts setup

AWS Cost Explorer and CloudWatch help track spending across your serverless machine learning pipeline components. Set up billing alerts at 50%, 75%, and 90% of your monthly budget to catch unexpected cost spikes early. Monitor Lambda invocations, data transfer costs, and AI service usage separately for granular cost analysis.

Create custom CloudWatch dashboards to visualize costs per AI workload type. Use AWS Budgets to set spending thresholds for each environment (development, staging, and production) so your serverless ML projects stay within financial constraints.
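The 50%, 75%, and 90% alerts can be attached to a monthly cost budget in a single request. This sketch builds the parameters for the AWS Budgets `create_budget` call; the budget name, limit, and email address are placeholders you would supply.

```python
def budget_request(account_id, name, monthly_limit_usd, email, thresholds=(50, 75, 90)):
    """Build kwargs for the AWS Budgets create_budget call."""
    notifications = [
        {
            "Notification": {
                "NotificationType": "ACTUAL",
                "ComparisonOperator": "GREATER_THAN",
                "Threshold": float(pct),
                "ThresholdType": "PERCENTAGE",
            },
            "Subscribers": [{"SubscriptionType": "EMAIL", "Address": email}],
        }
        for pct in thresholds
    ]
    return {
        "AccountId": account_id,
        "Budget": {
            "BudgetName": name,
            "BudgetLimit": {"Amount": str(monthly_limit_usd), "Unit": "USD"},
            "TimeUnit": "MONTHLY",
            "BudgetType": "COST",
        },
        "NotificationsWithSubscribers": notifications,
    }
```

Calling it once per environment with different names and limits gives development, staging, and production their own alerts.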

Resource allocation strategies for different AI workload types

Batch processing workloads benefit from higher memory configurations (1GB-3GB) to handle large datasets efficiently. Real-time inference requires balanced memory settings (512MB-1GB) prioritizing response time over raw processing power. Training workloads should leverage AWS Batch or SageMaker rather than Lambda for better resource management.

Image processing tasks need 1-2GB memory for optimal performance, while text analysis can operate effectively with 256-512MB. Monitor your specific use cases and adjust allocations based on actual performance metrics rather than theoretical requirements.
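Adjusting allocations from measured performance can be as simple as comparing cost per invocation across the memory sizes you have tested. In this sketch the per-GB-second rate is an assumed x86 on-demand price, and the measurements are numbers you would collect yourself.

```python
PRICE_PER_GB_SECOND = 0.0000166667  # assumed x86 rate; verify against current pricing


def cheapest_memory(measurements, max_latency_ms):
    """Pick the lowest-cost memory size whose measured latency fits the budget.

    measurements maps memory in MB to the average duration in ms seen at that size.
    """
    candidates = {
        mb: (mb / 1024) * (ms / 1000) * PRICE_PER_GB_SECOND
        for mb, ms in measurements.items()
        if ms <= max_latency_ms
    }
    if not candidates:
        return None  # no tested size meets the latency target
    return min(candidates, key=candidates.get)
```

Because duration often drops as memory rises, the cheapest setting is not always the smallest one.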

Security and Compliance Considerations

IAM roles and permissions for AI service access

Securing your AWS serverless AI applications starts with proper Identity and Access Management configuration. Create specific IAM roles for each AI service component, granting minimum required permissions to Lambda functions accessing SageMaker endpoints, Bedrock models, or Comprehend APIs. Use resource-based policies to control cross-service access and implement least-privilege principles throughout your serverless AI development workflow.
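A least-privilege policy for a Lambda function that only needs to call one SageMaker endpoint could be generated like this. The function name and statement ID are illustrative; the region, account ID, and endpoint name are values you would fill in.

```python
def invoke_only_policy(region, account_id, endpoint_name):
    """Build an IAM policy allowing inference calls on a single SageMaker endpoint."""
    endpoint_arn = f"arn:aws:sagemaker:{region}:{account_id}:endpoint/{endpoint_name}"
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeOneEndpoint",
                "Effect": "Allow",
                # One action on one resource, with no wildcards.
                "Action": "sagemaker:InvokeEndpoint",
                "Resource": endpoint_arn,
            }
        ],
    }
```

Serialized with `json.dumps`, the document can be attached to the function's execution role as an inline or managed policy.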

Data encryption in transit and at rest

AWS service API endpoints use HTTPS, so traffic between serverless AI services is encrypted in transit with TLS 1.2 or later, while storage services like S3 and DynamoDB encrypt data at rest by default. Configure customer-managed KMS keys for more control over your machine learning datasets and model artifacts. Lambda environment variables are also encrypted at rest by default; use a customer-managed key for those holding sensitive configuration data so your serverless ML pipeline maintains end-to-end data protection.
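For model artifacts in S3, a customer-managed key is selected per upload through two request parameters. The helper below is a small sketch that builds the `put_object` arguments; the function name is hypothetical and the key ID is whatever KMS key you have created.

```python
def encrypted_upload_request(bucket, key, data, kms_key_id):
    """Build kwargs for S3 put_object using SSE-KMS with a customer-managed key."""
    return {
        "Bucket": bucket,
        "Key": key,
        "Body": data,
        # Ask S3 to encrypt the object with the given KMS key.
        "ServerSideEncryption": "aws:kms",
        "SSEKMSKeyId": kms_key_id,
    }
```

Setting the same key as the bucket's default encryption avoids having to pass these parameters on every upload.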

VPC configuration for secure model deployment

Deploy your serverless AI infrastructure within Virtual Private Cloud environments to isolate network traffic and control access patterns. Configure VPC endpoints for AWS AI services to keep data traffic within your private network boundary. Set up security groups and NACLs to restrict communication between Lambda functions and AI service endpoints, creating secure channels for your AWS serverless ML workloads without exposing sensitive model inference traffic to public internet routes.
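As an example of keeping inference traffic private, an interface VPC endpoint for the SageMaker runtime could be requested with parameters like these. The function name is hypothetical, and the VPC, subnet, and security group IDs are values from your own network.

```python
def sagemaker_runtime_endpoint_request(region, vpc_id, subnet_ids, security_group_ids):
    """Build kwargs for EC2's create_vpc_endpoint call (interface endpoint)."""
    return {
        "VpcEndpointType": "Interface",
        "VpcId": vpc_id,
        "ServiceName": f"com.amazonaws.{region}.sagemaker.runtime",
        "SubnetIds": list(subnet_ids),
        "SecurityGroupIds": list(security_group_ids),
        # Resolve the service's regular DNS name to the private endpoint.
        "PrivateDnsEnabled": True,
    }
```

With private DNS enabled, Lambda functions in the VPC can call the endpoint through the normal SDK client without code changes.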

Serverless architecture transforms how we build and deploy AI applications on AWS, offering automatic scaling, reduced operational overhead, and cost-effective solutions. The combination of services like Lambda, SageMaker, and Step Functions creates powerful data pipelines that can handle everything from real-time inference to batch processing. When you design your AI workloads with serverless principles, you get the flexibility to scale up during peak demand and scale down when things are quiet, paying only for what you actually use.

Success with serverless AI comes down to smart design choices and ongoing optimization. Focus on choosing the right services for each part of your pipeline, implement proper security measures from day one, and keep monitoring your performance metrics and costs. Start small with a proof of concept, learn from real usage patterns, and gradually expand your serverless AI capabilities. The serverless approach removes much of the infrastructure complexity, letting you concentrate on what really matters – building AI solutions that deliver value to your users.