When building distributed systems, you’ll face a fundamental trade-off that can make or break your application’s performance. The CAP Theorem forces you to choose between keeping data perfectly synchronized across all nodes (consistency) and ensuring your system stays online even when things go wrong (availability).

This guide is for software engineers, system architects, and developers who need to make smart decisions about distributed system design. Whether you’re scaling a startup’s database or architecting enterprise solutions, understanding these trade-offs will help you build more reliable systems.

We’ll explore the core principles of consistency and availability in distributed systems, showing you how each affects user experience and system behavior. You’ll also learn practical strategies for making the right choice between consistency and availability based on your specific use case, plus real-world examples of how companies like Amazon, Google, and Netflix have navigated these decisions.

Fundamentals of the CAP Theorem

Core principles that govern distributed system design

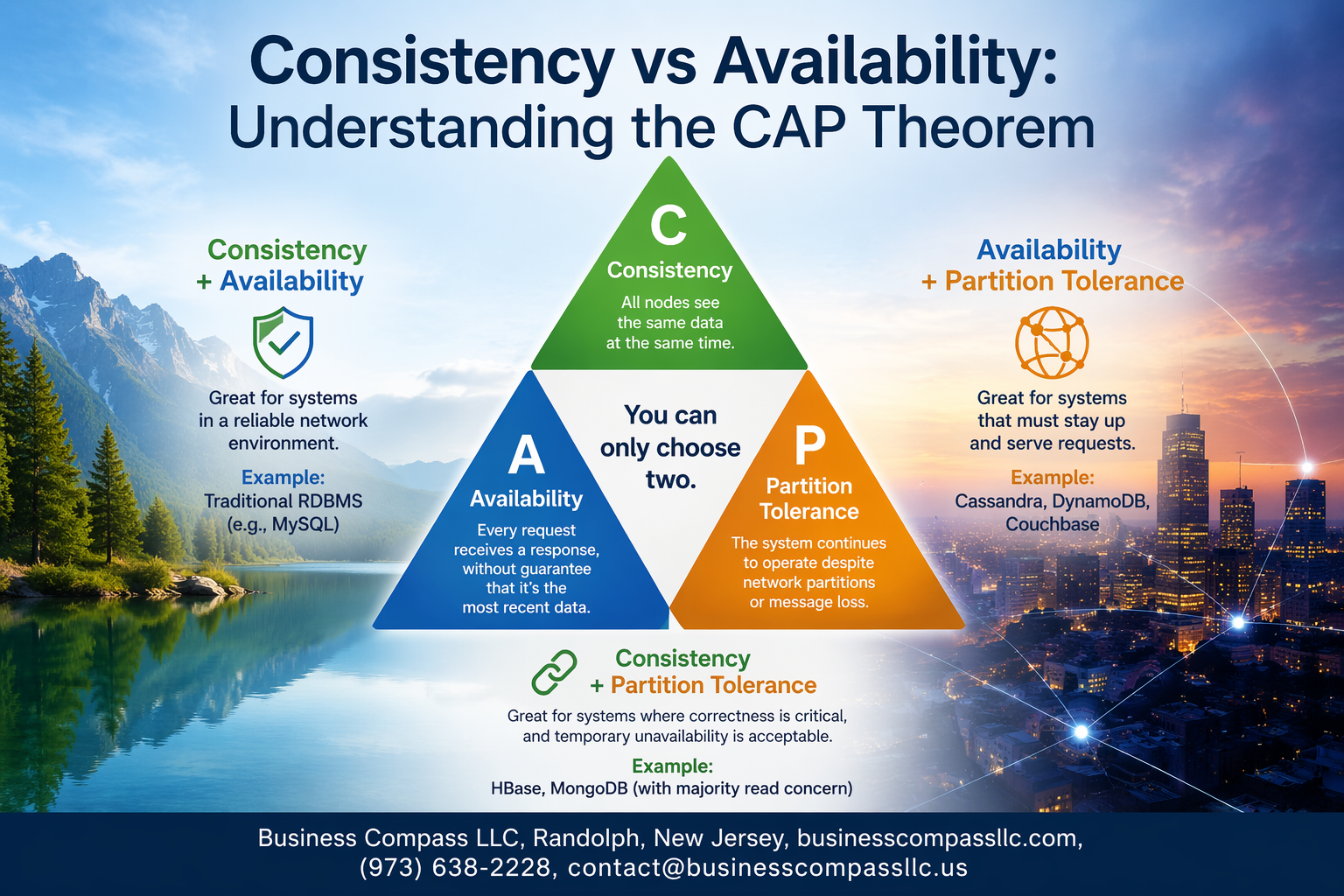

The CAP theorem establishes that distributed systems must navigate three fundamental properties: Consistency (all nodes see identical data simultaneously), Availability (system remains operational despite failures), and Partition tolerance (system continues functioning when network splits occur). This creates an impossible trinity where achieving all three simultaneously defies the laws of distributed computing.

Historical context and Eric Brewer’s original conjecture

Eric Brewer introduced this concept in 2000 during his keynote at the ACM Principles of Distributed Computing symposium. His conjecture challenged the prevailing assumption that distributed systems could maintain perfect consistency while ensuring continuous availability across network partitions.

Mathematical proof and theoretical foundations

Seth Gilbert and Nancy Lynch formalized Brewer’s conjecture in 2002, providing rigorous mathematical proof. Their work demonstrated that when network partitions occur, systems face a binary choice: either maintain consistency by rejecting requests or preserve availability by accepting potentially inconsistent operations.

Why you can only choose two out of three properties

Network partitions represent unavoidable realities in distributed environments due to hardware failures, network congestion, or geographic separation. When partitions happen, systems cannot simultaneously guarantee that all nodes reflect identical data while remaining responsive to user requests. This forces architects to prioritize either data correctness or system responsiveness.

Breaking Down Consistency in Distributed Systems

Strong Consistency Guarantees and ACID Properties

Strong consistency means every node in a distributed system shows the same data at the same time. ACID properties (Atomicity, Consistency, Isolation, Durability) ensure database transactions complete reliably. When you update a bank account balance, strong consistency guarantees that all servers immediately reflect the new amount, preventing discrepancies.

Eventual Consistency Models and Their Trade-offs

Eventual consistency allows temporary data differences across nodes, promising synchronization will happen later. Social media platforms often use this approach – your friend’s post might appear on your feed seconds after they publish it. This model offers better performance and availability but creates scenarios where users see outdated information temporarily.

Real-World Scenarios Where Consistency Matters Most

Financial systems prioritize strong consistency because money transfers cannot tolerate inconsistent account balances. E-commerce inventory management also requires immediate consistency to prevent overselling products. Healthcare records demand strict consistency since outdated patient information could impact medical decisions. Banking transactions, stock trading platforms, and payment processors all sacrifice some performance to maintain data accuracy across their distributed networks.

Understanding Availability in Modern Applications

High availability requirements for business continuity

Modern businesses can’t afford to have their systems go offline, even for a few minutes. E-commerce platforms, banking applications, and social media services need to stay operational around the clock. Users expect instant responses, and any downtime directly impacts customer trust and revenue streams.

Measuring uptime and system reliability metrics

Most organizations target 99.9% availability, which allows roughly 8.7 hours of downtime annually. However, many cloud providers now offer 99.99% uptime guarantees. System reliability gets measured through metrics like Mean Time To Recovery (MTTR) and Mean Time Between Failures (MTBF). These numbers help teams understand performance patterns and identify weak points in their infrastructure.

Cost implications of downtime for your organization

Downtime costs vary dramatically across industries. Amazon loses approximately $220,000 per minute when their systems fail, while smaller businesses might face hundreds of dollars in lost sales. Beyond immediate revenue loss, companies deal with damaged reputation, customer churn, and potential regulatory penalties. The financial impact often reaches 10-20 times the immediate lost revenue.

Partition Tolerance as the Non-Negotiable Factor

Network failures and split-brain scenarios

Network partitions happen when communication links between nodes fail, creating isolated groups that can’t talk to each other. Split-brain scenarios emerge when multiple groups think they’re the authoritative cluster, leading to conflicting data updates. These situations are inevitable in distributed systems, especially across geographic regions where network reliability varies significantly.

Why partition tolerance is mandatory in distributed systems

Real-world networks are unreliable by nature – cables break, routers fail, and data centers lose connectivity. Any distributed system that can’t handle these partitions will simply stop working when networks split. The CAP theorem forces you to choose between consistency and availability during partitions, but partition tolerance isn’t optional. Without it, your system becomes a single point of failure dressed up as a distributed architecture.

Strategies for handling network partitions effectively

- Consensus algorithms like Raft or PBFT help nodes agree on system state even during partitions

- Circuit breakers detect partition scenarios and gracefully degrade service functionality

- Read/write separation allows read-only operations to continue during network splits

- Conflict resolution strategies merge divergent data when partitions heal

- Health checks and timeouts quickly identify partition scenarios and trigger appropriate responses

Practical Decision-Making Between Consistency and Availability

CP Systems That Prioritize Consistency Over Availability

CP systems guarantee data consistency even when network partitions occur, accepting temporary unavailability as a trade-off. Traditional relational databases like PostgreSQL and MySQL exemplify this approach, ensuring all nodes reflect identical data states before accepting new transactions. Banking systems and financial applications rely heavily on CP architectures because data accuracy supersedes service availability.

AP Systems That Favor Availability Over Consistency

AP systems maintain service availability during network failures while accepting eventual consistency. NoSQL databases like Cassandra and DynamoDB represent this paradigm, allowing writes to continue across available nodes even when some become unreachable. Social media platforms and content delivery networks embrace this model since user engagement matters more than immediate data synchronization.

Industry Examples and Use Case Analysis

Financial Services choose CP systems for:

- Payment processing

- Account management

- Regulatory compliance

- Fraud detection

Web-Scale Applications prefer AP systems for:

- Social media feeds

- E-commerce catalogs

- User-generated content

- Analytics data collection

Hybrid Approaches emerge in:

- Multi-tier architectures

- Microservices ecosystems

- Regional data centers

Framework for Choosing the Right Approach for Your Needs

Evaluate Data Criticality:

- Mission-critical data requires CP

- User experience data suits AP

- Operational data allows flexibility

Assess Business Impact:

- Revenue loss from inconsistency vs. downtime

- Regulatory requirements

- User expectations and tolerance

- Recovery time objectives

Technical Considerations:

- Network reliability patterns

- Geographic distribution requirements

- Scalability projections

- Team expertise and operational capacity

Real-World Implementation Strategies

Design patterns for CP-focused architectures

Strong consistency architectures rely on synchronous replication and consensus protocols like Raft or PBFT. Master-slave configurations ensure single points of truth, while two-phase commit protocols guarantee atomic transactions across distributed nodes. These patterns sacrifice availability during network partitions but maintain data integrity.

Techniques for building highly available AP systems

Event sourcing and eventual consistency models enable AP systems to remain operational during failures. Conflict-free replicated data types (CRDTs) automatically resolve concurrent updates without coordination. Circuit breakers and graceful degradation patterns ensure services stay responsive even when dependencies fail.

Hybrid approaches and consistency levels

Tunable consistency allows applications to adjust guarantees per operation. Read and write quorum configurations balance consistency with availability based on business requirements. Multi-version concurrency control provides snapshot isolation while maintaining system responsiveness.

Monitoring and alerting for CAP-related issues

Partition detection mechanisms track network connectivity between nodes and data centers. Consistency lag metrics measure replication delays across replicas. Availability dashboards monitor service uptime and response times, while conflict resolution alerts identify data inconsistencies requiring manual intervention.

The CAP Theorem forces every distributed system architect to make tough choices. You simply can’t have perfect consistency and perfect availability when network partitions happen – and they always do. The key is understanding what your application actually needs and making informed trade-offs based on real business requirements, not theoretical ideals.

When building your next distributed system, start by asking the right questions. Can your users tolerate slightly stale data for better uptime? Or do you need rock-solid consistency even if it means occasional service interruptions? Study how companies like Amazon chose availability for shopping carts while banks prioritize consistency for transactions. Your decision will shape your entire architecture, so take the time to get it right from the beginning.