Building real-time gaming platforms that can handle thousands of concurrent players requires more than just powerful servers—it demands smart architectural decisions from day one. Game developers, platform engineers, and technical leads working on multiplayer titles face unique challenges when designing systems that must process millions of player actions per second without lag or downtime.

This guide breaks down the essential components of massive concurrency gaming systems, from the foundational infrastructure to advanced optimization techniques. You’ll learn how successful gaming companies architect their platforms to scale seamlessly as player bases grow.

We’ll dive deep into core infrastructure components that form the backbone of high-performance gaming systems, including load balancers, message queues, and database clusters specifically configured for real-time game development. Then we’ll explore horizontal scaling strategies that let you add capacity on demand, covering auto-scaling policies, containerization approaches, and distributed server architectures that keep games running smoothly during peak traffic.

Finally, we’ll tackle state management and synchronization patterns that ensure all players see consistent game worlds, covering conflict resolution algorithms, event sourcing techniques, and data consistency models that work at massive scale.

Understanding Real-Time Gaming Architecture Requirements

Latency Constraints and Performance Benchmarks

Competitive real-time games generally target response times under 50ms, and fast action titles aim tighter still: a frame lasts about 16.7ms at 60fps and 33ms at 30fps, so any added delay is felt in whole frames. Game servers for these titles typically run at tick rates of 64-128Hz while processing thousands of concurrent player actions. Network optimization becomes critical at high concurrency, where packet loss above roughly 1% noticeably degrades the player experience. The infrastructure also needs predictable performance across geographic regions, so gameplay stays consistent regardless of player location or server load fluctuations.
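
To make the numbers above concrete, here is a small arithmetic sketch. The function names are illustrative, not taken from any engine; it only converts a tick rate into a per-tick time budget and a latency into rendered frames.

```python
def tick_interval_ms(tick_rate_hz: float) -> float:
    """Time available to simulate one server tick, in milliseconds."""
    if tick_rate_hz <= 0:
        raise ValueError("tick rate must be positive")
    return 1000.0 / tick_rate_hz


def frames_of_delay(latency_ms: float, fps: float = 60.0) -> float:
    """How many rendered frames elapse while a message is in flight."""
    frame_time_ms = 1000.0 / fps
    return latency_ms / frame_time_ms


if __name__ == "__main__":
    for rate in (64, 128):
        print(f"{rate}Hz tick -> {tick_interval_ms(rate)}ms budget per tick")
    print(f"50ms latency at 60fps -> {frames_of_delay(50):.1f} frames")
```

A 128Hz server has under 8ms to simulate each tick, which is why per-tick work has to stay bounded as player counts grow.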

Scalability Demands for Multiplayer Environments

Multiplayer game architecture faces steep scaling challenges as player counts grow from hundreds to millions of concurrent users. Game server infrastructure must dynamically allocate resources based on real-time demand, supporting everything from intimate 4-player matches to massive 1000+ player battle royales. Horizontal scaling becomes essential once vertical scaling hits the limits of a single machine. Auto-scaling systems need load balancing that accounts for game session affinity, player geographic distribution, and regional peak hours to maintain performance across these very different scenarios.

Data Consistency Across Distributed Systems

Game state synchronization presents unique challenges in distributed environments where multiple server nodes must maintain identical world states without introducing conflicts or desynchronization. Real-time game development requires sophisticated conflict resolution algorithms that prioritize authoritative server decisions while minimizing rollbacks and prediction errors. Eventual consistency models work well for non-critical data like player statistics, but critical gameplay elements demand strong consistency guarantees. Gaming platform design must carefully balance consistency requirements with performance needs, often implementing hybrid approaches that treat different data types with varying consistency levels.

Fault Tolerance and System Reliability

Real-time gaming architecture cannot afford single points of failure when millions of players depend on continuous service availability. Redundant game server infrastructure with automatic failover mechanisms ensures seamless player experience even during hardware failures or network partitions. Circuit breakers and graceful degradation strategies prevent cascading failures from affecting healthy game instances. Massive concurrency gaming platforms implement sophisticated monitoring that detects anomalies before they impact players, with automated recovery systems that can migrate game sessions between servers without interrupting active matches.
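
The circuit breaker idea can be sketched in a few lines. This is a minimal, single-threaded illustration under assumed defaults (three failures, a 30 second cool-down); the class and parameter names are hypothetical, and a production breaker would also need thread safety and metrics.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the breaker is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_after_s=30.0, clock=time.monotonic):
        self._failure_threshold = failure_threshold
        self._reset_after_s = reset_after_s
        self._clock = clock        # injectable so tests can control time
        self._failures = 0
        self._opened_at = None     # None means the breaker is closed

    def call(self, fn, *args, **kwargs):
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self._reset_after_s:
                # Fail fast instead of piling load onto a sick dependency.
                raise CircuitOpenError("dependency marked unhealthy")
            # Cool-down elapsed: fall through and allow one trial call.
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self._failures += 1
            if self._failures >= self._failure_threshold:
                self._opened_at = self._clock()
            raise
        # A success closes the breaker and clears the failure count.
        self._failures = 0
        self._opened_at = None
        return result
```

Wrapping calls to a shared dependency (a profile service, say) in `breaker.call(...)` lets a game server degrade that one feature quickly rather than stalling active matches on timeouts.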

Core Infrastructure Components for Massive Concurrency

Load Balancing Strategies for Game Traffic

Game traffic patterns differ dramatically from traditional web applications, requiring load balancing approaches that account for session stickiness and real-time latency requirements. Geographic load balancing becomes critical when distributing players across multiple regions, ensuring good connection speeds while maintaining game state consistency. Layer 4 load balancers excel at handling UDP traffic for fast-paced action games, while Layer 7 balancers can route on request content, such as game mode, for HTTP and WebSocket services like lobbies and matchmaking. Consistent hashing limits how many sessions get remapped when servers are added or removed, so most players keep their server assignment while the infrastructure adapts to changing demand.
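
A minimal consistent hash ring shows why scaling events disturb so few sessions. This is a sketch, assuming string server names and session IDs and 100 virtual nodes per server; `HashRing` and its methods are illustrative names, not a specific load balancer's API.

```python
import bisect
import hashlib


class HashRing:
    def __init__(self, servers, vnodes=100):
        self._vnodes = vnodes
        self._ring = []  # sorted list of (hash, server) points on the ring
        for server in servers:
            self.add(server)

    @staticmethod
    def _hash(key: str) -> int:
        digest = hashlib.sha256(key.encode("utf-8")).digest()
        return int.from_bytes(digest[:8], "big")

    def add(self, server: str) -> None:
        # Several virtual nodes per server even out the key distribution.
        for i in range(self._vnodes):
            bisect.insort(self._ring, (self._hash(f"{server}#{i}"), server))

    def remove(self, server: str) -> None:
        self._ring = [(h, s) for h, s in self._ring if s != server]

    def lookup(self, key: str) -> str:
        if not self._ring:
            raise LookupError("no servers in ring")
        # First ring point at or after the key's hash, wrapping around.
        idx = bisect.bisect_left(self._ring, (self._hash(key),))
        return self._ring[idx % len(self._ring)][1]
```

When a server is added, the only sessions that change owner are the ones the new server takes over; everything else stays put, which is the property that keeps scaling events from reshuffling the whole player base.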

Database Optimization for High-Volume Transactions

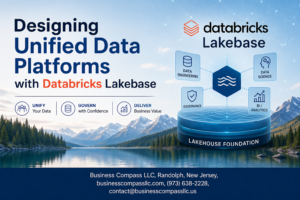

Massive concurrency gaming demands database architectures that can handle thousands of simultaneous read/write operations without compromising game performance or data integrity. Partitioning player data by geographic regions or game servers reduces contention and improves query response times significantly. Write-heavy workloads benefit from specialized NoSQL solutions like Redis for leaderboards and MongoDB for flexible player profiles, while traditional RDBMS systems handle critical transactional data like purchases and achievements. Connection pooling becomes essential when managing hundreds of concurrent database connections, preventing resource exhaustion during peak gaming hours while maintaining optimal throughput.

Message Queue Systems for Real-Time Communication

Real-time gaming platforms rely heavily on message queue systems to handle the massive volume of player actions, game events, and system notifications flowing through the infrastructure. Apache Kafka excels at high-throughput, durable game event streams, enabling features like replay systems and analytics. Its end-to-end latency is typically measured in milliseconds rather than microseconds, so the most latency-critical gameplay messages usually travel over direct client-server connections instead of a broker. Redis Pub/Sub provides lightweight, fire-and-forget messaging for lobby systems and chat functionality, while RabbitMQ offers acknowledged delivery for important transactions like item purchases or level completions. Message prioritization ensures that critical game state updates take precedence over less time-sensitive communications, maintaining responsive gameplay even under heavy system load.
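
Message prioritization can be illustrated with an in-process priority queue. This is a sketch only: the three priority levels and the `PriorityOutbox` name are assumptions for the example, and a real system would apply the same idea inside its broker or network send loop.

```python
import heapq
import itertools

# Lower number = more urgent. These levels are illustrative.
STATE_UPDATE, CHAT, TELEMETRY = 0, 1, 2


class PriorityOutbox:
    def __init__(self):
        self._heap = []
        # The counter breaks ties so equal-priority messages stay FIFO.
        self._seq = itertools.count()

    def push(self, priority: int, message: str) -> None:
        heapq.heappush(self._heap, (priority, next(self._seq), message))

    def pop(self) -> str:
        _priority, _seq, message = heapq.heappop(self._heap)
        return message

    def __len__(self) -> int:
        return len(self._heap)
```

Under load, a sender that drains this outbox with a per-tick byte budget will naturally delay chat and telemetry before it ever delays state updates.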

Network Architecture Design Patterns

CDN Integration for Global Player Base

Content Delivery Networks improve real-time gaming architecture by positioning game assets and lightweight game states closer to players worldwide. Modern CDNs like Cloudflare and AWS CloudFront cache static resources while providing intelligent routing for dynamic game traffic. Edge locations can cut initial connection latency substantially (reductions of 60-80% are possible for players far from the origin, though results depend on geography and network path), enabling faster matchmaking and asset loading. Well-tuned CDN configurations can absorb burst traffic during game launches or events, preventing download and patch traffic from becoming a bottleneck for your multiplayer game architecture.

Regional Server Distribution Strategies

Geographic server distribution creates the backbone of massive concurrency gaming systems. Deploying game servers across multiple regions – North America, Europe, Asia-Pacific, and emerging markets – ensures players connect to nearby infrastructure. Regional clustering involves primary and secondary server pools, where the primary pool handles active gameplay while the secondary pool manages overflow and failover scenarios. Cross-region synchronization maintains global leaderboards and friend lists without impacting local game performance. A reasonable target for this approach is ping below 50ms for around 90% of your player base.

Protocol Selection for Low-Latency Communication

UDP dominates real-time game traffic for its speed, though TCP still serves specific use cases. UDP has no connection handshake, retransmission, or head-of-line blocking, so position updates and actions arrive with minimal delay, and a lost packet is simply superseded by the next one. Critical events like player deaths or score updates need reliable delivery, which comes either from TCP or from a lightweight acknowledgment layer built on top of UDP. WebSockets give browser-based games a persistent, bidirectional channel, although they run over TCP and inherit its behavior under packet loss, while custom binary protocols reduce bandwidth for mobile gaming. Protocol selection directly affects how many concurrent players your game servers can handle without lag spikes.
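
As an illustration of a compact binary protocol, the sketch below packs a position update into a fixed 20-byte payload with Python's standard `struct` module. The field layout (player ID, tick, three coordinates) is an assumption made for the example, not a standard wire format.

```python
import struct

# Little-endian, no padding: u32 player_id, u32 tick, then x, y, z as f32.
POSITION_FORMAT = struct.Struct("<IIfff")


def encode_position(player_id: int, tick: int, x: float, y: float, z: float) -> bytes:
    """Serialize one position update into a fixed-size datagram payload."""
    return POSITION_FORMAT.pack(player_id, tick, x, y, z)


def decode_position(payload: bytes) -> tuple:
    """Inverse of encode_position; raises struct.error on a wrong-sized payload."""
    return POSITION_FORMAT.unpack(payload)


if __name__ == "__main__":
    packet = encode_position(7, 1024, 1.5, -2.25, 100.0)
    print(len(packet), decode_position(packet))
```

The same update as JSON text would be several times larger, which matters when it is sent dozens of times per second per player. Including the tick number lets the receiver discard updates that arrive out of order.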

Connection Pooling and Resource Management

Efficient connection management prevents resource exhaustion in high-performance gaming systems. Connection pooling maintains persistent links between clients and servers, reusing established connections rather than creating new ones for each interaction. Load balancers distribute incoming connections across available server instances, preventing any single server from becoming overwhelmed. Memory pools pre-allocate resources for player sessions, avoiding garbage collection pauses during intense gameplay moments. Database connection pooling ensures state synchronization doesn’t bottleneck under massive concurrency gaming loads, maintaining smooth gameplay even during peak hours.
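
The pre-allocation idea behind memory pools can be sketched as follows. `SessionBufferPool` is a hypothetical name, and the example is single-threaded; the point is only that buffers are created once up front and then recycled, so steady-state gameplay does not allocate.

```python
class SessionBufferPool:
    """Fixed set of reusable byte buffers, allocated once at startup."""

    def __init__(self, size: int, buffer_bytes: int):
        self._free = [bytearray(buffer_bytes) for _ in range(size)]

    def acquire(self) -> bytearray:
        if not self._free:
            # Failing loudly is one policy; blocking or growing are others.
            raise RuntimeError("pool exhausted")
        return self._free.pop()

    def release(self, buf: bytearray) -> None:
        # Clear stale data so one session never sees another's bytes.
        buf[:] = bytes(len(buf))
        self._free.append(buf)

    @property
    def available(self) -> int:
        return len(self._free)
```

A pool that raises when empty also acts as a natural admission limit: the server cannot accept more sessions than it has pre-sized resources for.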

Scaling Horizontal Game Server Infrastructure

Containerization and Orchestration Solutions

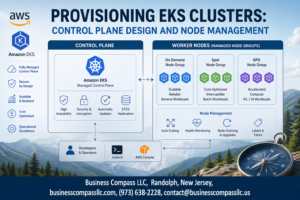

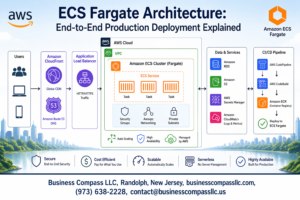

Docker containers simplify game server infrastructure by packaging game instances with all dependencies, ensuring consistent deployment across environments. Kubernetes orchestrates these containers at scale, managing thousands of game servers, restarting failed instances, and supporting rolling deployments and resource isolation. Service mesh technologies like Istio provide advanced traffic management and security between containerized game services. Container registries store versioned game server images, enabling rapid deployment and rollback when issues arise during peak gaming periods.

Auto-Scaling Based on Player Demand

Dynamic scaling responds to player traffic patterns by automatically spinning up new game server instances during peak hours and shutting them down during low-activity periods. Horizontal pod autoscalers monitor CPU, memory, and custom metrics like player queue lengths to trigger scaling decisions. Predictive scaling leverages machine learning algorithms to anticipate traffic spikes based on historical patterns, game events, and marketing campaigns. Reactive scaling handles unexpected viral moments or tournament events that create sudden player influxes. Custom metrics like matchmaking wait times and server capacity utilization provide more accurate scaling triggers than traditional infrastructure metrics alone.
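
The core of a metric-driven scaling decision fits in one function. The sketch below follows the formula documented for the Kubernetes Horizontal Pod Autoscaler, desired = ceil(current replicas × current metric / target metric), with a tolerance band and min/max clamp. The function name and the default bounds here are assumptions for illustration.

```python
import math


def desired_replicas(
    current_replicas: int,
    current_metric: float,
    target_metric: float,
    min_replicas: int = 1,
    max_replicas: int = 100,
    tolerance: float = 0.1,
) -> int:
    """Replica count needed to bring the per-replica metric back to target."""
    ratio = current_metric / target_metric
    # Ignore small deviations to avoid flapping between sizes.
    if abs(ratio - 1.0) <= tolerance:
        return current_replicas
    desired = math.ceil(current_replicas * ratio)
    return max(min_replicas, min(max_replicas, desired))


if __name__ == "__main__":
    # 10 servers averaging 90 queued players each, target of 60 per server.
    print(desired_replicas(10, 90, 60))
```

The metric can be anything that tracks player demand, such as queue length or matchmaking wait time. For game servers, scale-down additionally has to wait for running matches to finish, which this sketch does not model.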

Resource Allocation Optimization

Quality of Service (QoS) classes prioritize critical game processes over background tasks, ensuring smooth gameplay during resource contention. CPU affinity binds game servers to specific processor cores, reducing context switching overhead and improving performance consistency. Memory management strategies include pre-allocated pools for game objects and careful garbage collection tuning to minimize latency spikes. GPU resources require specialized allocation for physics calculations and AI processing in demanding multiplayer games. Resource quotas prevent individual game instances from consuming excessive resources and impacting neighboring servers on shared infrastructure.

Multi-Cloud Deployment Strategies

Geographic distribution across multiple cloud providers reduces latency for global player bases while providing disaster recovery capabilities. Hybrid deployments combine on-premises hardware for predictable workloads with cloud resources for burst capacity during peak periods. Edge computing brings game servers closer to players through content delivery networks and edge data centers. Cross-cloud networking solutions like Consul Connect enable seamless communication between game services deployed across different cloud platforms. Vendor lock-in mitigation strategies include containerized deployments and infrastructure-as-code templates that work across AWS, Google Cloud, and Azure environments.

State Management and Data Synchronization

Player State Consistency Across Sessions

Game state synchronization becomes critical when millions of players interact simultaneously across distributed servers. The architecture needs conflict resolution mechanisms that handle concurrent player actions without data corruption. Under eventual consistency, vector clocks let replicas detect which updates are causally ordered and which are truly concurrent, so conflicting writes can be reconciled rather than silently overwritten, without resorting to a latency-heavy global lock. Distributed stores like Redis Cluster scale horizontally, spreading player inventories, achievements, and progress across multiple nodes. Session affinity strategies route players to consistent server instances, preventing state fragmentation during gameplay transitions.
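
A vector clock is just a per-node counter map plus a comparison rule. The sketch below represents clocks as plain dictionaries keyed by node name; the function names are illustrative, and missing entries are treated as zero.

```python
def increment(clock: dict, node: str) -> dict:
    """Return a new clock with this node's counter advanced by one."""
    updated = dict(clock)
    updated[node] = updated.get(node, 0) + 1
    return updated


def merge(a: dict, b: dict) -> dict:
    """Element-wise maximum, used when a node learns of another's state."""
    return {k: max(a.get(k, 0), b.get(k, 0)) for k in a.keys() | b.keys()}


def compare(a: dict, b: dict) -> str:
    """Classify a relative to b: 'equal', 'before', 'after' or 'concurrent'."""
    keys = a.keys() | b.keys()
    a_le_b = all(a.get(k, 0) <= b.get(k, 0) for k in keys)
    b_le_a = all(b.get(k, 0) <= a.get(k, 0) for k in keys)
    if a_le_b and b_le_a:
        return "equal"
    if a_le_b:
        return "before"
    if b_le_a:
        return "after"
    return "concurrent"
```

Only the "concurrent" case needs application-level conflict resolution; "before" and "after" mean one write already saw the other and can safely supersede it.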

Game World Persistence Mechanisms

Massive concurrency gaming platforms require robust persistence layers that handle continuous world state updates without performance degradation. Event sourcing patterns capture every game action as an immutable event, so the world can be reconstructed at any point in time by replaying the log, which also enables temporal debugging. Database sharding distributes game world data across multiple storage nodes so that storage capacity grows with the player population. Memory-mapped files combined with write-ahead logging give fast access to hot state while still allowing recovery after a crash. Background persistence services continuously snapshot critical world state to limit data loss during server failures.
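
Event sourcing reduces to two pieces: an append-only list of immutable events and a pure function that folds them into state. The sketch below uses a toy gold balance as the state; the event kinds and field names are invented for the example.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass(frozen=True)
class Event:
    seq: int       # position in the log
    kind: str      # "gold_earned" or "gold_spent" in this toy example
    player: str
    amount: int


def apply(state: dict, event: Event) -> dict:
    """Pure transition: returns a new state, never mutates the old one."""
    new_state = dict(state)
    balance = new_state.get(event.player, 0)
    if event.kind == "gold_earned":
        new_state[event.player] = balance + event.amount
    elif event.kind == "gold_spent":
        new_state[event.player] = balance - event.amount
    else:
        raise ValueError(f"unknown event kind: {event.kind}")
    return new_state


def replay(events: Iterable[Event], up_to_seq: Optional[int] = None) -> dict:
    """Rebuild state from the log, optionally stopping at a past sequence number."""
    state: dict = {}
    for event in sorted(events, key=lambda e: e.seq):
        if up_to_seq is not None and event.seq > up_to_seq:
            break
        state = apply(state, event)
    return state
```

Replaying with `up_to_seq` is what makes temporal debugging possible: the state as it stood just before a reported bug can be rebuilt exactly. In practice, periodic snapshots keep replay time bounded.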

Conflict Resolution in Concurrent Updates

Simultaneous player actions create inevitable conflicts that multiplayer game architecture must resolve deterministically. Operational transformation algorithms handle concurrent modifications to shared game objects, ensuring all players see consistent results regardless of network latency. Priority-based conflict resolution assigns precedence to time-critical actions like combat moves over less urgent activities like inventory management. Optimistic locking mechanisms allow players to proceed with actions immediately while background processes validate and reconcile conflicts asynchronously. Lock-free data structures minimize contention in high-throughput scenarios where thousands of players modify nearby game world regions simultaneously.
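
Optimistic locking is usually implemented as a version check at write time. The sketch below is an in-memory stand-in for a database row with a version column; `VersionedStore` and `VersionConflict` are hypothetical names, and the class is not thread-safe on its own.

```python
class VersionConflict(Exception):
    """Raised when a write is based on a stale read."""


class VersionedStore:
    def __init__(self):
        self._data = {}  # key -> (version, value)

    def read(self, key):
        """Return (version, value); unseen keys start at version 0."""
        return self._data.get(key, (0, None))

    def write(self, key, expected_version: int, value) -> int:
        """Commit only if nobody else has written since our read."""
        current_version, _ = self._data.get(key, (0, None))
        if current_version != expected_version:
            raise VersionConflict(
                f"{key}: expected v{expected_version}, found v{current_version}"
            )
        new_version = current_version + 1
        self._data[key] = (new_version, value)
        return new_version
```

The caller's side of the pattern is a short retry loop: on `VersionConflict`, re-read the object, re-apply the player's action to the fresh value, and try the write again.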

Performance Monitoring and Optimization

Real-Time Metrics Collection Systems

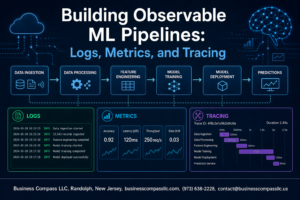

Effective monitoring of massive concurrency gaming platforms requires metrics collection that operates without impacting game performance. The monitoring system should track player connections, server response times, memory usage, and network throughput across the distributed game server fleet with low overhead. Use time-series databases like InfluxDB or Prometheus to capture granular performance data, with lightweight agents that sample critical metrics every 100-500 milliseconds. Focus on application-specific metrics including player session duration, match completion rates, and concurrent user loads per server instance. Custom dashboards should show these game-specific KPIs alongside traditional infrastructure metrics, enabling rapid identification of performance degradation before players experience issues.

Bottleneck Identification and Resolution

Identifying performance bottlenecks in scalable gaming infrastructure requires systematic analysis of multiple system layers simultaneously. CPU saturation often occurs during complex game state calculations, while memory bottlenecks emerge from inefficient object pooling or garbage collection patterns. Network bottlenecks manifest as increased latency or packet loss, particularly during peak concurrent user sessions. Database query performance can severely impact multiplayer game architecture when player data retrieval becomes inefficient. Implement automated alerting systems that trigger when response times exceed acceptable thresholds, typically 50-100ms for real-time interactions. Use profiling tools to analyze code execution patterns and identify resource-intensive operations that scale poorly with increased player counts.
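
A threshold alert is more useful on a high percentile than on the average, since a few slow responses are exactly what players notice. The sketch below uses the nearest-rank percentile method and a 100ms default threshold taken from the range above; the function names are illustrative.

```python
def percentile(samples, pct: int) -> float:
    """Nearest-rank percentile; pct is an integer from 1 to 100."""
    if not samples:
        raise ValueError("no samples")
    if not 1 <= pct <= 100:
        raise ValueError("pct must be between 1 and 100")
    ordered = sorted(samples)
    # Integer ceiling of pct/100 * n, avoiding float rounding surprises.
    rank = (pct * len(ordered) + 99) // 100
    return ordered[rank - 1]


def should_alert(latencies_ms, threshold_ms: float = 100.0, pct: int = 95) -> bool:
    """True when the chosen percentile of response times exceeds the threshold."""
    return percentile(latencies_ms, pct) > threshold_ms
```

With 5% of requests at 250ms the p95 stays healthy, while at 10% it trips the alert, even though the mean latency in both cases sits well under 100ms.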

Capacity Planning for Peak Traffic

Strategic capacity planning for high-performance gaming systems involves analyzing historical traffic patterns and projecting growth scenarios. Gaming platforms experience unpredictable traffic spikes during game launches, tournaments, or viral social media events that can increase concurrent users by 300-500% within hours. Implement predictive scaling algorithms that monitor leading indicators such as social media mentions, marketing campaign schedules, and seasonal gaming patterns. Size horizontally scaled infrastructure with buffer capacity of 20-30% above projected peak loads to handle unexpected surges. Consider geographic distribution when planning capacity, as player behavior varies significantly across time zones and regions, shifting server utilization throughout each 24-hour cycle.
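
The buffer rule translates into simple arithmetic. The sketch below assumes a fixed number of players per server and a 25% default buffer (the midpoint of the range above); both the function name and the sample figures are illustrative.

```python
import math


def servers_needed(
    projected_peak_players: int,
    players_per_server: int,
    buffer_fraction: float = 0.25,
) -> int:
    """Server count for the projected peak plus a safety buffer, rounded up."""
    if players_per_server <= 0:
        raise ValueError("players_per_server must be positive")
    padded_peak = projected_peak_players * (1.0 + buffer_fraction)
    return math.ceil(padded_peak / players_per_server)


if __name__ == "__main__":
    # 100,000 projected concurrent players, 100 players per server.
    print(servers_needed(100_000, 100))
```

Running the same calculation per region, with each region's own projected peak, gives a better plan than one global number, because regional peaks rarely coincide.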

Cost Optimization Through Resource Management

Balancing performance requirements with operational costs requires deliberate resource allocation across the whole platform. Implement dynamic scaling policies that automatically provision servers during peak hours and reduce capacity during low-activity periods, potentially reducing infrastructure costs by 40-60%. Use spot instances or preemptible virtual machines for interruptible, non-critical workloads like analytics processing or game asset generation. Optimize game state synchronization algorithms to reduce bandwidth costs, which can represent 15-25% of total operational expenses. Monitor resource utilization patterns to identify over-provisioned services and right-size instances based on actual usage. Implement caching strategies at multiple levels to reduce database query costs and improve response times for frequently accessed game data.

Building real-time gaming platforms that can handle thousands of simultaneous players demands careful planning and the right technical foundation. From setting up robust infrastructure components to implementing smart network design patterns, every piece of the architecture puzzle plays a crucial role in delivering smooth gameplay experiences. The key lies in mastering horizontal scaling techniques, keeping game state synchronized across multiple servers, and maintaining tight control over performance metrics.

Success in this space comes down to making smart architectural choices early and staying committed to continuous optimization. Start with a solid understanding of your concurrency requirements, invest in proper monitoring tools from day one, and don’t underestimate the importance of efficient data synchronization strategies. The gaming industry moves fast, and players have zero tolerance for lag or downtime—but with the right architectural approach, you can build platforms that not only meet these demands but scale effortlessly as your player base grows.