Introduction: The Machine Learning Development Lifecycle: Best Practices and Tools

Building successful machine learning models requires more than just coding algorithms. The machine learning lifecycle involves a structured approach that takes your project from raw data to production-ready models that deliver real business value.

This guide is designed for data scientists, ML engineers, and product managers who want to streamline their ML development process and avoid common pitfalls that derail projects. Whether you’re working at a startup or enterprise company, you’ll learn how to build reliable ML workflows that scale.

We’ll explore data preparation techniques that set your models up for success, including how to handle messy datasets and engineer features that actually matter. You’ll also discover model evaluation methods that go beyond accuracy scores to ensure your models perform well in the real world.

Finally, we’ll cover MLOps best practices for getting your models into production and keeping them running smoothly. From automated testing to monitoring model performance, you’ll learn the tools and strategies that separate successful ML teams from those stuck in endless experimentation cycles.

Understanding the Machine Learning Development Lifecycle Framework

Define the core phases of ML development

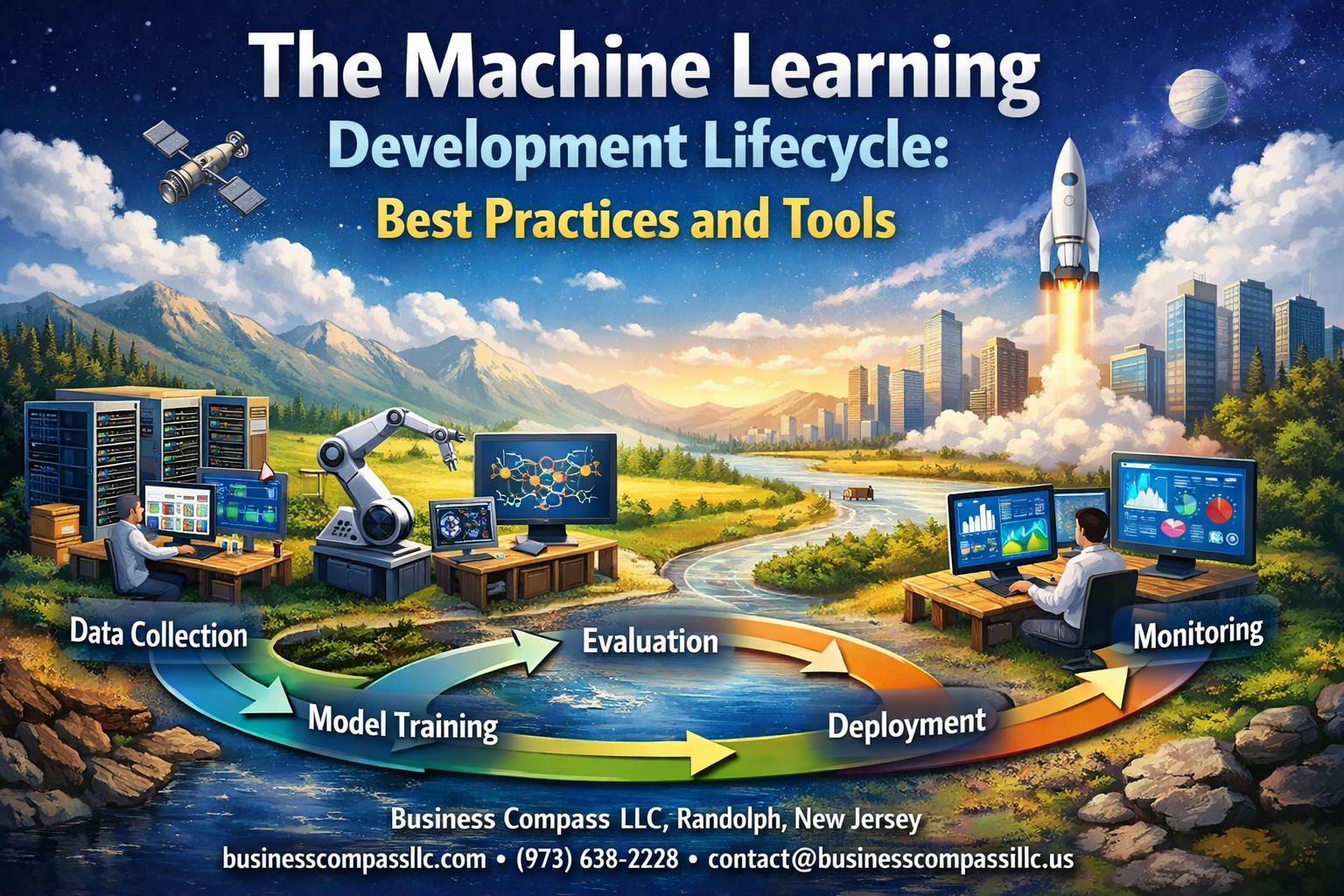

The machine learning lifecycle breaks down into six fundamental phases that guide teams from initial concept to production-ready solutions. The problem definition phase kicks things off by clearly articulating business objectives and translating them into technical requirements. Teams determine whether ML is the right approach and define success criteria upfront.

Data collection and preparation forms the foundation of any ML project. This phase involves gathering relevant datasets, cleaning messy data, handling missing values, and transforming raw information into formats suitable for model training. Quality data preparation directly impacts model performance, making this phase critical for project success.

The model development and experimentation phase focuses on algorithm selection, feature engineering, and iterative model building. Data scientists experiment with different approaches, tune hyperparameters, and validate assumptions through systematic testing. This exploratory phase often consumes the most time as teams search for optimal solutions.

Model evaluation and validation ensures developed models meet performance requirements across various scenarios. Teams apply rigorous testing methodologies, assess model bias, and verify results using holdout datasets. Cross-validation techniques help confirm model reliability before moving forward.

Production deployment transforms experimental models into scalable, reliable systems. This phase involves infrastructure setup, model serving architecture, and integration with existing business systems. MLOps practices become essential for smooth deployment workflows.

The final monitoring and maintenance phase ensures deployed models continue performing effectively. Teams track model drift, retrain algorithms when needed, and continuously optimize system performance based on real-world feedback.

Identify key stakeholders and their responsibilities

Multiple stakeholders collaborate throughout the ML development framework, each bringing specialized expertise and unique responsibilities. Data scientists lead model development efforts, conducting experiments, selecting algorithms, and optimizing performance metrics. They translate business problems into mathematical formulations and guide technical decision-making throughout the project lifecycle.

ML engineers bridge the gap between experimentation and production deployment. They design scalable ML pipelines, implement model serving infrastructure, and ensure systems can handle real-world traffic loads. Their expertise in software engineering and distributed systems makes production deployment possible.

Data engineers build and maintain data infrastructure that powers ML workflows. They create data pipelines, ensure data quality, and establish reliable access to training datasets. Their work enables consistent data flow from source systems to ML models.

Business stakeholders define project requirements, provide domain expertise, and validate model outputs against real-world scenarios. Product managers, domain experts, and end-users fall into this category, offering critical insights that shape model design and evaluation criteria.

DevOps and platform teams provide infrastructure support, monitoring capabilities, and deployment automation. They ensure ML systems integrate smoothly with existing technology stacks and maintain operational reliability.

Data governance and compliance teams oversee regulatory requirements, privacy protection, and ethical AI practices. They establish guidelines for data usage, model transparency, and bias mitigation across ML projects.

Establish success metrics for each lifecycle stage

Each phase of the ML development process requires specific success metrics that align with both technical objectives and business outcomes. During the problem definition phase, success metrics focus on clarity and feasibility. Teams measure progress through well-defined problem statements, identified data sources, and established baseline performance benchmarks. Clear success criteria prevent scope creep and ensure alignment between technical teams and business stakeholders.

The data preparation phase emphasizes data quality and completeness metrics. Key indicators include data coverage percentages, missing value rates, data consistency scores, and feature correlation analysis results. Teams also track data pipeline reliability and processing time efficiency to ensure sustainable workflows.

Model development success gets measured through performance metrics relevant to specific problem types. Classification tasks rely on accuracy, precision, recall, and F1-scores, while regression problems focus on mean squared error, root mean squared error, and R-squared values. Cross-validation scores and learning curve analysis help assess model stability and generalization capability.

Evaluation and validation phases require comprehensive testing metrics including model performance across different data segments, bias detection scores, and robustness testing results. A/B testing frameworks help validate real-world performance improvements compared to existing solutions.

Production deployment success gets tracked through system reliability metrics like uptime percentages, response time latency, throughput capacity, and error rates. Model prediction accuracy in production environments provides crucial feedback on deployment effectiveness.

Monitoring and maintenance phases focus on model drift detection, retraining frequency requirements, and continuous performance tracking. Business impact metrics like conversion rate improvements or cost reduction achievements demonstrate ongoing value delivery from ML investments.

Data Collection and Preparation Strategies

Source High-Quality Datasets Efficiently

Finding the right data sources can make or break your machine learning pipeline. Start by identifying authoritative datasets from reputable repositories like Kaggle, UCI Machine Learning Repository, or government open data portals. Industry-specific databases often provide more relevant and cleaner datasets than generic collections.

Create a systematic approach to dataset evaluation before committing to any source. Check the data’s freshness, completeness, and alignment with your problem domain. Look for datasets with comprehensive metadata and clear documentation about collection methods. This upfront investment saves countless hours during later stages of the ML development process.

Consider synthetic data generation when real-world datasets are insufficient or privacy-sensitive. Tools like Faker, SDV, or GANs can create realistic training data that preserves statistical properties without exposing sensitive information. This approach proves especially valuable in healthcare, finance, and other regulated industries.

Establish partnerships with data providers early in your project timeline. Many organizations are willing to share anonymized datasets for research purposes, but these collaborations take time to develop. Building these relationships creates sustainable data pipelines for future projects.

Handle Missing Data and Outliers Effectively

Missing data patterns tell a story about your dataset’s quality and collection process. Before applying any imputation techniques, analyze whether missing values occur randomly or follow specific patterns. This analysis guides your strategy for addressing gaps without introducing bias into your machine learning pipeline.

Simple imputation methods like mean or median substitution work well for numerical data with random missing patterns. For categorical variables, mode imputation or creating a separate “unknown” category often yields better results than dropping incomplete records entirely.

Advanced techniques like K-nearest neighbors imputation or iterative imputation capture relationships between features more effectively. These methods prove especially valuable when dealing with high-dimensional datasets where feature interactions influence missing value patterns.

Outlier detection requires balancing statistical rigor with domain expertise. Statistical methods like IQR or Z-score identify numerical anomalies, but domain knowledge determines whether these outliers represent errors or valuable edge cases. In fraud detection, outliers might be your most important training examples.

Create visualization dashboards to monitor data quality metrics continuously. Box plots, scatter plots, and distribution histograms reveal outlier patterns that automated detection might miss. This visual approach helps data scientists make informed decisions about outlier treatment strategies.

Implement Data Validation and Quality Checks

Data validation forms the backbone of reliable ML workflow management. Implement schema validation to ensure incoming data matches expected formats, data types, and value ranges. This prevents downstream errors that could corrupt model training or produce unreliable predictions.

Statistical validation checks monitor data drift over time. Compare distributions of new data batches against baseline statistics from your training set. Significant changes in mean, variance, or distribution shape indicate potential data quality issues that require investigation.

Create automated alerts for common data quality problems like duplicate records, impossible value combinations, or unexpected null rates. These checks should run automatically in your data preprocessing pipelines, flagging issues before they impact model performance.

Business rule validation ensures data makes logical sense within your domain context. For example, customer ages should fall within reasonable ranges, and transaction amounts should align with typical business patterns. These domain-specific checks catch errors that statistical validation might miss.

Version control your validation rules alongside your code. As business requirements evolve, validation criteria need updates to remain effective. Tracking these changes helps maintain data quality standards across different stages of the machine learning lifecycle.

Create Scalable Data Preprocessing Pipelines

Building scalable data preparation techniques starts with choosing the right processing framework for your data volume and velocity requirements. Apache Spark handles large-scale batch processing efficiently, while streaming frameworks like Apache Kafka work better for real-time data ingestion scenarios.

Design your preprocessing steps as modular, reusable components. Each transformation should handle a specific task like normalization, encoding, or feature engineering. This modular approach simplifies testing, debugging, and pipeline maintenance as your ML development process evolves.

Implement caching strategies for expensive preprocessing operations. Store intermediate results from computationally intensive transformations like feature engineering or data aggregations. This optimization reduces processing time for iterative model development and experimentation.

Create separate preprocessing pipelines for training and inference scenarios. Training pipelines can afford longer processing times for comprehensive feature engineering, while inference pipelines need optimized paths for real-time predictions. Document these differences clearly to prevent deployment issues.

Monitor pipeline performance metrics like processing time, memory usage, and error rates. Set up automated scaling triggers that add computing resources when processing volumes exceed normal thresholds. This monitoring ensures your data preprocessing pipelines can handle production workloads reliably.

Model Development and Experimentation Best Practices

Select Appropriate Algorithms for Your Problem

Understanding your problem type is the foundation of successful model development in any machine learning pipeline. Classification problems require different approaches than regression tasks, while unsupervised learning scenarios demand their own specialized algorithms. Start by clearly defining whether you’re predicting categories, continuous values, or discovering hidden patterns in your data.

Consider the nature and size of your dataset when making algorithmic choices. Linear models like logistic regression work well with smaller datasets and provide interpretability, making them ideal for scenarios where you need to explain predictions. Tree-based algorithms like Random Forest and XGBoost excel with structured data and can handle missing values naturally. Deep learning approaches shine when working with unstructured data like images or text, but require substantial computational resources and larger datasets.

Don’t overlook the importance of baseline models in your ML development process. Simple algorithms often provide surprisingly strong performance and serve as essential benchmarks. A basic linear model can reveal whether more complex approaches actually add value to your machine learning lifecycle.

Design Effective Feature Engineering Processes

Feature engineering can make or break your model’s performance, often having more impact than algorithm selection itself. Raw data rarely comes in a format that machine learning algorithms can effectively use, so transforming variables into meaningful representations becomes crucial.

Create features that capture domain knowledge and business logic. For time series data, consider lag features, rolling averages, and seasonal decomposition. For categorical variables, explore different encoding strategies beyond simple one-hot encoding. Target encoding, frequency encoding, and embedding approaches can reveal relationships that traditional methods miss.

Implement automated feature engineering pipelines that can scale with your data. Tools like feature stores help maintain consistency between training and production environments, addressing a common source of model degradation. Document your feature engineering decisions thoroughly – this knowledge becomes invaluable when troubleshooting model performance issues or onboarding new team members.

Build feature validation checks into your MLOps workflow. Monitor feature distributions, detect drift, and set up alerts when features behave unexpectedly. This proactive approach prevents many production issues before they impact your model’s performance.

Optimize Hyperparameters Systematically

Random hyperparameter tuning wastes computational resources and rarely finds optimal configurations. Instead, adopt systematic approaches that balance exploration with efficiency. Bayesian optimization and successive halving techniques can significantly reduce the time needed to find good hyperparameter combinations.

Start with coarse grid searches to identify promising regions, then use more sophisticated methods to fine-tune parameters. Tools like Optuna and Hyperopt automate this process and can run multiple optimization strategies in parallel. Set up proper validation schemes to avoid overfitting during hyperparameter search – nested cross-validation helps ensure your parameter choices generalize well.

Consider multi-objective optimization when you need to balance competing metrics like accuracy and inference speed. Production constraints often require trade-offs between model performance and operational requirements. Automated hyperparameter optimization tools can help navigate these trade-offs systematically.

Track Experiments for Reproducible Results

Experiment tracking forms the backbone of effective ML workflow management. Every model training run should capture code versions, data versions, hyperparameters, metrics, and environmental details. This comprehensive tracking enables you to reproduce successful experiments and understand what drives model performance.

MLflow, Weights & Biases, and Neptune provide robust platforms for experiment management. These tools integrate with popular machine learning frameworks and automatically capture many experimental details. Set up your tracking system early in your machine learning lifecycle – retrofitting experiment tracking becomes exponentially more difficult as projects grow.

Version your datasets alongside your code and models. Data changes often explain mysterious performance differences between experiments. Use data versioning tools like DVC or built-in dataset versioning in your experiment tracking platform.

Create experiment naming conventions and tagging strategies that make sense for your team. Clear organization becomes essential when managing hundreds of experiments across multiple team members. Regular experiment cleanup prevents your tracking system from becoming cluttered with obsolete runs.

Model Evaluation and Validation Techniques

Choose Relevant Performance Metrics

Selecting the right performance metrics forms the backbone of effective model evaluation methods in any ML development process. The choice of metrics depends heavily on your problem type and business objectives. For classification tasks, accuracy might seem like the obvious choice, but it can be misleading when dealing with imbalanced datasets. In such cases, precision, recall, and F1-score provide better insights into model performance.

For binary classification problems, consider using:

- Precision: Measures the proportion of positive predictions that were actually correct

- Recall (Sensitivity): Captures how well the model identifies all positive cases

- Specificity: Shows the model’s ability to correctly identify negative cases

- AUC-ROC: Evaluates performance across all classification thresholds

Regression tasks require different approaches. Mean Absolute Error (MAE) and Root Mean Square Error (RMSE) are common choices, but they serve different purposes. MAE treats all errors equally, while RMSE penalizes larger errors more heavily. R-squared explains the variance in your target variable, but adjusted R-squared accounts for model complexity.

Business context matters enormously when choosing metrics. A fraud detection system might prioritize recall over precision to catch more fraudulent transactions, even if it means more false positives. Medical diagnosis models often focus on sensitivity to avoid missing serious conditions.

Implement Robust Cross-Validation Strategies

Cross-validation strategies prevent overfitting and provide realistic performance estimates for your machine learning pipeline. Simple train-test splits can be unreliable, especially with smaller datasets or when data has temporal dependencies.

K-fold cross-validation divides your dataset into k subsets, training on k-1 folds and testing on the remaining fold. This process repeats k times, giving you k different performance estimates. Five-fold or ten-fold cross-validation typically works well for most scenarios.

Time series data requires special handling through temporal cross-validation techniques:

- Forward chaining: Uses progressively larger training sets with fixed-size test sets

- Time series split: Maintains temporal order while creating multiple train-test splits

- Blocked cross-validation: Groups consecutive time periods to respect temporal dependencies

Stratified cross-validation ensures each fold maintains the same class distribution as the original dataset. This becomes crucial when working with imbalanced classes or when certain subgroups need adequate representation in each fold.

For datasets with grouped structures (like multiple samples from the same patient), group-based cross-validation prevents data leakage by keeping related samples in the same fold.

Test for Model Bias and Fairness

Model validation techniques must include comprehensive bias testing to ensure fair outcomes across different demographic groups. Algorithmic bias can perpetuate or amplify existing societal inequalities, making fairness testing a critical component of the ML development process.

Start by identifying protected attributes in your dataset – characteristics like race, gender, age, or socioeconomic status that shouldn’t influence decisions in many contexts. Even when these attributes aren’t explicitly included as features, proxy variables might still introduce bias.

Key fairness metrics to evaluate include:

- Demographic parity: Equal positive prediction rates across groups

- Equalized odds: Equal true positive and false positive rates for all groups

- Equal opportunity: Equal true positive rates across protected groups

- Calibration: Prediction probabilities should reflect actual outcomes consistently across groups

Intersectional bias analysis examines how multiple protected attributes interact. A model might appear fair when looking at gender and race separately but show significant bias when considering Black women specifically.

Bias can emerge from training data imbalances, historical discrimination embedded in data, or biased labeling processes. Regular auditing throughout the machine learning lifecycle helps identify these issues before deployment.

Validate Models on Real-World Scenarios

Real-world model validation goes beyond traditional test sets to simulate actual production conditions. This validation approach tests how models perform when faced with data drift, edge cases, and unexpected input variations.

Shadow testing runs new models alongside existing systems without affecting real decisions. This allows you to compare performance in production environments while maintaining system stability. The approach provides valuable insights into how models behave with live data streams.

A/B testing splits traffic between different model versions, measuring business metrics alongside technical performance measures. This method reveals how model improvements translate into actual business value, which might differ from pure accuracy gains.

Stress testing exposes models to extreme scenarios:

- Data quality issues: Missing values, outliers, or corrupted inputs

- Distribution shifts: Changes in data patterns over time

- Adversarial inputs: Deliberately crafted examples designed to fool the model

- High-load conditions: Performance under heavy traffic or resource constraints

Canary deployments gradually roll out models to small user segments, monitoring for unexpected behaviors before full deployment. This MLOps best practice catches issues that might not appear in offline testing.

User acceptance testing involves domain experts evaluating model outputs in realistic scenarios. Their feedback often reveals practical limitations that automated metrics miss, particularly around interpretability and trustworthiness.

Production Deployment and MLOps Implementation

Build automated deployment pipelines

Creating robust automated deployment pipelines stands as the backbone of successful production ML deployment. These pipelines transform your machine learning models from experimental code into production-ready systems that can handle real-world traffic and demands.

Start by establishing a continuous integration and continuous deployment (CI/CD) framework specifically designed for machine learning workflows. Unlike traditional software deployment, ML pipelines must handle model artifacts, data dependencies, and complex validation steps. Tools like Jenkins, GitLab CI, or GitHub Actions can orchestrate these processes, but you’ll need to customize them for ML-specific requirements.

Your automated pipeline should include several critical stages: model packaging, environment provisioning, dependency management, and deployment validation. Model packaging involves containerizing your trained models using Docker or similar technologies, ensuring consistent runtime environments across development and production. Environment provisioning automatically sets up the necessary infrastructure, whether that’s Kubernetes clusters, cloud instances, or serverless functions.

Data validation becomes crucial at this stage. Your pipeline should automatically verify that incoming production data matches the statistical properties and schema of your training data. Implement automated data quality checks and feature drift detection to catch potential issues before they impact model performance.

Testing automation should encompass both traditional unit tests and ML-specific validations. This includes model performance benchmarks, inference latency tests, and integration tests with downstream systems. Your pipeline should automatically run these tests and only proceed with deployment if all checks pass.

Implement model versioning and rollback capabilities

Model versioning serves as your safety net in production machine learning operations. Every model iteration needs proper tracking, from experimental versions through production releases. This systematic approach prevents confusion and enables quick recovery when issues arise.

Establish a comprehensive versioning strategy that captures not just model weights, but also training data versions, hyperparameters, code commits, and evaluation metrics. Tools like MLflow, DVC, or custom versioning systems can manage these artifacts. Each model version should include metadata about training conditions, performance benchmarks, and compatibility requirements.

Create semantic versioning conventions that make sense for your ML context. Major versions might indicate significant architecture changes, minor versions could represent retraining with new data, and patch versions might cover small bug fixes or optimizations. This consistency helps teams understand the significance of each release.

Rollback capabilities require more than just reverting to previous model files. Your system needs to handle graceful transitions between model versions, managing traffic routing and ensuring no data loss during switches. Implement blue-green deployment strategies where you can run multiple model versions simultaneously and gradually shift traffic between them.

Design your rollback mechanisms to be both automated and manual. Automated rollbacks should trigger when performance metrics drop below acceptable thresholds or error rates spike. Manual rollbacks give you immediate control when unexpected issues emerge that automated systems might miss.

Set up monitoring and alerting systems

Production monitoring for machine learning systems requires a multi-layered approach that goes beyond traditional application monitoring. Your ML monitoring strategy should track model performance, data quality, system health, and business impact simultaneously.

Model performance monitoring forms the foundation of your observability stack. Track key metrics like accuracy, precision, recall, and custom business metrics that reflect real-world impact. Set up automated comparison between current performance and baseline metrics from your validation datasets. Performance degradation often signals data drift, model decay, or upstream system changes.

Data monitoring catches issues before they affect model predictions. Monitor input feature distributions, missing value patterns, and data schema changes. Set alerts for statistical drift in key features, unusual spike or drops in data volume, and schema violations that could break your model pipeline. Real-time data quality monitoring prevents poor-quality inputs from reaching your models.

System-level monitoring covers infrastructure health, including model serving latency, memory usage, CPU utilization, and request throughput. These metrics help you understand operational bottlenecks and capacity planning needs. Monitor prediction request patterns to identify usage trends and potential scaling requirements.

Business impact monitoring connects technical metrics to actual business outcomes. Track conversion rates, user engagement, or other business KPIs that your models influence. This connection helps stakeholders understand when technical issues translate to business problems.

Configure alerting with appropriate severity levels and escalation paths. Critical alerts should wake someone up at night, while warning-level alerts can wait for business hours. Use alert aggregation and correlation to avoid flooding your team with redundant notifications during incidents.

Essential Tools and Technologies for ML Lifecycle Management

Version Control Systems for ML Projects

Traditional software version control doesn’t fully capture the complexity of machine learning projects. ML development requires tracking datasets, model parameters, hyperparameters, and experiment configurations alongside code changes. Git remains the foundation, but specialized tools like DVC (Data Version Control) and Git-LFS handle large datasets and model files that exceed Git’s typical limits.

DVC creates lightweight metadata files that reference actual data stored in cloud storage or local systems, making it easy to version massive datasets without bloating repositories. MLflow’s Model Registry integrates seamlessly with Git workflows, allowing teams to link specific model versions to code commits. GitLab and GitHub now offer ML-specific features like model cards and experiment tracking integration, streamlining the machine learning development process.

Best practices include creating separate branches for different experiments, using semantic versioning for models, and maintaining clear commit messages that describe both code and data changes. Teams should establish branching strategies that accommodate parallel experimentation while maintaining reproducible results.

Experiment Tracking and Model Registry Platforms

Experiment tracking platforms solve the chaos of managing hundreds of ML experiments. MLflow stands out as the most popular open-source option, providing experiment logging, model packaging, and deployment capabilities. Weights & Biases offers superior visualization and collaboration features, making it easier for teams to compare results and share insights.

Neptune.ai excels at handling complex experiments with multiple runs and provides excellent integration with popular frameworks like PyTorch and TensorFlow. Amazon SageMaker Experiments and Azure ML Studio offer cloud-native solutions that integrate tightly with their respective ecosystems.

Key features to look for include:

- Automatic hyperparameter logging

- Real-time metric visualization

- Model artifact storage

- Collaboration and sharing capabilities

- API access for programmatic interaction

- Integration with popular ML libraries

Model registries centralize model storage and metadata, creating a single source of truth for production-ready models. They track model lineage, performance metrics, and deployment status, enabling better ML workflow management and governance.

Automated Testing Frameworks for ML Models

ML models require different testing approaches than traditional software. Great Expectations validates data quality and schema consistency, catching issues before they corrupt model training. The framework creates data documentation and alerts teams when datasets drift from expected patterns.

Evidently AI specializes in model monitoring and data drift detection, providing statistical tests that identify when model performance degrades. DeepChecks offers comprehensive model validation, including data integrity checks, model performance analysis, and fairness assessments.

Testing strategies should cover:

- Data validation: Schema compliance, missing values, outlier detection

- Model performance: Accuracy metrics, prediction consistency, inference speed

- Integration testing: API endpoints, data pipeline connections, deployment workflows

- Regression testing: Comparing new model versions against baselines

Continuous integration pipelines should automatically run these tests before model deployment, preventing problematic models from reaching production systems.

Cloud Platforms and Infrastructure Solutions

Modern MLOps best practices rely heavily on cloud infrastructure for scalability and collaboration. AWS SageMaker provides end-to-end ML lifecycle management with built-in algorithms, notebook environments, and automated model tuning. Google Cloud AI Platform offers similar capabilities with strong integration to TensorFlow and robust AutoML features.

Microsoft Azure ML combines familiar Azure services with ML-specific tools, making it attractive for organizations already using Microsoft infrastructure. All three platforms support distributed training, automatic scaling, and managed deployment endpoints.

Kubernetes-based solutions like Kubeflow provide platform-agnostic ML pipelines that work across different cloud providers. MLflow’s deployment capabilities integrate with these platforms, offering flexibility in deployment strategies.

Infrastructure considerations include:

- Compute resources: GPU availability, auto-scaling policies, cost optimization

- Storage solutions: Data lakes, feature stores, model artifact repositories

- Networking: VPC configuration, API gateways, security groups

- Monitoring: Resource utilization, model performance, cost tracking

Container orchestration through Docker and Kubernetes ensures consistent environments from development to production, reducing the “works on my machine” problem common in ML development. These containerized approaches support the machine learning pipeline requirements while maintaining reproducibility across different environments.

Continuous Improvement and Model Maintenance

Monitor model performance in production

Once your machine learning model goes live, keeping tabs on its performance becomes your top priority. Production environments are unpredictable beasts – what worked perfectly in testing might stumble when faced with real-world data patterns. Setting up comprehensive monitoring systems helps you catch issues before they impact your business outcomes.

Start by tracking key performance metrics that align with your business objectives. For classification models, monitor accuracy, precision, recall, and F1-scores. Regression models need MAE, RMSE, and R-squared tracking. But don’t stop at traditional ML metrics – business KPIs matter just as much. Track conversion rates, user engagement, or revenue impact depending on your use case.

Real-time dashboards and alerting systems are game-changers for ML operations. Tools like Grafana, DataDog, or MLflow can visualize performance trends and send alerts when metrics drop below acceptable thresholds. Set up automated monitoring pipelines that continuously evaluate your model’s predictions against ground truth data when available.

Log everything: input data distributions, prediction confidence scores, response times, and system resource usage. This logging strategy creates a paper trail that helps diagnose issues quickly. Consider implementing A/B testing frameworks to compare different model versions in production safely.

Detect and handle model drift

Model drift is the silent killer of machine learning systems. Your model might perform brilliantly during development but gradually lose effectiveness as real-world conditions change. Two main types of drift threaten your ML lifecycle: data drift and concept drift.

Data drift happens when input features change their statistical properties over time. Imagine a fraud detection model trained on pre-pandemic spending patterns – consumer behavior shifts dramatically during economic upheavals. Statistical tests like the Kolmogorov-Smirnov test or Population Stability Index help detect these changes by comparing current data distributions with your training baseline.

Concept drift occurs when the relationship between features and target variables evolves. Customer preferences shift, market conditions change, or seasonal patterns emerge that weren’t captured in historical training data. This type of drift is trickier to spot but equally dangerous for model performance.

Build drift detection into your ML workflow management using automated monitoring tools. Libraries like Evidently AI or Alibi Detect can continuously analyze incoming data and flag potential drift issues. Set up sliding window comparisons between recent data and your reference dataset to catch gradual changes.

When drift strikes, you have several response strategies. Quick fixes include adjusting prediction thresholds or applying simple corrections based on observed patterns. More robust solutions involve triggering retraining pipelines or switching to backup models until you can address the root cause.

Retrain models with new data systematically

Smart retraining strategies separate successful ML operations from failed experiments. Random retraining schedules waste resources and miss critical performance windows. Instead, build systematic approaches that balance model freshness with computational costs.

Trigger-based retraining works best for most production scenarios. Set up automated systems that initiate retraining when performance metrics drop below thresholds, when drift detection algorithms raise flags, or when sufficient new labeled data accumulates. This approach ensures your models stay relevant without unnecessary computational overhead.

Design your retraining pipeline as part of your broader machine learning pipeline architecture. Include data validation steps, feature engineering consistency checks, and model comparison phases. Your pipeline should automatically validate new model versions against holdout datasets before deployment decisions.

Version control becomes critical during continuous retraining cycles. Track model lineage, training data snapshots, hyperparameter configurations, and performance benchmarks for each iteration. Tools like DVC or MLflow help manage these complex versioning requirements across your ML development process.

Consider incremental learning approaches for models that can benefit from online updates. Streaming algorithms or transfer learning techniques allow you to incorporate new information without full retraining cycles. This strategy works particularly well for recommendation systems or real-time fraud detection where patterns evolve rapidly.

Implement robust rollback mechanisms in case new model versions underperform. Blue-green deployments or canary releases let you test updated models on small traffic portions before full rollouts. Always maintain previous model versions as safety nets during the transition period.

Building successful machine learning systems requires a structured approach that covers every step from data collection to ongoing maintenance. The key to ML success lies in treating it as a complete lifecycle rather than just building models. Smart data preparation, rigorous experimentation, thorough validation, and robust deployment practices form the foundation of reliable ML systems.

The real game-changer comes down to choosing the right tools and embracing MLOps practices that keep your models running smoothly in production. Don’t forget that launching your model is just the beginning – continuous monitoring and improvement separate winning ML projects from those that fade away. Start by implementing these best practices one step at a time, and you’ll build ML systems that actually deliver value for your business.