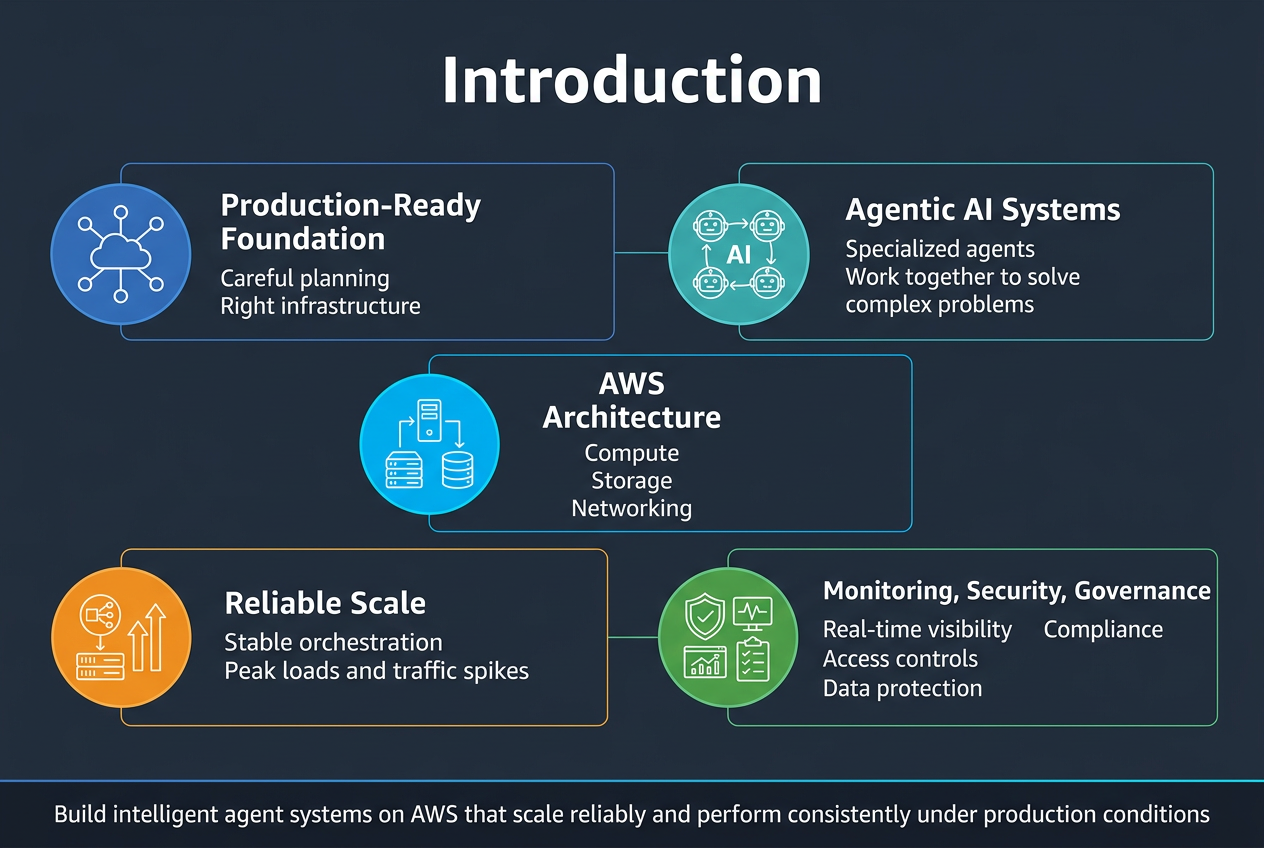

Building agentic AI systems that can handle real-world production workloads requires careful planning and the right infrastructure foundation. This guide is designed for AI engineers, solutions architects, and DevOps teams who need to deploy intelligent agent systems on AWS that can scale reliably and perform consistently under production conditions.

Agentic AI systems represent a shift from traditional single-model approaches to networks of specialized AI agents that work together to solve complex problems. Unlike monolithic AI applications, multi-agent systems on AWS need robust communication channels, capable orchestration, and comprehensive monitoring to function effectively at scale.

We’ll explore how to build a solid AWS AI architecture foundation that supports your agent ecosystem, covering everything from compute resources and storage solutions to networking configurations that enable seamless agent interactions. You’ll learn practical deployment strategies that ensure your AI agent orchestration remains stable during peak loads and unexpected traffic spikes.

We’ll also dive into monitoring and observability practices that give you real-time visibility into agent behavior, performance metrics, and system health. Finally, we’ll cover the security and governance frameworks essential for production AI deployment, including access controls, data protection, and compliance requirements that keep your agentic AI framework both powerful and secure.

Understanding Agentic AI Architecture Fundamentals

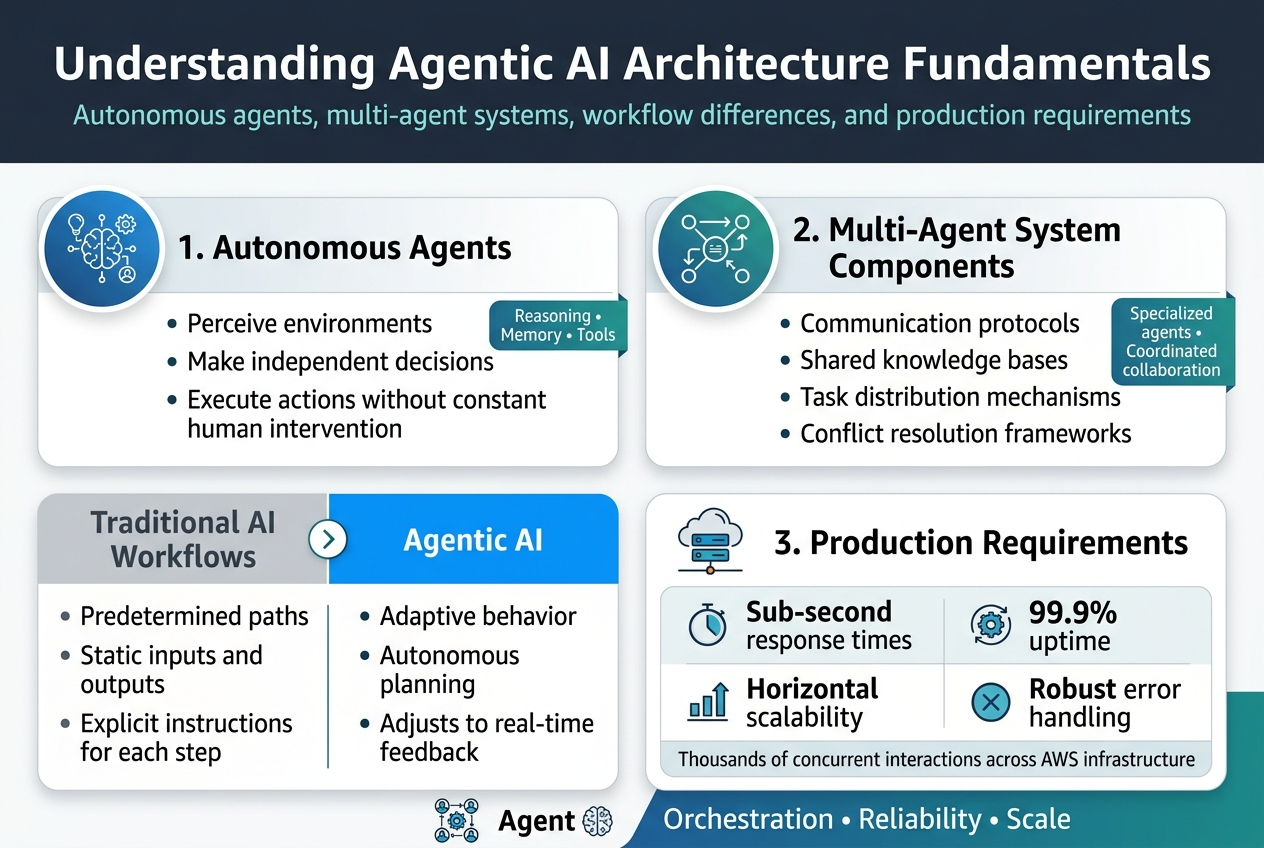

Defining autonomous agents and their decision-making capabilities

Autonomous agents represent a breakthrough in AI design, operating as intelligent entities that perceive environments, make independent decisions, and execute actions without constant human intervention. These agentic AI systems leverage advanced reasoning capabilities, memory persistence, and tool integration to handle complex, multi-step tasks that traditional AI workflows struggle with.

Key components of multi-agent systems

Multi-agent systems on AWS infrastructure require several critical components: communication protocols for inter-agent messaging, shared knowledge bases for collaborative learning, task distribution mechanisms, and conflict resolution frameworks. Each agent maintains its own specialized capabilities while contributing to collective problem-solving through coordinated interactions.

Differentiating agentic AI from traditional AI workflows

Traditional AI workflows follow predetermined paths with static inputs and outputs, while agentic AI systems demonstrate adaptive behavior and autonomous planning. Unlike conventional machine learning models that require explicit instructions for each step, agents can break down complex goals, make strategic decisions, and adjust their approach based on real-time feedback and changing conditions.

Performance requirements for production environments

Production-ready agent systems typically target sub-second response times for lightweight interactions, availability of 99.9% or better, and horizontal scalability across their AWS infrastructure. Agent orchestration platforms must handle thousands of concurrent interactions while maintaining consistent performance and providing robust error handling that prevents cascading failures in distributed agent networks.
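To make the 99.9% figure concrete, the following sketch converts an availability target into a downtime budget and shows how availability compounds when agents are chained in series. The function names and the three-agent example are illustrative assumptions, not values from any AWS service.

```python
from math import prod


def downtime_budget_minutes(availability_pct: float, days: int = 30) -> float:
    """Minutes of downtime allowed in a window of `days` at the given availability."""
    total_minutes = days * 24 * 60
    return total_minutes * (1 - availability_pct / 100)


def serial_availability(component_pcts: list[float]) -> float:
    """Availability (in percent) of a chain in which every component must be up."""
    return 100 * prod(p / 100 for p in component_pcts)


# A 99.9% target leaves roughly 43 minutes of downtime per 30-day month.
budget = downtime_budget_minutes(99.9)

# Three agents in series, each at 99.9%, fall below 99.9% as a whole.
chain = serial_availability([99.9, 99.9, 99.9])
```

The second function is the reason error handling matters in agent chains: every additional dependency in the request path lowers the availability of the whole.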

AWS Infrastructure Foundation for Agentic Systems

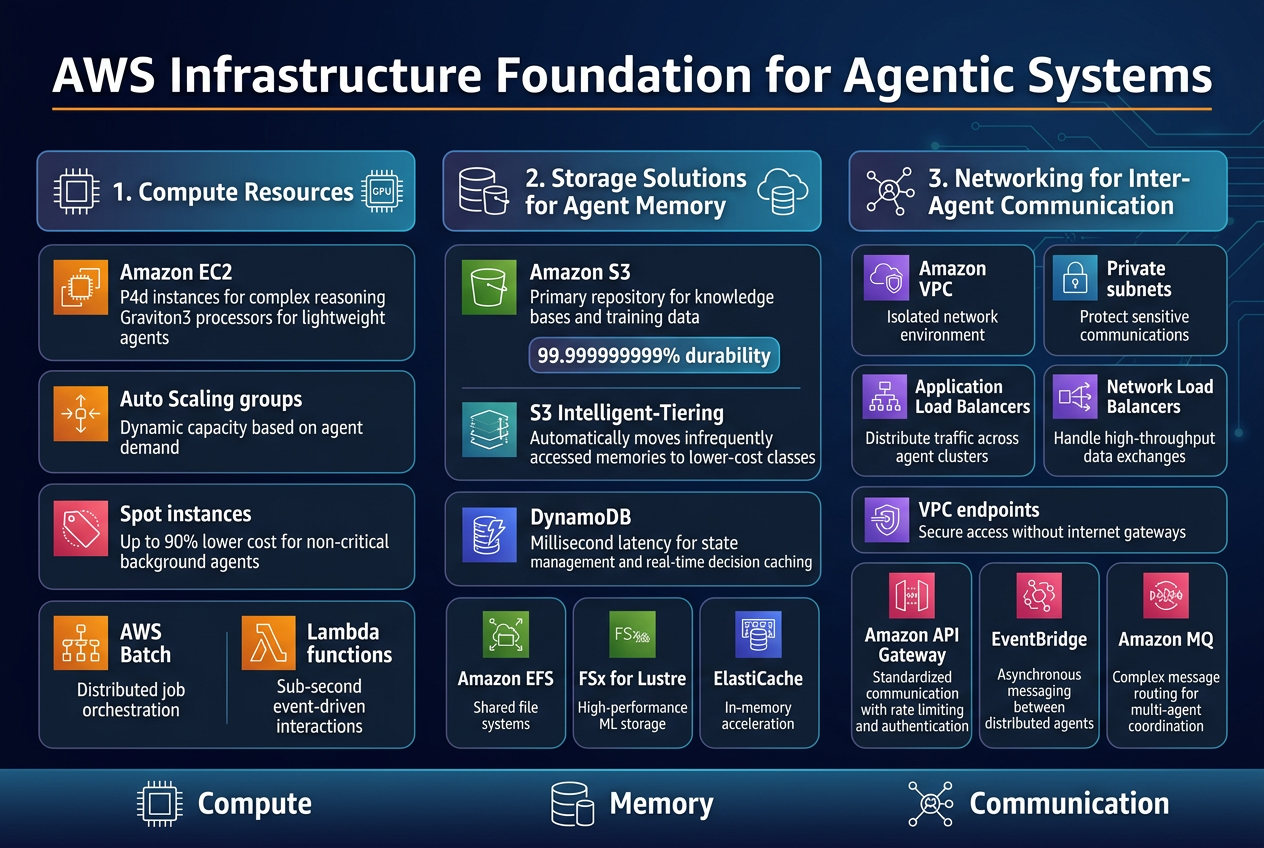

Selecting Optimal Compute Resources for Agent Workloads

Amazon EC2 provides the backbone for agentic AI systems, with P4d instances delivering high GPU performance for complex reasoning tasks. Graviton3 processors offer a cost-effective alternative for lightweight agents handling coordination and data processing. Auto Scaling groups adjust capacity dynamically based on agent demand, while Spot Instances can cut costs by up to 90% compared with On-Demand pricing for non-critical background agents that tolerate interruption.

AWS Batch simplifies job orchestration across distributed agent networks, automatically provisioning resources for batch inference workloads. Lambda functions handle event-driven agent interactions with sub-second response times, perfect for triggering workflows and lightweight decision-making processes.

Designing Scalable Storage Solutions for Agent Memory

Amazon S3 serves as the primary repository for agent knowledge bases and training data, offering virtually unlimited capacity and a design target of 99.999999999% (11 nines) durability. S3 Intelligent-Tiering automatically optimizes costs by moving infrequently accessed agent memories to lower-cost access tiers. DynamoDB provides single-digit millisecond latency for agent state management and real-time decision caching.

Amazon EFS enables shared file systems across multiple agent instances, while FSx for Lustre delivers high-performance storage for intensive ML workloads. ElastiCache accelerates agent response times by caching frequently accessed data patterns and inference results in memory.

Implementing Robust Networking for Inter-Agent Communication

Amazon VPC creates isolated network environments with private subnets protecting sensitive agent communications from external threats. Application Load Balancers distribute traffic across agent clusters, while Network Load Balancers handle high-throughput data exchanges between autonomous systems. VPC endpoints enable secure access to AWS services without internet gateways.

Amazon API Gateway standardizes communication protocols between different agent types, providing rate limiting and authentication. EventBridge facilitates asynchronous messaging between distributed agents, while Amazon MQ supports complex message routing patterns for sophisticated multi-agent coordination workflows.

Building Resilient Agent Communication Frameworks

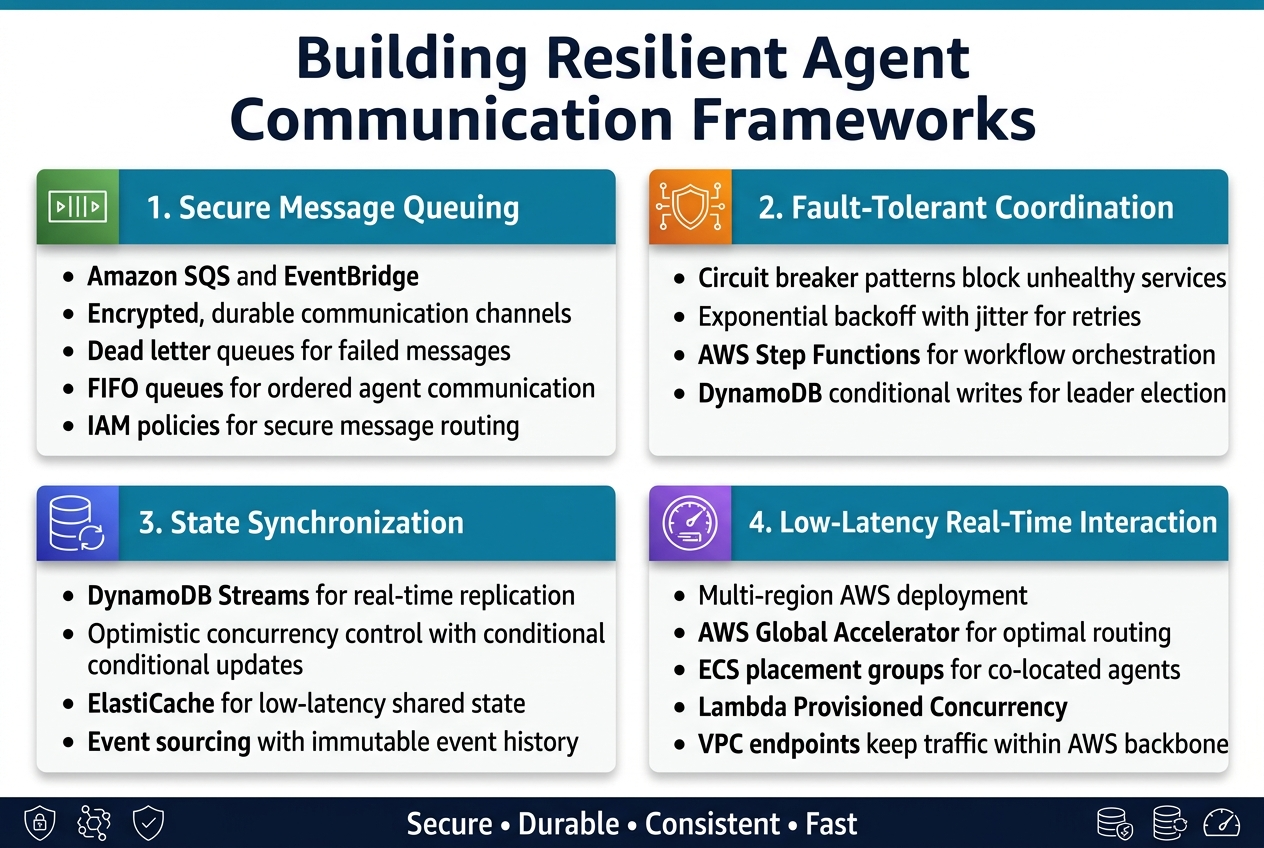

Establishing secure message queuing between agents

Message queues form the backbone of production agentic AI systems on AWS. Amazon SQS and EventBridge provide durable communication channels with encryption in transit and at rest, which reduces the risk of losing messages when an agent fails. Dead-letter queues capture messages that repeatedly fail processing so they can be debugged, while FIFO queues preserve ordering for order-critical agent communications. IAM policies restrict queue access to specific agent roles, keeping message routing secure across your multi-agent system.
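As a rough illustration of the queue setup described above, this sketch builds the arguments for an SQS `CreateQueue` request that combines a FIFO queue, server-side encryption, and a dead-letter queue. It only constructs the dictionary and sends nothing; the queue name, account ID, and ARN are placeholder assumptions.

```python
import json


def fifo_queue_with_dlq(name: str, dlq_arn: str, max_receives: int = 5) -> dict:
    """Build keyword arguments for an SQS CreateQueue call (nothing is sent here)."""
    if not name.endswith(".fifo"):
        raise ValueError("FIFO queue names must end in '.fifo'")
    return {
        "QueueName": name,
        "Attributes": {  # SQS expects every attribute value as a string
            "FifoQueue": "true",
            "ContentBasedDeduplication": "true",
            "SqsManagedSseEnabled": "true",  # server-side encryption with SQS-managed keys
            # After max_receives failed receives, SQS moves the message to the DLQ.
            "RedrivePolicy": json.dumps(
                {"deadLetterTargetArn": dlq_arn, "maxReceiveCount": max_receives}
            ),
        },
    }


# Placeholder ARN; the dead-letter queue of a FIFO queue must itself be FIFO.
params = fifo_queue_with_dlq(
    "agent-tasks.fifo",
    "arn:aws:sqs:us-east-1:123456789012:agent-tasks-dlq.fifo",
)
```

With boto3 installed and credentials configured, these arguments could be passed as `sqs_client.create_queue(**params)`.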

Implementing fault-tolerant coordination protocols

Circuit breaker patterns protect agents from cascading failures by temporarily blocking requests to unhealthy services. Implement exponential backoff with jitter for retries to prevent thundering herd problems during recovery. AWS Step Functions orchestrates complex agent workflows with declarative error handling and state persistence. Leader election built on DynamoDB conditional writes, typically a lease item with an expiry time, lets a single agent hold the coordinator role at a time and lets another take over when the lease lapses.
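The two patterns named above can be sketched in a few lines of plain Python. This is a minimal version under stated assumptions: "full jitter" is one common variant of backoff with jitter, and the thresholds, class name, and injectable clock are illustrative choices rather than part of any AWS SDK.

```python
import random
import time


def backoff_delays(base: float, cap: float, attempts: int, rng=random.random):
    """Full-jitter delays: attempt n waits rng() * min(cap, base * 2**n) seconds."""
    return [rng() * min(cap, base * 2 ** n) for n in range(attempts)]


class CircuitBreaker:
    """Opens after `threshold` consecutive failures; permits a trial call
    once `reset_after` seconds have passed (the half-open state)."""

    def __init__(self, threshold=3, reset_after=30.0, clock=time.monotonic):
        self.threshold = threshold
        self.reset_after = reset_after
        self.clock = clock
        self.failures = 0
        self.opened_at = None

    def call(self, fn, *args, **kwargs):
        if self.opened_at is not None:
            if self.clock() - self.opened_at < self.reset_after:
                raise RuntimeError("circuit open: call rejected")
            # Half-open: fall through and let one trial call reach the service.
        try:
            result = fn(*args, **kwargs)
        except Exception:
            self.failures += 1
            if self.failures >= self.threshold:
                self.opened_at = self.clock()  # open, or re-open after a failed trial
            raise
        self.failures = 0
        self.opened_at = None  # a success closes the circuit
        return result
```

An agent would wrap each downstream call in `breaker.call(...)` and sleep for the next value from `backoff_delays` between retries, so that a struggling dependency sees fewer, more spread-out requests.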

Managing state synchronization across distributed agents

DynamoDB Streams delivers item-level changes in near real time, ordered per item, so other agent instances can replicate state; consumers should expect eventual rather than immediate consistency. Implement optimistic concurrency control using conditional updates to prevent race conditions in shared agent memory. Amazon ElastiCache provides low-latency distributed caching for frequently accessed state data. Event sourcing patterns capture all state changes as immutable events, which supports system recovery and debugging by replaying the event log.
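The optimistic concurrency idea can be shown without any AWS dependency. The sketch below uses an in-memory store as a stand-in for a table with conditional writes; in DynamoDB the same check would be expressed as a `ConditionExpression` that compares the stored version attribute with the version the writer last read. All class and function names here are hypothetical.

```python
class VersionConflict(Exception):
    """Raised when the stored version differs from the version the writer read."""


class AgentStateStore:
    """In-memory stand-in for a table that supports conditional writes."""

    def __init__(self):
        self._items = {}

    def get(self, key):
        item = self._items.get(key, {"version": 0, "state": {}})
        return item["version"], dict(item["state"])

    def put_if_version(self, key, expected_version, new_state):
        current = self._items.get(key, {"version": 0})["version"]
        if current != expected_version:
            raise VersionConflict(f"expected v{expected_version}, found v{current}")
        self._items[key] = {"version": expected_version + 1, "state": dict(new_state)}
        return expected_version + 1


def update_with_retry(store, key, mutate, max_attempts=5):
    """Read, modify, and conditionally write; re-read and retry on conflict."""
    for _ in range(max_attempts):
        version, state = store.get(key)
        try:
            return store.put_if_version(key, version, mutate(state))
        except VersionConflict:
            continue  # another agent wrote first; retry against fresh state
    raise VersionConflict(f"gave up after {max_attempts} attempts")


store = AgentStateStore()
new_version = update_with_retry(store, "agent-1", lambda s: {**s, "step": 1})
```

A writer holding a stale version is rejected instead of silently overwriting another agent's update, which is the race condition the conditional update is meant to prevent.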

Optimizing latency for real-time agent interactions

Deploy agents in multiple AWS Regions, with cross-Region data replication, to reduce latency for geographically distributed users. Use AWS Global Accelerator to route agent communications through optimal network paths. Placement groups are an EC2 feature rather than an ECS one: for ECS on EC2, launching container instances in a cluster placement group and applying ECS task placement strategies keeps cooperating agents physically close within one Availability Zone, which lowers network latency between them. Lambda Provisioned Concurrency keeps functions initialized to avoid cold starts for time-sensitive agent functions, while VPC endpoints keep traffic on the AWS network for more consistent performance.

Production Deployment Strategies and Orchestration

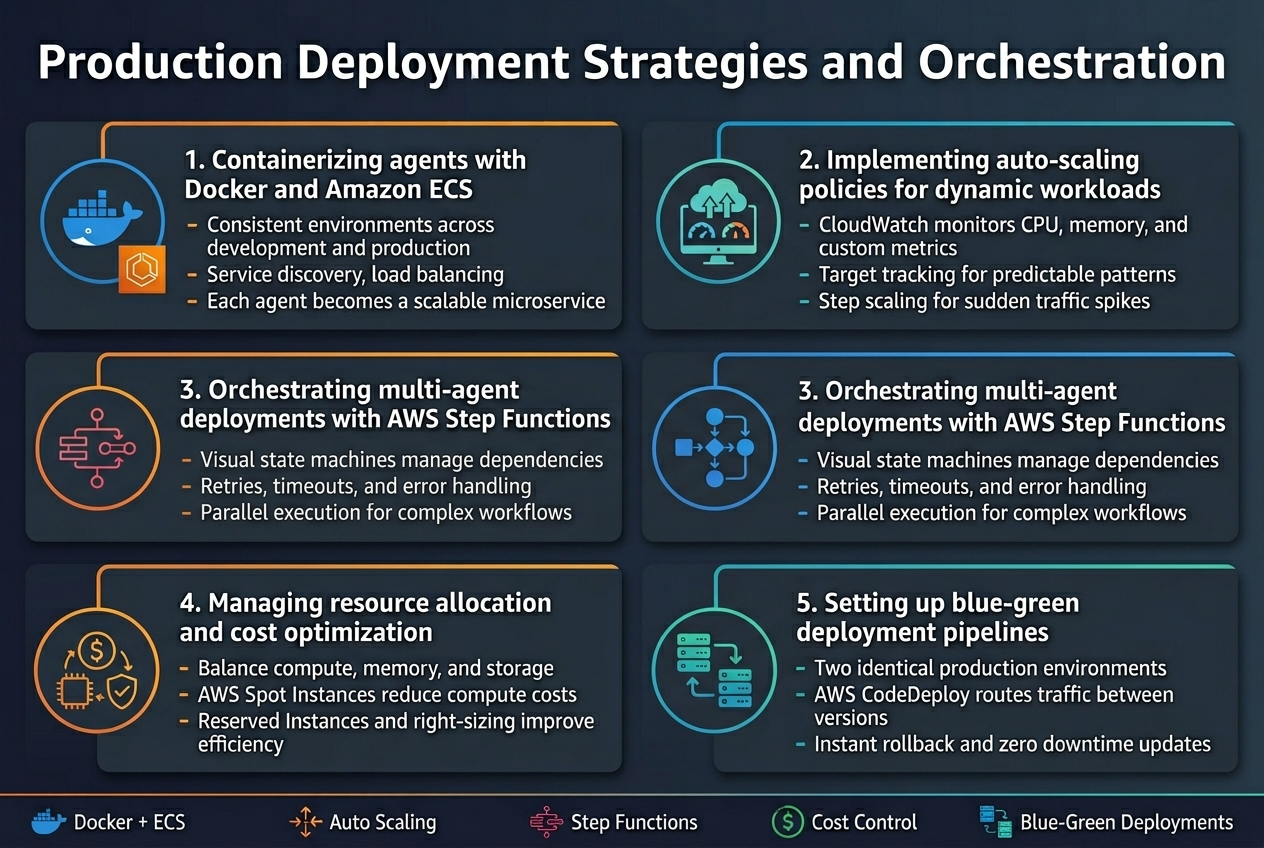

Containerizing agents with Docker and Amazon ECS

Docker containers provide the foundation for deploying agentic AI systems at scale, offering consistent environments across development and production. Amazon ECS simplifies container orchestration by scheduling and placing tasks for you and by integrating with AWS Cloud Map for service discovery and Elastic Load Balancing for traffic distribution. Each agent becomes a microservice with defined resource requirements, enabling independent scaling and updates without affecting the entire system.
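To show what "defined resource requirements" looks like in practice, here is a sketch that builds an ECS task definition for a single agent on Fargate. It only constructs the request dictionary; the image URI, role ARN, log group, and the `AGENT_ROLE` environment variable are placeholder assumptions.

```python
def agent_task_definition(agent_name, image, execution_role_arn,
                          cpu=512, memory=1024,
                          log_group="/ecs/agents", region="us-east-1"):
    """Build arguments for an ECS RegisterTaskDefinition call (nothing is sent)."""
    return {
        "family": f"agent-{agent_name}",
        "networkMode": "awsvpc",            # required for Fargate tasks
        "requiresCompatibilities": ["FARGATE"],
        "cpu": str(cpu),                    # task-level CPU units, passed as a string
        "memory": str(memory),              # task-level memory in MiB, as a string
        "executionRoleArn": execution_role_arn,  # lets ECS pull the image and write logs
        "containerDefinitions": [{
            "name": agent_name,
            "image": image,
            "essential": True,
            "environment": [{"name": "AGENT_ROLE", "value": agent_name}],
            "logConfiguration": {
                "logDriver": "awslogs",
                "options": {
                    "awslogs-group": log_group,
                    "awslogs-region": region,
                    "awslogs-stream-prefix": agent_name,
                },
            },
        }],
    }


task_def = agent_task_definition(
    "planner",
    "123456789012.dkr.ecr.us-east-1.amazonaws.com/planner-agent:latest",
    "arn:aws:iam::123456789012:role/ecsTaskExecutionRole",
)
```

Keeping one task definition per agent type is what allows each agent to be sized, scaled, and redeployed independently.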

Implementing auto-scaling policies for dynamic workloads

Auto-scaling policies keep your agentic AI systems responsive during varying workloads while controlling costs. ECS Service Auto Scaling monitors CloudWatch metrics like CPU usage, memory consumption, and custom application metrics to automatically adjust the number of running agent instances. Target tracking policies work best for predictable patterns, while step scaling handles sudden traffic spikes more effectively.
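A target tracking policy for an ECS service can be expressed as a small request to Application Auto Scaling. The sketch below builds that request as a dictionary without sending it; the cluster name, service name, 60% CPU target, and cooldown values are illustrative assumptions to tune against your own workload.

```python
def cpu_target_tracking_policy(cluster, service, target_cpu_pct=60.0,
                               scale_out_cooldown=60, scale_in_cooldown=300):
    """Build arguments for an Application Auto Scaling PutScalingPolicy call."""
    return {
        "PolicyName": f"{service}-cpu-target",
        "ServiceNamespace": "ecs",
        "ResourceId": f"service/{cluster}/{service}",
        "ScalableDimension": "ecs:service:DesiredCount",
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            "TargetValue": target_cpu_pct,  # keep average CPU near this percentage
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "ECSServiceAverageCPUUtilization"
            },
            # Scale out quickly, scale in slowly to avoid flapping.
            "ScaleOutCooldown": scale_out_cooldown,
            "ScaleInCooldown": scale_in_cooldown,
        },
    }


policy = cpu_target_tracking_policy("agents-cluster", "planner-service")
```

The service must also be registered as a scalable target with minimum and maximum task counts before a policy like this takes effect.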

Orchestrating multi-agent deployments with AWS Step Functions

Step Functions coordinates complex multi-agent workflows through visual state machines that manage dependencies, error handling, and parallel execution. Each agent becomes a task within the workflow, allowing you to build sophisticated agentic AI systems that can adapt to different scenarios. The service applies the retries, timeouts, and error handlers you declare and persists workflow state between steps, reducing the complexity of managing distributed agent communications.
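A workflow of this kind is written in Amazon States Language, which is plain JSON. The sketch below assembles a small definition with a planner task, two research agents running in parallel, and a summarizer, including retry and catch rules. The state names and Lambda ARNs are hypothetical placeholders.

```python
import json

ARN = "arn:aws:lambda:us-east-1:123456789012:function:"  # placeholder account and region


def agent_task(function_name, next_state=None, catch_to=None):
    """One agent as a Task state with a timeout and exponential-backoff retries."""
    state = {
        "Type": "Task",
        "Resource": ARN + function_name,
        "TimeoutSeconds": 60,
        "Retry": [{
            "ErrorEquals": ["States.TaskFailed", "States.Timeout"],
            "IntervalSeconds": 2,
            "MaxAttempts": 3,
            "BackoffRate": 2.0,
        }],
    }
    if catch_to:
        state["Catch"] = [{"ErrorEquals": ["States.ALL"], "Next": catch_to}]
    if next_state:
        state["Next"] = next_state
    else:
        state["End"] = True
    return state


def branch(function_name):
    """A single-state branch for use inside a Parallel state."""
    return {"StartAt": "Run", "States": {"Run": agent_task(function_name)}}


definition = {
    "StartAt": "Plan",
    "States": {
        "Plan": agent_task("planner-agent", next_state="Research", catch_to="HandleFailure"),
        "Research": {
            "Type": "Parallel",
            "Branches": [branch("web-research-agent"), branch("docs-research-agent")],
            # Branch states cannot jump outside their branch, so errors are caught here.
            "Catch": [{"ErrorEquals": ["States.ALL"], "Next": "HandleFailure"}],
            "Next": "Summarize",
        },
        "Summarize": agent_task("summarizer-agent", catch_to="HandleFailure"),
        "HandleFailure": {"Type": "Fail", "Error": "AgentWorkflowFailed",
                          "Cause": "An agent task failed after retries"},
    },
}

definition_json = json.dumps(definition, indent=2)
```

The resulting JSON string is what you would supply as the state machine definition when creating the workflow.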

Managing resource allocation and cost optimization

Resource allocation for agentic AI systems requires balancing performance with cost efficiency across compute, memory, and storage needs. AWS Spot Instances can reduce compute costs by up to 90% for fault-tolerant agents, while Reserved Instances provide predictable pricing for baseline capacity. Right-sizing instances based on actual usage patterns and implementing lifecycle policies for data storage help optimize your AI infrastructure spending.
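The effect of mixing purchase options is simple arithmetic, sketched below. The hourly rate, fleet size, Spot share, and discount are made-up numbers for illustration only; actual Spot discounts vary by instance type, Region, and time.

```python
def blended_hourly_cost(on_demand_rate, instances, spot_fraction, spot_discount):
    """Hourly cost of a fleet in which `spot_fraction` of instances run on Spot
    at `spot_discount` (0..1) below the On-Demand rate."""
    spot_count = instances * spot_fraction
    on_demand_count = instances - spot_count
    spot_rate = on_demand_rate * (1 - spot_discount)
    return on_demand_count * on_demand_rate + spot_count * spot_rate


# Hypothetical fleet: 10 instances at $1.00/hour, 60% on Spot at a 70% discount.
all_on_demand = blended_hourly_cost(1.00, 10, 0.0, 0.0)
mixed = blended_hourly_cost(1.00, 10, 0.6, 0.7)
savings_pct = 100 * (1 - mixed / all_on_demand)
```

In this made-up case the fleet-wide saving is 42%, well below the headline Spot discount, because the On-Demand baseline still accounts for most of the bill.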

Setting up blue-green deployment pipelines

Blue-green deployments eliminate downtime when updating agentic AI systems by maintaining two identical production environments. AWS CodeDeploy automatically routes traffic between environments, allowing you to test new agent versions in production before switching over completely. This approach provides instant rollback capabilities if issues arise, ensuring your production AI deployment maintains high availability during updates and feature releases.

Monitoring and Observability for Agent Systems

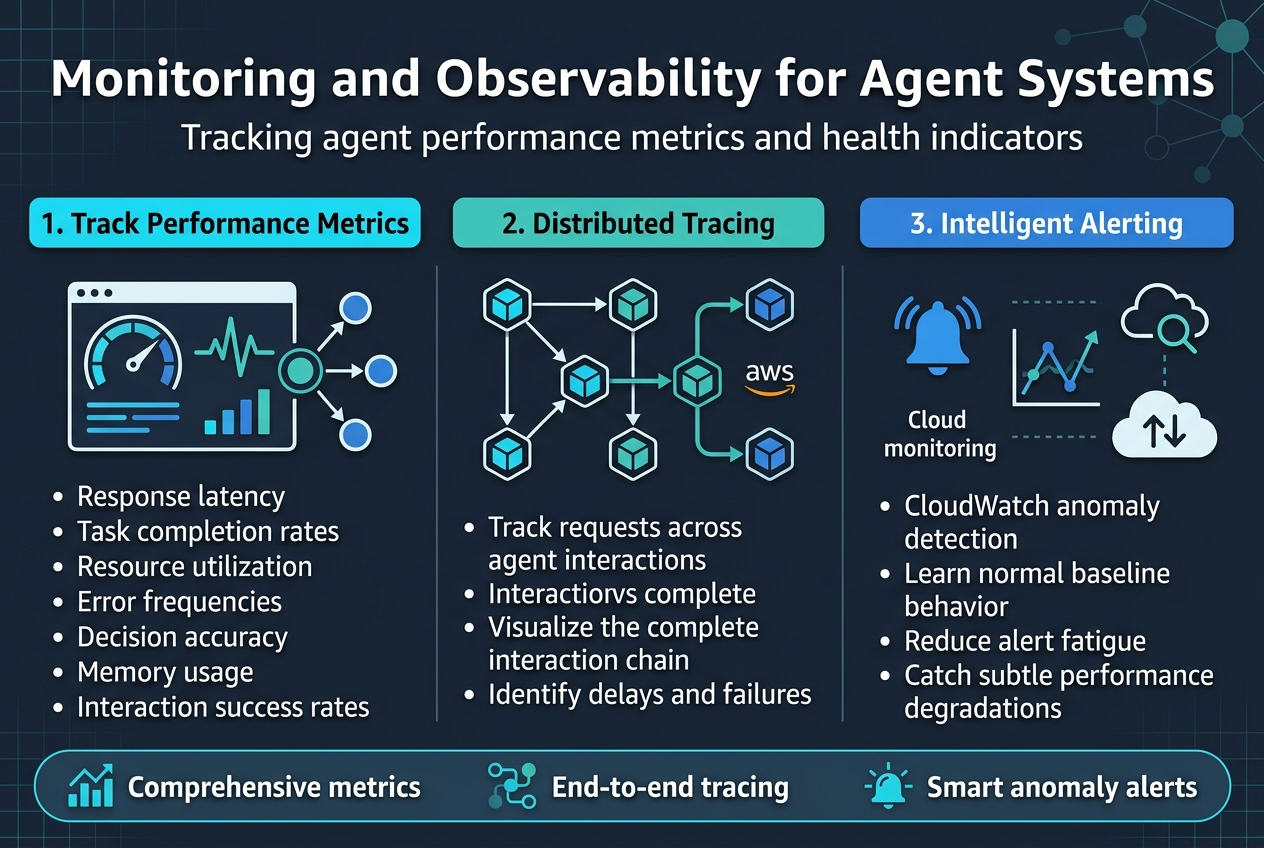

Tracking agent performance metrics and health indicators

Effective monitoring starts with establishing comprehensive metrics that capture both individual agent performance and system-wide health patterns. Key performance indicators include response latency, task completion rates, resource utilization, and error frequencies across your AWS AI architecture. Modern agentic AI systems require tracking agent-specific metrics like decision accuracy, memory usage, and interaction success rates to identify bottlenecks early.
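One lightweight way to publish agent-level metrics is the CloudWatch embedded metric format, in which a structured JSON log line carries its own metric definitions. The sketch below builds such a record; the namespace, the `AgentName` dimension, and the metric names are assumptions chosen for this example.

```python
import json
import time


def emf_record(agent, latency_ms, succeeded, namespace="AgentSystem", timestamp_ms=None):
    """Build one embedded-metric-format record for a completed agent task."""
    return {
        "_aws": {
            "Timestamp": timestamp_ms if timestamp_ms is not None else int(time.time() * 1000),
            "CloudWatchMetrics": [{
                "Namespace": namespace,
                "Dimensions": [["AgentName"]],
                "Metrics": [
                    {"Name": "TaskLatency", "Unit": "Milliseconds"},
                    {"Name": "TaskSuccess", "Unit": "Count"},
                ],
            }],
        },
        # Dimension and metric values sit at the top level of the record.
        "AgentName": agent,
        "TaskLatency": latency_ms,
        "TaskSuccess": 1 if succeeded else 0,
    }


record = emf_record("planner", 182.5, True, timestamp_ms=1700000000000)
log_line = json.dumps(record)  # write this line to stdout or the agent's log stream
```

Averaging `TaskSuccess` over a period gives the task completion rate, and percentile statistics on `TaskLatency` give response latency per agent.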

Implementing distributed tracing across agent interactions

Distributed tracing becomes critical when multiple agents collaborate on complex workflows within your AWS infrastructure. AWS X-Ray provides native support for tracking requests as they flow between different AI agents, allowing you to visualize the complete interaction chain and identify where delays or failures occur in your agentic AI framework.
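Tracing across agents depends on each agent forwarding the same trace identifier to the next. As a sketch of that propagation step, the helpers below generate and parse a tracing header in the X-Ray format (`Root=...;Parent=...;Sampled=...`). In practice the X-Ray SDK or an OpenTelemetry distribution handles this for you; the helper names are hypothetical.

```python
import os
import time


def new_trace_id(now=None):
    """X-Ray style trace ID: version 1, 8 hex digits of epoch seconds, 24 random hex digits."""
    epoch = int(now if now is not None else time.time())
    return f"1-{epoch:08x}-{os.urandom(12).hex()}"


def new_segment_id():
    """16 hex digits identifying the calling agent's segment."""
    return os.urandom(8).hex()


def build_trace_header(trace_id, parent_id, sampled=True):
    """Value for the X-Amzn-Trace-Id header sent to the next agent."""
    return f"Root={trace_id};Parent={parent_id};Sampled={1 if sampled else 0}"


def parse_trace_header(header):
    """Split a tracing header into a dict such as {'Root': ..., 'Parent': ..., 'Sampled': ...}."""
    return dict(part.split("=", 1) for part in header.split(";") if "=" in part)


# Agent A starts a trace and calls agent B with the header attached.
trace_id = new_trace_id()
header = build_trace_header(trace_id, new_segment_id())

# Agent B reuses the Root ID so both segments land in the same trace.
incoming = parse_trace_header(header)
```

Because every agent reuses the `Root` value, the trace view can stitch the individual segments into one end-to-end interaction chain.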

Setting up intelligent alerting for system anomalies

Smart alerting systems use machine learning to distinguish normal operational variation from genuine anomalies in your production deployment. CloudWatch anomaly detection models learn your system’s baseline behavior patterns, which reduces alert fatigue while still catching subtle performance degradations in your multi-agent system before they escalate into critical issues.
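The underlying idea of a learned baseline can be illustrated with a deliberately simple stand-in: a band of a few standard deviations around the recent mean. This is not the model CloudWatch uses, which also accounts for trend and seasonality; it is a minimal sketch, and the sample latencies are invented.

```python
from statistics import mean, stdev


def anomaly_band(history, width=2.0):
    """Expected range: the mean of recent samples plus or minus `width` standard deviations."""
    mu = mean(history)
    sigma = stdev(history)
    return mu - width * sigma, mu + width * sigma


def is_anomalous(value, history, width=2.0):
    """True when `value` falls outside the expected band."""
    low, high = anomaly_band(history, width)
    return value < low or value > high


# Invented recent latencies (ms) for one agent.
recent = [100, 102, 98, 101, 99]
low, high = anomaly_band(recent)
```

A wider band produces fewer alerts at the cost of missing smaller deviations, which is the same trade-off you tune when setting the band width on a managed anomaly detection alarm.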

Security and Governance Considerations

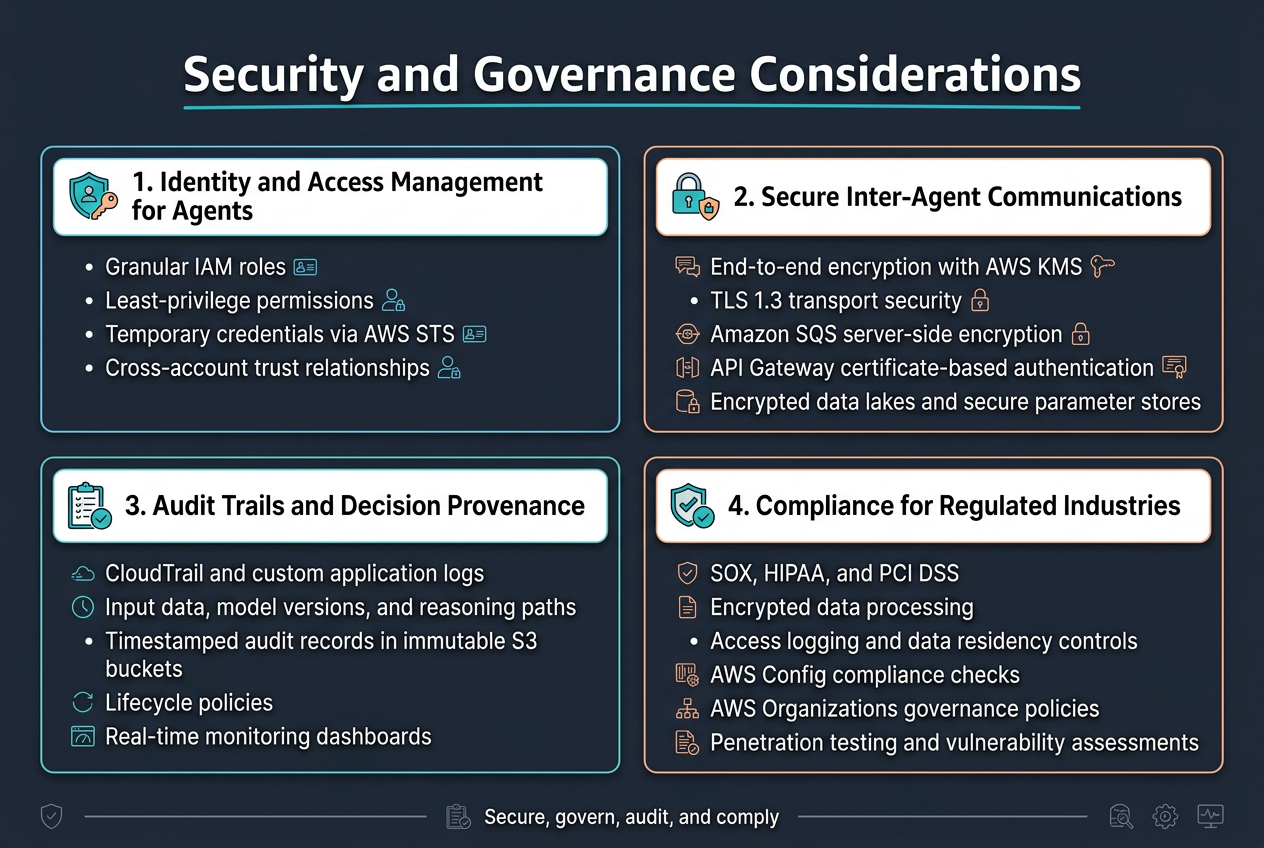

Implementing identity and access management for agents

AWS Identity and Access Management (IAM) forms the cornerstone of secure agentic AI systems. Each agent requires granular permissions through a dedicated role that follows least-privilege principles. Multi-agent deployments on AWS benefit from role-based access control in which agents authenticate with temporary credentials issued by AWS STS. Cross-account trust relationships enable secure resource sharing while maintaining strict boundaries between agent capabilities.
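As an example of least privilege for one agent, the sketch below generates an IAM policy document that lets an agent consume messages from its own queue and nothing else. The queue ARN and statement ID are placeholders.

```python
import json


def queue_consumer_policy(queue_arn):
    """IAM policy document allowing an agent to consume from a single SQS queue."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Sid": "ConsumeOwnQueueOnly",
            "Effect": "Allow",
            "Action": [
                "sqs:ReceiveMessage",
                "sqs:DeleteMessage",
                "sqs:GetQueueAttributes",
            ],
            "Resource": queue_arn,  # one specific queue, never "*"
        }],
    }


policy_doc = queue_consumer_policy("arn:aws:sqs:us-east-1:123456789012:agent-tasks.fifo")
policy_json = json.dumps(policy_doc, indent=2)
```

Attaching a narrowly scoped document like this to each agent's role means a compromised or misbehaving agent cannot read other agents' queues or send messages on their behalf.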

Securing inter-agent communications with encryption

Agent-to-agent communication should be encrypted in transit with TLS (1.2 at minimum, TLS 1.3 where the endpoint supports it) and at rest with keys managed in AWS KMS. Message queues like Amazon SQS support server-side encryption, and API Gateway can require client certificates through mutual TLS on custom domain names. Production deployments also need encrypted data lakes and secure parameter stores to protect sensitive agent configurations and model artifacts.

Establishing audit trails for agent decision-making

Comprehensive logging combines AWS CloudTrail, which records the AWS API calls agents make, with custom application logs that capture agent-level actions CloudTrail cannot see. Decision provenance tracking records input data, model versions, and reasoning paths for each autonomous action. Governance requirements typically include timestamped audit records stored in S3 buckets that are made immutable with S3 Object Lock and managed with lifecycle policies. Real-time monitoring dashboards surface anomalous agent behaviors and decision patterns.
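A decision provenance record can be as simple as a JSON document with a digest. The sketch below also links each record to the previous one by hash so that tampering is detectable. Hash chaining is an illustrative technique added here, not something the services above require, and the field names are assumptions.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # prev_hash used by the first record in a chain


def _digest(body):
    """SHA-256 over a canonical (sorted-key) JSON encoding of the record body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def decision_record(agent, model_version, inputs, decision, prev_hash, timestamp=None):
    """Build one audit record linked to the previous record by its hash."""
    body = {
        "agent": agent,
        "model_version": model_version,
        "inputs": inputs,
        "decision": decision,
        "timestamp": timestamp or datetime.now(timezone.utc).isoformat(),
        "prev_hash": prev_hash,
    }
    return {**body, "hash": _digest(body)}


def verify_chain(records):
    """True if every record's hash matches its content and links to its predecessor."""
    prev = GENESIS
    for rec in records:
        body = {k: v for k, v in rec.items() if k != "hash"}
        if rec["prev_hash"] != prev or rec["hash"] != _digest(body):
            return False
        prev = rec["hash"]
    return True


first = decision_record("planner", "v1.2", {"ticket": 42}, "route_to_billing", GENESIS)
second = decision_record("billing", "v0.9", {"ticket": 42}, "issue_refund", first["hash"])
chain_ok = verify_chain([first, second])
```

Records like these could be written as objects to a locked S3 bucket, with `verify_chain` run during audits to confirm that nothing was altered or removed from the middle of the sequence.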

Compliance frameworks for regulated industries

Financial and healthcare sectors require specialized compliance measures for agentic AI deployments. Requirements under SOX, HIPAA, and PCI DSS commonly translate into encrypted data processing, access logging, and data residency controls. AWS Config rules automate compliance checking, while AWS Organizations enforces governance policies, for example through service control policies, across multi-account environments. Regular penetration testing and vulnerability assessments validate the security posture.

Building production-ready agentic AI systems on AWS requires careful planning across multiple layers, from understanding the core architecture principles to implementing robust security measures. The key is creating a solid foundation with AWS services that can handle the complex communication patterns between agents while maintaining reliability and performance. Your infrastructure choices, deployment strategies, and monitoring setup will make or break your system when it hits real-world traffic and complexity.

Security and governance can’t be afterthoughts in these systems—they need to be baked in from day one. Start small with a well-architected proof of concept, focus on getting your agent communication patterns right, and build out your observability stack early. The AWS ecosystem gives you all the tools you need, but success comes down to how thoughtfully you combine them and how well you prepare for the unexpected behaviors that emerge when intelligent agents start working together at scale.