Building a robust RAG pipeline on AWS requires more than connecting a few AI services together. Many organizations struggle to scale their retrieval-augmented generation (RAG) systems while maintaining performance, controlling costs, and ensuring reliability at enterprise scale.

This comprehensive guide targets AI engineers, cloud architects, and DevOps teams who need to deploy production-ready RAG systems on Amazon Web Services. You’ll discover proven strategies for handling increased query volumes, managing infrastructure costs, and maintaining system reliability as your RAG applications grow.

We’ll explore building scalable RAG infrastructure that can handle enterprise workloads without breaking the bank. You’ll learn advanced monitoring and observability solutions that give you deep insights into your RAG pipeline performance, helping you spot bottlenecks before they impact users. Finally, we’ll cover essential performance optimization techniques and security best practices that keep your RAG systems running smoothly while meeting compliance requirements.

Ready to transform your RAG prototype into a production-grade AWS RAG architecture? Let’s dive into the strategies that separate successful implementations from costly failures.

Understanding RAG Pipeline Architecture on AWS

Core Components and Data Flow Mechanisms

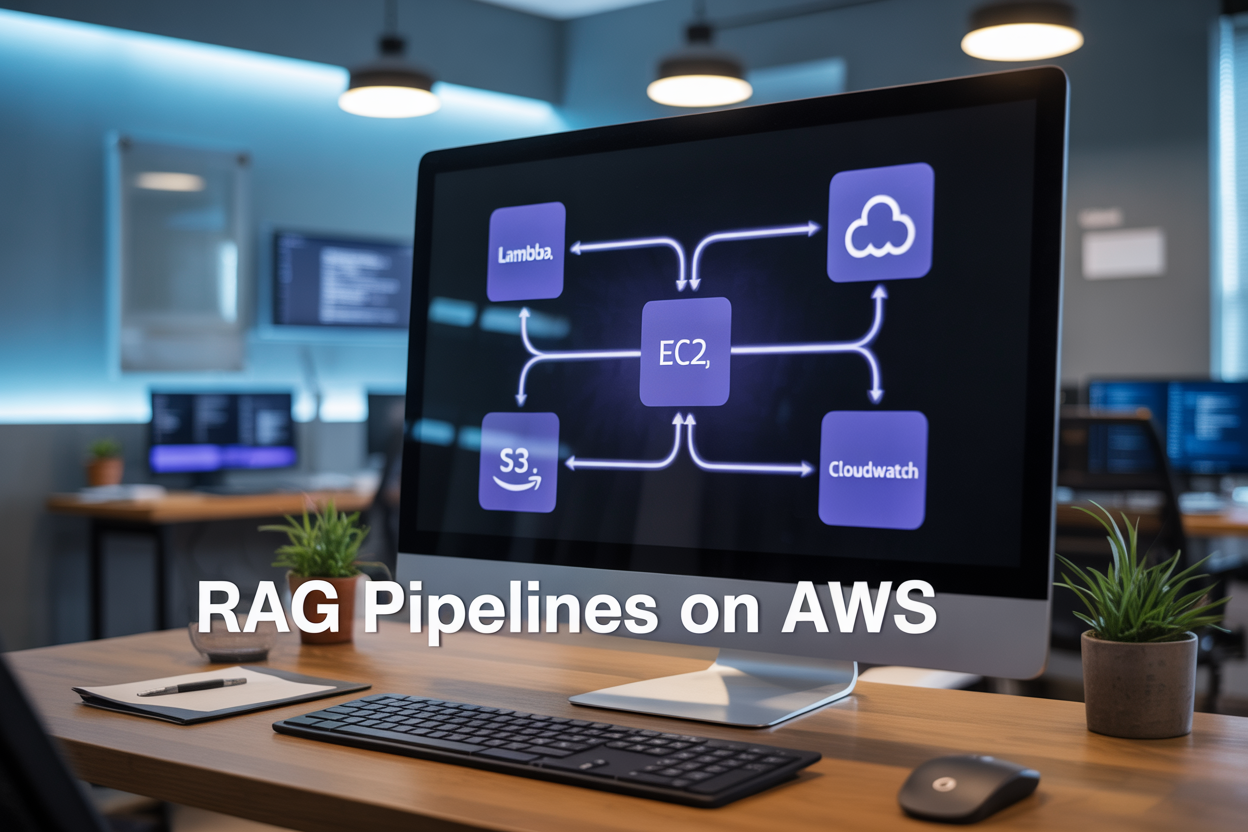

RAG pipelines on AWS consist of three primary components: document ingestion systems, vector storage layers, and query processing engines. The data flow begins when documents land in Amazon S3, are chunked and preprocessed by Lambda functions, and are then converted into embeddings by SageMaker endpoints. These embeddings are stored in a vector database such as Amazon OpenSearch Service or a third-party solution, creating a searchable knowledge base that enables contextual retrieval during inference.
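The chunking step in that flow can be sketched in a few lines. This is a minimal, word-based illustration only: the function name `chunk_text` and the default sizes are assumptions, and a production pipeline would more likely chunk by tokens or by document structure.

```python
def chunk_text(text: str, chunk_size: int = 200, overlap: int = 50) -> list[str]:
    """Split text into chunks of at most `chunk_size` words.

    Consecutive chunks share `overlap` words so that sentences cut at a
    boundary still appear whole in at least one chunk.
    """
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        # Stop once this chunk already reaches the end of the document.
        if start + chunk_size >= len(words):
            break
    return chunks
```

Larger overlaps improve recall at chunk boundaries but increase the number of embeddings you store and pay for.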

AWS Service Integration for Retrieval Systems

A RAG architecture on AWS combines several managed services. Amazon Bedrock provides foundation model access, while SageMaker hosts custom embedding models and inference endpoints. API Gateway handles request routing, Lambda functions orchestrate retrieval logic, and CloudWatch monitors performance metrics. This native integration reduces infrastructure complexity, and many of these services scale with query volume with little manual intervention once scaling policies are in place.

Vector Database Selection and Configuration

Choosing the right vector database impacts RAG performance significantly. Amazon OpenSearch Service offers native AWS integration with built-in security and scaling features, which makes it a common choice for enterprise deployments. Alternative solutions like Pinecone or Weaviate provide specialized vector search capabilities but require additional configuration. Key factors include dimensionality support, similarity search algorithms, and integration complexity with your existing AWS infrastructure.
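To make "similarity search" concrete, here is a brute-force cosine-similarity lookup in plain Python. It is only a sketch of what a vector database computes conceptually; real engines use approximate indexes (such as HNSW) rather than scanning every vector, and the names `cosine_similarity` and `top_k` are illustrative.

```python
import math


def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors (0.0 if either is all zeros)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def top_k(query: list[float], index: dict[str, list[float]], k: int = 3):
    """Return the k (doc_id, score) pairs most similar to the query."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in index.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```

The cost of this exhaustive scan grows linearly with both the number of vectors and their dimensionality, which is why dimensionality support and index algorithm matter when you compare databases.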

Embedding Model Deployment Strategies

Deploying embedding models on AWS requires careful consideration of latency, cost, and accuracy trade-offs. SageMaker real-time endpoints provide low-latency inference for high-frequency applications, while batch transform jobs handle bulk processing efficiently. Amazon Bedrock offers pre-trained embedding models with managed scaling, reducing operational overhead. Custom model deployment through SageMaker gives you control over model selection and fine-tuning but demands more infrastructure management expertise and monitoring setup.
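As a rough sketch of the managed option, the function below requests an embedding through the Bedrock runtime `invoke_model` call. It assumes an Amazon Titan text embedding model (the model ID shown is an assumption; use whichever model your account has enabled) and takes the client as a parameter, so in real use you would pass `boto3.client("bedrock-runtime")`.

```python
import json

# Assumed model ID for illustration; check which models are enabled in your account.
TITAN_MODEL_ID = "amazon.titan-embed-text-v2:0"


def embed_text(client, text: str, model_id: str = TITAN_MODEL_ID) -> list[float]:
    """Return the embedding vector for `text` from a Bedrock runtime client."""
    response = client.invoke_model(
        modelId=model_id,
        body=json.dumps({"inputText": text}),
        contentType="application/json",
        accept="application/json",
    )
    # The response body is a stream containing a JSON document.
    payload = json.loads(response["body"].read())
    return payload["embedding"]
```

Passing the client in keeps the function easy to test with a stub and lets you swap in a SageMaker-backed implementation later without touching callers.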

Building Scalable RAG Infrastructure

Auto-scaling Vector Databases for Dynamic Workloads

Managing fluctuating query loads requires smart auto-scaling for vector databases on AWS. Amazon OpenSearch Serverless adjusts capacity automatically for vector search collections, whereas provisioned OpenSearch Service domains need capacity planning or scripted resizing. Pinecone’s serverless architecture also scales without manual intervention. Configure CloudWatch alarms to trigger scaling events when query latency exceeds thresholds or when memory utilization peaks during high-traffic periods.

For hybrid deployments, Amazon RDS or Aurora with the pgvector extension lets you scale compute (instance size and read replicas) separately from storage. Set up custom scaling policies that consider both retrieval frequency and embedding dimensionality. This approach helps your RAG infrastructure maintain consistent performance while reducing costs during low-usage periods.
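A custom scaling policy ultimately boils down to a rule that maps an observed metric to a capacity. The sketch below uses a simple proportional rule similar in spirit to target tracking; it is not the exact algorithm any AWS service runs, and the function name and default limits are assumptions.

```python
import math


def desired_capacity(
    current_capacity: int,
    metric_value: float,
    target_value: float,
    min_capacity: int = 1,
    max_capacity: int = 10,
) -> int:
    """Scale capacity in proportion to how far the metric is from its target.

    Example metric: p95 query latency in ms, or average CPU utilization.
    """
    if target_value <= 0:
        raise ValueError("target_value must be positive")
    raw = math.ceil(current_capacity * metric_value / target_value)
    # Clamp to the configured bounds so a metric spike cannot scale without limit.
    return max(min_capacity, min(max_capacity, raw))
```

For example, four nodes running at 90 ms against a 60 ms target would scale to six nodes under this rule. In practice you would add cooldown periods so the system does not oscillate.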

Distributed Processing with Amazon ECS and Lambda

Amazon ECS orchestrates containerized RAG components across multiple availability zones, enabling horizontal scaling of embedding generation and document processing tasks. Deploy your retrieval services using ECS Fargate for serverless container management, automatically distributing workloads based on CPU and memory requirements. Configure service discovery to route requests efficiently between embedding models and vector stores.

AWS Lambda handles lightweight preprocessing and post-processing tasks within your RAG scaling strategy. Use Lambda functions for document chunking, metadata extraction, and response formatting. Combine ECS for sustained workloads with Lambda for burst processing to create a resilient architecture that adapts to varying computational demands while keeping latency low.
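A minimal Lambda entry point for the ingestion side might look like the sketch below. It only parses the S3 event notification and returns the objects it would process; the download, chunking, and hand-off steps are left as a comment because they depend on your pipeline, and the helper name `extract_s3_objects` is an assumption.

```python
from urllib.parse import unquote_plus


def extract_s3_objects(event: dict) -> list[tuple[str, str]]:
    """Return (bucket, key) pairs from an S3 event notification."""
    objects = []
    for record in event.get("Records", []):
        s3 = record["s3"]
        # Object keys arrive URL-encoded in S3 event notifications.
        objects.append((s3["bucket"]["name"], unquote_plus(s3["object"]["key"])))
    return objects


def handler(event, context):
    """Lambda entry point: list the uploaded documents to be processed."""
    objects = extract_s3_objects(event)
    # A real handler would download each object here, chunk it, and forward
    # the chunks to the embedding stage (for example through a queue).
    return {
        "statusCode": 200,
        "objects": [{"bucket": bucket, "key": key} for bucket, key in objects],
    }
```

Keeping the parsing logic in its own function makes it easy to unit test without deploying anything.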

Load Balancing Strategies for High-Throughput Applications

Application Load Balancer (ALB) distributes incoming RAG queries across multiple service instances using round-robin or least-outstanding-requests routing. Configure target groups for different components like embedding services, vector databases, and language models. Health checks ensure traffic only reaches healthy instances, while sticky sessions maintain context for multi-turn conversations. Cross-zone load balancing spreads traffic evenly across Availability Zones within a region, which improves fault tolerance if one zone is impaired.

Implement weighted routing to gradually shift traffic during deployments or A/B testing scenarios. Amazon API Gateway adds another layer of load distribution with built-in caching and rate limiting capabilities. For global applications, CloudFront CDN caches frequently accessed embeddings and responses, reducing load on backend services while improving response times for users worldwide.
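Weighted routing itself is handled by the load balancer, but the idea is easy to see in a small simulation. This sketch picks a target group in proportion to its weight; the target names and the `pick_target` function are hypothetical.

```python
import random


def pick_target(weights: dict[str, int], rng=random) -> str:
    """Choose a target name with probability proportional to its weight."""
    names = list(weights)
    return rng.choices(names, weights=[weights[name] for name in names], k=1)[0]


# Hypothetical rollout: shift traffic to the new version in stages.
ROLLOUT_STAGES = [
    {"blue": 100, "green": 0},
    {"blue": 90, "green": 10},
    {"blue": 50, "green": 50},
    {"blue": 0, "green": 100},
]
```

Moving through the stages only when error rates and latency for the new version stay healthy is what turns weighted routing into a safe canary deployment.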

Advanced Monitoring and Observability Solutions

Real-time Performance Metrics with CloudWatch

CloudWatch serves as the backbone for RAG monitoring and observability, capturing critical metrics like embedding generation latency, vector search response times, and LLM inference duration. Most of these are custom metrics that your application publishes itself. You can also track query throughput, memory consumption across Lambda functions, and database connection pools to identify bottlenecks before they impact user experience.
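One way to publish per-stage latency is to time each stage and build a payload for CloudWatch's `put_metric_data` call. The sketch below only builds the payload; the namespace `RagPipeline`, the metric name `StageLatency`, and the `Stage` dimension are assumptions chosen for illustration.

```python
import time
from contextlib import contextmanager


def build_latency_metric(stage: str, latency_ms: float, namespace: str = "RagPipeline") -> dict:
    """Build keyword arguments for cloudwatch.put_metric_data(**kwargs)."""
    return {
        "Namespace": namespace,
        "MetricData": [
            {
                "MetricName": "StageLatency",
                "Dimensions": [{"Name": "Stage", "Value": stage}],
                "Value": latency_ms,
                "Unit": "Milliseconds",
            }
        ],
    }


@contextmanager
def timed(stage: str, sink: list):
    """Time the enclosed block and append a metric payload to `sink`."""
    start = time.perf_counter()
    try:
        yield
    finally:
        elapsed_ms = (time.perf_counter() - start) * 1000.0
        sink.append(build_latency_metric(stage, elapsed_ms))
```

In a service you would wrap each stage in `with timed("vector_search", sink):` and periodically flush the collected payloads with a boto3 CloudWatch client, batching where possible to limit API calls.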

Custom Dashboard Creation for RAG-Specific KPIs

Building custom dashboards transforms raw metrics into actionable insights for your RAG monitoring strategy. Create visualizations that display semantic search accuracy, chunk retrieval success rates, and end-to-end query completion times. These dashboards help teams quickly spot performance degradation and correlate issues across different pipeline components.
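The KPIs behind such a dashboard can be computed from per-query records. This sketch assumes each record has `latency_ms` and `chunks_returned` fields (hypothetical names) and uses the nearest-rank definition of a percentile.

```python
import math


def percentile(values: list[float], pct: float) -> float:
    """Nearest-rank percentile: the smallest value with at least pct% at or below it."""
    if not values:
        raise ValueError("values must not be empty")
    ordered = sorted(values)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(queries: list[dict]) -> dict:
    """Aggregate per-query records into dashboard-level KPIs."""
    latencies = [q["latency_ms"] for q in queries]
    hits = sum(1 for q in queries if q["chunks_returned"] > 0)
    return {
        "p50_ms": percentile(latencies, 50),
        "p95_ms": percentile(latencies, 95),
        "retrieval_success_rate": hits / len(queries),
    }
```

Tracking p95 alongside the median matters because averages hide the slow queries that users actually notice.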

Alert Configuration for System Health Management

Smart alerting prevents minor issues from becoming major outages in your RAG pipeline. Configure thresholds for response time spikes, error rate increases, and resource exhaustion scenarios. Set up multi-level alerts that escalate based on severity, ensuring the right team members receive notifications when vector databases become unresponsive or embedding services hit capacity limits.
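Multi-level alerting can be expressed as two alarms on the same metric with different thresholds and notification targets. The sketch below builds keyword arguments for CloudWatch's `put_metric_alarm`; the metric name, namespace, and alarm names are illustrative assumptions, and the SNS topic ARNs are supplied by you.

```python
def build_latency_alarm(
    alarm_name: str,
    stage: str,
    threshold_ms: float,
    sns_topic_arn: str,
    namespace: str = "RagPipeline",
) -> dict:
    """Build keyword arguments for cloudwatch.put_metric_alarm(**kwargs)."""
    return {
        "AlarmName": alarm_name,
        "Namespace": namespace,
        "MetricName": "StageLatency",  # assumed custom metric name
        "Dimensions": [{"Name": "Stage", "Value": stage}],
        "ExtendedStatistic": "p95",
        "Period": 60,  # seconds per datapoint
        "EvaluationPeriods": 3,  # require three consecutive breaches
        "Threshold": threshold_ms,
        "ComparisonOperator": "GreaterThanThreshold",
        "TreatMissingData": "notBreaching",
        "AlarmActions": [sns_topic_arn],
    }


def build_alarm_levels(stage, warn_ms, crit_ms, warn_topic_arn, crit_topic_arn) -> list[dict]:
    """Return a warning alarm and a stricter critical alarm for one stage."""
    return [
        build_latency_alarm(f"{stage}-latency-warning", stage, warn_ms, warn_topic_arn),
        build_latency_alarm(f"{stage}-latency-critical", stage, crit_ms, crit_topic_arn),
    ]
```

Routing the warning alarm to a team channel and the critical alarm to an on-call pager is one common way to implement escalation.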

Cost Tracking and Resource Utilization Analysis

Monitoring costs becomes crucial as RAG workloads consume significant compute resources across multiple AWS services. Track spending patterns for SageMaker endpoints, OpenSearch clusters, and Lambda invocations to identify optimization opportunities. Analyze usage patterns to right-size instances and implement auto-scaling policies that balance performance with cost efficiency.

Performance Optimization Techniques

Query Response Time Reduction Methods

Optimizing query response times in a RAG pipeline on AWS starts with strategic indexing and retrieval mechanisms. Use Amazon OpenSearch Service with proper field mappings and implement hybrid search combining dense and sparse vectors. Configure connection pooling for database queries and leverage Amazon ElastiCache for frequently accessed embeddings. Running independent retrieval operations in parallel on AWS Lambda or ECS containers can reduce latency substantially, because the total wait approaches the slowest call rather than the sum of all calls.
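Here is one way the parallel and hybrid ideas can fit together: run a dense and a sparse retriever concurrently, then merge their ranked lists with reciprocal rank fusion (RRF). RRF is a technique chosen for this sketch, not something the services above mandate, and the retriever callables are placeholders for your own search clients.

```python
from concurrent.futures import ThreadPoolExecutor


def retrieve_parallel(query: str, retrievers: dict, max_workers: int = 4) -> dict:
    """Run each retriever(query) in its own thread and collect ranked ID lists."""
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        futures = {name: pool.submit(fn, query) for name, fn in retrievers.items()}
        return {name: future.result() for name, future in futures.items()}


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge ranked lists: each document scores sum(1 / (k + rank))."""
    scores: dict[str, float] = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)
```

Threads suit this case because the retrievers spend most of their time waiting on network calls. Documents that rank well in both lists rise to the top without any need to normalize the two scoring scales.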

Memory and Compute Resource Allocation

Right-sizing compute resources prevents bottlenecks while controlling costs in your RAG infrastructure. Monitor memory usage patterns using CloudWatch metrics and scale Amazon EC2 instances based on actual workload demands. GPU instances like the P4 or G5 families accelerate embedding generation, while compute-optimized instances handle retrieval tasks efficiently. Auto Scaling Groups automatically adjust capacity during peak usage periods.

Caching Strategies for Frequently Accessed Data

Smart caching reduces redundant computations and database calls in a RAG pipeline. Implement multi-layer caching using Redis clusters for embedding vectors and DynamoDB for metadata. Cache generated responses at the application layer to serve identical queries instantly. Set appropriate TTL values based on data freshness requirements and implement cache warming strategies for predictable query patterns.
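The TTL behavior described above can be illustrated with a tiny in-process cache. This is a sketch of the concept only; a shared cache such as Redis provides expiry natively, and the class name `TTLCache` is an assumption.

```python
import time


class TTLCache:
    """In-memory cache whose entries expire `ttl_seconds` after being set."""

    def __init__(self, ttl_seconds: float, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock  # injectable so tests can control time
        self._store: dict = {}

    def set(self, key, value) -> None:
        self._store[key] = (value, self._clock() + self._ttl)

    def get(self, key):
        """Return the cached value, or None if missing or expired."""
        entry = self._store.get(key)
        if entry is None:
            return None
        value, expires_at = entry
        if self._clock() >= expires_at:
            del self._store[key]  # drop stale entries lazily on read
            return None
        return value
```

A short TTL suits answers over fast-changing documents, while embeddings of static text can usually be cached much longer.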

Batch Processing Optimization for Large Datasets

Process large document collections efficiently using Amazon EMR or AWS Batch for embedding generation. Partition datasets by document type or date ranges to enable parallel processing across multiple workers. Use Amazon S3 Transfer Acceleration for faster data ingestion and implement checkpointing to resume interrupted batch jobs. Configure optimal batch sizes based on memory constraints and processing time targets.
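Checkpointing can be as simple as recording the index of the next unprocessed batch after each batch succeeds. The sketch below writes the checkpoint to a local JSON file for clarity; in a real batch job you would more likely store it in S3 or DynamoDB, and the function name is an assumption.

```python
import json
import os


def process_in_batches(items: list, batch_size: int, checkpoint_path: str, process_batch) -> None:
    """Process items in batches, resuming from the last completed batch."""
    start = 0
    if os.path.exists(checkpoint_path):
        with open(checkpoint_path) as fh:
            start = json.load(fh)["next_index"]

    for i in range(start, len(items), batch_size):
        process_batch(items[i:i + batch_size])
        # Write to a temp file and rename so a crash never leaves a
        # half-written checkpoint behind.
        tmp_path = checkpoint_path + ".tmp"
        with open(tmp_path, "w") as fh:
            json.dump({"next_index": i + batch_size}, fh)
        os.replace(tmp_path, checkpoint_path)
```

Because the checkpoint is written after the batch completes, a batch interrupted midway is retried in full, so `process_batch` should be idempotent (for example, upserting embeddings by document ID).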

Model Quantization and Compression Benefits

Model quantization reduces memory footprint and inference time while maintaining acceptable accuracy levels. Convert models to INT8 or INT4 precision with tools such as ONNX Runtime, or compile them for AWS Inferentia through the AWS Neuron SDK, depending on the data types the target hardware supports. Consider dynamic quantization for embedding models and static quantization for language models. Compressed models can run on smaller instance types, which reduces operating costs, provided you validate retrieval and answer quality after quantization.
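The memory saving is simple arithmetic: weight memory is roughly the parameter count times the bytes per parameter. The helper below is a back-of-the-envelope sketch that covers weights only; it ignores activations, caches, and the small overhead of quantization scales.

```python
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gib(num_params: int, precision: str) -> float:
    """Approximate memory needed for model weights, in GiB."""
    return num_params * BYTES_PER_PARAM[precision] / 1024**3
```

Going from FP16 to INT8 halves the weight footprint, and INT4 halves it again, which is often the difference between needing a larger instance and fitting on a smaller one.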

Cost Management and Resource Efficiency

Right-sizing Infrastructure Components

Choosing the right instance types for your RAG pipeline on AWS directly impacts both performance and costs. Start by analyzing your workload patterns – text preprocessing typically needs compute-optimized instances, while vector similarity searches benefit from memory-optimized configurations. Auto Scaling Groups automatically adjust capacity based on demand, preventing over-provisioning during low-traffic periods.

Regular performance monitoring reveals when components are underutilized or struggling. AWS Compute Optimizer provides recommendations for instance type changes; savings of 20-30% are often cited, though actual results depend on the workload. Consider Graviton-based instances for CPU-bound tasks, as they often deliver better price-performance than comparable x86 instances.

Spot Instance Utilization for Training Workloads

Training embedding models or fine-tuning language models is a good fit for Spot Instances, which AWS prices at up to 90% below On-Demand rates. Spot Instances work well for batch processing tasks where interruptions are manageable through checkpointing strategies.

Implement mixed instance policies combining Spot and On-Demand instances for critical training jobs. AWS Batch can rerun interrupted jobs on available capacity when you configure a retry strategy, but it does not migrate a running job, so the job itself must be able to resume. For long-running training sessions, save model checkpoints frequently to S3 so that training can restart from the latest checkpoint when a Spot Instance is reclaimed.

Storage Optimization for Vector Embeddings

Vector embeddings consume significant storage space, which makes storage a key part of RAG cost optimization on AWS. Choose appropriate storage tiers based on access patterns – frequently queried embeddings belong in EBS gp3 volumes, while archival vectors can use S3 Intelligent-Tiering for automatic cost reduction.

Compress vector embeddings using techniques like quantization or dimensionality reduction, and measure recall afterwards to confirm that search quality stays acceptable. OpenSearch Service with UltraWarm provides cost-effective storage for older embedding data. Regular cleanup of unused vectors and duplicate embeddings prevents storage bloat that silently increases monthly bills.
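To show what embedding quantization does, the sketch below applies symmetric scalar quantization to a single vector and estimates raw storage before and after. It is a simplified illustration with assumed function names; vector databases implement their own quantization schemes.

```python
def quantize_int8(vector: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one scale per vector."""
    max_abs = max((abs(x) for x in vector), default=0.0)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [round(x / scale) for x in vector]
    return codes, scale


def dequantize(codes: list[int], scale: float) -> list[float]:
    """Recover approximate float values from the integer codes."""
    return [code * scale for code in codes]


def raw_storage_bytes(num_vectors: int, dim: int, bytes_per_value: int) -> int:
    """Storage for the vector values alone, excluding index overhead."""
    return num_vectors * dim * bytes_per_value
```

Storing one byte per value instead of a four-byte float cuts raw vector storage by roughly 4x, at the cost of a rounding error of at most half a scale step per dimension.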

Security and Compliance Implementation

Data Encryption in Transit and at Rest

Securing your RAG pipeline on AWS starts with robust encryption strategies. AWS KMS provides centralized key management for encrypting vector databases, knowledge bases, and model artifacts. All data flows between retrieval systems and generation models must use TLS 1.2 or later. Configure S3 bucket encryption for document storage and enable encryption at rest for Amazon OpenSearch Service domains storing embeddings.
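Both halves of this (in transit and at rest) can be expressed as small configuration documents. The sketch below builds a bucket policy that denies non-TLS requests and the keyword arguments for an SSE-KMS upload with `put_object`; the bucket name and KMS key ID are values you supply, and the function names are assumptions.

```python
def deny_insecure_transport_policy(bucket: str) -> dict:
    """Bucket policy that rejects any request not made over TLS."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyInsecureTransport",
                "Effect": "Deny",
                "Principal": "*",
                "Action": "s3:*",
                "Resource": [f"arn:aws:s3:::{bucket}", f"arn:aws:s3:::{bucket}/*"],
                "Condition": {"Bool": {"aws:SecureTransport": "false"}},
            }
        ],
    }


def sse_kms_put_kwargs(bucket: str, key: str, body: bytes, kms_key_id: str) -> dict:
    """Keyword arguments for s3.put_object(**kwargs) with SSE-KMS encryption."""
    return {
        "Bucket": bucket,
        "Key": key,
        "Body": body,
        "ServerSideEncryption": "aws:kms",
        "SSEKMSKeyId": kms_key_id,
    }
```

Note that `aws:SecureTransport` only checks that TLS is used at all; enforcing a minimum TLS version needs an additional condition.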

Access Control and Identity Management

AWS IAM policies control who can access your RAG pipeline components. Create service-specific roles for different pipeline stages – separate permissions for document ingestion, vector search, and model inference. Implement least-privilege access using resource-based policies and condition keys. Multi-factor authentication adds another security layer for administrative access to your AWS RAG architecture.
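As an illustration of stage-specific least privilege, the sketch below builds two narrow identity policies: one that lets the ingestion role read only one S3 prefix, and one that lets the inference role invoke only one Bedrock foundation model. The function names, bucket, prefix, and model ID are assumptions for the example.

```python
def ingestion_role_policy(bucket: str, prefix: str) -> dict:
    """Allow reading source documents under a single S3 prefix only."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "ReadSourceDocuments",
                "Effect": "Allow",
                "Action": ["s3:GetObject"],
                "Resource": f"arn:aws:s3:::{bucket}/{prefix}*",
            }
        ],
    }


def inference_role_policy(region: str, model_id: str) -> dict:
    """Allow invoking one specific Bedrock foundation model only."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeSingleModel",
                "Effect": "Allow",
                "Action": ["bedrock:InvokeModel"],
                "Resource": f"arn:aws:bedrock:{region}::foundation-model/{model_id}",
            }
        ],
    }
```

Keeping each role's policy this small means a compromised ingestion function cannot call models, and a compromised inference service cannot read raw documents.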

Audit Logging for Regulatory Requirements

CloudTrail records management API calls across your RAG infrastructure by default. Data events, such as S3 object-level access and Lambda function invocations, must be enabled explicitly on a trail, and database query logging is configured in the database service itself. CloudWatch Logs aggregates application-level events from retrieval and generation processes. Configure log retention policies that meet your compliance requirements; retention periods vary by regulation and jurisdiction, and multi-year retention (for example, seven years for some financial records) is common, so confirm the exact figures with your compliance team.
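CloudWatch Logs only accepts specific retention values, so a required period has to be rounded up to the next allowed setting. The sketch below does that and builds arguments for `put_retention_policy`; the list of allowed values is reproduced from the API documentation as best I know it and should be verified before use.

```python
import bisect
import math

# Allowed retentionInDays values (assumption: verify against current API docs).
ALLOWED_RETENTION_DAYS = [
    1, 3, 5, 7, 14, 30, 60, 90, 120, 150, 180, 365, 400, 545,
    731, 1096, 1827, 2192, 2557, 2922, 3288, 3653,
]


def retention_days_for(years: float) -> int:
    """Smallest allowed retention that covers at least `years` of logs."""
    required = math.ceil(years * 365.25)  # 365.25 accounts for leap years
    idx = bisect.bisect_left(ALLOWED_RETENTION_DAYS, required)
    if idx == len(ALLOWED_RETENTION_DAYS):
        raise ValueError("requirement exceeds the longest fixed retention setting")
    return ALLOWED_RETENTION_DAYS[idx]


def retention_policy_kwargs(log_group: str, years: float) -> dict:
    """Keyword arguments for logs.put_retention_policy(**kwargs)."""
    return {"logGroupName": log_group, "retentionInDays": retention_days_for(years)}
```

Rounding up rather than down keeps you on the safe side of the requirement, at the cost of storing logs slightly longer than strictly needed.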

VPC Configuration for Network Isolation

Deploy your RAG pipeline in private subnets within a VPC to isolate network traffic from public internet access. Create separate subnets for different components – embedding services, vector databases, and inference endpoints. Use security groups as virtual firewalls to control inbound and outbound traffic between services. VPC endpoints let your workloads reach AWS services privately, without routing traffic through an internet gateway.
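Planning the per-component subnets is mostly CIDR arithmetic, which Python's standard `ipaddress` module handles. The sketch below carves one subnet per component out of a VPC range; the CIDR block, component names, and function name are example assumptions.

```python
import ipaddress


def plan_subnets(vpc_cidr: str, components: list[str], new_prefix: int = 24) -> dict[str, str]:
    """Assign each component the next free subnet of size /new_prefix."""
    network = ipaddress.ip_network(vpc_cidr)
    subnets = network.subnets(new_prefix=new_prefix)
    plan = {}
    for name in components:
        try:
            plan[name] = str(next(subnets))
        except StopIteration:
            raise ValueError("VPC range is too small for the requested subnets") from None
    return plan
```

For example, `plan_subnets("10.0.0.0/16", ["embedding", "vector-db", "inference"])` assigns `10.0.0.0/24`, `10.0.1.0/24`, and `10.0.2.0/24`. For high availability you would repeat this per Availability Zone.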

RAG pipelines on AWS offer incredible potential for businesses looking to build intelligent, context-aware applications. By understanding the core architecture and building with scalability in mind from day one, you’ll save yourself countless headaches down the road. The monitoring tools and observability solutions we’ve explored give you the visibility needed to keep your pipelines running smoothly, while the optimization techniques can dramatically improve both performance and user experience.

Managing costs and maintaining security shouldn’t be afterthoughts in your RAG implementation. The strategies we’ve covered for resource efficiency and compliance will help you build systems that are not only powerful but also sustainable and secure. Start with a solid foundation, implement monitoring early, and continuously optimize based on real-world usage patterns. Your future self will thank you for taking the time to get these fundamentals right from the beginning.