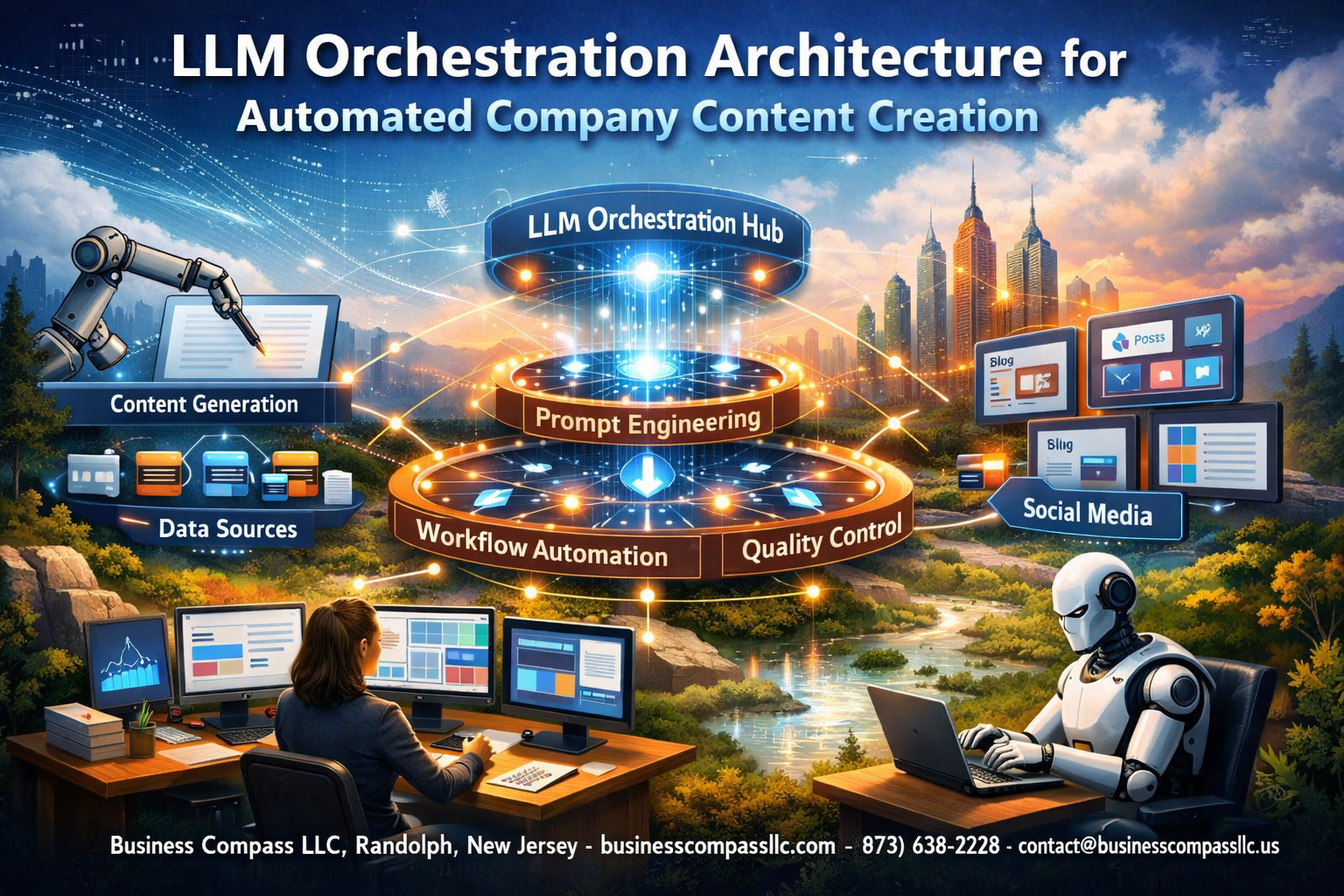

Modern companies are scrambling to scale their content creation while maintaining quality and brand consistency. LLM orchestration architecture solves this challenge by connecting multiple AI models, tools, and systems to automatically generate everything from blog posts to product descriptions.

This guide targets content managers, AI engineers, and business leaders who need to build or optimize automated content creation systems that actually work at scale. You’ll discover how enterprise AI workflows can transform your content operations from a manual bottleneck into a streamlined, intelligent process.

We’ll break down the essential content generation architecture components that power successful implementations. You’ll learn how to design LLM workflow automation systems that handle complex content requirements while maintaining your brand voice. Finally, we’ll cover the technical infrastructure needed to deploy these AI content orchestration platforms in enterprise environments.

Ready to move beyond simple AI writing tools? Let’s explore how strategic automated content systems can multiply your content output while reducing costs and improving consistency across all your marketing channels.

Understanding LLM Orchestration Fundamentals for Content Creation

Defining LLM orchestration and its role in automated workflows

LLM orchestration represents the coordinated management and execution of multiple large language models working together to complete complex content creation tasks. Think of it as conducting a digital orchestra where different AI models contribute their unique strengths to produce harmonious content output.

At its core, LLM workflow automation involves routing tasks through specialized models based on their capabilities. A research-focused model might handle fact-checking and data gathering, while a creative writing model crafts engaging narratives. A third model specializes in brand voice consistency, ensuring all content aligns with company standards.
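To make the routing idea concrete, here is a minimal sketch of capability-based routing. The model names and the `Task` shape are hypothetical placeholders, not any vendor's API; a real system would swap in its own provider clients.

```python
from dataclasses import dataclass

# Hypothetical model identifiers; substitute whatever your providers expose.
ROUTES = {
    "research": "research-model",
    "draft": "creative-model",
    "brand_check": "brand-voice-model",
}


@dataclass
class Task:
    kind: str     # e.g. "research", "draft", "brand_check"
    payload: str  # instructions or text for the model


def route(task: Task, default: str = "general-model") -> str:
    """Return the name of the model that should handle this task."""
    return ROUTES.get(task.kind, default)


pipeline = [
    Task("research", "Gather facts on the topic"),
    Task("draft", "Write a 600-word post"),
    Task("brand_check", "Review the draft for tone"),
]
assignments = [(task.kind, route(task)) for task in pipeline]
```

A lookup table is the simplest possible router. Production systems often replace it with a classifier or scoring function, but the interface (task in, model name out) can stay the same.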

This automated content creation approach transforms traditional single-model limitations into powerful multi-model capabilities. Instead of forcing one AI to handle every aspect of content generation, orchestration allows each model to excel in its designated role. The system intelligently manages handoffs between models, maintains context consistency, and ensures quality control throughout the process.

Modern AI content orchestration platforms handle the complex logistics of model coordination, including API management, token optimization, and failure recovery. They monitor performance metrics, adjust routing decisions based on real-time feedback, and scale resources dynamically based on demand.

The orchestration layer acts as the central nervous system for enterprise AI workflows, making decisions about which models to engage, when to parallelize tasks, and how to merge outputs into cohesive content pieces. This sophisticated coordination enables organizations to automate content creation at scale while maintaining quality standards.

Key benefits of orchestrated AI systems over single-model approaches

Orchestrated systems deliver superior performance through specialization and redundancy. While single models struggle with diverse content requirements, content generation architecture built on orchestration principles leverages multiple specialized models to handle different aspects of content creation.

Speed and efficiency improvements stand out as primary advantages. Automated content systems can process multiple content streams simultaneously, with different models working on various sections of the same project. Because independent sections no longer wait on one another, this parallel processing can cut completion time for complex content pieces substantially, in some workflows from hours to minutes.

Quality consistency represents another significant benefit. Single models often produce inconsistent outputs across different content types or topics. Orchestrated systems maintain specialized models for specific domains, ensuring technical documentation receives the same high-quality treatment as marketing copy, each handled by purpose-built AI components.

Cost optimization becomes achievable through intelligent resource allocation. An orchestrated system routes simple tasks to cost-effective models while reserving premium models for complex requirements. This approach can reduce operational costs by as much as 40-60% compared to using high-end models for all tasks, though the actual savings depend on how much of your workload the cheaper models can handle.
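The arithmetic behind cost-aware routing is easy to sketch. The prices, model names, complexity scores, and threshold below are invented for illustration only, so the resulting savings figure is not a benchmark.

```python
# Hypothetical prices per 1,000 tokens; real prices vary by provider and change often.
PRICE_PER_1K = {"small-model": 0.5, "premium-model": 10.0}


def pick_model(complexity: float, threshold: float = 0.7) -> str:
    """Send only tasks at or above the complexity threshold to the premium model."""
    return "premium-model" if complexity >= threshold else "small-model"


def total_cost(tasks, router) -> float:
    """Sum the cost of (complexity, token_count) tasks under a routing function."""
    return sum(PRICE_PER_1K[router(c)] * tokens / 1000 for c, tokens in tasks)


# Four made-up tasks: three simple, one complex, 1,000 tokens each.
tasks = [(0.2, 1000), (0.3, 1000), (0.9, 1000), (0.1, 1000)]

routed = total_cost(tasks, pick_model)
all_premium = total_cost(tasks, lambda c: "premium-model")
savings = 1 - routed / all_premium
```

With this toy workload most tasks are simple, so the savings look large. A workload dominated by complex tasks would show far less benefit, which is why measuring your own task mix matters before quoting a number.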

Risk mitigation improves substantially with orchestrated approaches. When one model experiences issues or produces subpar content, the system automatically reroutes tasks to backup models. This redundancy ensures enterprise content generation continues uninterrupted, protecting against single points of failure that plague monolithic AI implementations.
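A fallback chain is one simple way to implement this rerouting. In the sketch below each "model" is just a Python callable; `flaky_primary` and `backup` are stand-ins for real provider clients.

```python
from typing import Callable, Sequence


class AllModelsFailed(RuntimeError):
    """Raised when every model in the chain has failed."""


def generate_with_fallback(prompt: str, models: Sequence[Callable[[str], str]]) -> str:
    """Try each model in order and return the first successful response."""
    errors = []
    for call in models:
        try:
            return call(prompt)
        except Exception as exc:  # a real system would catch narrower error types
            errors.append(exc)
    raise AllModelsFailed(f"{len(errors)} model(s) failed: {errors}")


def flaky_primary(prompt: str) -> str:
    raise TimeoutError("primary unavailable")


def backup(prompt: str) -> str:
    return f"[backup] {prompt}"


result = generate_with_fallback("Write a tagline", [flaky_primary, backup])
```

Collecting the errors rather than discarding them keeps the failure visible to monitoring even when the backup succeeds on the next request.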

Error detection and correction become systematic rather than reactive. Orchestrated systems can implement quality gates where one model reviews and improves another’s output, creating natural feedback loops that enhance overall content quality.
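A quality gate can be sketched as a review-and-revise loop. Here `review` and `revise` are rule-based stubs standing in for model calls, and the score threshold and round limit are assumptions you would tune.

```python
def run_quality_gate(draft, review, revise, min_score=0.8, max_rounds=3):
    """Revise the draft until the reviewer's score passes or the rounds run out.

    Returns (final_draft, passed). A failed gate should go to a human reviewer.
    """
    score, feedback = review(draft)
    rounds = 0
    while score < min_score and rounds < max_rounds:
        draft = revise(draft, feedback)
        score, feedback = review(draft)
        rounds += 1
    return draft, score >= min_score


# Stub reviewer: checks that required terms appear (a stand-in for a reviewer model).
REQUIRED_TERMS = ("Acme",)


def review(text):
    missing = [term for term in REQUIRED_TERMS if term not in text]
    return (1.0 if not missing else 0.5), missing


# Stub reviser: appends the missing terms (a stand-in for a rewriting model).
def revise(text, missing):
    return text + " " + " ".join(missing)


final_draft, passed = run_quality_gate("Meet the new planner from", review, revise)
```

Capping the number of rounds matters: without it, two models that disagree can loop indefinitely and burn tokens.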

Essential components of effective orchestration architecture

The orchestration engine serves as the central decision-making component, managing task routing, resource allocation, and workflow execution. This engine must handle complex logic trees that determine optimal model selection based on content type, urgency, quality requirements, and available resources.

AI orchestration platform infrastructure requires robust API gateway management to handle multiple model endpoints efficiently. This includes request throttling, authentication management, and intelligent load balancing across different model providers. The gateway must seamlessly integrate various model APIs while presenting a unified interface to content creation workflows.

Context management systems ensure information flows correctly between models throughout the orchestration process. These systems maintain conversation history, document metadata, and brand guidelines, making this information available to each model in the workflow chain. Without proper context management, content automation infrastructure fails to produce coherent, consistent outputs.
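One lightweight way to picture context management is a shared object that every step reads from and writes to. The class and field names below are illustrative assumptions, not part of any particular platform.

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowContext:
    brand_guidelines: str
    metadata: dict = field(default_factory=dict)
    history: list = field(default_factory=list)  # (step name, output) pairs

    def record(self, step: str, output: str) -> None:
        """Store a step's output so later steps can see it."""
        self.history.append((step, output))

    def prompt_for(self, step: str, instruction: str) -> str:
        """Build a prompt that carries guidelines and prior outputs forward."""
        prior = "\n".join(f"[{name}] {out}" for name, out in self.history)
        return (
            f"Brand guidelines: {self.brand_guidelines}\n"
            f"Prior steps:\n{prior}\n"
            f"Task ({step}): {instruction}"
        )


ctx = WorkflowContext(brand_guidelines="Friendly, plain language")
ctx.record("research", "The product launches in March.")
draft_prompt = ctx.prompt_for("draft", "Write the announcement.")
```

In practice the history would be summarized or truncated to fit each model's context window, but the principle is the same: no step starts from a blank slate.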

Quality assurance modules implement automated review processes that evaluate content against predefined criteria. These modules can assess factual accuracy, brand compliance, readability scores, and SEO optimization. Advanced implementations include feedback loops that help models learn from quality assessments to improve future performance.

Monitoring and analytics components track performance metrics across the entire orchestration pipeline. These systems monitor model response times, quality scores, cost per output, and user satisfaction ratings. This data drives optimization decisions and helps identify bottlenecks in automated content workflows.

Workflow definition tools allow non-technical users to design and modify content creation processes without programming knowledge. These visual workflow builders enable marketing teams to adjust orchestration logic based on changing requirements, seasonal campaigns, or new content strategies.

Core Architecture Components for Automated Content Generation

Multi-agent coordination systems for diverse content types

Modern LLM orchestration platforms rely on sophisticated multi-agent systems that can handle various content formats simultaneously. Each agent specializes in specific content types – one might excel at creating technical documentation while another focuses on marketing copy or social media posts. These agents work together like a well-coordinated team, sharing context and maintaining consistency across all content pieces.

The coordination happens through intelligent routing mechanisms that analyze incoming requests and assign them to the most suitable agents based on content type, complexity, and current workload. For example, when generating a product launch campaign, the system automatically distributes blog post creation to the long-form content agent, social media snippets to the micro-content specialist, and email sequences to the personalization-focused agent.

Cross-agent communication ensures that all generated content maintains the same brand voice and factual accuracy. When one agent discovers important information or makes stylistic decisions, this knowledge gets shared across the entire network, preventing inconsistencies that could damage brand reputation.

Task distribution engines for optimized workflow management

Effective automated content workflows depend on smart task distribution engines that maximize efficiency while minimizing bottlenecks. These engines analyze multiple factors when assigning work: current agent capacity, task complexity, deadline requirements, and historical performance data.

The distribution logic operates on priority-based queuing systems that can handle both scheduled content creation and urgent ad-hoc requests. High-priority tasks like crisis communication responses get immediate attention, while routine blog posts follow standard scheduling patterns.
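Priority-based queuing can be sketched with Python's standard `heapq` module. The task names and priority numbers are made up; lower numbers mean more urgent.

```python
import heapq
import itertools


class TaskQueue:
    """Priority queue where lower numbers are served first."""

    def __init__(self):
        self._heap = []
        self._counter = itertools.count()  # tie-breaker keeps FIFO order per priority

    def submit(self, name: str, priority: int) -> None:
        heapq.heappush(self._heap, (priority, next(self._counter), name))

    def next_task(self) -> str:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


queue = TaskQueue()
queue.submit("weekly blog post", priority=5)
queue.submit("crisis statement", priority=0)
queue.submit("newsletter", priority=5)

order = [queue.next_task() for _ in range(len(queue))]
```

The counter ensures that two tasks with the same priority come out in the order they were submitted, so routine work is not starved or reordered arbitrarily.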

Load balancing capabilities prevent any single agent from becoming overwhelmed while others remain idle. The system continuously monitors performance metrics and adjusts task allocation in real-time. If one content generation agent experiences delays, the engine automatically redistributes pending tasks to maintain overall throughput.
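As a rough illustration of load balancing, the sketch below uses a greedy heuristic: take the largest tasks first and always hand the next one to the least-loaded agent. The agent names and cost estimates are hypothetical, and real schedulers also account for live latency and failures.

```python
def assign(tasks, agents):
    """Greedy balancing: largest tasks first, each to the least-loaded agent.

    tasks is a list of (name, estimated_cost) pairs.
    Returns (plan, load): tasks per agent and total cost per agent.
    """
    load = {agent: 0 for agent in agents}
    plan = {agent: [] for agent in agents}
    for name, cost in sorted(tasks, key=lambda t: -t[1]):
        agent = min(load, key=load.get)  # least-loaded agent so far
        plan[agent].append(name)
        load[agent] += cost
    return plan, load


tasks = [("whitepaper", 5), ("blog post", 3), ("email series", 3), ("social posts", 2)]
plan, load = assign(tasks, ["agent-a", "agent-b"])
```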

Resource optimization features include intelligent batching of similar tasks, which allows agents to work more efficiently by maintaining context across related content pieces. This approach significantly reduces the computational overhead associated with frequent context switching.
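Batching similar tasks can be as simple as grouping requests by a shared key and splitting each group into fixed-size chunks. The request fields and batch size here are assumptions for illustration.

```python
from collections import defaultdict


def batch_by_key(requests, key, max_batch=3):
    """Group requests that share a key value, then split groups into batches."""
    groups = defaultdict(list)
    for request in requests:
        groups[request[key]].append(request)

    batches = []
    for items in groups.values():
        for start in range(0, len(items), max_batch):
            batches.append(items[start:start + max_batch])
    return batches


requests = [
    {"id": 1, "type": "product_description"},
    {"id": 2, "type": "social_post"},
    {"id": 3, "type": "product_description"},
]
batches = batch_by_key(requests, key="type", max_batch=2)
batch_ids = [[r["id"] for r in batch] for batch in batches]
```

Each batch can then share one system prompt and one set of brand guidelines, which is where the context-switching savings come from.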

Quality control layers for consistent brand messaging

Enterprise-grade content automation requires multiple quality control checkpoints that ensure every piece of generated content meets brand standards. The first layer involves real-time content analysis that checks for tone consistency, factual accuracy, and adherence to style guidelines.

Automated brand voice verification compares generated content against established voice samples and flags deviations before publication. This prevents the common problem of AI-generated content that sounds robotic or off-brand. Advanced systems can even detect subtle tonal shifts that might occur when different agents contribute to the same piece.
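To show the shape of a voice check, here is a deliberately crude sketch that compares word overlap between a candidate and approved samples. Bag-of-words cosine similarity is a simple stand-in for the embedding models a production system would more likely use, and the threshold is an arbitrary assumption.

```python
import math
import re
from collections import Counter


def _vector(text: str) -> Counter:
    """Lowercased word counts for a piece of text."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[word] * b[word] for word in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def voice_score(candidate: str, samples: list) -> float:
    """Similarity to the closest approved voice sample (0.0 to 1.0)."""
    candidate_vec = _vector(candidate)
    return max(cosine(candidate_vec, _vector(sample)) for sample in samples)


def is_off_brand(candidate: str, samples: list, threshold: float = 0.3) -> bool:
    return voice_score(candidate, samples) < threshold


samples = ["We keep things simple and friendly."]
flagged = is_off_brand("Pursuant to clause nine, liability is disclaimed.", samples)
```

Word overlap misses tone entirely when vocabulary happens to match, so treat this only as a picture of where the check sits in the pipeline: score, compare against a threshold, flag before publication.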

Fact-checking mechanisms cross-reference generated claims against trusted knowledge bases and flag potentially inaccurate information for human review. These systems are particularly valuable for technical content where precision is critical.

Content compliance layers ensure that all generated material adheres to industry regulations and company policies. For heavily regulated industries like finance or healthcare, these automated checks prevent costly compliance violations.

Integration APIs for seamless tool connectivity

Robust API infrastructure connects LLM orchestration platforms with existing content management systems, design tools, and publishing workflows. RESTful APIs enable real-time data exchange between the orchestration platform and external tools, creating seamless end-to-end automation.

Content management system integrations allow generated content to flow directly into publishing pipelines without manual intervention. The system can automatically format content for different platforms, optimize images, and schedule publication according to content calendar requirements.

Marketing automation platform connections enable personalized content creation at scale. Customer data flows into the LLM orchestration system, which then generates personalized emails, product recommendations, and targeted landing page content based on individual user profiles.

Analytics integrations provide feedback loops that help optimize content performance. The system analyzes which content types perform best and adjusts future generation parameters accordingly. This creates a self-improving content creation engine that gets better over time.

Webhook support enables real-time notifications and triggers that keep all connected systems synchronized. When content gets approved or modified, all relevant platforms receive immediate updates, maintaining consistency across the entire content ecosystem.

Strategic Implementation of Content Creation Workflows

Mapping company content requirements to orchestration capabilities

Creating a successful LLM orchestration architecture starts with understanding your organization’s unique content landscape. Most companies struggle with this mapping process because they jump straight into technical implementation without properly analyzing their content ecosystem.

Begin by conducting a comprehensive content audit across all departments. Marketing teams might need blog posts, social media content, and product descriptions, while HR requires policy documents, training materials, and internal communications. Sales teams often need proposal templates, case studies, and competitive analysis reports. Each content type has specific requirements for tone, format, length, and approval processes.

Once you’ve cataloged content needs, match them to specific LLM orchestration capabilities. For instance, high-volume, standardized content like product descriptions pairs perfectly with template-based automation workflows. Complex, strategic content such as thought leadership articles benefits from multi-stage orchestration that includes research, drafting, and refinement phases.

Consider content velocity requirements when mapping capabilities. Daily social media posts need rapid generation and minimal review cycles, while quarterly reports require extensive fact-checking and stakeholder input. Your AI content orchestration platform should accommodate these varying timelines through configurable workflow speeds.

Don’t overlook compliance and brand consistency requirements. Financial services companies need content that adheres to regulatory guidelines, while retail brands require strict adherence to voice and tone standards. Modern LLM workflow automation systems can incorporate these constraints directly into the generation process.

Establishing content approval and review processes

Smart approval workflows prevent bottlenecks while maintaining quality standards. Traditional linear approval chains often create delays and confusion, especially when multiple stakeholders need input. Instead, design parallel review processes where appropriate team members can provide feedback simultaneously.

Implement role-based approval hierarchies that match your organization’s structure. Junior content pieces might only require departmental approval, while executive communications need C-suite sign-off. Your enterprise AI workflows should automatically route content based on predefined criteria such as topic sensitivity, target audience, or publication channel.

Create clear escalation paths for content that requires revisions. When automated content generation produces output that needs significant changes, the system should flag it for human review before entering standard approval flows. This prevents low-quality content from consuming reviewer time and maintains efficiency.

Build feedback loops that improve future content generation. When reviewers make consistent corrections—such as adjusting tone or adding specific technical details—capture these patterns to refine your content creation automation. This continuous learning approach reduces manual intervention over time.

Consider implementing conditional approval processes for different content types. Blog posts might require legal review only when discussing specific topics, while press releases always need PR team approval. Your automated content systems should apply these rules consistently without manual configuration for each piece.
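Conditional approval rules like these translate naturally into a small rules function. The content types, approver roles, and list of legally sensitive topics below are hypothetical examples, not a recommended policy.

```python
# Hypothetical topics that trigger legal review for blog posts.
LEGAL_TOPICS = {"pricing", "competitors", "security"}

# Approvers that are always required for a given content type.
ALWAYS_REQUIRED = {
    "press_release": {"pr_team"},
    "executive_communication": {"c_suite"},
}


def required_approvers(content_type: str, topics: list) -> set:
    """Return the set of roles that must sign off on a piece of content."""
    approvers = set(ALWAYS_REQUIRED.get(content_type, {"department_lead"}))
    if content_type == "blog_post" and LEGAL_TOPICS & set(topics):
        approvers.add("legal")
    return approvers


routine = required_approvers("blog_post", ["onboarding"])
sensitive = required_approvers("blog_post", ["pricing"])
release = required_approvers("press_release", [])
```

Keeping the rules in data (the two dictionaries) rather than scattered through code makes them easier for non-engineers to review and change.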

Building scalable templates for different content formats

Effective templates form the backbone of any LLM-based content creation system. Well-designed templates ensure consistency while providing enough flexibility for customization. Start by analyzing your highest-volume content types and identifying common structural patterns.

Create modular template components that can be mixed and matched. For example, product announcement templates might include standard sections for features, benefits, and technical specifications, but allow variable sections for different product categories. This modular approach enables your AI orchestration platform to generate diverse content while maintaining structural consistency.

Develop template hierarchies that cascade from general to specific formats. A master blog post template might define overall structure and tone, while child templates add specific elements for different topics like product reviews, industry news, or company updates. This hierarchy reduces maintenance overhead while ensuring brand consistency.
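A template hierarchy can be modeled as layered settings where a child overrides only what differs from its parent. This sketch uses `collections.ChainMap` from the Python standard library; the setting names and values are illustrative assumptions.

```python
from collections import ChainMap

# Master template: defaults every blog post inherits.
MASTER_BLOG = {
    "tone": "friendly",
    "sections": ["intro", "body", "conclusion"],
    "max_words": 1200,
}

# Child template: overrides only what is specific to product reviews.
PRODUCT_REVIEW = {
    "sections": ["intro", "pros", "cons", "verdict"],
    "max_words": 800,
}


def resolve(child: dict, parent: dict = MASTER_BLOG) -> dict:
    """Merge a child template over its parent; child values win."""
    return dict(ChainMap(child, parent))


review_template = resolve(PRODUCT_REVIEW)
```

Changing `tone` in the master template now updates every child that does not override it, which is the maintenance saving the hierarchy is meant to provide.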

Include dynamic placeholder management in your templates. Rather than static fill-in-the-blank approaches, modern automated content workflows should intelligently adapt content length, complexity, and focus based on available input data. If product specifications are limited, the template should automatically emphasize available information while gracefully handling missing details.
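Graceful handling of missing details can be sketched with optional sections that render only when data exists. The field names and sentence patterns are assumptions; in a real workflow the rendered text would more likely become part of a prompt than the final copy.

```python
# Optional sections: (input field, sentence pattern). Field names are illustrative.
OPTIONAL_SECTIONS = [
    ("features", "Key features: {}."),
    ("benefits", "Benefits: {}."),
    ("specs", "Technical specifications: {}."),
]


def render_product_blurb(product: dict) -> str:
    """Render only the sections for which the input actually has data."""
    parts = [f"{product['name']} is now available."]
    for field_name, pattern in OPTIONAL_SECTIONS:
        values = product.get(field_name)
        if values:  # skip missing or empty fields instead of printing blanks
            parts.append(pattern.format(", ".join(values)))
    return " ".join(parts)


sparse = render_product_blurb({"name": "Widget", "features": ["fast", "light"]})
```

The output contains no empty "Technical specifications:" stub, which is the behavior a static fill-in-the-blank template would get wrong.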

Test template performance across different content scenarios before full deployment. A template that works well for software products might fail for services or consulting offerings. Your content automation infrastructure should include A/B testing capabilities that help optimize templates based on engagement metrics and user feedback.

Technical Infrastructure Requirements for Enterprise Deployment

Cloud-based orchestration platforms and deployment options

Modern LLM orchestration platforms offer multiple deployment pathways for enterprise automated content creation systems. Amazon Web Services provides orchestration through services like SageMaker Pipelines and Step Functions, enabling scalable AI content orchestration workflows. Microsoft Azure’s Machine Learning platform delivers robust pipeline management capabilities, while Google Cloud’s Vertex AI Pipelines supports complex content generation architecture requirements.

Kubernetes-based deployments offer containerized orchestration solutions that provide flexibility across cloud providers. Kubeflow runs machine learning pipelines on Kubernetes, and MLflow adds experiment tracking and portable model packaging, so teams can build enterprise AI workflows that move between environments with little rework. Docker containers ensure consistent deployment environments for LLM workflow automation components.

Multi-cloud strategies reduce vendor lock-in while providing redundancy for critical automated content systems. Hybrid deployments combine on-premises infrastructure with cloud resources, allowing sensitive data processing locally while leveraging cloud scalability for compute-intensive tasks.

Data security protocols for sensitive company information

Enterprise content creation automation demands stringent security measures to protect proprietary information and intellectual property. Data encryption at rest and in transit forms the foundation of secure LLM deployment architecture. AES-256 (the Advanced Encryption Standard with 256-bit keys) is a common choice for protecting stored content templates, brand guidelines, and generated materials, while TLS protects data moving between services.

Identity and access management (IAM) systems control user permissions and API access to AI orchestration platforms. Multi-factor authentication and role-based access controls limit who can reach sensitive content generation systems, while audit logging records every interaction with them.

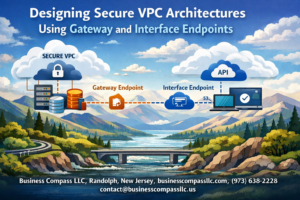

Data anonymization techniques scrub personally identifiable information before the text reaches any language model. Tokenization and masking preserve data utility while protecting customer privacy. Private cloud deployments or virtual private clouds (VPCs) isolate automated content workflows from the public internet.

Regular security assessments and penetration testing validate the integrity of content automation infrastructure. Standards and regulations such as SOC 2, GDPR, and HIPAA guide implementation of appropriate safeguards for different industries and data types.
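As a rough sketch of tokenization, the code below swaps email addresses and phone numbers for placeholder tokens before text is sent to a model, then restores them afterward. The two regular expressions are simplified assumptions that will miss many real-world formats; production systems typically rely on dedicated PII detection.

```python
import re

# Simplified patterns for illustration; they do not cover every valid format.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")


def mask_pii(text: str):
    """Replace emails and phone numbers with tokens; return (masked, mapping)."""
    mapping = {}

    def replacer(kind):
        def _replace(match):
            token = f"<{kind}_{len(mapping) + 1}>"
            mapping[token] = match.group(0)
            return token
        return _replace

    text = EMAIL.sub(replacer("EMAIL"), text)
    text = PHONE.sub(replacer("PHONE"), text)
    return text, mapping


def unmask(text: str, mapping: dict) -> str:
    """Restore the original values after the model has responded."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text


original = "Contact jane@example.com or +1 555-123-4567."
masked, mapping = mask_pii(original)
```

Because the mapping never leaves your infrastructure, the model sees only the placeholder tokens. Note that this is reversible pseudonymization rather than true anonymization, so the mapping itself must be protected.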

Performance monitoring and system optimization techniques

Real-time monitoring dashboards track key performance indicators across enterprise content generation pipelines. Metrics include response latency, throughput rates, error frequencies, and resource utilization patterns. Tools like Prometheus and Grafana provide visualization capabilities for complex AI-driven content creation workflows.

Load balancing distributes requests across multiple LLM orchestration instances, preventing bottlenecks during peak content generation periods. Auto-scaling policies automatically provision additional compute resources based on demand patterns, ensuring consistent performance for automated content systems.

Caching strategies reduce redundant API calls and improve response times. Content templates, frequently used prompts, and generated outputs benefit from intelligent caching mechanisms that balance freshness with performance.
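A minimal response cache with a time-to-live shows how the freshness trade-off works. The class below is an in-memory sketch with assumed names; a shared deployment would more likely use an external cache, and `generate` stands in for a real model call.

```python
import hashlib
import time


class ResponseCache:
    """In-memory cache keyed on model + prompt, with a time-to-live."""

    def __init__(self, ttl_seconds: float = 3600, clock=time.monotonic):
        self._ttl = ttl_seconds
        self._clock = clock
        self._store = {}  # key -> (stored_at, value)

    @staticmethod
    def _key(model: str, prompt: str) -> str:
        return hashlib.sha256(f"{model}\x00{prompt}".encode("utf-8")).hexdigest()

    def get_or_generate(self, model: str, prompt: str, generate):
        key = self._key(model, prompt)
        now = self._clock()
        hit = self._store.get(key)
        if hit is not None and now - hit[0] < self._ttl:
            return hit[1]  # fresh enough: skip the model call
        value = generate(prompt)
        self._store[key] = (now, value)
        return value


calls = []


def fake_generate(prompt: str) -> str:
    calls.append(prompt)
    return prompt.upper()


fake_time = [0.0]
cache = ResponseCache(ttl_seconds=10, clock=lambda: fake_time[0])
first = cache.get_or_generate("model-a", "hello", fake_generate)
second = cache.get_or_generate("model-a", "hello", fake_generate)
```

A shorter TTL keeps content fresher at the price of more model calls. Exact-match caching like this only helps when prompts repeat verbatim, so it suits templates and common prompts better than one-off requests.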

Performance profiling identifies bottlenecks in content generation architecture components. Database query optimization, API response compression, and asynchronous processing techniques enhance overall system efficiency.

Cost management strategies for large-scale operations

Token usage optimization reduces operational costs for LLM workflow automation by implementing intelligent prompt engineering and response caching. Batch processing groups similar content requests, maximizing API efficiency and reducing per-request costs.

Resource scheduling aligns compute-intensive tasks with off-peak pricing periods. Spot instances and preemptible virtual machines offer significant cost savings for non-critical automated content workflows that can tolerate interruptions.

Cost allocation tracking attributes expenses to specific business units, projects, or content types. This granular visibility enables informed decisions about AI orchestration platform resource allocation and helps identify optimization opportunities.

Reserved instance purchasing provides predictable pricing for stable enterprise AI workflows. Committed use discounts from cloud providers can reduce costs by 30-50% for sustained workloads, depending on the provider, instance type, and commitment term. Regular cost analysis and optimization reviews ensure content automation infrastructure remains financially efficient as usage scales.

Maximizing ROI Through Advanced Orchestration Features

Dynamic content personalization based on audience segmentation

Modern LLM orchestration platforms deliver exceptional ROI by creating tailored content for specific audience segments. These systems analyze user behavior, demographic data, and engagement patterns to automatically generate personalized messaging that resonates with different customer groups.

Advanced AI content orchestration leverages machine learning algorithms to identify micro-segments within your audience. The system creates distinct content variations for executives, technical decision-makers, and end-users, adjusting tone, complexity, and focus areas accordingly. This personalization extends beyond simple template swapping – the LLM workflow automation intelligently modifies examples, case studies, and benefits statements to match each segment’s priorities.

Enterprise content generation systems track conversion rates across segments, continuously refining personalization strategies. When the platform detects that technical audiences respond better to detailed implementation guides while business leaders prefer ROI-focused summaries, it automatically adjusts future content creation to match these preferences.

The orchestration platform maintains consistency across personalized content while ensuring brand voice remains intact. Content creation automation rules prevent messaging conflicts and maintain coherent brand positioning across all segments.

Automated SEO optimization and keyword integration

Sophisticated LLM deployment architecture includes built-in SEO optimization capabilities that dramatically improve content performance. These systems analyze search trends, competitor content, and keyword opportunities to automatically enhance content visibility without sacrificing quality or readability.

The automated content workflows integrate semantic keyword analysis, ensuring content naturally incorporates target phrases while maintaining conversational flow. Instead of forced keyword stuffing, the AI orchestration platform understands search intent and creates content that genuinely addresses user queries.

Advanced features include:

- Real-time keyword density monitoring that prevents over-optimization

- Competitor gap analysis identifying content opportunities

- Long-tail keyword discovery for niche topic targeting

- Meta description and title tag generation optimized for click-through rates

- Internal linking suggestions that improve site architecture
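The keyword density check from the list above can be sketched in a few lines. The 3% ceiling is an arbitrary assumption for illustration, not a search engine rule.

```python
import re


def keyword_density(text: str, phrase: str) -> float:
    """Share of the text's words that belong to occurrences of the phrase."""
    words = re.findall(r"[a-z0-9']+", text.lower())
    target = phrase.lower().split()
    if not words or not target:
        return 0.0
    n = len(target)
    hits = sum(1 for i in range(len(words) - n + 1) if words[i:i + n] == target)
    return hits * n / len(words)


def over_optimized(text: str, phrase: str, max_density: float = 0.03) -> bool:
    """Flag text whose density for the phrase exceeds the chosen ceiling."""
    return keyword_density(text, phrase) > max_density


sample = "content tools help teams ship content faster"
density = keyword_density(sample, "content")
```

Here "content" accounts for two of seven words, so the sample would be flagged. Short texts trip density checks easily, which is one reason to apply them only above a minimum word count.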

The system continuously monitors search algorithm changes and adjusts optimization strategies accordingly. When Google updates its ranking factors, the content automation infrastructure adapts its approach to maintain search performance.

Multi-language content generation for global markets

Enterprise AI workflows excel at scaling content across international markets through intelligent multi-language generation. These systems go beyond simple translation, creating culturally appropriate content that resonates with local audiences while maintaining brand consistency.

The LLM orchestration platform understands regional preferences, cultural nuances, and local market conditions. When creating content for Japanese markets, the system adjusts communication styles to reflect cultural preferences for indirect communication and consensus-building. For German audiences, it emphasizes technical specifications and thorough documentation.

Automated content systems handle:

- Cultural adaptation of examples and case studies

- Local compliance requirements for different regions

- Currency and measurement conversions

- Regional product availability mentions

- Time zone and holiday considerations

The platform maintains translation memory databases, ensuring consistent terminology across all content pieces. When technical terms or brand-specific language appears, the system applies approved translations automatically.
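One way to enforce approved terminology is to check each translation against a glossary after generation. The sketch below assumes a tiny English-to-German glossary and does naive substring matching, which ignores inflected forms; the sample sentences are illustrative.

```python
# Approved English-to-German terms; a real glossary would live in a database.
GLOSSARY_EN_DE = {
    "shopping cart": "Warenkorb",
    "invoice": "Rechnung",
}


def missing_terms(source: str, translation: str, glossary: dict) -> list:
    """Return (source term, approved translation) pairs the translation did not use."""
    src, tgt = source.lower(), translation.lower()
    return [
        (term, approved)
        for term, approved in glossary.items()
        if term in src and approved.lower() not in tgt
    ]


source = "Add the item to your shopping cart."
violations = missing_terms(
    source, "Legen Sie den Artikel in den Einkaufswagen.", GLOSSARY_EN_DE
)
compliant = missing_terms(
    source, "Legen Sie den Artikel in den Warenkorb.", GLOSSARY_EN_DE
)
```

Violations can be sent back to the translating model as feedback or routed to a native-speaker reviewer, depending on how critical the content is.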

Quality assurance workflows include native speaker reviews for critical content pieces, while routine communications receive automated cultural appropriateness scoring. This hybrid approach balances efficiency with quality for different content types.

Real-time analytics and performance tracking capabilities

Advanced AI orchestration platforms provide comprehensive analytics that transform content creation from guesswork into data-driven strategy. These systems track content performance across multiple channels, providing insights that continuously improve automated content workflows.

Real-time dashboards display engagement metrics, conversion rates, and audience behavior patterns. The platform uses this feedback to adjust future content generation automatically. When blog posts with specific formatting styles show higher engagement, the system incorporates these preferences into new content.

Key tracking capabilities include:

- Content performance scoring across different platforms

- A/B testing automation for headlines and calls-to-action

- Audience engagement heatmaps showing content section performance

- Conversion attribution linking content to business outcomes

- ROI calculation for different content types and campaigns
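The ROI calculation in the list above reduces to simple arithmetic once revenue has been attributed to content. The campaign names and figures below are invented purely to show the calculation.

```python
def roi(attributed_revenue: float, cost: float) -> float:
    """Return on investment as a ratio: (revenue - cost) / cost."""
    if cost <= 0:
        raise ValueError("cost must be positive")
    return (attributed_revenue - cost) / cost


# Invented figures: (attributed revenue, production cost) per content type.
campaigns = {
    "blog": (12000.0, 4000.0),
    "email": (3000.0, 2000.0),
}

roi_by_type = {name: roi(revenue, cost) for name, (revenue, cost) in campaigns.items()}
ranked = sorted(roi_by_type, key=roi_by_type.get, reverse=True)
```

The hard part in practice is the attribution step that produces the revenue figure, not the division. The formula is only as trustworthy as the conversion tracking behind it.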

The system identifies top-performing content patterns and automatically applies these insights to new content creation. If data shows that listicle formats generate 40% more social shares than traditional articles, the content creation automation prioritizes list-based structures for relevant topics.

Predictive analytics help forecast content performance before publication, allowing teams to optimize pieces proactively. The platform suggests improvements based on historical data and current market trends, ensuring each piece of content maximizes its potential impact.

Performance data feeds back into the orchestration engine, creating a self-improving system that becomes more effective over time. This continuous learning approach ensures sustained ROI growth as the platform accumulates more performance data and refines its content generation strategies.

Building an effective LLM orchestration system for automated content creation requires careful attention to both technical infrastructure and strategic workflow design. The key lies in understanding how core components work together—from API management and model selection to content validation and quality control mechanisms. Companies that invest time in mapping out their content workflows and choosing the right technical foundation will see the biggest returns on their automation efforts.

The real magic happens when your orchestration system becomes smart enough to handle different content types while maintaining your brand voice and quality standards. Start small with one content type, get that working smoothly, then expand your system to handle more complex workflows. Focus on building robust monitoring and feedback loops early on—they’ll save you countless hours of troubleshooting later. Remember, the goal isn’t just to automate content creation, but to create a system that consistently delivers value while freeing up your team to focus on higher-level strategy and creative work.