AWS Bedrock makes running AI inference simple by giving you access to powerful foundation models through a single API. This guide is for developers, ML engineers, and cloud architects who want to understand how AWS Bedrock inference works and start building AI applications without managing infrastructure.

You’ll learn how AWS Bedrock’s architecture works and how foundation models are served behind the scenes. We’ll walk through setting up the Bedrock API for your first inference requests, show optimization techniques that keep costs down while improving performance, and cover security best practices for running generative AI models in production.

By the end, you’ll know how to use Bedrock’s inference capabilities to build scalable AI applications.

Understanding AWS Bedrock Architecture and Core Components

Exploring the foundational infrastructure of AWS Bedrock

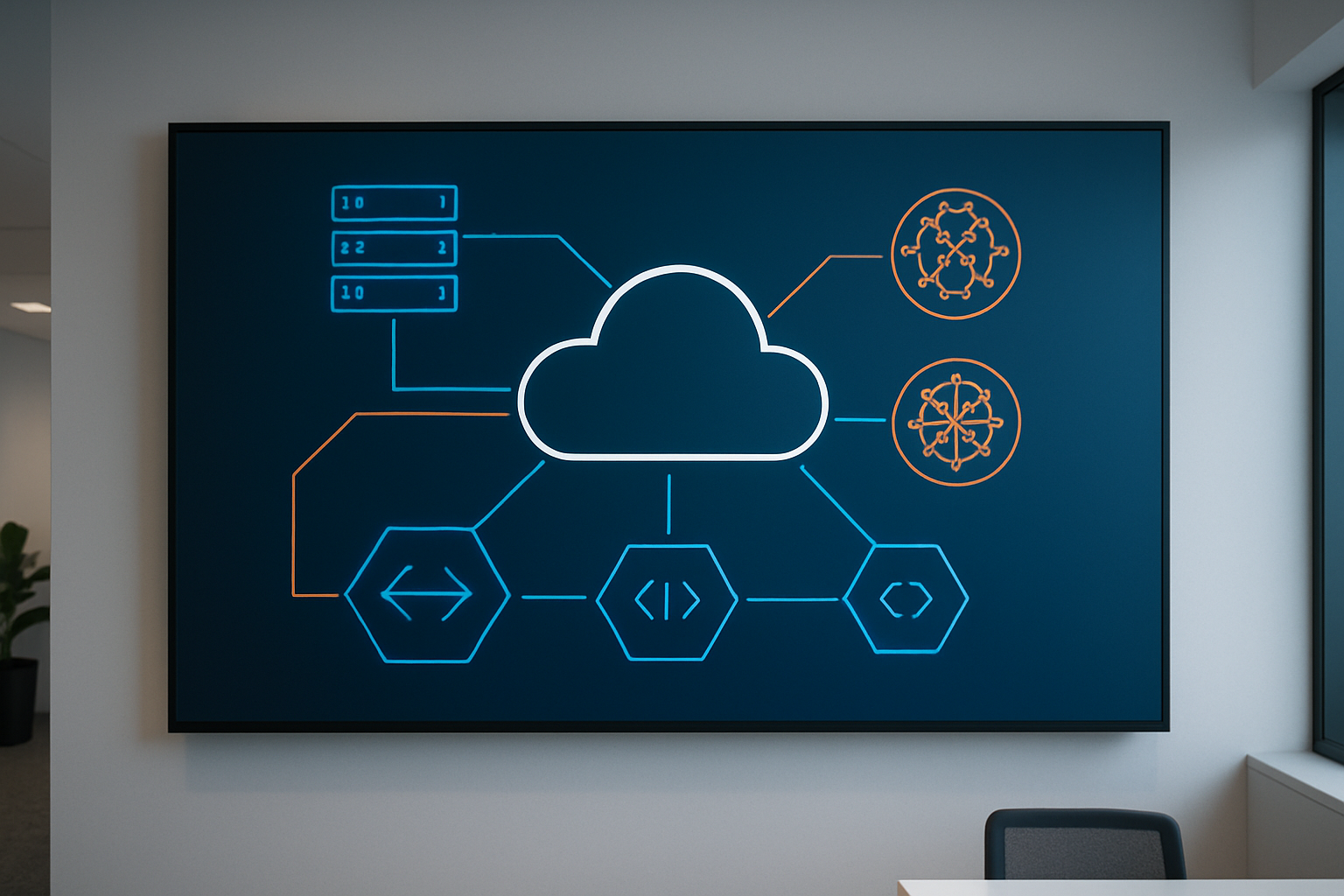

AWS Bedrock sits on top of Amazon’s robust cloud infrastructure, designed specifically for generative AI workloads. The service runs on dedicated compute resources optimized for machine learning inference, with specialized hardware including GPUs and custom silicon that can handle the massive computational requirements of foundation models.

The underlying architecture follows a serverless model, meaning you don’t need to provision or manage any infrastructure yourself. When you make an inference request, AWS Bedrock automatically handles the scaling, load balancing, and resource allocation behind the scenes. This approach eliminates the complexity of managing GPU clusters or worrying about capacity planning for your AI applications.

The service operates across multiple AWS regions, so you can call foundation models from a region close to where your applications are deployed. Each region exposes its own Bedrock endpoints. Requests sent to a regional endpoint are processed in that region unless you opt into cross-region inference, which helps you meet data residency requirements.

Identifying key service components for inference operations

AWS Bedrock inference revolves around several core components that work together. The model catalog lists every available foundation model with its model ID, supported modalities, and capabilities. You can browse it in the console or query it with the ListFoundationModels API. The catalog includes models from providers such as Anthropic, AI21 Labs, Cohere, Meta, Stability AI, and Amazon’s own Titan models.

The inference endpoint is the communication layer between your applications and the foundation models. It is a managed, regional HTTPS API (the bedrock-runtime service) that accepts your prompts and returns generated responses. You call the same operations, such as InvokeModel, for every model and select a model by its model ID. However, each model family expects its own request and response body format.

The model serving infrastructure handles the actual model execution, including model loading, memory allocation, and parallel processing. AWS operates this layer for you, so you never see the underlying instances or GPUs. From your side, on-demand capacity scales with demand, subject to your account’s service quotas.

Request processing includes built-in throttling and error handling. When your requests exceed your account’s quotas (requests or tokens per minute for a model), Bedrock does not queue the excess. It rejects those calls with a ThrottlingException (HTTP 429). Your application is responsible for retrying them with backoff, which the AWS SDKs’ configurable retry modes can handle for you.

Understanding the role of foundation models in the ecosystem

Foundation models serve as the brain of AWS Bedrock, each trained on vast datasets to perform specific types of tasks. These models aren’t just simple APIs – they’re sophisticated neural networks with billions of parameters that can understand context, generate human-like text, create images, and even write code.

Text Generation Models like Claude and Titan Text excel at conversational AI, content creation, and document analysis. They can maintain context across long conversations and adapt their writing style based on prompts. These models power chatbots, content generation tools, and automated writing assistants.

Embedding Models transform text into numerical representations that capture semantic meaning. These vectors enable similarity searches, recommendation systems, and knowledge base queries. They’re particularly valuable for building RAG (Retrieval-Augmented Generation) applications that combine your private data with foundation model capabilities.
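Similarity search with embeddings comes down to comparing vectors. The sketch below ranks documents by cosine similarity to a query vector. It assumes you have already obtained embeddings, for example the embedding list in an Amazon Titan Text Embeddings response. The tiny three-dimensional vectors and the rank_by_similarity helper are hypothetical placeholders, not real model output.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two equal-length vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

def rank_by_similarity(query: list[float], docs: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Return (doc_id, score) pairs sorted from most to least similar to the query."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in docs.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)

# Placeholder vectors; real embeddings have hundreds or thousands of dimensions.
docs = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "api-limits": [0.0, 0.2, 0.9],
}
query = [0.8, 0.2, 0.0]
print(rank_by_similarity(query, docs)[0][0])  # refund-policy
```

In a RAG application, the top-ranked documents would be inserted into the prompt as context. At scale, a vector store usually performs this ranking for you.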

Multimodal Models can process and generate both text and images, opening up possibilities for visual content creation, image analysis, and cross-modal applications. These models understand the relationship between visual and textual information, enabling more sophisticated AI applications.

Recognizing integration points with other AWS services

AWS Bedrock integrates deeply with the broader AWS ecosystem, creating powerful workflows for AI-powered applications. Amazon S3 serves as the natural storage layer for training data, prompt templates, and generated content. Features such as model customization, Knowledge Bases, and batch inference read their input directly from S3, so you can keep large datasets there instead of copying them elsewhere.

AWS Lambda enables serverless AI applications where you can trigger Bedrock inference operations in response to events like file uploads, API calls, or scheduled tasks. This combination allows you to build cost-effective AI workflows that only run when needed.
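The Lambda pattern can be sketched as a handler that reads a prompt from an API Gateway-style event body and calls the Converse API. The event shape, the handle helper, and the model ID are assumptions for illustration. The core logic takes the client as a parameter, so you can exercise it without AWS credentials.

```python
import json

# Assumption: replace with any model ID your account can invoke.
MODEL_ID = "anthropic.claude-3-haiku-20240307-v1:0"

def handle(event: dict, client) -> dict:
    """Core logic: parse the prompt, call Bedrock, return an API Gateway-style response."""
    body = json.loads(event.get("body") or "{}")
    prompt = body.get("prompt")
    if not prompt:
        return {"statusCode": 400, "body": json.dumps({"error": "missing 'prompt'"})}
    response = client.converse(
        modelId=MODEL_ID,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": 300, "temperature": 0.5},
    )
    text = response["output"]["message"]["content"][0]["text"]
    return {"statusCode": 200, "body": json.dumps({"completion": text})}

_client = None  # reused across invocations in the same execution environment

def lambda_handler(event, context):
    """Lambda entry point; creates the Bedrock runtime client lazily on first use."""
    global _client
    if _client is None:
        import boto3  # deferred so handle() can be tested without boto3
        _client = boto3.client("bedrock-runtime")
    return handle(event, _client)
```

Creating the client outside the per-request path lets warm invocations skip client setup. You only pay for Lambda time while a request is in flight.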

Amazon DynamoDB pairs well with Bedrock for storing conversation history, user preferences, and application state. The fast, NoSQL database can handle the high-throughput requirements of real-time AI applications while maintaining low latency.

Amazon API Gateway provides a managed way to create secure, scalable APIs that front your Bedrock applications. You can add authentication, rate limiting, and monitoring to your AI services without building these features from scratch.

Amazon CloudWatch delivers monitoring and logging for your Bedrock usage, tracking metrics like request volume, latency, and error rates. This observability helps you optimize performance and troubleshoot issues in production environments.

The integration extends to AWS IAM for fine-grained access control, AWS CloudFormation for infrastructure as code deployments, and Amazon EventBridge for building event-driven AI architectures. These connections make AWS Bedrock feel like a natural extension of your existing AWS infrastructure rather than a separate service bolted on top.

Foundation Models Available for Inference Tasks

Comparing Text Generation Models and Their Capabilities

AWS Bedrock hosts several powerful text generation models, each bringing unique strengths to your inference tasks. Amazon Titan Text models excel at general-purpose tasks with strong cost-effectiveness, making them perfect for content generation, summarization, and question-answering. These models handle multiple languages well and integrate seamlessly with other AWS services.

Anthropic’s Claude models stand out for their safety-first approach and nuanced understanding of context. Claude 3 Sonnet offers balanced performance across creative writing, analysis, and coding tasks, while Claude 3 Haiku delivers lightning-fast responses for high-volume applications. The Claude family particularly shines when you need detailed reasoning or want to minimize harmful outputs.

AI21 Labs’ Jurassic models bring exceptional multilingual capabilities and excel at tasks requiring deep comprehension. Their strong performance in languages beyond English makes them valuable for global applications. Cohere’s Command models focus on business use cases, offering reliable performance for customer service, content creation, and data analysis tasks.

| Model Family | Best For | Key Strengths | Typical Use Cases |
|---|---|---|---|
| Amazon Titan | General purpose | Cost-effective, AWS integration | Content generation, Q&A |
| Anthropic Claude | Safety-critical apps | Context awareness, reasoning | Analysis, coding assistance |
| AI21 Jurassic | Multilingual tasks | Language diversity | Global content, translation |
| Cohere Command | Business applications | Enterprise features | Customer service, analytics |

Evaluating Image and Multimodal Model Options

AWS Bedrock’s multimodal capabilities open doors to sophisticated applications that process both text and visual content. Amazon Titan Multimodal Embeddings transform images and text into numerical representations, enabling powerful search and recommendation systems. These embeddings help you build applications that understand the relationship between visual and textual content.

Anthropic’s Claude 3 models (such as Sonnet and Haiku) process images alongside text, which makes them well suited to document analysis, visual question-answering, and content moderation. You send images as part of the request body and ask Claude to describe, analyze, or answer questions about them. The InvokeModel Messages format expects base64-encoded images, while the Converse API accepts raw bytes. This capability is especially useful for processing charts, diagrams, screenshots, and real-world photos.
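Here is a minimal sketch of an image question, assuming the Converse API. The helper below (hypothetical, not part of any SDK) builds a messages list that pairs raw image bytes with a text question. The commented usage lines show how you would pass it to converse; running them requires AWS credentials and access to a Claude 3 model.

```python
SUPPORTED_FORMATS = {"png", "jpeg", "gif", "webp"}  # image formats the Converse API accepts

def build_image_question(image_bytes: bytes, question: str, image_format: str = "png") -> list[dict]:
    """Build a Converse `messages` list with one image block followed by one text block."""
    if image_format not in SUPPORTED_FORMATS:
        raise ValueError(f"unsupported image format: {image_format}")
    return [
        {
            "role": "user",
            "content": [
                {"image": {"format": image_format, "source": {"bytes": image_bytes}}},
                {"text": question},
            ],
        }
    ]

# Usage (requires AWS credentials and model access):
# import boto3
# client = boto3.client("bedrock-runtime", region_name="us-east-1")
# with open("chart.png", "rb") as f:
#     messages = build_image_question(f.read(), "What trend does this chart show?")
# reply = client.converse(modelId="anthropic.claude-3-sonnet-20240229-v1:0", messages=messages)
# print(reply["output"]["message"]["content"][0]["text"])
```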

Stability AI’s models focus on image generation and editing tasks. These foundation models create high-quality images from text descriptions and modify existing images based on prompts. The models handle various artistic styles and can generate everything from photorealistic images to abstract art.

When choosing multimodal models, consider your specific requirements:

- Image analysis: Claude 3 models for understanding and describing images

- Visual search: Titan Multimodal Embeddings for similarity matching

- Content generation: Stability AI models for creating visual content

- Document processing: Claude models for extracting information from visual documents

Understanding Model Licensing and Usage Terms

Each Bedrock foundation model comes with specific licensing agreements that affect how you can use them in production. Amazon Titan models operate under AWS’s standard service terms, giving you broad commercial usage rights without additional licensing fees beyond the standard inference costs.

Third-party models like Claude, Jurassic, and Cohere maintain their own licensing frameworks. These agreements typically allow commercial use but may include restrictions on certain applications or require attribution. Most providers prohibit using their models to generate illegal content, create deepfakes, or engage in harmful activities.

Key licensing considerations include:

- Commercial usage rights: Most models allow commercial applications

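- Data handling: AWS states that Bedrock doesn’t store your prompts and outputs or share them with model providers. Check what any features you enable, such as invocation logging, retain.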

- Geographic restrictions: Certain models may have regional availability limits

- Volume limitations: Enterprise usage might require direct partnerships

- Compliance requirements: Industry-specific regulations may apply

Always review the current licensing terms before deploying models in production. AWS provides documentation links to each provider’s terms of service, and these agreements can evolve as providers update their policies.

Selecting the Right Model for Specific Use Cases

Choosing the optimal Bedrock foundation model requires matching your application requirements with each model’s strengths. Start by defining your primary use case, performance requirements, and budget constraints.

For content creation and marketing, consider Titan Text for cost-effective blog posts and product descriptions, or Claude 3 Sonnet when you need creative, engaging copy with brand voice consistency. Marketing teams often prefer Claude’s ability to maintain tone and style across different content types.

Customer service applications benefit from the safety features and context understanding of Claude models, which tend to handle sensitive customer interactions well. Cohere Command also performs well in customer-facing scenarios because of its business-focused training.

Technical documentation and coding assistance work best with Claude 3 Sonnet, which understands programming concepts and can explain complex technical topics clearly. The model’s reasoning capabilities help it provide accurate code examples and troubleshooting guidance.

Multilingual applications can leverage AI21 Jurassic models for their strong performance across different languages. Test them on your own non-English content, because output quality and the handling of cultural nuance vary by language and model.

High-volume, cost-sensitive applications often choose Titan models or Claude 3 Haiku for their favorable pricing and fast response times. These options work well for chatbots, automated content generation, and real-time applications where speed matters more than premium features.

Consider running parallel tests with multiple models using your actual use cases. AWS Bedrock’s pay-per-use pricing makes it affordable to experiment with different options before committing to a primary model for production deployment.
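One way to run this comparison is to send the same prompt to each candidate through the Converse API, which uses one request format for every supported model. The compare_models function below is a hypothetical helper. For each model it records the text, token usage, and reported latency so you can weigh quality against cost.

```python
def compare_models(client, model_ids: list[str], prompt: str, max_tokens: int = 300) -> list[dict]:
    """Send one prompt to several models via the Converse API and collect comparable results."""
    results = []
    for model_id in model_ids:
        response = client.converse(
            modelId=model_id,
            messages=[{"role": "user", "content": [{"text": prompt}]}],
            inferenceConfig={"maxTokens": max_tokens, "temperature": 0.2},
        )
        results.append({
            "model_id": model_id,
            "text": response["output"]["message"]["content"][0]["text"],
            "input_tokens": response["usage"]["inputTokens"],
            "output_tokens": response["usage"]["outputTokens"],
            # Converse responses include a metrics block; tolerate its absence.
            "latency_ms": response.get("metrics", {}).get("latencyMs"),
        })
    return results

# Usage (requires AWS credentials and model access):
# import boto3
# client = boto3.client("bedrock-runtime", region_name="us-east-1")
# for row in compare_models(client, ["amazon.titan-text-express-v1",
#                                    "anthropic.claude-3-haiku-20240307-v1:0"],
#                           "Summarize our refund policy in two sentences."):
#     print(row["model_id"], row["output_tokens"], row["latency_ms"])
```

A low temperature keeps the outputs comparable between runs. For a real evaluation, repeat each prompt several times and use prompts drawn from your own traffic.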

Setting Up Your First Bedrock Inference Environment

Configuring IAM permissions and access policies

Getting your IAM permissions right is the foundation of working with AWS Bedrock inference. You’ll need to create specific policies that grant access to the Bedrock service and the foundation models you plan to use.

Start by creating an IAM role or user with the AmazonBedrockFullAccess managed policy for development environments. For production, create a custom policy with more restrictive permissions:

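```json
{
  "Version": "2012-10-17",
  "Statement": [
    {
      "Effect": "Allow",
      "Action": [
        "bedrock:InvokeModel",
        "bedrock:InvokeModelWithResponseStream"
      ],
      "Resource": "arn:aws:bedrock:*::foundation-model/*"
    },
    {
      "Effect": "Allow",
      "Action": "bedrock:ListFoundationModels",
      "Resource": "*"
    }
  ]
}
```

ListFoundationModels is a list action without resource-level permissions, so it sits in its own statement with "Resource": "*". The streaming action is included for the streaming examples later in this guide.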

The resource ARN pattern allows you to specify which models your application can access. Replace the wildcard with specific model IDs like anthropic.claude-v2 if you want tighter control.
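If you manage policies in code, a small helper can generate the tighter, per-model variant. This is a sketch: scoped_invoke_policy is a hypothetical name, and the ARN format follows the foundation-model pattern shown in the policy above.

```python
import json

def scoped_invoke_policy(region: str, model_ids: list[str]) -> dict:
    """Build an identity-based IAM policy that allows invoking only the listed foundation models."""
    resources = [f"arn:aws:bedrock:{region}::foundation-model/{model_id}" for model_id in model_ids]
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": ["bedrock:InvokeModel", "bedrock:InvokeModelWithResponseStream"],
                "Resource": resources,
            }
        ],
    }

print(json.dumps(scoped_invoke_policy("us-east-1", ["anthropic.claude-v2"]), indent=2))
```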

Don’t forget about model access permissions. Each foundation model requires explicit access approval through the AWS console. Navigate to the Bedrock console, select “Model access” and request access to the models you need. This approval process can take several minutes to hours depending on the model.

Establishing API connections and authentication

AWS Bedrock inference uses the standard AWS SDK authentication methods. The most common approaches include AWS credentials files, environment variables, or IAM roles for EC2 instances.

For local development, configure your AWS credentials using the AWS CLI:

```bash
aws configure
```

This creates credential files that the SDK automatically picks up. For programmatic access, you can use environment variables:

```bash
export AWS_ACCESS_KEY_ID=your_access_key
export AWS_SECRET_ACCESS_KEY=your_secret_key
export AWS_DEFAULT_REGION=us-east-1
```

When working with AWS SDKs, initialize the Bedrock client with your preferred region:

```python
import boto3

bedrock_client = boto3.client(
    'bedrock-runtime',
    region_name='us-east-1'
)
```

The bedrock-runtime service handles inference requests, while the bedrock service manages model information and configurations. Most inference operations use the runtime client.

For production applications, use IAM roles attached to your compute resources rather than hardcoded credentials. This approach provides better security and automatic credential rotation.

Understanding regional availability and model access

AWS Bedrock isn’t available in every AWS region, and the set of models varies by region. Supported regions include US East (N. Virginia), US West (Oregon), and Europe (Frankfurt), and the list keeps growing. The table below is a snapshot rather than a complete list.

| Region | Available Models | Notes |
|---|---|---|
| us-east-1 | Most complete selection | Primary region for new models |
| us-west-2 | Most models available | Good alternative to us-east-1 |
| eu-central-1 | Limited selection | Expanding gradually |

Check the AWS Bedrock documentation for current regional availability, as this changes frequently. Some models like Claude or Llama might be available in one region but not another.
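You can also check availability programmatically. The sketch below assumes the control-plane bedrock client's list_foundation_models call, whose response includes a modelSummaries list. streaming_text_models is a hypothetical filter. This call lists the models offered in a region; it does not tell you whether your account has been granted access to them.

```python
def streaming_text_models(summaries: list[dict]) -> list[str]:
    """Filter ListFoundationModels summaries to text-output models that support streaming."""
    return sorted(
        s["modelId"]
        for s in summaries
        if "TEXT" in s.get("outputModalities", []) and s.get("responseStreamingSupported", False)
    )

def fetch_model_summaries(region: str) -> list[dict]:
    """Query the control-plane `bedrock` client (not `bedrock-runtime`) for one region."""
    import boto3  # deferred so the filter above can be tested offline
    client = boto3.client("bedrock", region_name=region)
    return client.list_foundation_models()["modelSummaries"]

# Usage (requires AWS credentials):
# for region in ["us-east-1", "us-west-2", "eu-central-1"]:
#     print(region, streaming_text_models(fetch_model_summaries(region)))
```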

Your account’s service quotas also matter. Default quotas, such as requests and tokens per minute for each model, can differ between accounts, and newer accounts may start lower. You can view these quotas and request increases in the Service Quotas console.

Choose your region based on three factors: model availability, latency requirements, and data residency needs. If you’re building a global application, consider using multiple regions with region-specific model deployments.

Testing connectivity with sample inference requests

Once your permissions and authentication are configured, test your setup with a simple inference request. Start with a basic text generation model to verify everything works:

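```python
import json

import boto3

# Runtime client: handles inference calls such as invoke_model.
client = boto3.client('bedrock-runtime', region_name='us-east-1')

prompt = "Explain quantum computing in simple terms."

# Claude v2 uses the legacy Text Completions format, which requires
# the "\n\nHuman: ... \n\nAssistant:" turn markers.
body = json.dumps({
    "prompt": f"\n\nHuman: {prompt}\n\nAssistant:",
    "max_tokens_to_sample": 300,
    "temperature": 0.7
})

response = client.invoke_model(
    body=body,
    modelId='anthropic.claude-v2',
    contentType='application/json',
    accept='application/json'
)

# The body is a streaming object; read it once and parse the JSON.
response_body = json.loads(response['body'].read())
print(response_body['completion'])
```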

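This example uses Claude v2. To try another model, change the model ID and also adapt the request body and the response parsing, because each provider defines its own format. Check the documentation for each foundation model, or use the Converse API, which offers one model-agnostic format for chat-style requests.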
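The sketch below shows how the formats differ, with per-provider request builders and response parsers for InvokeModel. The body shapes follow the Anthropic Messages, Anthropic Text Completions, and Amazon Titan Text formats. build_body and extract_text are hypothetical helpers. Routing on the model ID prefix is a simplification; in practice you would replace it with an explicit mapping.

```python
import json

# Older Claude models use Text Completions; newer Anthropic models use Messages.
LEGACY_CLAUDE_PREFIXES = ("anthropic.claude-v2", "anthropic.claude-instant")

def build_body(model_id: str, prompt: str, max_tokens: int = 300, temperature: float = 0.7) -> str:
    """Return an InvokeModel request body in the provider's native JSON format."""
    if model_id.startswith(LEGACY_CLAUDE_PREFIXES):
        payload = {
            "prompt": f"\n\nHuman: {prompt}\n\nAssistant:",
            "max_tokens_to_sample": max_tokens,
            "temperature": temperature,
        }
    elif model_id.startswith("anthropic."):
        payload = {
            "anthropic_version": "bedrock-2023-05-31",
            "max_tokens": max_tokens,
            "temperature": temperature,
            "messages": [{"role": "user", "content": [{"type": "text", "text": prompt}]}],
        }
    elif model_id.startswith("amazon.titan-text"):
        payload = {
            "inputText": prompt,
            "textGenerationConfig": {"maxTokenCount": max_tokens, "temperature": temperature},
        }
    else:
        raise ValueError(f"no request template for {model_id}")
    return json.dumps(payload)

def extract_text(model_id: str, response_body: dict) -> str:
    """Pull the generated text out of a parsed InvokeModel response body."""
    if model_id.startswith(LEGACY_CLAUDE_PREFIXES):
        return response_body["completion"]
    if model_id.startswith("anthropic."):
        return "".join(b["text"] for b in response_body["content"] if b["type"] == "text")
    if model_id.startswith("amazon.titan-text"):
        return response_body["results"][0]["outputText"]
    raise ValueError(f"no response parser for {model_id}")

# Usage (requires AWS credentials and model access):
# model_id = "amazon.titan-text-express-v1"
# response = client.invoke_model(modelId=model_id, body=build_body(model_id, "Hello"))
# print(extract_text(model_id, json.loads(response["body"].read())))
```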

Test different scenarios like error handling, timeout situations, and rate limiting. AWS Bedrock has built-in throttling mechanisms that might affect your application under high load.
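Throttling calls for retries with backoff. Below is a minimal sketch of exponential backoff with full jitter. botocore can already retry for you, for example when you pass botocore.config.Config(retries={"mode": "adaptive", "max_attempts": 10}) to boto3.client, so treat this hand-rolled version as an illustration of the technique. call_with_backoff and the set of retryable error codes are assumptions you should adjust.

```python
import random
import time

# Error codes treated as transient (an assumption; adjust for your workload).
RETRYABLE_CODES = {"ThrottlingException", "ServiceUnavailableException", "ModelNotReadyException"}

def is_retryable_bedrock_error(exc: Exception) -> bool:
    """True for botocore ClientError-style exceptions whose error code is in RETRYABLE_CODES."""
    response = getattr(exc, "response", None) or {}
    return response.get("Error", {}).get("Code") in RETRYABLE_CODES

def call_with_backoff(fn, is_retryable, max_attempts=5, base_delay=0.5, max_delay=8.0, sleep=time.sleep):
    """Call fn(); on a retryable error, sleep a random time up to an exponentially growing cap, then retry."""
    for attempt in range(1, max_attempts + 1):
        try:
            return fn()
        except Exception as exc:
            if attempt == max_attempts or not is_retryable(exc):
                raise
            cap = min(max_delay, base_delay * 2 ** (attempt - 1))
            sleep(random.uniform(0, cap))  # full jitter spreads retries out across clients

# Usage (requires AWS credentials):
# import boto3
# client = boto3.client("bedrock-runtime", region_name="us-east-1")
# response = call_with_backoff(
#     lambda: client.invoke_model(modelId="anthropic.claude-v2", body=body),
#     is_retryable_bedrock_error,
# )
```

The sleep function is injectable so you can test the retry path without waiting. Validation errors, such as a malformed request body, are not retried because another attempt would fail the same way.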

Monitor your test requests in CloudWatch to understand latency patterns and costs. Each model has different pricing structures, and testing helps you estimate production costs before deploying your application.

Inference API Operations and Request Structures

Crafting Effective Prompt Structures for Optimal Results

The quality of your AWS Bedrock inference results directly depends on how well you structure your prompts. Think of prompts as detailed instructions you’d give to a skilled assistant – the clearer and more specific you are, the better the output.

Start with context setting. Foundation models perform significantly better when they understand the scenario. For example, instead of asking “Write an email,” try “Write a professional follow-up email to a potential client who attended yesterday’s product demo but hasn’t responded to our initial proposal.” This approach gives the model essential background information.

Use role-based prompting to establish the perspective you want. Phrases like “As a senior data analyst…” or “Acting as a customer service representative…” help the model adopt the appropriate tone and expertise level for your specific use case.

Structure complex requests using clear separators and numbered steps. When working with Claude or Llama models through Bedrock, organize multi-part requests like this:

```text
Task: Create a marketing strategy
Context: SaaS startup targeting small businesses
Requirements:
1. Target audience analysis
2. Three main messaging pillars
3. Recommended channels
Format: Executive summary with bullet points
```

Include examples when possible, especially for specific formatting requirements. Few-shot prompting, which means providing 2-3 examples of the desired output, usually improves consistency across AWS Bedrock inference calls.
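With chat-style APIs such as Converse, you can supply few-shot examples as earlier conversation turns instead of pasting them into one long prompt. The helper below is a hypothetical sketch of that approach.

```python
def few_shot_messages(examples: list[tuple[str, str]], query: str) -> list[dict]:
    """Turn (input, ideal_output) pairs into alternating user/assistant turns, ending with the real query."""
    messages = []
    for example_input, example_output in examples:
        messages.append({"role": "user", "content": [{"text": example_input}]})
        messages.append({"role": "assistant", "content": [{"text": example_output}]})
    messages.append({"role": "user", "content": [{"text": query}]})
    return messages

examples = [("Great product, fast delivery!", "positive"), ("Arrived broken.", "negative")]
messages = few_shot_messages(examples, "Works as described.")
# Usage (requires AWS credentials): client.converse(modelId=..., messages=messages)
```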

Configuring Inference Parameters and Settings

AWS Bedrock inference parameters act as fine-tuning controls that shape how foundation models generate responses. These settings can transform a generic output into precisely what you need for your application.

Temperature controls randomness in responses. Values between 0.1-0.3 work best for factual, consistent outputs like documentation or data analysis. For creative writing or brainstorming, increase temperature to 0.7-0.9. Many production applications settle around 0.4-0.6 as a balance between creativity and reliability, but tune the value against your own outputs.

Top-p (nucleus sampling) determines the diversity of word choices during generation. Setting top-p to 0.8-0.9 maintains quality while allowing some variation. Lower values (0.6-0.7) create more focused, predictable responses.

Max tokens directly impacts response length and your costs. Calculate your needs carefully – a typical paragraph contains 50-100 tokens, while a detailed article might require 1,000-2,000 tokens. Set limits slightly above your expected output to avoid truncated responses.

Stop sequences prevent models from continuing past your intended endpoint. Common stop sequences include specific punctuation marks, custom delimiters like “---”, or phrases like “End of response.” This parameter proves especially valuable for structured outputs or when generating multiple items in sequence.

Here’s a practical configuration example for different use cases:

| Use Case | Temperature | Top-p | Max Tokens | Stop Sequences |
|---|---|---|---|---|
| Code Generation | 0.2 | 0.8 | 500 | ["```", "//END"] |
| Creative Writing | 0.8 | 0.9 | 1500 | ["THE END"] |
| Data Analysis | 0.1 | 0.7 | 800 | ["---", "Summary:"] |
| Customer Service | 0.4 | 0.8 | 300 | ["Thanks", "Best regards"] |
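If you call models through the Converse API, each row of the table above maps directly onto its inferenceConfig parameter (temperature, topP, maxTokens, stopSequences). The preset names and the inference_config helper are hypothetical. The values are copied from the table as starting points, not tuned settings.

```python
import copy

# Converse API inferenceConfig fields, one preset per table row.
PRESETS = {
    "code_generation": {"temperature": 0.2, "topP": 0.8, "maxTokens": 500, "stopSequences": ["```", "//END"]},
    "creative_writing": {"temperature": 0.8, "topP": 0.9, "maxTokens": 1500, "stopSequences": ["THE END"]},
    "data_analysis": {"temperature": 0.1, "topP": 0.7, "maxTokens": 800, "stopSequences": ["---", "Summary:"]},
    "customer_service": {"temperature": 0.4, "topP": 0.8, "maxTokens": 300, "stopSequences": ["Thanks", "Best regards"]},
}

def inference_config(use_case: str, **overrides) -> dict:
    """Return a deep copy of a preset with keyword overrides applied, leaving PRESETS untouched."""
    if use_case not in PRESETS:
        raise KeyError(f"unknown use case: {use_case}")
    config = copy.deepcopy(PRESETS[use_case])
    config.update(overrides)
    return config

# Usage (requires AWS credentials):
# client.converse(modelId=..., messages=..., inferenceConfig=inference_config("customer_service", maxTokens=200))
```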

Understanding Token Limits and Pricing Implications

Token management directly affects both your AWS Bedrock costs and application performance. Each foundation model has specific token limits that include both input prompts and generated responses combined.

Input tokens include your prompt, conversation history, and any system messages. Output tokens represent the model’s generated response. Most Bedrock text models charge separately for input and output tokens, and output tokens usually cost more. In the pricing table below, output rates range from roughly 1.3 to 4.2 times the input rate.

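Model-specific limits vary significantly across AWS Bedrock’s foundation model selection. These limits are the context window, which covers input and output combined. The approximate figures below for the models discussed here change as providers release new versions:

- Claude models: 100K tokens (Claude Instant, Claude v2) to 200K tokens (Claude 2.1 and the Claude 3 family)

- Llama models: 4K tokens (Llama 2) to 8K tokens (Llama 3), with larger windows in later Llama releases

- Cohere Command: 4K tokens

- AI21 Jurassic: 8K tokens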

Calculate costs before deployment using these approximate on-demand rates per 1 million tokens. Prices change, so check the current Bedrock pricing page:

| Model Family | Input Token Cost | Output Token Cost |
|---|---|---|
| Claude Instant | $0.80 | $2.40 |
| Claude v2 | $8.00 | $24.00 |
| Llama 2 70B | $0.65 | $2.75 |
| Command | $1.50 | $2.00 |
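The arithmetic is simple: cost = input tokens / 1,000,000 × input rate + output tokens / 1,000,000 × output rate. The sketch below treats the table's figures as USD per million tokens and uses them for illustration only. The model keys and helper names are hypothetical.

```python
# Illustrative USD rates per 1 million tokens (input, output), from the table; check current pricing.
RATES_PER_MILLION = {
    "claude-instant": (0.80, 2.40),
    "claude-v2": (8.00, 24.00),
    "llama2-70b": (0.65, 2.75),
    "command": (1.50, 2.00),
}

def request_cost(model: str, input_tokens: int, output_tokens: int) -> float:
    """Estimated USD cost of one request: tokens x rate / 1M, summed over input and output."""
    input_rate, output_rate = RATES_PER_MILLION[model]
    return (input_tokens * input_rate + output_tokens * output_rate) / 1_000_000

def monthly_cost(model: str, requests_per_day: int, avg_in: int, avg_out: int, days: int = 30) -> float:
    """Scale the per-request estimate to a month of steady traffic."""
    return request_cost(model, avg_in, avg_out) * requests_per_day * days

print(f"{request_cost('claude-v2', 2_000, 500):.4f}")  # 0.0280
```

For example, 2,000 input and 500 output tokens on Claude v2 cost 2,000 × $8 / 1M + 500 × $24 / 1M = $0.016 + $0.012 = $0.028 per request.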

Optimize token usage through prompt engineering. Remove unnecessary words, use abbreviations where appropriate, and avoid repetitive context in multi-turn conversations. Implement conversation summarization for long-running chat applications to prevent hitting context limits.

Monitor token consumption using Amazon CloudWatch metrics such as InputTokenCount and OutputTokenCount. Set up alerts when token usage exceeds expected thresholds so you can catch runaway costs from poorly optimized prompts or unexpected usage patterns.

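For production applications, estimate token counts before API calls so that requests stay within the model’s context window and your cost budget. The AWS SDKs don’t include a general-purpose tokenizer. Use a provider’s tokenizer where one is available, or a rough heuristic, and calibrate it against the actual counts Bedrock reports (for example, the usage field in Converse API responses).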
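A rough pre-flight check can be as simple as the sketch below. It assumes the common rule of thumb of about four characters per token for English text, which varies by model and language. Treat estimate_tokens and fits_context (both hypothetical) as a guard rail rather than an exact count.

```python
def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    """Approximate token count from character length; not a real tokenizer."""
    if not text:
        return 0
    return max(1, round(len(text) / chars_per_token))

def fits_context(prompt: str, max_output_tokens: int, context_window: int, safety_margin: float = 0.9) -> bool:
    """Check that estimated prompt tokens plus the output budget stay under a fraction of the window."""
    return estimate_tokens(prompt) + max_output_tokens <= context_window * safety_margin
```

The safety margin absorbs estimation error. Tighten it once you have compared estimates with the token counts Bedrock actually reports for your prompts.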

Advanced Inference Techniques and Optimization Strategies

Implementing streaming responses for real-time applications

When building conversational AI applications or real-time content generation systems with AWS Bedrock, streaming responses transform the user experience from waiting for complete outputs to watching content appear dynamically. Instead of blocking users while the foundation model generates lengthy responses, streaming delivers tokens as they’re produced.

The Bedrock runtime supports streaming through the InvokeModelWithResponseStream operation (invoke_model_with_response_stream in boto3). Instead of a single JSON body, the response is an event stream delivered over the same HTTP connection. Each event carries a chunk of generated output, so you can display partial results immediately. This approach works particularly well for chatbots, code generation tools, and creative writing applications where users benefit from seeing progress.

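```python
import json

import boto3

bedrock_runtime = boto3.client('bedrock-runtime', region_name='us-east-1')

response = bedrock_runtime.invoke_model_with_response_stream(
    modelId='anthropic.claude-v2',
    body=json.dumps({
        # Claude v2 requires the Human/Assistant turn markers.
        "prompt": "\n\nHuman: Write a story about space exploration\n\nAssistant:",
        "max_tokens_to_sample": 1000
    })
)

# response['body'] is an event stream; each 'chunk' event holds a JSON fragment.
for event in response['body']:
    if 'chunk' in event:
        chunk = json.loads(event['chunk']['bytes'])
        print(chunk['completion'], end='', flush=True)
```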

Managing streaming connections requires careful error handling and timeout strategies. Network interruptions can break a stream partway through, and Bedrock can’t resume an interrupted stream. Keep the partial output you’ve already received, then decide whether to retry the full request or show the partial result with an error message.

Batch processing for high-volume inference tasks

Large-scale inference workloads benefit from batch processing strategies that maximize throughput while minimizing costs. Optimization matters most when you process thousands of documents, analyze customer feedback at scale, or generate content for multiple campaigns simultaneously. For jobs that don’t need immediate results, Bedrock also offers batch inference, which reads JSONL input records from S3 and writes the results back to S3 asynchronously.

Design batch workflows that group similar requests together. Each Bedrock call is processed independently, so grouping doesn’t make the model itself faster, but it makes your pipeline easier to tune. Requests in a batch can share a prompt template and parameter settings, have more predictable latency, and can be throttled or prioritized together. Create batches based on content length, model requirements, or processing priority.

| Batch Strategy | Use Case | Benefits |
|---|---|---|
| Size-based batching | Mixed content lengths | Predictable processing times |
| Content-type batching | Documents, emails, code | Shared prompt templates and parameters |
| Priority batching | Time-sensitive vs. background tasks | Resource allocation control |
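Size-based batching can be sketched as a greedy packer: sort inputs by length and start a new batch whenever the next item would push the current batch past a character budget. size_based_batches is a hypothetical helper. Each resulting batch could become, for example, one SQS message or one item in a Step Functions Map state.

```python
def size_based_batches(items: list[str], max_chars_per_batch: int) -> list[list[str]]:
    """Greedily pack items, shortest first, into batches whose total length stays within a budget.

    An item longer than the budget is placed in a batch of its own.
    """
    batches: list[list[str]] = []
    current: list[str] = []
    current_size = 0
    for item in sorted(items, key=len):
        if current and current_size + len(item) > max_chars_per_batch:
            batches.append(current)
            current, current_size = [], 0
        current.append(item)
        current_size += len(item)
    if current:
        batches.append(current)
    return batches
```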

Implement queue management systems using Amazon SQS or AWS Step Functions to orchestrate batch processing workflows. These services handle retry logic, error management, and scaling automatically, allowing your application to focus on business logic rather than infrastructure concerns.

Monitor batch performance through CloudWatch metrics to identify bottlenecks and optimization opportunities. Track metrics like requests per second, token throughput, and error rates to fine-tune your batching strategy over time.

Tuning inference parameters for improved model performance

Model parameters significantly affect both response quality and computational cost in AWS Bedrock inference. Temperature controls creativity and randomness. Lower values produce focused, more deterministic outputs, while higher values encourage creative exploration. Start with a moderate setting, somewhere in the 0.4-0.7 range, and adjust it for your specific use case.

Token limits directly affect both cost and response completeness. Setting appropriate max_tokens_to_sample values prevents runaway generation costs while ensuring responses contain sufficient detail. Monitor actual token usage patterns to establish baseline requirements for different content types.

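Top-p and top-k sampling parameters provide additional control over output diversity. Top-p (nucleus sampling) maintains quality by considering only the most probable tokens whose cumulative probability reaches the specified threshold. Top-k limits consideration to the k most likely tokens, which gives more predictable but potentially less creative results. Not every model accepts both; Amazon Titan Text, for example, exposes topP but not top-k. The example below uses the parameter names from Claude v2’s Text Completions format: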

```json
{
  "temperature": 0.7,
  "max_tokens_to_sample": 500,
  "top_p": 0.9,
  "top_k": 50,
  "stop_sequences": ["\n\n", "Human:"]
}
```

Experiment with stop sequences to control response boundaries and prevent models from generating unwanted continuations. Custom stop sequences work particularly well for structured outputs like JSON, code blocks, or conversational formats.

Managing costs through efficient request patterns

Cost optimization in AWS Bedrock requires understanding the relationship between model selection, request patterns, and token consumption. Foundation models have varying cost structures. On-demand text models such as Claude are billed per input and output token, image generation models are typically billed per image, and Provisioned Throughput is billed by the hour for reserved capacity.

Implement request caching for frequently asked questions or common content generation tasks. Cache responses at the application level or use Amazon ElastiCache to avoid redundant API calls. This strategy works well for FAQ systems, product descriptions, and template-based content generation. It works best with a low temperature, so that a cached answer is as good as a fresh one.
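Below is a minimal in-process sketch of request caching. It keys responses on a SHA-256 hash of the model ID plus a canonical JSON encoding of the request body, so identical requests are served from memory. ResponseCache and the injected invoke callable are hypothetical. A production cache would add a TTL and eviction, and would typically live in ElastiCache rather than in process memory.

```python
import hashlib
import json

class ResponseCache:
    """In-process cache of model responses keyed on (model ID, request body)."""

    def __init__(self, invoke):
        self._invoke = invoke  # hypothetical callable: (model_id, body_dict) -> response text
        self._store: dict[str, str] = {}
        self.hits = 0
        self.misses = 0

    @staticmethod
    def _key(model_id: str, body: dict) -> str:
        # sort_keys makes logically identical bodies produce the same key.
        canonical = json.dumps({"model": model_id, "body": body}, sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()

    def get(self, model_id: str, body: dict) -> str:
        key = self._key(model_id, body)
        if key in self._store:
            self.hits += 1
        else:
            self.misses += 1
            self._store[key] = self._invoke(model_id, body)
        return self._store[key]
```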

Optimize prompt engineering to reduce token consumption without sacrificing quality. Concise, well-structured prompts often produce better results than verbose instructions while consuming fewer input tokens. Test different prompt formulations to find the sweet spot between clarity and brevity.

Consider request consolidation for related tasks. Instead of making separate API calls for similar content pieces, combine multiple requests into single calls when appropriate. This approach reduces overhead and can improve cost efficiency, especially for batch processing scenarios.

Monitor usage patterns through AWS Cost Explorer and Bedrock-specific metrics to identify optimization opportunities. Track cost per use case, peak usage periods, and model performance to make data-driven decisions about resource allocation and model selection strategies.

Security and Compliance Considerations for Production Use

Implementing data privacy and encryption best practices

Data privacy in AWS Bedrock starts with understanding how the service treats your data. According to AWS, Bedrock doesn’t store or log your prompts and model responses, doesn’t use them to train AWS models, and doesn’t share them with third-party model providers. This keeps you in control of your sensitive information.

Encrypt data at rest with AWS Key Management Service (KMS) keys wherever Bedrock stores data on your behalf, such as custom (fine-tuned) models, agents, and knowledge base resources. You can use AWS-managed keys for basic protection or customer-managed keys for granular access control. Set up separate KMS keys for different environments and data classification levels.

Configure encryption in transit by ensuring all API calls use TLS 1.2 or higher. The Bedrock SDK handles this automatically, but verify your application doesn’t downgrade connection security. When building custom integrations, always validate certificate chains and implement proper SSL/TLS configurations.

Implement data loss prevention by establishing clear data handling policies. Tag your Bedrock resources with data classification labels and create IAM policies that enforce access based on these tags. Never include personally identifiable information (PII) in your prompts without proper anonymization or tokenization.
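To illustrate tokenization before prompting, the sketch below replaces a few obvious PII patterns with numbered placeholders. It keeps a mapping so you can restore the original values in the model’s response. The regular expressions are deliberately simple assumptions and will miss many forms of PII. For real workloads, consider a managed detector such as Amazon Comprehend’s PII detection or the sensitive information filters in Amazon Bedrock Guardrails.

```python
import re

# Deliberately simple patterns (an assumption); SSN runs before PHONE so it is matched first.
PII_PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "PHONE": re.compile(r"(?:\+?\d{1,2}[\s.-]?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}"),
}

def redact(text: str) -> tuple[str, dict[str, str]]:
    """Replace PII matches with numbered placeholders; return the new text and a placeholder map."""
    mapping: dict[str, str] = {}
    for label, pattern in PII_PATTERNS.items():
        def substitute(match: re.Match, label: str = label) -> str:
            placeholder = f"[{label}_{len(mapping) + 1}]"
            mapping[placeholder] = match.group(0)
            return placeholder
        text = pattern.sub(substitute, text)
    return text, mapping

def restore(text: str, mapping: dict[str, str]) -> str:
    """Put the original values back into text, such as a model response."""
    for placeholder, original in mapping.items():
        text = text.replace(placeholder, original)
    return text
```

Only the redacted text is sent to the model. The mapping stays in your application, so the original values never appear in the prompt.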

Consider using Amazon Macie to monitor your S3 buckets if you’re storing model inputs or outputs. Set up automated scanning to detect sensitive data patterns and receive alerts when compliance violations occur.

Understanding compliance requirements and certifications

AWS Bedrock is covered by AWS compliance programs including SOC 1/2/3, PCI DSS, HIPAA eligibility, FedRAMP, and GDPR readiness. Program coverage can differ by region, so confirm the current scope on AWS’s Services in Scope by Compliance Program page. Each foundation model provider may have additional compliance considerations that you need to evaluate based on your specific use case.

Review the AWS Bedrock compliance documentation to understand which certifications apply to your deployment region. Some foundation models might only be available in specific regions due to data residency requirements or regulatory constraints.

Create a compliance checklist that includes data residency verification, audit trail requirements, and retention policies. Document your model selection rationale and maintain records of all configuration changes for compliance audits.

For healthcare applications requiring HIPAA compliance, sign a Business Associate Agreement (BAA) with AWS and configure Bedrock within a HIPAA-eligible environment. Ensure all data flows remain within HIPAA-compliant services and avoid storing protected health information in logs.

Financial services organizations should review PCI DSS requirements if processing payment data. While Bedrock doesn’t directly handle payment information, ensure your application architecture maintains PCI compliance boundaries when integrating AI-generated content with financial workflows.

Monitoring and logging inference activities

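Set up logging with AWS CloudTrail to capture Bedrock API calls, including request metadata, response codes, and error messages. Configure CloudTrail to deliver logs to a dedicated S3 bucket with lifecycle policies that meet your retention requirements. CloudTrail doesn’t record prompt and response content. If you need that content for audits, enable Bedrock model invocation logging, which can deliver request and response data to CloudWatch Logs or S3.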

Use Amazon CloudWatch for near-real-time visibility into your inference operations. Bedrock publishes runtime metrics to the AWS/Bedrock namespace, so you can track request count, latency, error rates, and throttling events without extra instrumentation. Create custom dashboards that display inference patterns and help identify anomalies.
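Here is a minimal sketch of such a dashboard. It assumes the runtime metrics `Invocations`, `InvocationLatency`, `InvocationClientErrors`, `InvocationServerErrors`, and `InvocationThrottles` in the `AWS/Bedrock` namespace, with a `ModelId` dimension. Verify these names in your account's CloudWatch console before relying on them. The function only builds the dashboard JSON, so it runs offline.

```python
import json

# Assumed metric names in the AWS/Bedrock namespace; confirm them in CloudWatch.
BEDROCK_METRICS = [
    "Invocations",
    "InvocationLatency",
    "InvocationClientErrors",
    "InvocationServerErrors",
    "InvocationThrottles",
]


def bedrock_dashboard_body(model_id: str, region: str) -> str:
    """Return a CloudWatch dashboard body (JSON string) with one widget per metric."""
    widgets = []
    for i, metric in enumerate(BEDROCK_METRICS):
        widgets.append({
            "type": "metric",
            "x": (i % 2) * 12,  # two 12-unit-wide widgets per row
            "y": (i // 2) * 6,
            "width": 12,
            "height": 6,
            "properties": {
                "metrics": [["AWS/Bedrock", metric, "ModelId", model_id]],
                # Latency reads best as a percentile; counts read best as sums.
                "stat": "p90" if metric == "InvocationLatency" else "Sum",
                "period": 300,
                "region": region,
                "title": f"{metric} ({model_id})",
            },
        })
    return json.dumps({"widgets": widgets})
```

For example, `bedrock_dashboard_body("anthropic.claude-3-haiku-20240307-v1:0", "us-east-1")` produces a string you can pass as `DashboardBody` to `put_dashboard` on a boto3 CloudWatch client. The model ID is only an example.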

Configure CloudWatch alarms for critical thresholds such as error rate spikes, unusual request volumes, or latency degradation. Set up notifications to your operations team through Amazon SNS when these conditions occur.
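To alarm on an error-rate spike rather than a raw error count, you can use CloudWatch metric math. The sketch below builds keyword arguments for boto3's `put_metric_alarm`, computing `100 * (client errors + server errors) / invocations` over 5-minute periods. The metric names, the 5% threshold, and the 2-of-3 evaluation window are assumptions. So is the SNS topic ARN you pass in.

```python
def error_rate_alarm(model_id: str, sns_topic_arn: str, threshold_pct: float = 5.0) -> dict:
    """Build put_metric_alarm keyword arguments for a Bedrock error-rate alarm."""

    def metric(query_id: str, name: str) -> dict:
        # One input series for the metric-math expression below.
        return {
            "Id": query_id,
            "MetricStat": {
                "Metric": {
                    "Namespace": "AWS/Bedrock",
                    "MetricName": name,
                    "Dimensions": [{"Name": "ModelId", "Value": model_id}],
                },
                "Period": 300,
                "Stat": "Sum",
            },
            "ReturnData": False,  # inputs only; the alarm does not evaluate them directly
        }

    return {
        "AlarmName": f"bedrock-error-rate-{model_id}",
        "Metrics": [
            metric("inv", "Invocations"),
            metric("cerr", "InvocationClientErrors"),
            metric("serr", "InvocationServerErrors"),
            {
                "Id": "error_rate",
                "Expression": "100 * (cerr + serr) / inv",
                "Label": "ErrorRatePercent",
                "ReturnData": True,  # the alarm evaluates this series
            },
        ],
        "ComparisonOperator": "GreaterThanThreshold",
        "Threshold": threshold_pct,
        "EvaluationPeriods": 3,
        "DatapointsToAlarm": 2,  # alarm when 2 of the last 3 periods breach
        "TreatMissingData": "notBreaching",
        "AlarmActions": [sns_topic_arn],  # notify the operations team via SNS
    }
```

You would call it as `cloudwatch.put_metric_alarm(**error_rate_alarm(model_id, topic_arn))`. With `TreatMissingData="notBreaching"`, periods that have no data points do not trip the alarm.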

Use AWS X-Ray for distributed tracing when Bedrock is part of a larger application architecture. This helps you understand the complete request flow and identify performance bottlenecks across your entire stack.

Implement application-level logging that captures business context around your AI inference requests. Log prompt templates, user interactions, and model responses (where appropriate) to support debugging and compliance audits.
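Here is a minimal sketch of application-level logging that keeps business context while scrubbing obvious personal data. It uses a `logging.Formatter` subclass that writes one JSON object per record and redacts email addresses from the message. The context field names (`prompt_template_id`, `model_id`, `user_action`), the logger name, and the single email pattern are hypothetical. Extend the redaction to whatever your data classification requires.

```python
import json
import logging
import re

EMAIL = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")
# Hypothetical business-context fields passed via logging's `extra=` argument.
CONTEXT_FIELDS = ("prompt_template_id", "model_id", "user_action")


class RedactingJsonFormatter(logging.Formatter):
    """Emit one JSON object per record, redacting email addresses in the message."""

    def format(self, record: logging.LogRecord) -> str:
        entry = {
            "level": record.levelname,
            # getMessage() interpolates %-style args first, so those get redacted too.
            "message": EMAIL.sub("[REDACTED_EMAIL]", record.getMessage()),
        }
        for key in CONTEXT_FIELDS:
            if hasattr(record, key):
                entry[key] = getattr(record, key)
        return json.dumps(entry)


def build_logger(stream=None) -> logging.Logger:
    """Create a JSON audit logger; stream=None makes StreamHandler use sys.stderr."""
    logger = logging.getLogger("inference_audit")
    logger.setLevel(logging.INFO)
    logger.propagate = False  # avoid duplicate, unredacted output via the root logger
    if not logger.handlers:
        handler = logging.StreamHandler(stream)
        handler.setFormatter(RedactingJsonFormatter())
        logger.addHandler(handler)
    return logger
```

A call such as `build_logger().info("Summary sent to %s", address, extra={"prompt_template_id": "summarize-v2"})` logs the template ID as a field while the address appears as `[REDACTED_EMAIL]`.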

Consider using Amazon EventBridge to create event-driven monitoring workflows. You can trigger automated responses to specific Bedrock events, such as scaling resources during high usage periods or alerting security teams about unusual access patterns.
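For the security-alerting case, an EventBridge rule can match Bedrock API calls recorded by CloudTrail. This sketch builds an event pattern for an example list of sensitive control-plane operations, such as someone deleting the model invocation logging configuration. The event-name selection is an assumption to adapt to your threat model. These events originate from CloudTrail, so the trail described earlier in this section should be logging.

```python
import json

# Example selection of Bedrock operations that weaken auditing or remove resources.
SENSITIVE_EVENTS = (
    "DeleteModelInvocationLoggingConfiguration",
    "PutModelInvocationLoggingConfiguration",
    "DeleteCustomModel",
    "DeleteProvisionedModelThroughput",
)


def bedrock_audit_event_pattern(event_names=SENSITIVE_EVENTS) -> str:
    """Return an EventBridge event pattern (JSON string) for CloudTrail-recorded Bedrock calls."""
    pattern = {
        "source": ["aws.bedrock"],
        "detail-type": ["AWS API Call via CloudTrail"],
        "detail": {
            "eventSource": ["bedrock.amazonaws.com"],
            "eventName": list(event_names),  # match any of the listed operations
        },
    }
    return json.dumps(pattern)
```

You could register it with `events.put_rule(Name="bedrock-sensitive-changes", EventPattern=bedrock_audit_event_pattern())` on a boto3 EventBridge client, where the rule name is hypothetical. Then add an SNS topic as the target with `put_targets`.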

Setting up access controls for team collaboration

Design a role-based access control strategy that aligns with your organization’s structure and data sensitivity requirements. Create separate IAM roles for different personas such as AI engineers, data scientists, application developers, and operations teams.

Grant least-privilege access by starting with minimal permissions and gradually adding capabilities as needed. Use AWS IAM Access Analyzer to identify unused permissions and regularly audit role assignments to prevent permission creep.

Restrict access to specific foundation models based on team requirements by scoping identity-based IAM policies to individual model ARNs; Bedrock foundation models do not accept resource-based policies. Data science teams might need access to multiple models for experimentation, while production applications should only access approved, tested models.
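Because Bedrock access is granted through identity-based IAM policies, restricting a team to approved models means listing those models' ARNs. Foundation model ARNs take the form `arn:aws:bedrock:<region>::foundation-model/<model-id>`, with an empty account field. The helper name and the two-action list are a sketch. If you call models through cross-Region inference profiles, you would also need to allow the profile ARNs.

```python
def invoke_policy(region: str, model_ids: list[str]) -> dict:
    """Identity-based IAM policy allowing inference on an approved set of models only."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "InvokeApprovedModels",
                "Effect": "Allow",
                # Converse and ConverseStream calls are authorized by these same two actions.
                "Action": [
                    "bedrock:InvokeModel",
                    "bedrock:InvokeModelWithResponseStream",
                ],
                # Foundation model ARNs have no account ID between the double colons.
                "Resource": [
                    f"arn:aws:bedrock:{region}::foundation-model/{model_id}"
                    for model_id in model_ids
                ],
            }
        ],
    }
```

A data science role might be generated with several model IDs, while a production role gets only the model that passed testing.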

Set up cross-account access patterns for organizations with multiple AWS accounts. Use IAM roles for cross-account access rather than sharing credentials, and implement AWS Organizations service control policies to enforce guardrails across all accounts.
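A common guardrail is an SCP that denies Bedrock actions outside approved Regions, which also supports the data residency requirements discussed above. The sketch below builds the policy document. The Region list is an assumption. If you use cross-Region inference, you may need to allow the Regions it routes requests to as well.

```python
def region_guardrail_scp(allowed_regions: list[str]) -> dict:
    """Service control policy denying Bedrock actions outside the approved Regions."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "DenyBedrockOutsideApprovedRegions",
                "Effect": "Deny",
                "Action": "bedrock:*",
                "Resource": "*",
                # Deny whenever the request targets a Region not on the allow list.
                "Condition": {
                    "StringNotEquals": {"aws:RequestedRegion": allowed_regions}
                },
            }
        ],
    }
```

To apply it, you would pass `json.dumps(region_guardrail_scp([...]))` as `Content` to `create_policy(..., Type="SERVICE_CONTROL_POLICY")` on a boto3 Organizations client. Then attach the policy to the relevant organizational units.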

Create development, staging, and production environments with appropriate access controls. Developers should have broader access in development environments but restricted access to production Bedrock resources. Use separate AWS accounts or resource tagging to enforce these boundaries.

Configure session duration limits and require multi-factor authentication for sensitive operations. For administrative activities, implement just-in-time access. Examples include short-lived role sessions granted through an approval process, or a third-party privileged access management tool.
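The MFA requirement can be expressed as an IAM deny statement keyed on `aws:MultiFactorAuthPresent`, and the session limit as a role's `MaxSessionDuration`. The list of sensitive Bedrock actions below is an example selection, and the one-hour cap is an assumption.

```python
# Example administrative Bedrock actions to protect; extend to match your own policy.
SENSITIVE_ACTIONS = (
    "bedrock:PutModelInvocationLoggingConfiguration",
    "bedrock:DeleteModelInvocationLoggingConfiguration",
    "bedrock:DeleteCustomModel",
    "bedrock:DeleteProvisionedModelThroughput",
)


def require_mfa_statement(actions=SENSITIVE_ACTIONS) -> dict:
    """IAM policy statement denying the listed actions unless the session used MFA."""
    return {
        "Sid": "DenySensitiveBedrockActionsWithoutMFA",
        "Effect": "Deny",
        "Action": list(actions),
        "Resource": "*",
        # BoolIfExists also denies requests where the MFA key is absent,
        # such as requests signed with long-term access keys.
        "Condition": {"BoolIfExists": {"aws:MultiFactorAuthPresent": "false"}},
    }


# Session cap for an admin role, passed as iam.create_role(MaxSessionDuration=...).
# IAM accepts values from 3600 to 43200 seconds.
ADMIN_ROLE_MAX_SESSION_SECONDS = 3600
```

Test the deny statement against your federation setup before rolling it out, since some sign-in paths may not set the MFA context key.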

Establish clear approval workflows for production model deployments and configuration changes. Use AWS Service Catalog to standardize Bedrock resource provisioning and ensure all deployments follow your security and compliance requirements.

AWS Bedrock offers a powerful gateway to foundation models that can transform how you approach AI inference tasks. From understanding the core architecture to mastering API operations, you now have the building blocks to create sophisticated AI applications. The platform’s diverse model selection gives you flexibility to choose the right tool for your specific use case, while advanced optimization techniques help you get the most out of your inference calls.

Security and compliance shouldn’t be an afterthought when working with AI systems. Take time to implement proper access controls, monitor your usage patterns, and understand the data handling requirements for your organization. Start small with a simple inference setup, experiment with different models, and gradually scale up as you become more comfortable with the platform. The investment in learning these concepts will pay dividends as AI becomes increasingly central to modern applications.