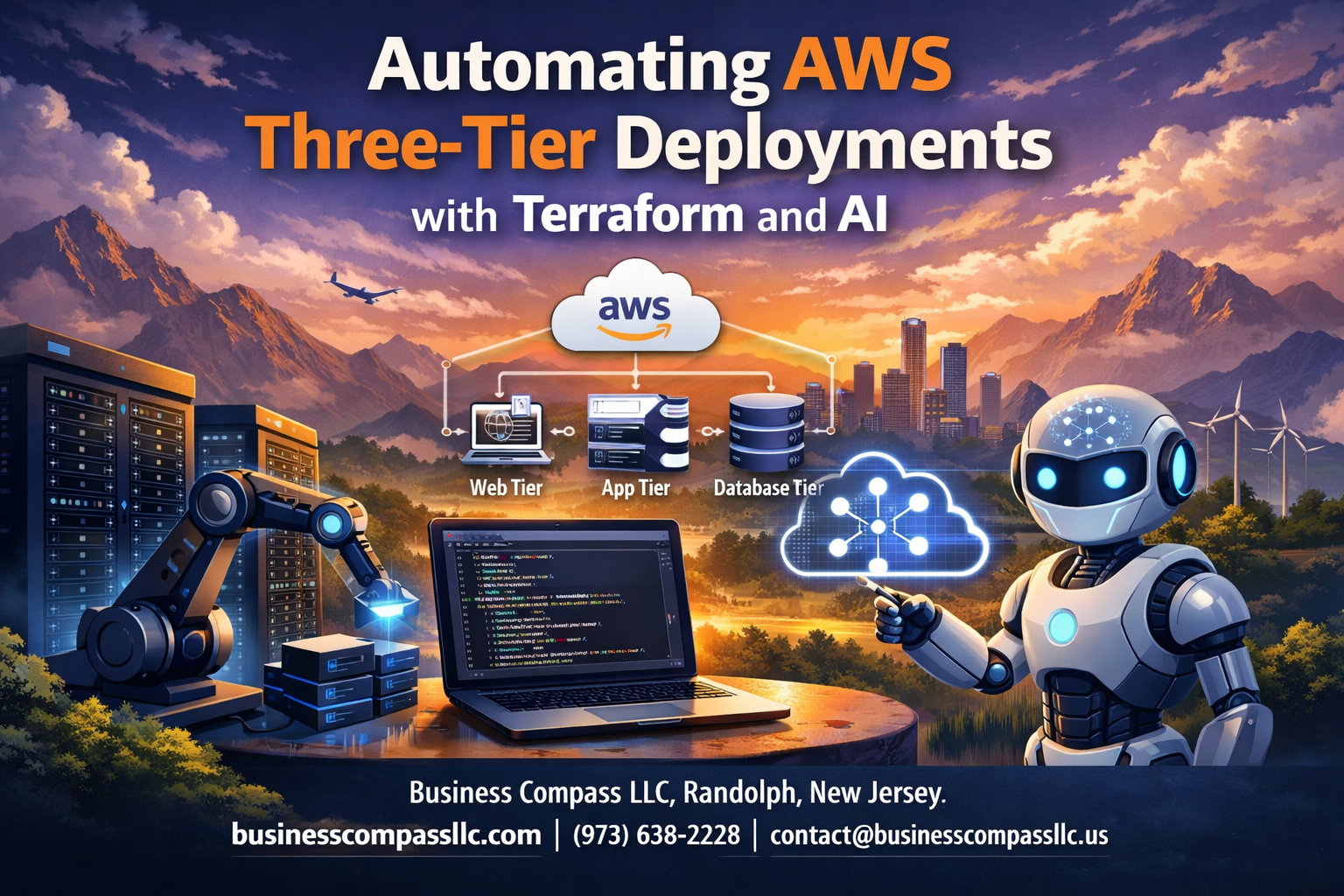

Deploying a three-tier architecture on AWS by hand takes hours and leaves room for costly mistakes. This guide shows DevOps engineers, cloud architects, and infrastructure teams how to automate a multi-tier AWS deployment with Terraform, and where AI-assisted infrastructure optimization fits in.

You’ll see why a three-tier architecture suits scalable applications, from stronger security isolation to better resource management. We’ll walk through the Terraform components needed to build the infrastructure as code, including modules for the presentation, application, and database tiers.

You’ll also learn how AI-powered optimization can help tune your infrastructure for cost and performance, and get a step-by-step automation process you can implement today. Finally, we’ll cover monitoring strategies that keep your automated provisioning running reliably in production.

Understanding Three-Tier Architecture Benefits for AWS Applications

Separating presentation, application, and data layers for scalability

Three-tier architecture changes how AWS applications handle growth by creating distinct boundaries between your web interface, business logic, and database components. Each layer operates independently, so you can scale resources for the tier under load rather than upgrading the entire system. When your application experiences a traffic spike, you can add web servers within minutes without touching your database infrastructure.

Achieving high availability through distributed components

Distributing components across multiple Availability Zones removes most single points of failure while keeping the user experience uninterrupted. Your presentation layer can serve traffic from several zones (or from several regions, at the cost of extra routing and replication work), while application servers process requests in each zone. Database replication, such as a standby in a second zone, keeps data accessible during a zone-level outage.
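As a minimal sketch of the database side of this, the fragment below asks RDS to keep a standby in a second Availability Zone. The variable names, identifiers, engine, and sizes are illustrative assumptions, not values from this guide.

```hcl
# Hypothetical inputs: supply these from your own networking configuration.
variable "db_subnet_ids" {
  description = "Private database subnet IDs in at least two Availability Zones"
  type        = list(string)
}

variable "db_security_group_id" {
  description = "Security group attached to the database tier"
  type        = string
}

resource "aws_db_subnet_group" "data" {
  name       = "three-tier-data"
  subnet_ids = var.db_subnet_ids
}

resource "aws_db_instance" "data" {
  identifier        = "three-tier-db" # illustrative name
  engine            = "postgres"      # assumption: any supported engine works here
  instance_class    = "db.t3.medium"
  allocated_storage = 50
  storage_encrypted = true

  # Keeps a synchronous standby in a second Availability Zone.
  multi_az = true

  db_subnet_group_name   = aws_db_subnet_group.data.name
  vpc_security_group_ids = [var.db_security_group_id]
  publicly_accessible    = false

  username = "app_admin"
  # Let RDS generate the master password and store it in Secrets Manager.
  manage_master_user_password = true

  backup_retention_period   = 7
  skip_final_snapshot       = false
  final_snapshot_identifier = "three-tier-db-final"
}
```

A Multi-AZ standby protects against a zone failure, not against bad writes or accidental deletes, so the backup retention setting still matters.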

Implementing security best practices with isolated network layers

Network isolation creates powerful security boundaries that protect sensitive data and business logic from potential threats. Private subnets house your application and database tiers, while public subnets only expose necessary web components. Security groups act as virtual firewalls, controlling traffic flow between layers and preventing unauthorized access to critical infrastructure components.
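One way to express this layering in Terraform is to chain security groups so that each tier accepts traffic only from the tier in front of it. This is a sketch under assumptions: the group names and the ports (443, 8080, 5432) are placeholders for whatever your application uses.

```hcl
variable "vpc_id" {
  description = "ID of the VPC that hosts all three tiers"
  type        = string
}

resource "aws_security_group" "web" {
  name   = "web-tier"
  vpc_id = var.vpc_id
}

resource "aws_security_group" "app" {
  name   = "app-tier"
  vpc_id = var.vpc_id
}

resource "aws_security_group" "db" {
  name   = "db-tier"
  vpc_id = var.vpc_id
}

# Internet -> web tier (HTTPS only).
resource "aws_vpc_security_group_ingress_rule" "web_https" {
  security_group_id = aws_security_group.web.id
  cidr_ipv4         = "0.0.0.0/0"
  ip_protocol       = "tcp"
  from_port         = 443
  to_port           = 443
}

# Web tier -> app tier. Terraform-managed groups start with no egress
# rules, so the outbound side is declared explicitly as well.
resource "aws_vpc_security_group_egress_rule" "web_to_app" {
  security_group_id            = aws_security_group.web.id
  referenced_security_group_id = aws_security_group.app.id
  ip_protocol                  = "tcp"
  from_port                    = 8080
  to_port                      = 8080
}

resource "aws_vpc_security_group_ingress_rule" "app_from_web" {
  security_group_id            = aws_security_group.app.id
  referenced_security_group_id = aws_security_group.web.id
  ip_protocol                  = "tcp"
  from_port                    = 8080
  to_port                      = 8080
}

# App tier -> database tier.
resource "aws_vpc_security_group_egress_rule" "app_to_db" {
  security_group_id            = aws_security_group.app.id
  referenced_security_group_id = aws_security_group.db.id
  ip_protocol                  = "tcp"
  from_port                    = 5432
  to_port                      = 5432
}

resource "aws_vpc_security_group_ingress_rule" "db_from_app" {
  security_group_id            = aws_security_group.db.id
  referenced_security_group_id = aws_security_group.app.id
  ip_protocol                  = "tcp"
  from_port                    = 5432
  to_port                      = 5432
}
```

Referencing groups instead of CIDR ranges means the rules keep working as instances are replaced. Application servers usually also need some outbound access for package updates or AWS APIs, which this sketch leaves out.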

Reducing operational costs through optimized resource allocation

Matching infrastructure capacity to actual usage in each tier cuts AWS costs. Auto-scaling groups adjust server counts based on real-time demand, while database instances with predictable workloads can use Reserved Instance pricing. Load balancers spread traffic evenly across instances, so each tier can run closer to its target utilization instead of being over-provisioned.

Essential Terraform Components for Multi-Tier Infrastructure

Creating VPC and networking foundations with modules

Building robust networking infrastructure starts with well-designed Terraform modules that define your VPC boundaries and subnet configurations. Your Terraform automation should establish separate subnets across multiple availability zones for each tier – public subnets for load balancers, private subnets for application servers, and isolated database subnets. This modular approach enables consistent multi-tier AWS deployment patterns while maintaining security isolation between components.

Route tables determine where traffic can flow, and security groups decide which traffic is allowed between tiers. Network Access Control Lists (NACLs) add a stateless, subnet-level layer that complements the stateful security group rules.
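The networking foundation described above might look like the following sketch. The CIDR ranges, the two-zone choice, and all resource names are assumptions for illustration. NAT gateways and private route tables are omitted to keep it short.

```hcl
data "aws_availability_zones" "available" {
  state = "available"
}

locals {
  # Use the first two zones in the region; adjust for your own needs.
  azs = slice(data.aws_availability_zones.available.names, 0, 2)
}

resource "aws_vpc" "main" {
  cidr_block           = "10.0.0.0/16"
  enable_dns_support   = true
  enable_dns_hostnames = true

  tags = { Name = "three-tier" }
}

# Public subnets for load balancers: 10.0.0.0/24, 10.0.1.0/24
resource "aws_subnet" "public" {
  count                   = length(local.azs)
  vpc_id                  = aws_vpc.main.id
  availability_zone       = local.azs[count.index]
  cidr_block              = cidrsubnet(aws_vpc.main.cidr_block, 8, count.index)
  map_public_ip_on_launch = true

  tags = { Name = "public-${local.azs[count.index]}", Tier = "public" }
}

# Private subnets for application servers: 10.0.10.0/24, 10.0.11.0/24
resource "aws_subnet" "app" {
  count             = length(local.azs)
  vpc_id            = aws_vpc.main.id
  availability_zone = local.azs[count.index]
  cidr_block        = cidrsubnet(aws_vpc.main.cidr_block, 8, 10 + count.index)

  tags = { Name = "app-${local.azs[count.index]}", Tier = "app" }
}

# Isolated subnets for databases: 10.0.20.0/24, 10.0.21.0/24
resource "aws_subnet" "db" {
  count             = length(local.azs)
  vpc_id            = aws_vpc.main.id
  availability_zone = local.azs[count.index]
  cidr_block        = cidrsubnet(aws_vpc.main.cidr_block, 8, 20 + count.index)

  tags = { Name = "db-${local.azs[count.index]}", Tier = "db" }
}

resource "aws_internet_gateway" "main" {
  vpc_id = aws_vpc.main.id
}

# Only the public subnets get a route to the internet gateway.
resource "aws_route_table" "public" {
  vpc_id = aws_vpc.main.id

  route {
    cidr_block = "0.0.0.0/0"
    gateway_id = aws_internet_gateway.main.id
  }
}

resource "aws_route_table_association" "public" {
  count          = length(local.azs)
  subnet_id      = aws_subnet.public[count.index].id
  route_table_id = aws_route_table.public.id
}
```

Spacing the tiers ten network numbers apart leaves room to add zones later without renumbering existing subnets.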

Building auto-scaling groups for application tier flexibility

Auto-scaling groups turn static infrastructure into capacity that follows workload demand. Configure launch templates with your application server specifications, including instance types, security groups, and user data scripts for automated configuration. Set scaling policies based on CPU utilization or on custom CloudWatch metrics that reflect your application’s performance characteristics. Memory consumption is not published by EC2 by default, so scaling on it requires the CloudWatch agent or another custom metric source.

Target tracking policies automatically adjust capacity to maintain optimal performance levels, while step scaling provides granular control during traffic spikes. Health check configurations ensure failed instances are quickly replaced, maintaining service availability even during unexpected failures.
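A minimal sketch of an application tier with a target tracking policy follows. The AMI, instance type, group sizes, and the 60 percent CPU target are assumptions to replace with your own values.

```hcl
# Hypothetical inputs from the networking and load balancer configuration.
variable "ami_id" {
  type = string
}

variable "app_subnet_ids" {
  type = list(string)
}

variable "app_security_group_id" {
  type = string
}

variable "target_group_arns" {
  type = list(string)
}

resource "aws_launch_template" "app" {
  name_prefix            = "app-tier-"
  image_id               = var.ami_id
  instance_type          = "t3.small"
  vpc_security_group_ids = [var.app_security_group_id]

  # Placeholder bootstrap script; replace with your own configuration steps.
  user_data = base64encode(<<-EOT
    #!/bin/bash
    echo "bootstrapping app tier" > /var/log/bootstrap.log
  EOT
  )
}

resource "aws_autoscaling_group" "app" {
  name_prefix         = "app-tier-"
  min_size            = 2
  max_size            = 6
  vpc_zone_identifier = var.app_subnet_ids
  target_group_arns   = var.target_group_arns

  # desired_capacity is left unset so the scaling policy owns it.

  # Replace instances that fail the load balancer health check.
  health_check_type         = "ELB"
  health_check_grace_period = 120

  launch_template {
    id      = aws_launch_template.app.id
    version = "$Latest"
  }
}

# Keep average CPU across the group near 60 percent.
resource "aws_autoscaling_policy" "cpu_target" {
  name                   = "app-cpu-target-tracking"
  autoscaling_group_name = aws_autoscaling_group.app.name
  policy_type            = "TargetTrackingScaling"

  target_tracking_configuration {
    predefined_metric_specification {
      predefined_metric_type = "ASGAverageCPUUtilization"
    }
    target_value = 60
  }
}
```

Target tracking is usually the simplest starting point. Step scaling is worth adding only when you need different reactions at different load levels.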

Configuring load balancers for traffic distribution efficiency

Application Load Balancers serve as intelligent traffic distributors, routing requests to healthy application instances based on path patterns, host headers, or request attributes. Configure target groups that define health check endpoints and protocols, ensuring traffic only reaches responsive servers. Sticky sessions can maintain user state when needed, while cross-zone load balancing distributes traffic evenly across availability zones.

Terminating TLS (still often called SSL) at the load balancer offloads encryption processing from application servers. Integration with AWS Certificate Manager automates certificate provisioning and renewal, reducing operational overhead while maintaining security standards for your AWS three-tier architecture.
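The load balancer pieces above can be sketched as follows. The health check path, ports, names, and the `/api/*` pattern are assumptions, and the certificate is expected to exist already in AWS Certificate Manager.

```hcl
# Hypothetical inputs from the networking configuration and ACM.
variable "vpc_id" {
  type = string
}

variable "public_subnet_ids" {
  type = list(string)
}

variable "alb_security_group_id" {
  type = string
}

variable "certificate_arn" {
  type = string
}

resource "aws_lb" "web" {
  name               = "three-tier-alb"
  load_balancer_type = "application"
  internal           = false
  subnets            = var.public_subnet_ids
  security_groups    = [var.alb_security_group_id]
}

resource "aws_lb_target_group" "app" {
  name     = "app-tier"
  port     = 8080
  protocol = "HTTP"
  vpc_id   = var.vpc_id

  # Traffic is sent only to instances that answer this check.
  health_check {
    path                = "/health"
    matcher             = "200"
    interval            = 30
    healthy_threshold   = 3
    unhealthy_threshold = 3
  }

  # Sticky sessions are declared but off; enable only if the app keeps local state.
  stickiness {
    type            = "lb_cookie"
    cookie_duration = 3600
    enabled         = false
  }
}

# TLS terminates here; instances receive plain HTTP on port 8080.
resource "aws_lb_listener" "https" {
  load_balancer_arn = aws_lb.web.arn
  port              = 443
  protocol          = "HTTPS"
  ssl_policy        = "ELBSecurityPolicy-TLS13-1-2-2021-06"
  certificate_arn   = var.certificate_arn

  default_action {
    type             = "forward"
    target_group_arn = aws_lb_target_group.app.arn
  }
}

# Example of path-based routing for API requests.
resource "aws_lb_listener_rule" "api" {
  listener_arn = aws_lb_listener.https.arn
  priority     = 10

  action {
    type             = "forward"
    target_group_arn = aws_lb_target_group.app.arn
  }

  condition {
    path_pattern {
      values = ["/api/*"]
    }
  }
}
```

In a real deployment the path rule would usually forward to a separate target group. It points at the same one here only to keep the sketch self-contained.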

AI-Powered Infrastructure Optimization Strategies

Implementing machine learning for resource prediction and scaling

Machine learning models analyze historical usage patterns and traffic spikes to forecast resource demand across your AWS three-tier architecture. On AWS, the most direct example is predictive scaling for EC2 Auto Scaling, which forecasts load from past CloudWatch data and adds instances ahead of expected peaks. These forecasts apply to the EC2 fleet; database capacity and connection limits typically still need to be sized separately.
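As one concrete instance of forecast-driven scaling, the sketch below attaches a predictive scaling policy to an existing Auto Scaling group. It assumes the group sits behind an Application Load Balancer; the variable names and the target of 1000 requests per instance are illustrative.

```hcl
# Hypothetical inputs: the group to scale and the ARN suffixes of the
# load balancer and target group in front of it.
variable "asg_name" {
  type = string
}

variable "alb_arn_suffix" {
  description = "For example app/my-alb/0123456789abcdef"
  type        = string
}

variable "target_group_arn_suffix" {
  description = "For example targetgroup/my-tg/0123456789abcdef"
  type        = string
}

resource "aws_autoscaling_policy" "predictive" {
  name                   = "app-predictive-requests"
  autoscaling_group_name = var.asg_name
  policy_type            = "PredictiveScaling"

  predictive_scaling_configuration {
    # Start in forecast-only mode to review predictions before they
    # change capacity; switch to "ForecastAndScale" once they look sane.
    mode = "ForecastOnly"

    metric_specification {
      # Assumed target: requests per instance that one server handles well.
      target_value = 1000

      predefined_metric_pair_specification {
        predefined_metric_type = "ALBRequestCount"
        resource_label         = "${var.alb_arn_suffix}/${var.target_group_arn_suffix}"
      }
    }
  }
}
```

Predictive scaling needs some history before it produces forecasts, so it complements a target tracking policy rather than replacing it.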

Automating security configurations with intelligent policy generation

AI-driven security automation can propose IAM policies and security group rules based on observed application behavior and threat intelligence. Policy generators analyze network traffic patterns and access requirements to draft least-privilege configurations that follow changing deployment needs. Treat generated policies as drafts and review them before applying them.

Optimizing costs through AI-driven resource recommendations

Cost optimization engines continuously monitor resource utilization and recommend right-sizing opportunities for your multi-tier AWS deployment; AWS Compute Optimizer is one such service. These systems identify underutilized instances and suggest Reserved Instance purchases. Scheduling non-production environments to shut down outside working hours reduces expenses further without affecting production availability.
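Scheduling non-production capacity does not need machine learning at all. The sketch below uses plain scheduled actions on an Auto Scaling group rather than an AI recommendation engine. The group name, times, and sizes are assumptions.

```hcl
variable "nonprod_asg_name" {
  description = "Auto Scaling group of a dev or staging environment"
  type        = string
}

# Scale to zero at 19:00 UTC on weekdays.
resource "aws_autoscaling_schedule" "nonprod_stop" {
  scheduled_action_name  = "nonprod-evening-stop"
  autoscaling_group_name = var.nonprod_asg_name
  recurrence             = "0 19 * * 1-5"
  time_zone              = "Etc/UTC"
  min_size               = 0
  max_size               = 0
  desired_capacity       = 0
}

# Bring the environment back at 07:00 UTC on weekdays.
resource "aws_autoscaling_schedule" "nonprod_start" {
  scheduled_action_name  = "nonprod-morning-start"
  autoscaling_group_name = var.nonprod_asg_name
  recurrence             = "0 7 * * 1-5"
  time_zone              = "Etc/UTC"
  min_size               = 1
  max_size               = 2
  desired_capacity       = 1
}
```

Because nothing starts the group on Saturday or Sunday, the environment stays off from Friday evening until Monday morning.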

Enhancing monitoring with predictive analytics capabilities

Predictive analytics transform traditional monitoring by forecasting potential failures and performance degradation before they impact users. Machine learning models process metrics from application tiers, database performance, and network latency to trigger proactive remediation actions through Terraform automation workflows.

Step-by-Step Deployment Automation Process

Setting up Terraform state management for team collaboration

Remote state management becomes critical when multiple developers work on the same AWS three-tier architecture deployment. Store your Terraform state in an S3 bucket and enable state locking, traditionally through a DynamoDB table, to prevent concurrent modifications. (Recent Terraform releases can also lock natively in S3, so check which option your version supports.) This setup lets team members collaborate on infrastructure changes without conflicts or state corruption.

Enable versioning on the state bucket and restrict access to it with IAM permissions. Use separate state files for different environments (dev, staging, production) to isolate changes and reduce risk during deployments.
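A backend block for this setup might look like the sketch below. The bucket, key, region, and table names are placeholders. The bucket (with versioning enabled) and the lock table must already exist, usually created by a separate bootstrap configuration.

```hcl
terraform {
  backend "s3" {
    bucket  = "example-terraform-state"           # hypothetical bucket name
    key     = "three-tier/prod/terraform.tfstate" # one key per environment
    region  = "us-east-1"
    encrypt = true

    # DynamoDB table with a string hash key named "LockID".
    dynamodb_table = "example-terraform-locks"

    # Assumption to verify against your Terraform version: newer releases
    # offer S3-native locking through `use_lockfile = true` instead.
  }
}
```

Backend blocks cannot use variables, so teams often keep one small backend file per environment or pass the values with `terraform init -backend-config=...`.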

Creating reusable modules for consistent deployments

Terraform modules streamline multi-tier AWS deployment by packaging common infrastructure patterns into reusable components. Create modules for each tier – presentation, application, and data layers – with configurable variables for different environments. This approach ensures consistency across deployments while reducing code duplication.

Structure your modules with clear input variables, outputs, and documentation. Include validation rules to catch configuration errors early and maintain standardized naming conventions across your infrastructure as code implementations.
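The sketch below shows input validation inside a module and a call from the root configuration. It spans two files, marked in the comments, and the module path, variable names, and limits are hypothetical.

```hcl
# --- modules/app_tier/variables.tf (hypothetical module layout) ---

variable "environment" {
  description = "Deployment environment; also used in resource names"
  type        = string

  validation {
    condition     = contains(["dev", "staging", "production"], var.environment)
    error_message = "The environment must be one of: dev, staging, production."
  }
}

variable "max_instances" {
  description = "Upper bound for the application tier Auto Scaling group"
  type        = number
  default     = 4

  validation {
    condition     = var.max_instances >= 1 && var.max_instances <= 20
    error_message = "The max_instances value must be between 1 and 20."
  }
}

# --- modules/app_tier/outputs.tf ---

output "name_prefix" {
  description = "Standardized prefix for resources created by this module"
  value       = "three-tier-${var.environment}-app"
}

# --- main.tf in the root configuration ---

module "app_tier" {
  source = "./modules/app_tier"

  environment   = "staging"
  max_instances = 6
}
```

With the validation in place, a typo such as `environment = "prod"` fails at plan time with the error message above instead of creating misnamed resources.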

Implementing CI/CD pipelines with infrastructure validation

Automated pipelines validate Terraform configurations before applying changes to your AWS infrastructure. Run terraform fmt -check, terraform validate, and terraform plan in your CI/CD workflow, followed by security scanning, to catch issues early. Tools like Checkov or tfsec identify security misconfigurations and compliance violations.

Set up approval gates for production deployments and implement automated testing of your infrastructure code. This validation process prevents broken configurations from reaching live environments and maintains deployment quality standards.

Configuring automated rollback mechanisms for deployment safety

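Plan rollback strategies so you can recover quickly from failed deployments in your AWS three-tier architecture. Terraform has no built-in rollback command: the usual approach is to keep every configuration change in version control and re-apply the last known-good revision. S3 versioning on the state bucket keeps earlier state snapshots for recovering from state corruption, but restoring an old state file does not revert the resources themselves, and workspaces separate environments rather than versions. Create automated monitoring that triggers a rollback when health checks fail or performance metrics drop below thresholds.

Design your deployment process with blue-green or canary strategies to minimize downtime. Configure CloudWatch alarms and Lambda functions to initiate rollback procedures automatically when deployment issues are detected, so a bad release is reversed before most users notice it.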
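A canary rollout can be expressed in Terraform as a weighted forward action on the load balancer listener. In this sketch the variable names and the 10 percent default are assumptions, and the two target groups stand for the current (blue) and new (green) application versions.

```hcl
# Hypothetical inputs: an existing load balancer, certificate, and two
# target groups running the old and new application versions.
variable "load_balancer_arn" {
  type = string
}

variable "certificate_arn" {
  type = string
}

variable "blue_target_group_arn" {
  type = string
}

variable "green_target_group_arn" {
  type = string
}

variable "green_weight" {
  description = "Percentage of traffic sent to the new version"
  type        = number
  default     = 10

  validation {
    condition     = var.green_weight >= 0 && var.green_weight <= 100
    error_message = "The green_weight value must be between 0 and 100."
  }
}

resource "aws_lb_listener" "https" {
  load_balancer_arn = var.load_balancer_arn
  port              = 443
  protocol          = "HTTPS"
  certificate_arn   = var.certificate_arn

  default_action {
    type = "forward"

    forward {
      target_group {
        arn    = var.blue_target_group_arn
        weight = 100 - var.green_weight
      }

      target_group {
        arn    = var.green_target_group_arn
        weight = var.green_weight
      }
    }
  }
}
```

Rolling back is then a one-variable change: apply with `green_weight = 0` and all traffic returns to the previous version while the new instances stay available for debugging.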

Monitoring and Maintenance Best Practices

Establishing comprehensive logging across all infrastructure layers

Effective monitoring starts with logging that captures activity across your presentation, application, and data tiers. Amazon CloudWatch serves as the central hub for logs from EC2 instances and RDS databases, Application Load Balancer access logs are delivered to an S3 bucket, and VPC Flow Logs provide network-level visibility. Configure structured logging with consistent formats across all components so logs can be searched and correlated efficiently during troubleshooting.

Application-level logs should include user interactions, API responses, and database query performance metrics. Terraform automation makes log configuration repeatable by defining CloudWatch log groups, retention policies, and shipping rules as code. This approach ensures your three-tier architecture maintains consistent logging standards across development, staging, and production environments without manual intervention.
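Defining log groups as code could look like the sketch below. The naming scheme and retention periods are assumptions. The point is that every environment gets the same structure from one definition.

```hcl
variable "environment" {
  description = "dev, staging, or production"
  type        = string
}

# One log group per tier, named consistently across environments.
resource "aws_cloudwatch_log_group" "tier" {
  for_each = toset(["presentation", "application", "data"])

  name = "/three-tier/${var.environment}/${each.key}"

  # Keep production logs longer than non-production logs.
  retention_in_days = var.environment == "production" ? 90 : 14

  tags = {
    Environment = var.environment
    Tier        = each.key
  }
}
```

Setting retention explicitly matters because log groups created without it keep data indefinitely, which quietly adds to the bill.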

Creating automated alerts for proactive issue resolution

Good alerting prevents minor issues from becoming major outages by triggering notifications based on predefined thresholds and patterns. Set up CloudWatch alarms for CPU utilization, memory consumption (published through the CloudWatch agent), database connection counts, and application response times across all tiers. Composite alarms that combine the state of several related alarms provide better context than isolated metrics.
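The alarms described above can be sketched as two threshold alarms and a composite alarm that fires only when both are in alarm. The thresholds, names, and the SNS topic are assumptions for illustration.

```hcl
# Hypothetical inputs: the resources to watch and where to send alerts.
variable "asg_name" {
  type = string
}

variable "db_instance_id" {
  type = string
}

variable "alert_topic_arn" {
  description = "SNS topic that delivers notifications"
  type        = string
}

resource "aws_cloudwatch_metric_alarm" "app_cpu_high" {
  alarm_name          = "app-tier-cpu-high"
  namespace           = "AWS/EC2"
  metric_name         = "CPUUtilization"
  statistic           = "Average"
  period              = 300
  evaluation_periods  = 2
  comparison_operator = "GreaterThanThreshold"
  threshold           = 80

  dimensions = {
    AutoScalingGroupName = var.asg_name
  }
}

resource "aws_cloudwatch_metric_alarm" "db_connections_high" {
  alarm_name          = "db-tier-connections-high"
  namespace           = "AWS/RDS"
  metric_name         = "DatabaseConnections"
  statistic           = "Average"
  period              = 300
  evaluation_periods  = 2
  comparison_operator = "GreaterThanThreshold"
  threshold           = 150 # assumed limit; depends on instance class

  dimensions = {
    DBInstanceIdentifier = var.db_instance_id
  }
}

# Notify only when both tiers are under pressure at the same time.
resource "aws_cloudwatch_composite_alarm" "app_and_db_pressure" {
  alarm_name = "app-and-db-under-pressure"
  alarm_rule = "ALARM(${aws_cloudwatch_metric_alarm.app_cpu_high.alarm_name}) AND ALARM(${aws_cloudwatch_metric_alarm.db_connections_high.alarm_name})"

  alarm_actions = [var.alert_topic_arn]
  ok_actions    = [var.alert_topic_arn]
}
```

The individual alarms carry no actions here, so only the combined condition pages anyone. Add actions to them if each signal should also alert on its own.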

AI infrastructure optimization enhances traditional alerting by identifying unusual patterns and predicting potential failures before they occur. Machine learning algorithms can analyze historical performance data to establish dynamic baselines, reducing false positives while catching anomalies that static thresholds might miss.
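CloudWatch anomaly detection is one readily available form of the dynamic baselines mentioned above. The sketch below alarms when average CPU rises above a learned band instead of a fixed threshold. The band width of 2 and the other values are assumptions.

```hcl
variable "asg_name" {
  type = string
}

variable "alert_topic_arn" {
  type = string
}

resource "aws_cloudwatch_metric_alarm" "app_cpu_anomaly" {
  alarm_name          = "app-tier-cpu-anomaly"
  comparison_operator = "GreaterThanUpperThreshold"
  evaluation_periods  = 3
  threshold_metric_id = "band"
  alarm_actions       = [var.alert_topic_arn]

  # Expected range learned from the metric's history; the second argument
  # sets the band width in standard deviations.
  metric_query {
    id          = "band"
    expression  = "ANOMALY_DETECTION_BAND(cpu, 2)"
    label       = "CPUUtilization (expected)"
    return_data = true
  }

  metric_query {
    id          = "cpu"
    return_data = true

    metric {
      namespace   = "AWS/EC2"
      metric_name = "CPUUtilization"
      period      = 300
      stat        = "Average"

      dimensions = {
        AutoScalingGroupName = var.asg_name
      }
    }
  }
}
```

A wider band means fewer false positives but slower detection, so it is worth tuning against a few weeks of real traffic.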

Implementing infrastructure drift detection and correction

Infrastructure drift occurs when live environments deviate from their defined Terraform configurations, creating security risks and operational inconsistencies. Running terraform plan on a schedule detects these differences; with the -detailed-exitcode flag, the command exits with code 2 when changes are pending, which makes drift easy to flag in a pipeline. Wrappers such as Terragrunt can run the same plan across the many modules of a complex multi-tier AWS deployment.

Automated correction workflows can remediate detected drift by re-applying the approved Terraform configuration, ideally after a review step, because an unattended apply will also undo legitimate emergency changes. This approach maintains infrastructure as code principles while preventing the configuration creep that commonly affects manually managed resources. Regular drift detection is especially important for three-tier architectures, where changes in one layer can affect dependent components.

Terraform turns AWS three-tier deployments from hours of manual work into automated, repeatable processes. Combining Infrastructure as Code with AI-assisted optimization helps you build reliable, scalable applications that adapt to changing demand while keeping costs under control.

The biggest gain comes from treating your infrastructure like software: version controlled, tested, and continuously improved. Start small with a basic three-tier setup, then gradually add AI optimization and advanced monitoring as your team gets comfortable with the workflow. Your future self will thank you for building systems that need little manual attention while delivering the performance your users expect.